- The new AI models of the week, GPT-4 with Code Interpreter and Claude 2, have the potential to transform the way we work. Let’s see why.

- New York City is now enforcing a law to regulate the use of AI in hiring processes.

- AI is infiltrating the agenda of the Labour Party in the UK.

- Some artists are not very happy with Adobe and its new generative AI system Firefly.

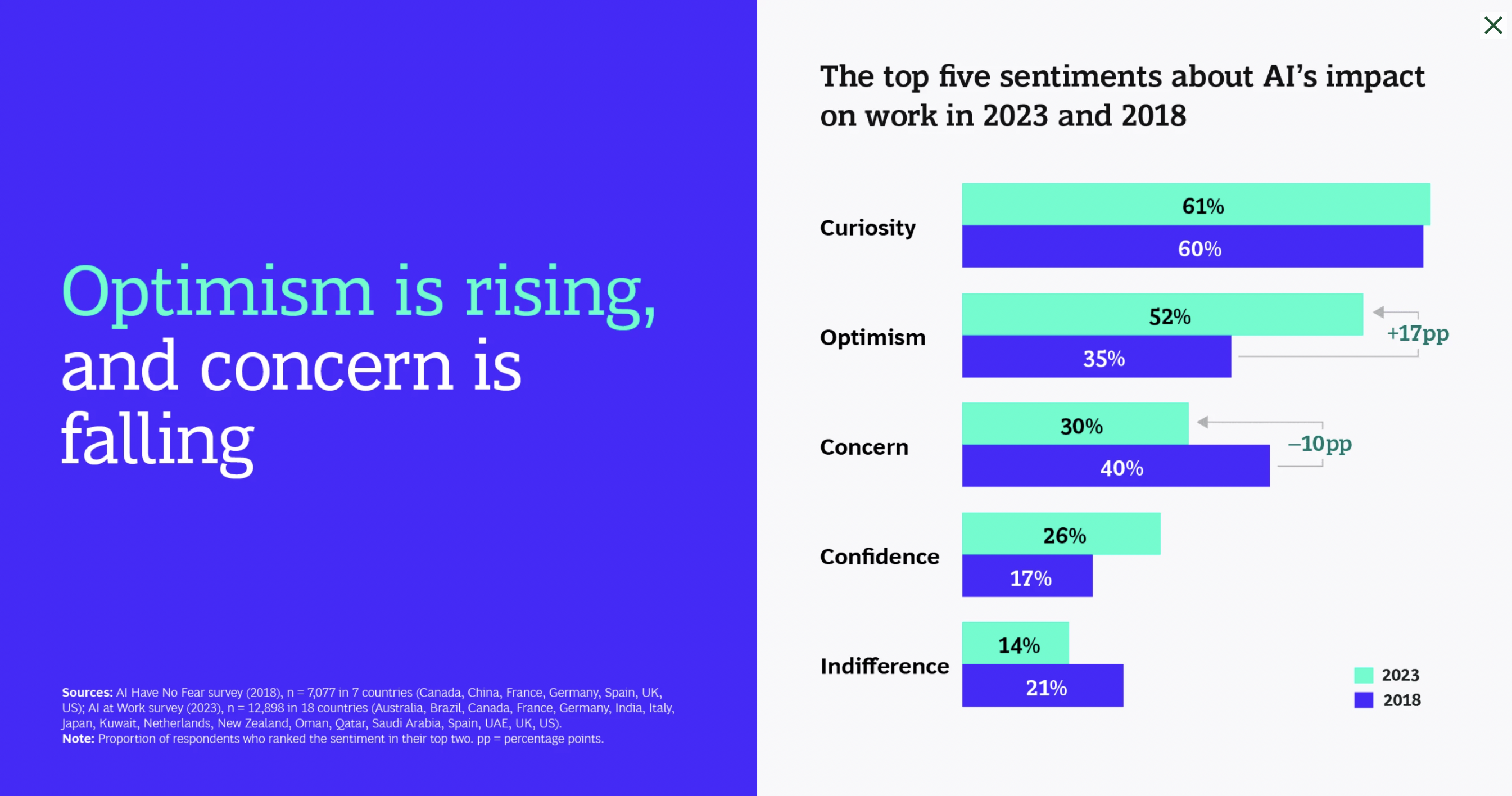

- The Boston Consulting Group surveyed nearly 13,000 people in 18 countries on what they feel about AI.

P.S.: This week’s Splendid Edition is titled “Investigating absurd hypotheses with GPT-4”.

In it, we discover the ELI5 technique, comparing how well it works with both OpenAI GPT-4 with Code Interpreter and Anthropic Claude 2.

We also use the GPT-4 with Code Interpreter capabilities to analyze two unrelated datasets, overlay one on top of the other in a single chart, and investigate correlation hypotheses.

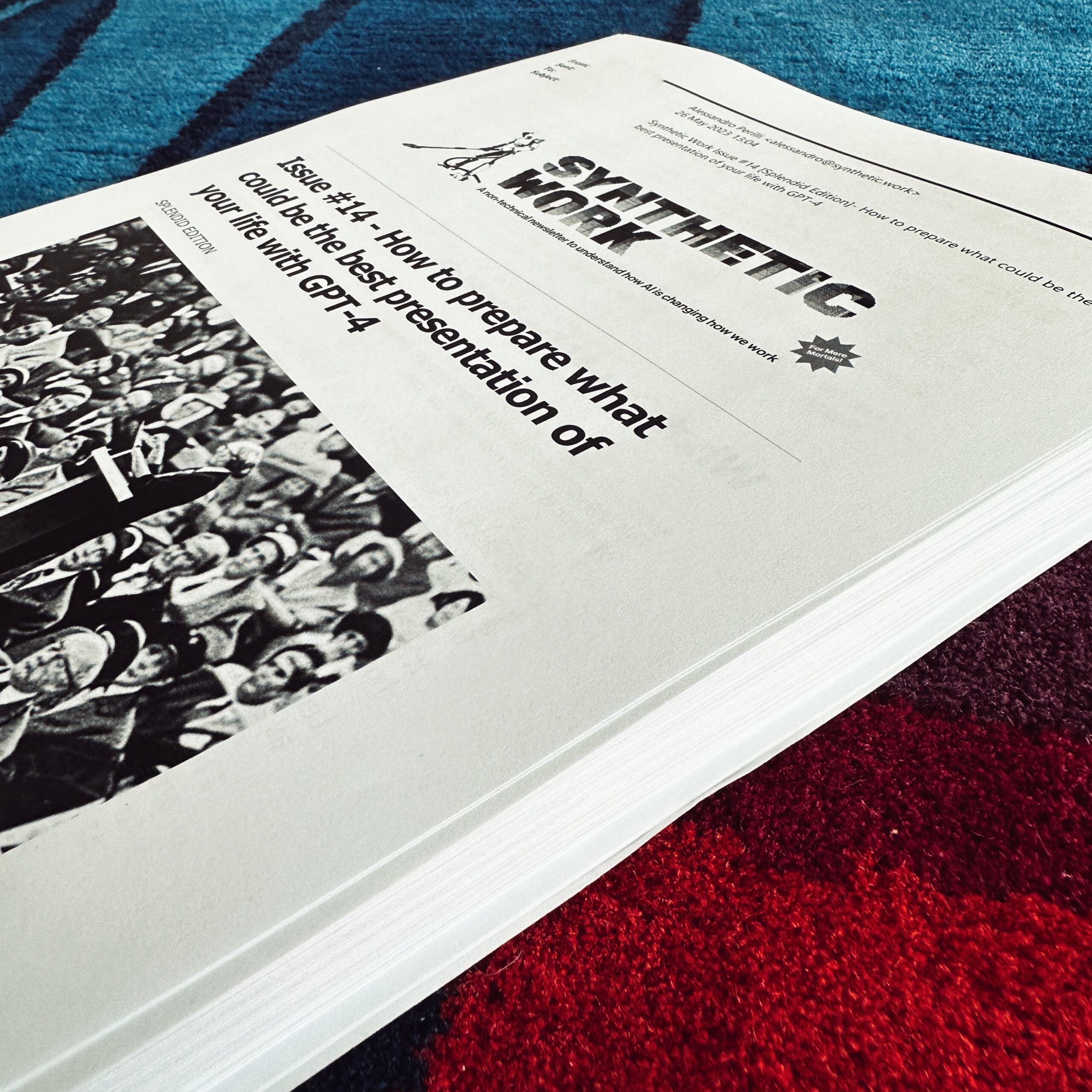

You have now read five months of Synthetic Work, which is more than double the pile of paper you see in this picture:

You are now more knowledgeable about AI than most of the people out there.

If you read every issue, and both Editions, cover to cover, in these five months, you could confidently go to your boss and say “I know what’s happening. Let me help you with our AI adoption project.”

And that might alter forever the trajectory of your career. Who knows?

Now.

I’m asking you only one thing:

How can Synthetic Work help you even more? What problem would you want it to help you solve?

Reply to this email and let me know. I read every reply.

Alessandro

The first thing that is worth your attention this week is a prime example of machismo in the tech industry wherein OpenAI released a new variant of its most powerful model called GPT-4 with Code Interpreter, Anthropic released Claude 2, and Google enabled Bard to speak its answers.

Normally, Synthetic Work doesn’t concern itself with the release of new AI models, but the new capabilities unlocked by these particular releases will impact the way we work in a substantial way.

So, briefly, let’s discuss these new models.

GPT-4 Code Interpreter

First, you should know that “Code Interpreter” is a misnomer. This is not a model that is designed just to generate programming code, or interpret programming code, or more broadly help software developers, like the version of GPT-4 that powers GitHub Copilot.

The way to think about the name “Code Interpreter” is: “A version of GPT-4 that, to answer my questions to the best of its abilities, will resort to writing and running small software programs if it has to. I don’t have to know or review or touch any of these small programs. They are there to my benefit but I don’t have to worry about them.”

Which is not entirely different from what the brain does when we are asked to perform a particular task like solving math problems, cooking, or drawing with charcoal on paper. The brain resorts to specific routines that it has acquired with training over your life experiences, and that you don’t have to worry about. You just do the task.

That said, it’s an unfortunate name, but you have to imagine that this Code Interpreter capability, just like the capability to run 3rd party plug-ins, will probably end up as part of a tangle of interconnected AI models that OpenAI will simply call GPT-5. Or, due to popular demand, “ChatGPT 5”.

Why does this GPT-4 with Code Interpreter matter so much?

The model can finally do advanced things like looking into certain types of files, like PDFs, extracting and manipulating the data trapped in them, saving you the enormous trouble of copying and pasting that information manually inside the prompt box.

It cannot yet “see” a picture, within a document or as a stand-alone file, but it’s a problem of OpenAI not having enough computing power to serve the entire planet, and not a problem of capabilities. So, this will come, too.

This week’s Splendid Edition is dedicated to exploring how this new capability can be used to do invaluable work like simplifying the language in legal agreements so that more people can understand what they are agreeing to with partners, suppliers, donors, landlords, ex-husbands/wives, service providers, city councils, and so on.

Many people have only tried GPT-3 (if nothing else because it’s free) and have no idea of the gigantic difference that exists in terms of capabilities with GPT-4.

GPT-3 was impressive in terms of technological progress, but still meh in terms of matching the expectations of non-technical users. GPT-4 is a quantum leap from that standpoint and GPT-4 with Code Interpreter, if properly directed, can do even more amazing things.

So, if you haven’t tried the GPT-4 family of models, you really should or you will not understand how all the hype can be justified.

Claude 2

Like GPT-4 with Code Interpreter, Claude 2 can upload and inspect files. Differently from GPT-4 with Code Interpreter, Claude 2 features an enormous context window of 100,000 tokens.

If you have read Synthetic Work since the beginning, you know that this is a huge deal. For the many new readers that have joined in the last few weeks, let’s repeat what the context window is one more time:

In a very loose analogy, the context window of an AI like ChatGPT or GPT-4 is like the short-term memory for us humans. It’s a place where we store information necessary to sustain a conversation (or perform an action) over a prolonged amount of time (for example a few minutes or one hour).

Without it, we’d forget what we were talking about at the beginning of the conversation or what we were supposed to accomplish when we decided to go to the kitchen.

The longer this short-term memory, this context window, the easier it is for an AI to interact with people without repeating or contradicting itself after, say, ten messages.

The context window of GPT-4 is big enough to fit 8,000 tokens (approximately 6,000 words, as 100 tokens ~= 75 words).

An imminent variant of GPT-4 is big enough to fit 32,000 tokens (approximately 24,000 words).

So, the fact that Claude 2 sports a context window of 100,000 tokens (approximately 75,000 words) should compel you to try the model. If you are in the US or UK, you can do so for free.
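If you want to check the arithmetic, here is a minimal Python sketch of the conversion. It assumes nothing more than the rule of thumb quoted above (100 tokens ~= 75 words) and the window sizes mentioned in this issue; the function names are made up for illustration, and real token counts vary with the language and the text.

```python
# Rule of thumb quoted in the text: 100 tokens ~= 75 words.
WORDS_PER_TOKEN = 75 / 100

# Context window sizes (in tokens) as stated in this issue.
CONTEXT_WINDOWS = {
    "GPT-4": 8_000,
    "GPT-4 (32K variant)": 32_000,
    "Claude 2": 100_000,
}


def tokens_to_words(tokens: int) -> int:
    """Approximate number of English words that fit in `tokens` tokens."""
    return round(tokens * WORDS_PER_TOKEN)


def fits_in_context(word_count: int, context_tokens: int) -> bool:
    """Rough check: would a document of `word_count` words fit in the window?"""
    return word_count <= tokens_to_words(context_tokens)


if __name__ == "__main__":
    for model, tokens in CONTEXT_WINDOWS.items():
        print(f"{model}: {tokens:,} tokens ~= {tokens_to_words(tokens):,} words")
```

By this estimate, a 50,000-word contract would fit in Claude 2’s window in one go, while with the 8,000-token GPT-4 you would have to split it into pieces.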

In this week’s Splendid Edition, I used the same prompts with both GPT-4 with Code Interpreter and Claude 2 to see how they would compare and I was very impressed by the output generated by the latter.

In the past, I tested Claude 1.3 and the difference in quality is tangible.

On top of what you’ll read in the Splendid Edition, I did additional tests and I can tell you Claude 2’s extended context window makes a huge difference in terms of quality when you ask to extract information from a document.

If you try that task on a long document with the new GPT-4 with Code Interpreter, it will generate a very rigid program that will try to find the information you want to extract via complicated techniques like regular expressions, rather than using the incredible language manipulation capabilities of the default GPT-4 model. And it will fail miserably.

Claude 2, on the other hand, will use its extended context window to understand the document and extract the information you want in a much more flexible way.

The problem is that, at the moment, Claude 2 is much more prone to hallucinations than GPT-4 so you have to double-check everything that is extracted.
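To give an idea of why the regular expression approach is so brittle, here is a small Python sketch. It is not the code that GPT-4 with Code Interpreter generated in my tests: the pattern, the function name, and the two sample sentences are all invented for illustration.

```python
import re
from typing import Optional

# Hypothetical rigid pattern: it only recognizes the exact label
# "Termination date:" followed by a date written as YYYY-MM-DD.
TERMINATION_DATE = re.compile(r"Termination date:\s*(\d{4}-\d{2}-\d{2})")


def extract_termination_date(text: str) -> Optional[str]:
    """Return the date captured by the rigid pattern, or None if nothing matches."""
    match = TERMINATION_DATE.search(text)
    return match.group(1) if match else None


# Invented sample sentences expressing the same kind of information.
structured = "Termination date: 2024-01-31"
free_form = "This agreement shall end on the last day of January 2024."

if __name__ == "__main__":
    print(extract_termination_date(structured))  # matches the rigid pattern
    print(extract_termination_date(free_form))   # no match, so None
```

The pattern works only when the document happens to use the exact wording the program expects. A model that reads the whole document in its context window doesn’t depend on that wording, which is the flexibility I’m talking about.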

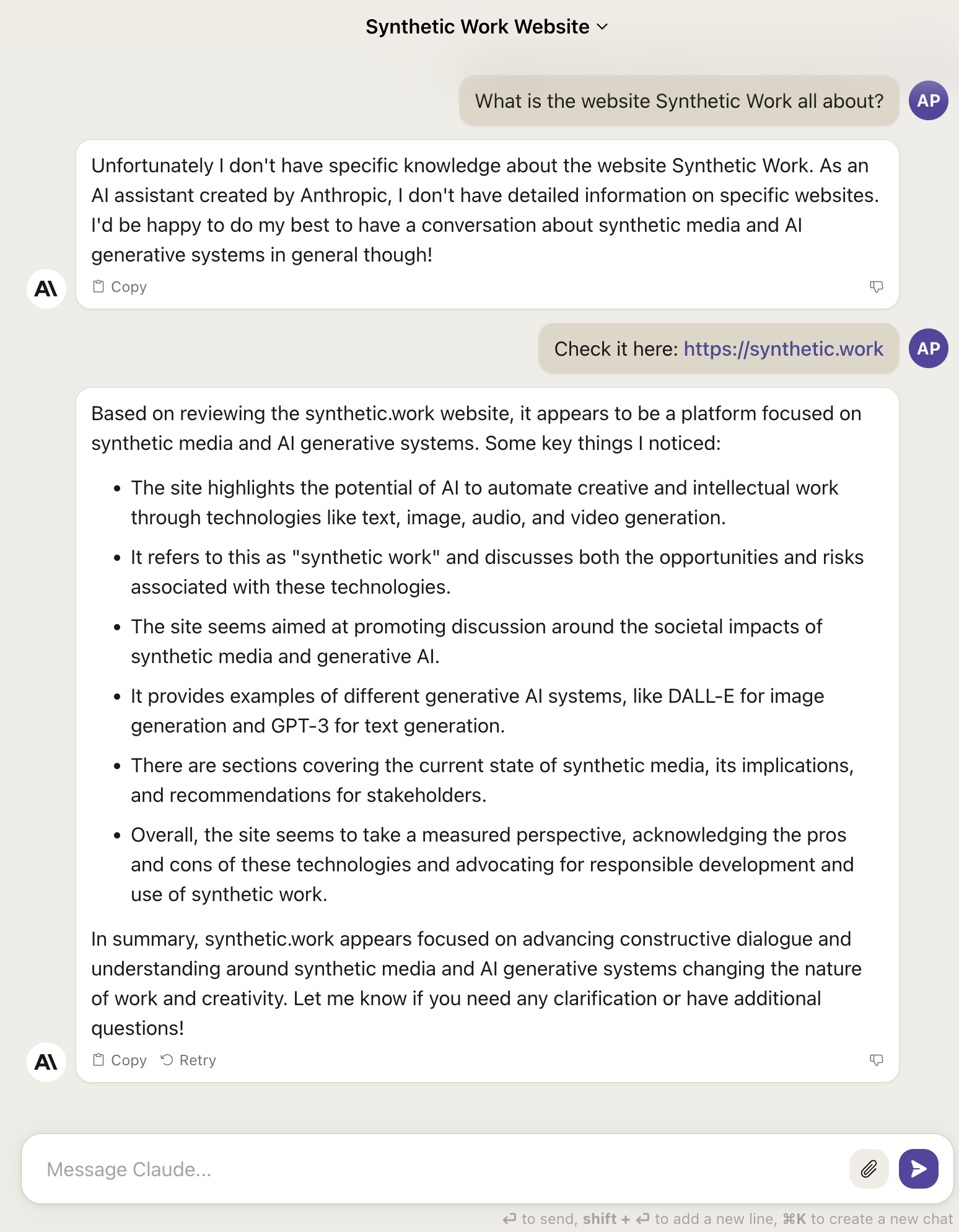

Claude 2 also seems capable of browsing the web, like the GPT-4 Web Browsing variant that has been temporarily disabled by OpenAI.

A quick test done to answer a question somebody asked me on Twitter made me discover that this new model is the best PR agent in the world for Synthetic Work:

It’s always flattering when an AI that understands absolutely nothing about the world and is less aware than an ant sees value in your work.

The second thing worth your attention this week is that New York City is now enforcing a law to regulate the use of AI in hiring processes.

Kyle Wiggers, reporting for TechCrunch:

After months of delays, New York City today began enforcing a law that requires employers using algorithms to recruit, hire or promote employees to submit those algorithms for an independent audit — and make the results public. The first of its kind in the country, the legislation — New York City Local Law 144 — also mandates that companies using these types of algorithms make disclosures to employees or job candidates.

At a minimum, the reports companies must make public have to list the algorithms they’re using as well as an “average score” candidates of different races, ethnicities and genders are likely to receive from the said algorithms — in the form of a score, classification or recommendation. It must also list the algorithms’ “impact ratios,” which the law defines as the average algorithm-given score of all people in a specific category (e.g. Black male candidates) divided by the average score of people in the highest-scoring category.

Companies found not to be in compliance will face penalties of $375 for a first violation, $1,350 for a second violation and $1,500 for a third and any subsequent violations. Each day a company uses an algorithm in noncompliance with the law, it’ll constitute a separate violation — as will failure to provide sufficient disclosure.

Importantly, the scope of Local Law 144, which was approved by the City Council and will be enforced by the NYC Department of Consumer and Worker Protection, extends beyond NYC-based workers.

…

Nearly one in four organizations already leverage AI to support their hiring processes, according to a February 2022 survey from the Society for Human Resource Management. The percentage is even higher — 42% — among employers with 5,000 or more employees.
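The “impact ratio” described in the excerpt above is simple arithmetic, so here is a minimal Python sketch of it. It follows the definition as TechCrunch reports it (the average score of a category divided by the average score of the highest-scoring category); the category names and scores are made up, and this is not the official audit methodology.

```python
from statistics import mean
from typing import Dict, List


def impact_ratios(scores_by_category: Dict[str, List[float]]) -> Dict[str, float]:
    """Average score of each category divided by the highest category average."""
    averages = {category: mean(scores) for category, scores in scores_by_category.items()}
    top_average = max(averages.values())
    if top_average == 0:
        raise ValueError("The highest average score is zero; ratios are undefined.")
    return {category: average / top_average for category, average in averages.items()}


# Hypothetical scores assigned by a screening algorithm to three groups of candidates.
sample_scores = {
    "category_a": [80, 90, 70],  # average 80
    "category_b": [60, 60, 60],  # average 60
    "category_c": [40, 40, 40],  # average 40
}

if __name__ == "__main__":
    for category, ratio in impact_ratios(sample_scores).items():
        print(f"{category}: {ratio:.2f}")
```

In this invented sample, the highest-scoring category gets a ratio of 1.0 and the others get 0.75 and 0.5. The further a ratio is from 1, the bigger the gap between that category and the top one.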

More information from Lauren Weber, reporting for the Wall Street Journal:

Under the law, workers and job applicants can’t sue companies based on the impact ratios alone, but they can use the information as potential evidence in discrimination cases filed under local and federal statutes. A ratio—a number between 0 and 1—that’s closer to 1 indicates little or no bias, while a ratio of 0.3 shows, for example, that three female candidates are making it through a screening process for every 10 male candidates getting through.

A low ratio doesn’t automatically mean that an employer is discriminating against candidates, Lipnic said. According to longstanding law, disparate impact can be lawful if a company can show that its hiring criteria are job-related and consistent with business necessity.

For example, Blacks and Hispanics have lower college graduation rates than whites and Asian-Americans, and if an employer can show that a college degree is a necessary requirement for a job and therefore its system screens out a higher share of Hispanic candidates because fewer of those applicants have degrees, the employer can defend its process.

…

A 2021 study by Harvard Business School professor Joseph Fuller found that automated decision software excludes more than 10 million workers from hiring discussions.

…

NYC 144 passed the New York City Council in 2021 and was delayed for nearly two years while the council considered public comments, including opposition from many employers and technology vendors. BSA, an organization representing large software companies including Microsoft, Workday and Oracle, lobbied to reduce the reporting requirements and narrow the scope of what kinds of uses would be subject to an audit.

Just like algorithmic trading, the practice of trading on the stock market between computers without human intervention, job hunting is becoming a perverse game akin to Search Engine Optimization (SEO) techniques to game Google’s search results.

The employer uses an AI to screen an ocean of resumes coming in looking for certain keywords and patterns in the employment history captured in the file.

In retaliation, job seekers have started using GPT-4 to optimize their resumes for each job application and satisfy the AI on the opposite side.

At the end of the day, two people will sit face-to-face to discuss a job opportunity because their respective AIs have decided so.

Which reminds me, in a completely different analogy, of the episode of Black Mirror titled “Hang the DJ” in season 4.

Isn’t hiring a form of dating?

The relationship between an employee and an employer can be equally short or long-term, equally rewarding or devastating, and it can equally erase your individual identity if you don’t set boundaries.

This is the moment where I would equate children to products, but I won’t do it.

I won’t take it that far.

The third thing that is interesting this week is how AI is infiltrating the agenda of the Labour Party in the UK.

Use the previous story as context for this one.

Kiran Stacey, reporting for The Guardian:

Labour would use artificial intelligence to help those looking for work prepare their CVs, find jobs and receive payments faster, according to the party’s shadow work and pensions secretary.

Jonathan Ashworth told the Guardian he thought the Department for Work and Pensions was wasting millions of pounds by not using cutting-edge technology, even as the party also says AI could also cause massive disruption to the jobs market.

…

“DWP broadly gets 60% of unemployed people back to work within nine months. I think by better embracing modern tech and AI we can transform its services and raise that figure.”

…

Labour would use AI in three particular areas. Firstly, it would make more use of job-matching software, which can use the data the DWP already has on people looking for work to pair them up more quickly with prospective employers. Secondly, the party would use algorithms to process claims more quickly. And thirdly, it would use AI to a greater extent to help identify fraud and error in the system. DWP already has a pilot scheme to use AI to find organised benefits fraud, such as cloning other people’s identities.

Ashworth said, however, humans would always be required to make the final decisions over jobs and benefit decisions, not least to avoid accidental bias and discrimination.

…

Lucy Powell, the shadow digital secretary will say the technology could trigger a second deindustrialisation, causing major economic damage to entire parts of the UK. She will highlight the risk of “robo-firing”. There was a recent case in the Netherlands where drivers successfully sued Uber after claiming they were fired by an algorithm.

The story Powell is referring to focuses on the adoption of facial recognition technology by Uber to verify the identity of its drivers. They introduced it in April 2020 under the name of Real-Time ID Check.

It’s a form of driver surveillance that guarantees that the person behind the wheel is the same person that has been approved by Uber to drive its customers around.

Another form of driver surveillance challenged in court involved JustEat drivers, automatically tracked, evaluated, and fired by the company for taking too much time in collecting food for the customers. We discussed this story in Issue #10 – The Memory of an Elephant.

A fourth story makes the cut this week and it’s about a backlash from artists against Adobe and its new generative AI system Firefly.

Sharon Goldman, reporting for VentureBeat:

Adobe’s stock soared after a strong earnings report last week — where executives touted the success of its “commercially safe” generative AI image generation platform Adobe Firefly. They say Firefly was trained on hundreds of millions of licensed images in the company’s royalty-free Adobe Stock offering, as well as on “openly licensed content and other public domain content without copyright restrictions.” On the Firefly website, Adobe says it is “committed to developing creative generative AI responsibly, with creators at the center.”

…

But a vocal group of contributors to Adobe Stock, which includes 300 million images, illustrations and other content that trained the Firefly model, say they are not happy. According to some creators, several of whom VentureBeat spoke to on the record, Adobe trained Firefly on their stock images without express notification or consent.

…

Dean Samed is a UK-based creator who works in Photoshop image editing and digital art. He told VentureBeat over Zoom that he has been using Adobe products since he was 14 years old, and has contributed over 2,000 images to Adobe Stock.

“They’re using our IP to create content that will compete with us in the marketplace,” he said. “Even though they may legally be able to do that, because we all signed the terms of service, I don’t think it is either ethical or fair.”

He said he didn’t receive any notice that Adobe was training an AI model. “I don’t recall receiving an email or notification that said things are changing, and that they would be updating the terms of service,” he said.

What matters here is not whether Adobe is being honest about its ability to gather permission from the artists that have contributed to Adobe Stock.

There’s something else, a theme that is emerging from this and other stories that we have mentioned so far in Synthetic Work, like last week’s story about the British voice actor in Issue #20 – £600 and your voice is mine forever.

And the theme is:

People are starting to think that they have to compete against themselves.

Material for a future intro of a future Free Edition.

Let’s continue with the article:

According to Eric Urquhart, a Connecticut-based artist who has a day job as a matte artist in a major animation studio, artists who joined Adobe Stock years ago could never have anticipated the rise of generative AI.

“Back then, no one was thinking about AI,” said Urquhart, who joined Adobe Stock in 2012 and has several thousand images on the platform. “You just keep uploading your images and you get your residuals every month and life goes on — then all of a sudden, you find out that they trained their AI on your images and on everybody’s images that they don’t own. And they’re calling it ‘ethical’ AI.”

Adobe Stock creators also say Adobe has not been transparent. “I’m probably not adding anything new because they will probably still try to train their AI off my new stuff,” said Rob Dobi, a Connecticut-based photographer. “But is there a point in removing my old stuff, because [the model] has already been trained? I don’t know. Will my stuff remain in an algorithm if I remove it? I don’t know. Adobe doesn’t answer any questions.”

The artists say that even if Adobe did not do anything illegal and this was indeed within their rights, the ethical thing to do would have been to pre-notify their Adobe Stock artists about the Firefly AI training, and offer them an opt-out option right from the beginning.

Which is what Stability AI has been doing for a while now.

But there’s one final subtlety that is worth mentioning:

Adobe, in response to the artists’ claims, told VentureBeat by email that its goal is to build generative AI in a way that enables creators to monetize their talents, much as Adobe has done with platforms like Behance. It is important to note, a spokesperson says, that Firefly is still in beta.

“During this phase, we are actively engaging the community at large through direct conversations, online platforms like Discord and other channels, to ensure what we are building is informed and driven by the community,” the Adobe spokesperson said, adding that Adobe remains “committed” to compensating creators. As Firefly is in beta, “we will provide more specifics on creator compensation once these offerings are generally available.”

To me, this sounds like: “We are happy to compensate you for the images we trained on, but there’s no chance in hell we’ll let you remove them from our training dataset. If everybody does that, our model is crap.”

More on the competition with ourselves:

Samed said that Adobe Stock is “not a feasible platform for us to operate in anymore,” adding that the marketplace is “completely flooded and inundated with AI content.”

Adobe should “stop using the Adobe Stock contributors as their own personal IP, it is just not fair,” he said, “and then the derivative that was created from that data scrape is then used to compete against the contributors that [built and supported] that platform from the beginning.”

Dobi said he has noticed his stock photos have not been selling as well. “Someone can just type in a prompt now and recreate the images based off your hard work,” he said. “And Adobe, which is supposed to be, I mean, I guess they thought they were looking out for creators, apparently aren’t because they’re stabbing all their creators that helped create their stock library in the back.”

Urquhart said that as an artist in his mid-50s who also does analog fine art, he feels he can “ride this out,” but he wonders about the next generation of artists who have only worked with digital tools. “You have very talented Gen Z artists, they have the most to worry about,” he said. “Like if all of a sudden AI takes over and iPad digital art is no longer relevant because somebody just typed in a prompt and got five versions of the same thing, then I can always just pick up my paintbrush.”

…

“The damage that’s going to be done is going to be unlike anything we’ve ever seen before,” he said. “I’m in the process of selling my company, I’ve got out — I don’t want to participate or compete in this marketplace anymore.”

For any new technology to be successfully adopted in a work environment or by society, people must feel good about it (before, during, and after its use). No business rollout plan will ever be successful before taking this into account.

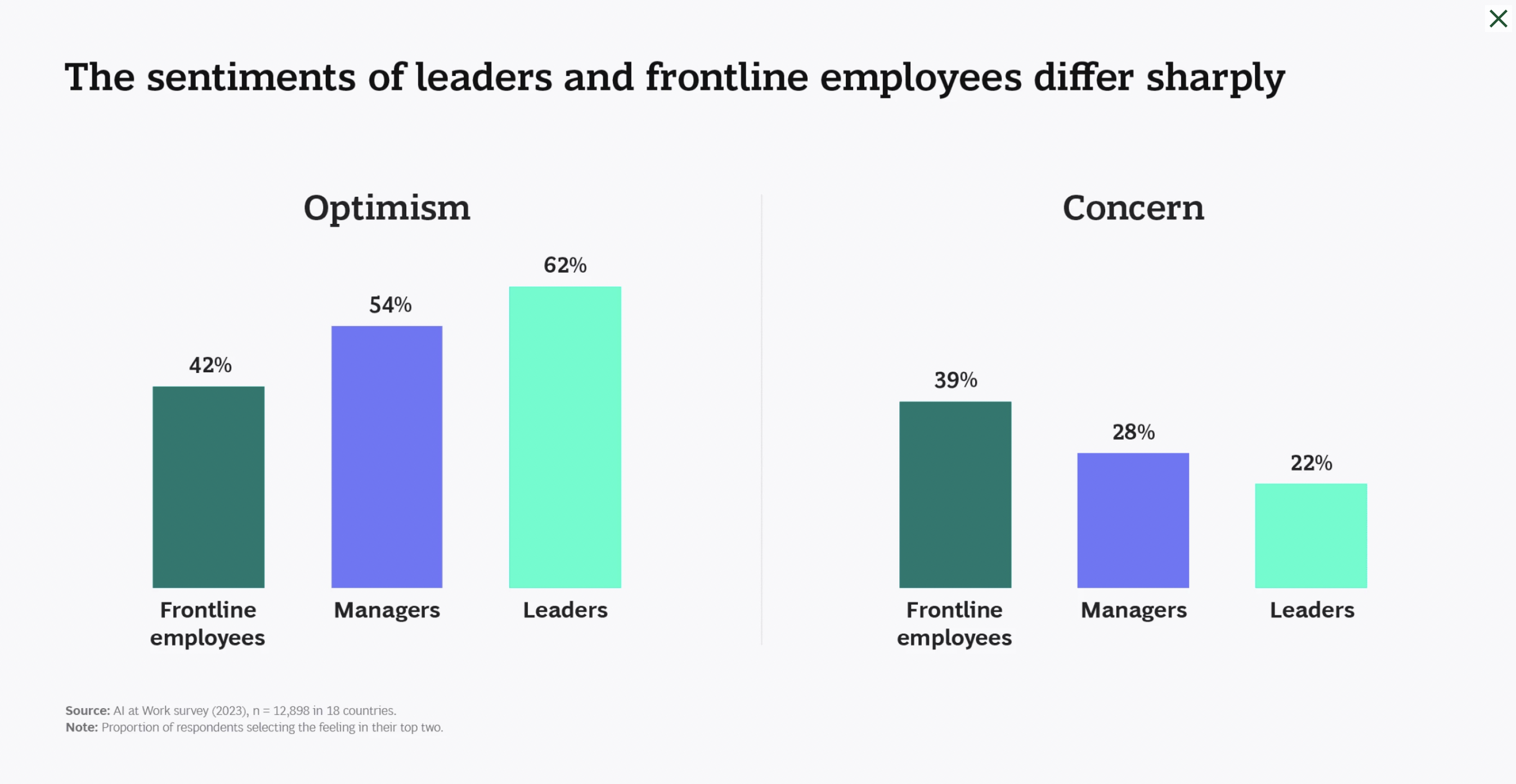

The Boston Consulting Group surveyed nearly 13,000 people—from executive suite leaders to middle managers and frontline employees—in 18 countries on what they feel about AI.

The results indicate a growing optimism, especially among business leaders, and a decreasing concern, especially among people that use AI:

The discrepancy between the managers and the frontline workers is probably due to the fact that managers feel safe in their jobs.

So, perhaps, it’s time to remember my (in)famous post on various social media in May, which was untitled, but if it had a title it would be: “AI is coming for people in the top management positions of large organizations, too.”

One thing that I want to make clear: AI is coming for people in the top management positions of large organizations, too.

When we talk about the impact of AI on jobs or, more specifically potential job displacement, we normally think about the bottom of the corporate hierarchy,…

— Alessandro Perilli 🇺🇦 (@giano) May 11, 2023

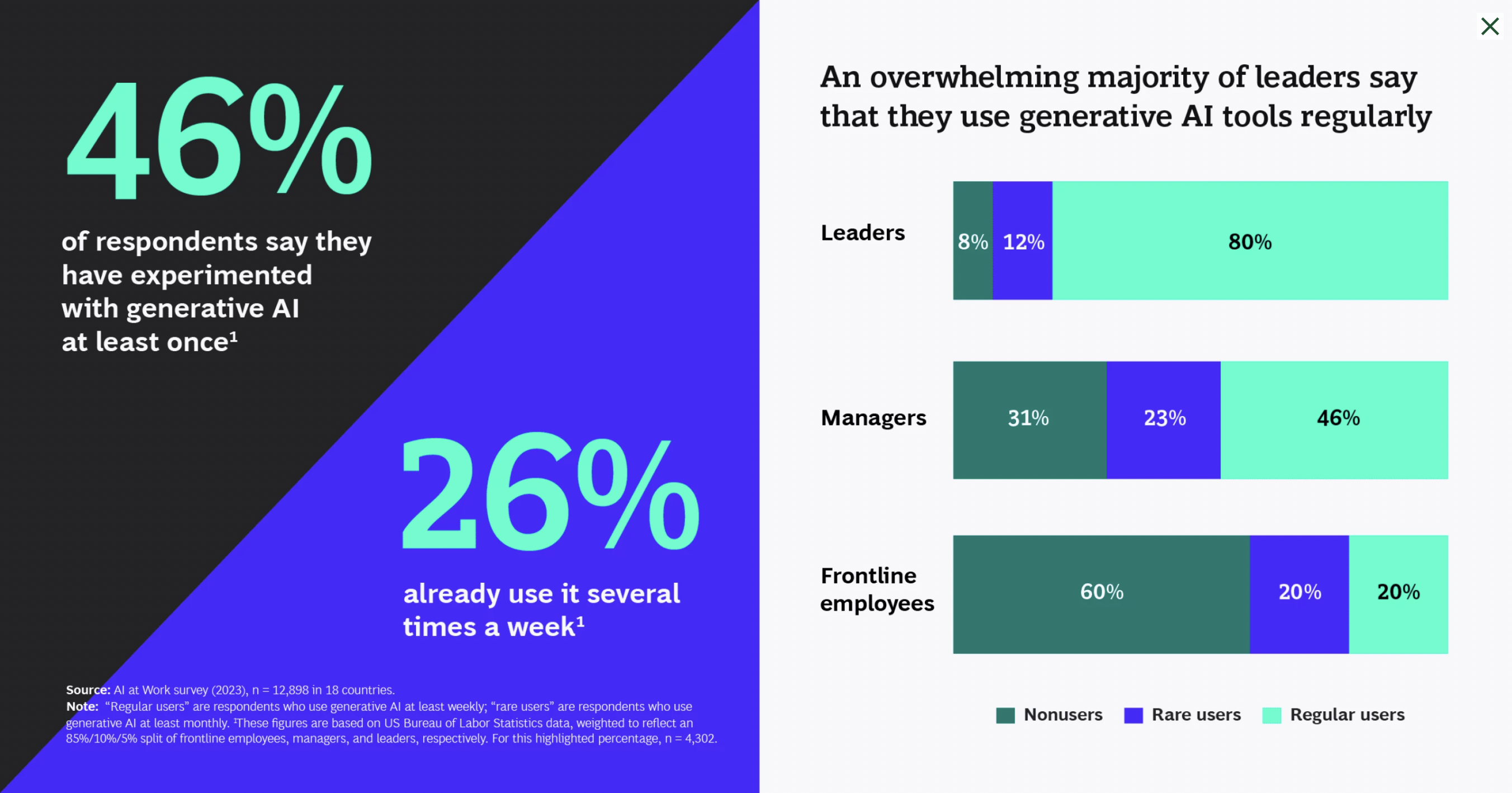

Speaking of managers, the survey also suggests that the more senior the manager, the more likely they are to be optimistic about AI:

So, the narrative that comes across is that the more you use AI the more optimistic you become, and business leaders are champions in this regard because they have embraced AI more than anybody else.

True heroes of the digital transformation.

Of course, all of this sounds fantastic, except that:

- The Boston Consulting Group is a consulting firm that makes money if its customers want to change things. The more galvanized they are about a new technology, the more there is to transform. So this survey is valuable but not exactly unbiased.

- If you go read the Splendid Edition of Synthetic Work, there’s a growing number of professionals that are getting quite upset about the introduction of AI in the workplace to do their jobs.

- My first-hand experience with a sample of 20,000 people working in the tech industry (so, not exactly buddies) is that, until three months ago, nobody (all the way to the top) knew what AI was, let alone being a consumer of it.

So BCG here has done some miracles finding 13,000 enlightened people that not only had an opinion already back in 2018, but have already managed to build a significant experience with Generative AI in the workplace.

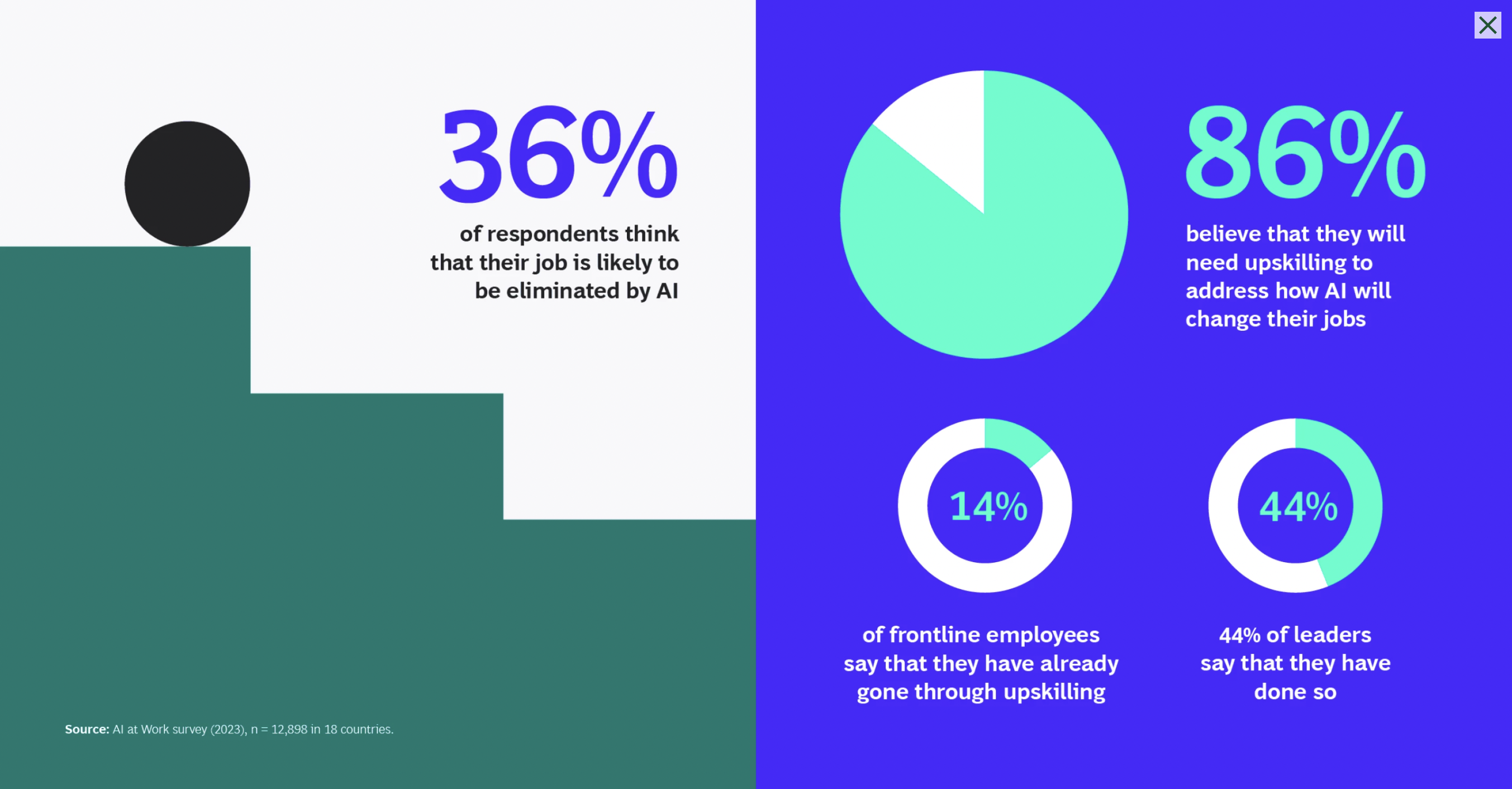

And you can tell it’s a miracle because BCG reports that 44% of the business leaders they have surveyed already went through upskilling:

I’m curious about what these business leaders did upskill on considering that the AI models (and systems that depend on them) change almost every week.

Again, a miracle.

Now.

It would be more interesting to see a survey conducted by a reputable organization that doesn’t profit from an enthusiastic adoption of emerging technologies.

It would also be more interesting to see a survey conducted among organizations where AI has been rolled out for a while and has impacted jobs. And notice that “rolled out” doesn’t mean that you have logged into ChatGPT or Bing to generate a few paragraphs of text. That is not an indicator of enterprise AI adoption.

I will go out on a limb and say that the perception of AI would be less favorable.

Let’s wait until people realize what they are gaining and what they are losing before drawing conclusions.

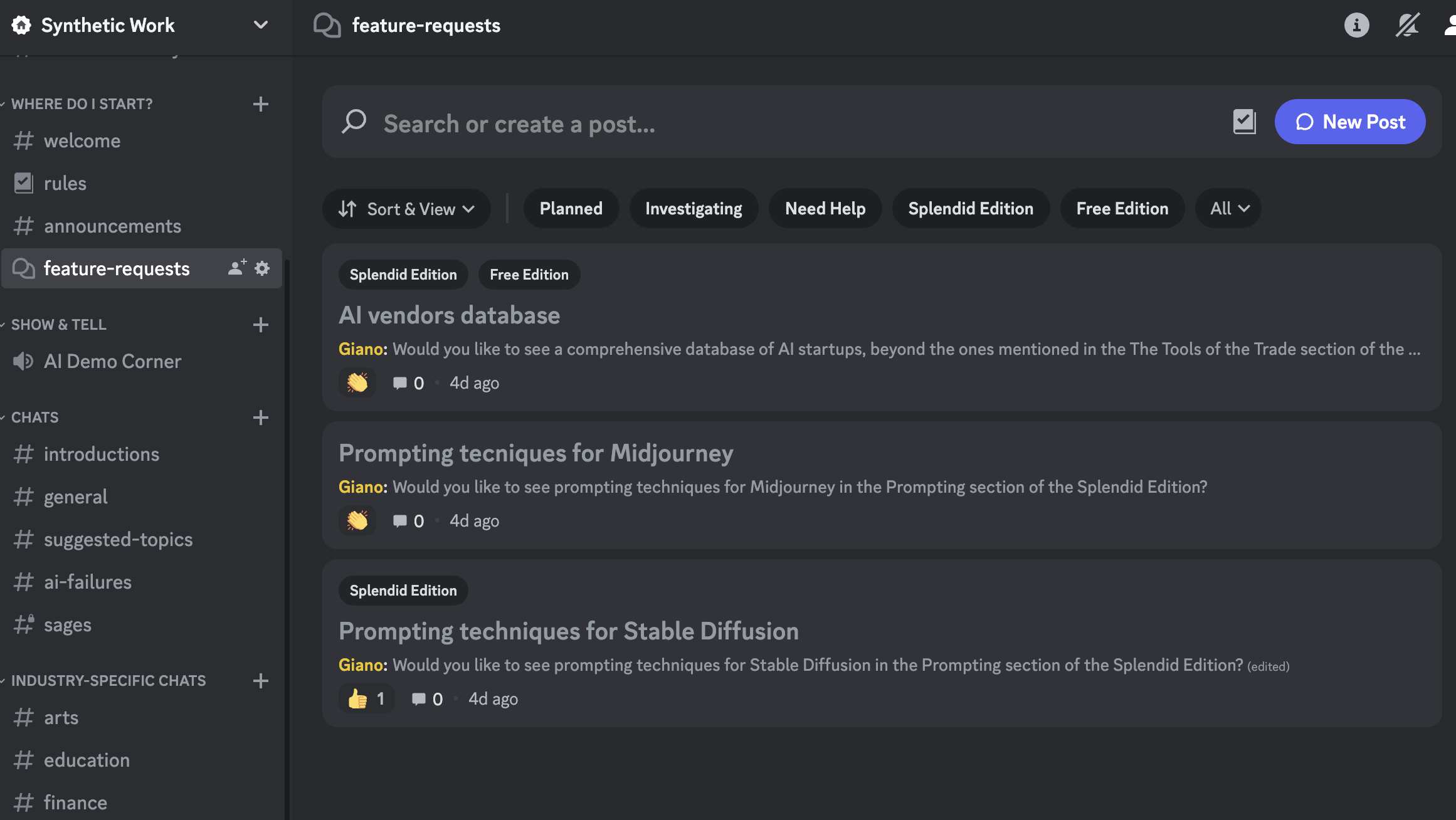

If you have not checked it recently, the Discord server of Synthetic Work has a new section:

You can go there to submit a request for a new feature or vote on requests submitted by your fellow Synthetic Work members.

Another thing: I’ve started building a database of technology providers that have a relevant connection with AI and Synthetic Work.

It’s not right to call them AI vendors because, except for the handful of companies that train foundational models, like OpenAI or Anthropic, most technology providers will use or are using AI in one way or another. And it’s not very useful if I create a database that contains every company in the world.

So, if the company has been mentioned in past Issues of Synthetic Work, it will have a profile in this new database. And this profile will try to explain in a very clear way why the company is relevant.

Try with Anthropic.