- Intro

- The top AI experts in the world, the ones that build foundation AI models used by the rest of the planet, have no idea what the limits of generative AI are.

- What Caught My Attention This Week

- A couple of economists insinuate that employers could use spyware to monitor their employees’ activities and use that data to train AI models to replace them.

- The Writers Guild of America (WGA) agrees on a contract that regulates the use of AI in Hollywood.

- Two CEOs suggest that their AI-focused startups create jobs in the film industry, rather than destroy them.

- The Way We Work Now

- On top of replacing humans in the act of creating art, AI is also being tested as a better art authenticator and a better art curator.

- How Do You Feel?

- A university math professor claims he’s never used ChatGPT and never will. Yet, he speaks about AI at conferences.

The top AI experts in the world, the ones that build foundation AI models used by the rest of the planet, have no idea what the limits of generative AI are.

In May 2023, Ilya Sutskever, co-founder and chief scientist of OpenAI, said (as reported by Sharon Goldman for VentureBeat):

“You can think of training a neural network as a process of maybe alchemy or transmutation, or maybe like refining the crude material, which is the data.”

And when asked by the event host whether he was ever surprised by how ChatGPT worked better than expected, even though he had ‘built the thing,’ Sutskever replied:

“Yeah, I mean, of course. Of course. Because we did not build the thing, what we build is a process which builds the thing. And that’s a very important distinction. We built the refinery, the alchemy, which takes the data and extracts its secrets into the neural network, the Philosopher’s Stones, maybe the alchemy process. But then the result is so mysterious, and you can study it for years.”

Dario Amodei, CEO and co-founder of Anthropic, in an interview with Devin Coldewey for TechCrunch:

“Do you think that we should be trying to identify those sort of fundamental limits?” asked the moderator (myself).

“Yeah, so I’m not sure there are any,” Amodei responded.

It’s not in these people’s best interest to tell the press, potential investors, and customers something like “Oh yes, we can see the limits of generative AI, and they are right around the corner.” But if a limit were in sight, a skilled executive wouldn’t make bold statements suggesting the opposite.

Unless you are trying to build another Theranos.

Let’s assume that Sutskever and Amodei are not the next Elizabeth Holmes. Their statements are credible enough that:

- Microsoft is planting the seed of generative AI deep into Windows and Office.

- Amazon announced an investment of up to $4 billion in Anthropic.

We have reached a point of no return where we can’t do anything but keep moving forward.

Nothing will stop us from finding out what generative AI can do as we keep scaling up computational power and data.

And even if we hit a wall, what we build between now and then is enough to permanently alter the course of history.

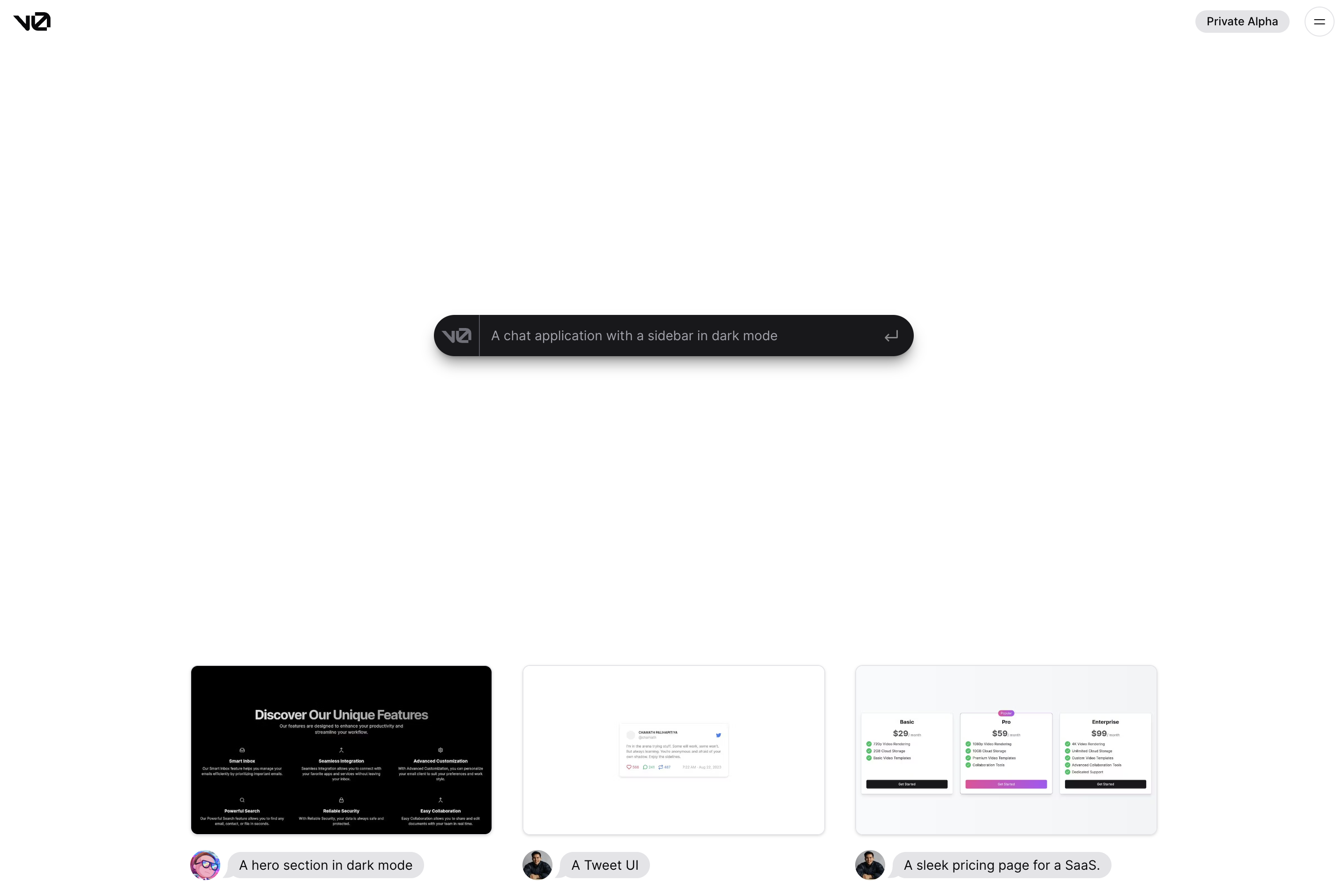

Look at the following screenshot. This is an upcoming new service from a startup called Vercel.

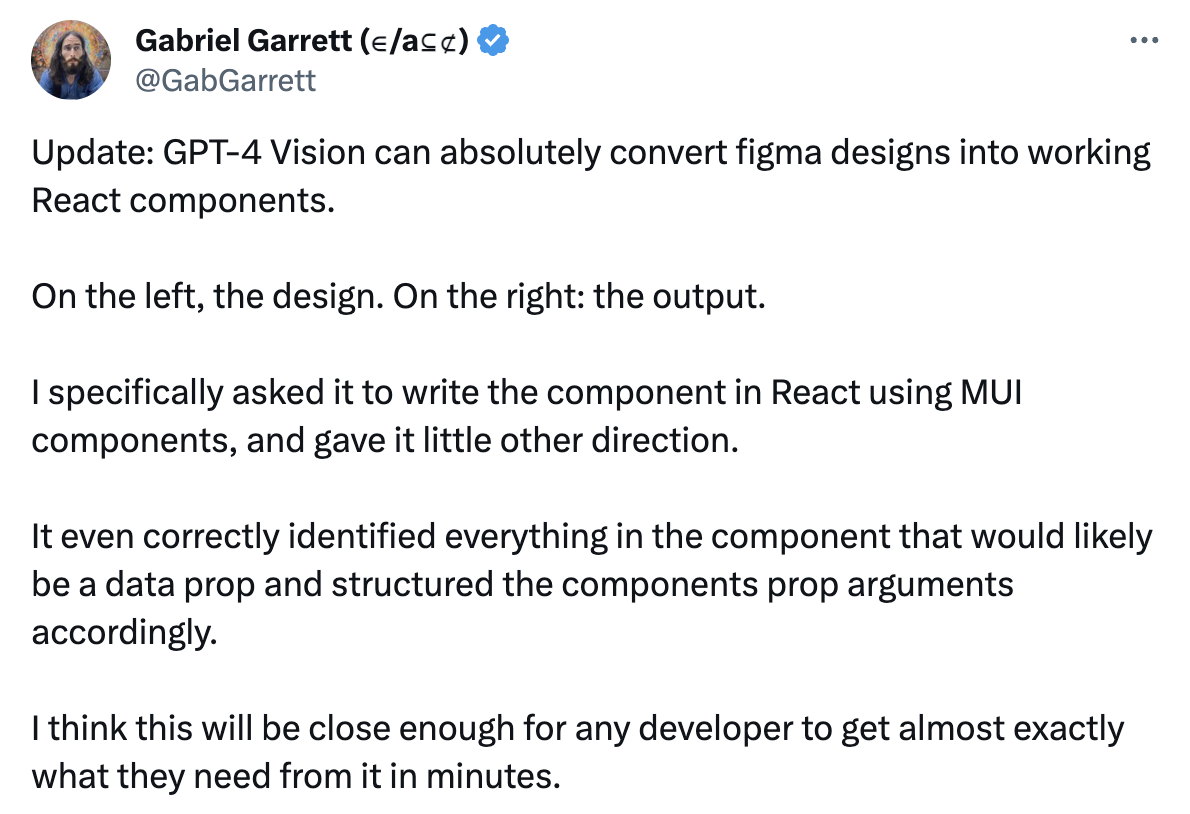

Now look at this slightly more technical post on X about the new GPT-4V (as in Vision) model announced by OpenAI:

This is the design mockup:

This is the functional prototype created by GPT-4V, without any fine-tuning specific to the task:

As you can infer, in a not-too-distant future anybody will be able to give shape to an idea and build a product that millions of people might want to use. Everyone will be able to simply describe how the product looks and how it works by using everyday language, without the need to learn complex jargon and programming languages.

Nobody will be able to stop anyone from solving problems and creating value, for themselves and for others.

People all around the world who have never had a chance to access venture capital will be able to show prototypes to investors and customers.

The way we work today is going to change dramatically.

The relationship between company owners and their workforce is going to change dramatically.

You can lead your employees, your students, or your partners into this new world. Or you can endure it once the transition is over.

It’s a choice you can make today.

Alessandro

The first thing that caught my attention this week is the insinuation of a couple of economists, from Oxford University and MIT, that employers could use spyware to monitor their employees’ activities and use that data to train AI models to replace them.

Thor Benson, reporting for Wired:

Enter corporate spyware, invasive monitoring apps that allow bosses to keep close tabs on everything their employees are doing—collecting reams of data that could come into play here in interesting ways. Corporations, which are monitoring their employees on a large scale, are now having workers utilize AI tools more frequently, and many questions remain regarding how the many AI tools that are currently being developed are being trained.

Put all of this together and there’s the potential that companies could use data they’ve harvested from workers—by monitoring them and having them interact with AI that can learn from them—to develop new AI programs that could actually replace them. If your boss can figure out exactly how you do your job, and an AI program is learning from the data you’re producing, then eventually your boss might be able to just have the program do the job instead.

“When it comes to monitoring workflows, I do think that’s going to be a way we automate a lot of this stuff,” Frey says. “What you might be able to do is take some of those foundational models and train them on some of the data you have internally and fine-tune them, or you could train a model from scratch just with your internal data.”

David Autor, a professor of economics at MIT, says he also thinks AI could be trained in this way. While there is a lot of employee surveillance happening in the corporate world, and some of the data that’s collected from it could be used to help train AI programs, simply learning from how people are interacting with AI tools throughout the workday could help train those programs to replace workers.

“They will learn from the workflow in which they’re engaged,” Autor says. “Often people will be in the process of working with a tool, and the tool will be learning from that interaction.”

As an AI researcher and practitioner, I can guarantee you that we are very far from such a scenario, and it’s bizarre that Wired published such a story without any evidence that we have the technology to make it happen.

You see, I had the idea of learning employees’ behavior by observing their activities on a desktop computer a few years ago. I wanted to use it to build a next-generation automation solution for my former employer.

While my former employer didn’t show any interest in the idea, or in AI in general, I thought it would be critical to research the topic further. So I proceeded with an in-depth exploration of the current capabilities and limits of today’s AI models to see whether my vision could materialize. And I got in touch with the researchers and practitioners who have been exploring that side of AI.

Computer vision applied to desktop interfaces, to simplify the terminology, is still in its infancy and almost no startup in the world is working on it.

Between today’s state of affairs and the scenario described by Wired, there’s an ocean. The amount of progress the AI community would need to make to get there is enormous. More importantly, the AI community would first have to start looking into it. And it isn’t.

So it’s unclear why the reporter decided to interview, of all people, two economists on a topic that requires a deep technical understanding of the state of the art in AI.

Of all the things that you could be concerned about when it comes to the impact of AI on jobs, this is not one of them.

The second thing that caught my attention this week is the end of the Writers Guild of America (WGA) strike after 146 days.

Of course, what every professional category was waiting to read were the terms regulating the use of AI in the new contract.

The summary of these new terms is as follows:

We have established regulations for the use of artificial intelligence (“AI”) on MBA-covered projects in the following ways:

- AI can’t write or rewrite literary material, and AI-generated material will not be considered source material under the MBA, meaning that AI-generated material can’t be used to undermine a writer’s credit or separated rights.

- A writer can choose to use AI when performing writing services, if the company consents and provided that the writer follows applicable company policies, but the company can’t require the writer to use AI software (e.g., ChatGPT) when performing writing services.

- The Company must disclose to the writer if any materials given to the writer have been generated by AI or incorporate AI-generated material.

- The WGA reserves the right to assert that exploitation of writers’ material to train AI is prohibited by MBA or other law.

If you are interested in reading the full agreement, it’s here.

This is for the writers. The actors are still on strike, and we’ll see what kind of protection they manage to negotiate against the use of synthetic versions of themselves.

In the meantime, SAG-AFTRA members authorized a video game strike with 98.32% of the vote.

Quoting the official announcement:

The strike authorization does not mean the union is calling a strike. SAG-AFTRA has been in Interactive Media Agreement negotiations with signatory video game companies (Activision Productions Inc, Blindlight LLC, Disney Character Voices Inc., Electronic Arts Productions Inc., Formosa Interactive LLC, Insomniac Games Inc., Epic Games, Take 2 Productions Inc., VoiceWorks Productions Inc., and WB Games Inc.) since October 2022. Throughout the negotiations, the companies have refused to offer acceptable terms on some of the issues most critical to our members, including wages that keep up with inflation, protections around exploitative uses of artificial intelligence, and basic safety precautions. The next bargaining session is scheduled for Sept. 26, 27 and 28, and we hope the added leverage of a successful strike authorization vote will compel the companies to make significant movement on critical issues where we are still far apart.

…

“Between the exploitative uses of AI and lagging wages, those who work in video games are facing many of the same issues as those who work in film and television,” said Chief Contracts Officer Ray Rodriguez. “This strike authorization makes an emphatic statement that we must reach an agreement that will fairly compensate these talented performers, provide common-sense safety measures, and allow them to work with dignity. Our members’ livelihoods depend on it.”

This second strike authorization tells you how big a deal this is.

A lot of people, with a lot of different jobs, fear that generative AI will replace them.

The third thing that has caught my attention is the statement of two CEOs suggesting that their AI-focused startups create jobs, rather than replace them.

Haje Jan Kamps, reporting for TechCrunch:

“Honestly, and I really can say this with a straight face, we create jobs. Because there’s so much shit to do to actually put these use cases into production, that a lot of our customers can’t fill those jobs fast enough,” May Habib, CEO at enterprise-targeted AI tool Writer, told me onstage at TechCrunch Disrupt last week — just days after the company raised $100 million at an undisclosed valuation.

…

The use of AI in the writing process was front and center in the writer’s strike; on Sunday evening, the Writers Guild and Alliance of Motion Picture and Television Producers reached a tentative agreement. Yet, Ofir Krakowski, co-founder and CEO at deepdub.ai, claims that his product hasn’t caused a single job loss. His take is that the studios are using his tools to make content more accessible:

“As of today, nobody has lost his job because of what we do. Actually, most of our customers are looking to monetize on content that was not economically viable to monetize on. So we are enabling them to do more work.”

You must be completely out of touch with reality to make statements like those in a moment like this. Especially considering the terms of the new WGA contract that we discussed in the previous story.

Expect more CEOs to exhibit the same level of delusion in the coming months.

Here’s the full interview:

This is the material that will be greatly expanded in the Splendid Edition of the newsletter.

It’s well established that artificial intelligence is changing the way we work in the Art industry. But not only in the way you might think.

On top of replacing humans in the act of creating art, AI is also being tested as a better art authenticator and a better art curator.

Let’s start with art authentication.

Dalya Alberge, reporting for The Guardian:

Authenticating works of art is far from an exact science, but a madonna and child painting has sparked a furious row, being dubbed “the battle of the AIs”, after two separate scientific studies arrived at contradictory conclusions.

Both studies used state-of-the art AI technology. Months after one study proclaimed that the so-called de Brécy Tondo, currently on display at Bradford council’s Cartwright Hall Art Gallery, is “undoubtedly” by Raphael, another has found that it cannot be by the Renaissance master.

…

Having used “millions of faces to train an algorithm to recognise and compare facial features”, they stated: “The similarity between the madonnas was found to be 97%, while comparison of the child in both paintings produced an 86% similarity.” They added: “This means that the two paintings are highly likely to have been created by the same artist.”

But algorithms involved in a new study by Dr Carina Popovici, a scientist with Art Recognition, a Swiss company based near Zurich, have now returned an 85% probability for the painting not to be painted by Raphael.

…

Sir Timothy Clifford, a leading scholar of the Italian Renaissance and former director general of the National Galleries of Scotland, was intrigued to hear of science’s conflicting findings: “I do feel rather strongly that mechanical means of recognising paintings by major artists are incredibly dangerous.

“I’ve never contemplated the idea of using these AI things. I think they’re terribly unlikely to be remotely accurate. But how fascinating.”

Sir Timothy Clifford might be in for a rude awakening. There’s an enormous amount of money at stake, as insurers have to pay out millions of dollars when a painting is found to be a fake. They won’t rely on human expertise alone if they have a better alternative.

Let’s continue with art curation.

Zachary Small, reporting for The New York Times:

Marshall Price was joking when he told employees at Duke University’s Nasher Museum of Art that artificial intelligence could organize their next exhibition. As its chief curator, he was short-staffed and facing a surprise gap in his fall programming schedule; the comment was supposed to cut the tension of a difficult meeting.

But members of his curatorial staff, who organize the museum’s exhibitions, embraced the challenge to see if A.I. could replace them effectively.

…

“We naïvely thought it would be as easy as plugging in a couple prompts,” Price recalled, explaining why curators at the North Carolina university have spent the past six months teaching ChatGPT how to do their jobs.

…

The experiment’s results will be unveiled on Saturday when the Nasher opens the exhibition “Act as if You Are a Curator,” which runs on campus through the middle of January.

…

ChatGPT, a prominent chatbot developed by the company OpenAI, was able to identify themes and develop a checklist of 21 artworks owned by the museum, along with directions of where to place them in the galleries. But the tool lacked the nuanced expertise of its human colleagues, producing a very small show with questionable inclusions, mistitled objects and errant informational texts.

Museum employees and researchers at Duke are debating whether the show is comparable to others or simply considered “good enough” for a computer. When asked if the ChatGPT experiment resulted in a good exhibition, Price paused before laughing.

“I would say it’s an eclectic show,” he said. “Visually speaking it will be quite disjointed, even if it’s thematically cohesive.”

…

The process began with Mark Olson, a professor of visual studies at Duke, who worked through the technical challenges of fine-tuning ChatGPT to process the museum’s collection of nearly 14,000 objects. A curatorial assistant named Julianne Miao explored the possibilities of that system in some of the first “conversations” with the chatbot.

“Act as if you are a curator,” Miao instructed. “Using your data set, select works of art related to the themes of dystopia, utopia, dreams and subconscious.”

Those specific themes came after an earlier conversation in which the machine generated ideas for exhibitions about social justice and environmentalism. But its most prevalent responses were on topics like the subconscious, and the human curators directed ChatGPT to continue developing those ideas. The A.I. named its project “Dreams of Tomorrow: Utopian and Dystopian Visions.”

The process was not altogether different from a typical curatorial brainstorming session, but the chatbot could search through the entire collection within a few seconds and surface artworks that humans might have overlooked.

I’d argue that there’s an enormous difference between fine-tuning an AI model to understand a museum’s archive and fine-tuning an AI model to understand the job of a curator.

As long-term readers of this newsletter know too well, I always recommend thinking about an AI model as the best Hollywood actor working in your movie. And you are the Film Director. This is a much more fitting analogy than the stereotypical “AI is a bright intern” that you hear everywhere.

In my recommended analogy, what the Nasher Museum of Art did is the equivalent of hiring a Hollywood actor, asking him to play the role of a speleologist, and telling him to study rocks inside out, without ever studying the profession and the day-to-day tasks it entails. Only the rocks.

Quite a misguided approach.

In fact:

“The algorithm was adamant that we included several Dalí lithographs on the mysteries of sleep,” explained Julia McHugh, a curator and the museum’s director of academic initiatives.

Those seemed like a reasonable choice since Salvador Dalí is associated with Surrealism and the artistic interpretation of dreams. But it was unclear why ChatGPT was pulling other objects into the exhibition, including two stone figures and a ceramic vase from Mesoamerican traditions. The curator said the vase was in particularly bad condition and not something she would typically put on display.

ChatGPT, McHugh said, might have picked up information from keywords included in the digital records for those objects, describing them as accompaniments in the afterlife. However, it also incorrectly titled the stone figures as “Utopia” and “Dystopia” and named the Mayan vase “Consciousness,” which made all three perfect candidates for the exhibition.

…

The mistakes demonstrated clear drawbacks of automating the curatorial process. “It made me think really carefully about how we use keywords and describe artworks,” McHugh said. “We need to be mindful about bias and outdated systems of cataloging.”

There might be more details that have been omitted for the sake of brevity, but from what’s been shared, it seems that the whole fine-tuning approach was focused on the wrong learning objective.

This is one of the reasons why I insist on recommending that all my clients develop an internal team skilled at fine-tuning.

The process of teaching an AI model to do a job is not as trivial as one might think.
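To make the difference between the two learning objectives concrete, here is a minimal Python sketch of what a training example for each could look like. It assumes a chat-style JSON Lines format made of `{"messages": [...]}` objects, similar to the one OpenAI documents for fine-tuning its chat models. The catalog record, its field names, and the helper functions are hypothetical, and nothing here reflects how the Duke team actually prepared its data.

```python
import json

# Hypothetical catalog record, invented for illustration only
# (not taken from the Nasher Museum's actual collection data).
record = {
    "id": "OBJ-0001",
    "title": "Vase",
    "culture": "Maya",
    "keywords": ["afterlife", "ritual"],
    "condition": "poor",
}


def archive_example(rec: dict) -> dict:
    """An example that teaches *what is in the archive*: a fact lookup."""
    return {
        "messages": [
            {"role": "user", "content": f"Describe object {rec['id']}."},
            {
                "role": "assistant",
                "content": f"{rec['title']} ({rec['culture']}). "
                f"Keywords: {', '.join(rec['keywords'])}.",
            },
        ]
    }


def curator_example(rec: dict, theme: str) -> dict:
    """An example that teaches *how a curator decides*: a selection
    judgment that weighs physical condition, not just keyword overlap."""
    if rec["condition"] == "poor":
        answer = (
            f"Exclude {rec['id']}: it is in poor condition and not suitable "
            "for display, whatever its catalog keywords suggest."
        )
    else:
        answer = (
            f"Shortlist {rec['id']}: check its title and its relevance to "
            f"'{theme}' against the object record before including it."
        )
    return {
        "messages": [
            {"role": "system", "content": "You are a museum curator."},
            {"role": "user", "content": f"Theme: {theme}. Should {rec['id']} be in the show?"},
            {"role": "assistant", "content": answer},
        ]
    }


def to_jsonl(examples: list) -> str:
    """Serialize examples as JSON Lines: one JSON object per line."""
    return "\n".join(json.dumps(ex) for ex in examples)


print(to_jsonl([archive_example(record), curator_example(record, "dreams and the subconscious")]))
```

What matters is the assistant's answer in each example: a dataset made only of examples like the first one teaches the model the rocks, while examples like the second one start to teach it the profession.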

Putting all that aside, clearly, there’s ample room for generative AI to take over more jobs in the Art industry.

For any new technology to be successfully adopted in a work environment or by society, people must feel good about it (before, during, and after its use). No business rollout plan will ever succeed unless it takes this into account.

A few weeks ago, a short post on X caught my attention:

This is a perfectly valid feeling, and there are plenty of people who don’t want to have anything to do with new technologies.

But this is a university professor. It’s his job to prepare his students for the future.

In Mathematics. It’s his job to unravel the math behind generative AI for his students.

And he talks about AI.

How many people out there are talking about AI without even having tried it?

This week’s Splendid Edition is titled The AI Council.

In it:

- Intro

- How it feels to build a company with human collaborators and AIs.

- What’s AI Doing for Companies Like Mine?

- Learn what the European Central Bank, the European Centre for Medium-Range Weather Forecasts, and the Australian Federal Police are doing with AI.

- A Chart to Look Smart

- GPT-4 performs exceptionally well in diagnosing arcane CPC medical cases (with the right prompt). Are large language models the future of diagnoses?

- The Tools of the Trade

- What if you could ask the advice of the top experts in the world in their fields, and watch them debate the business challenge you are facing? Now you can.