- People have started automating the production and publishing of YouTube shorts. I call it “The Perpetual Garbage Generator”.

- GPT-4 can now memorize all the infamous details of your life and use them against you in future conversations

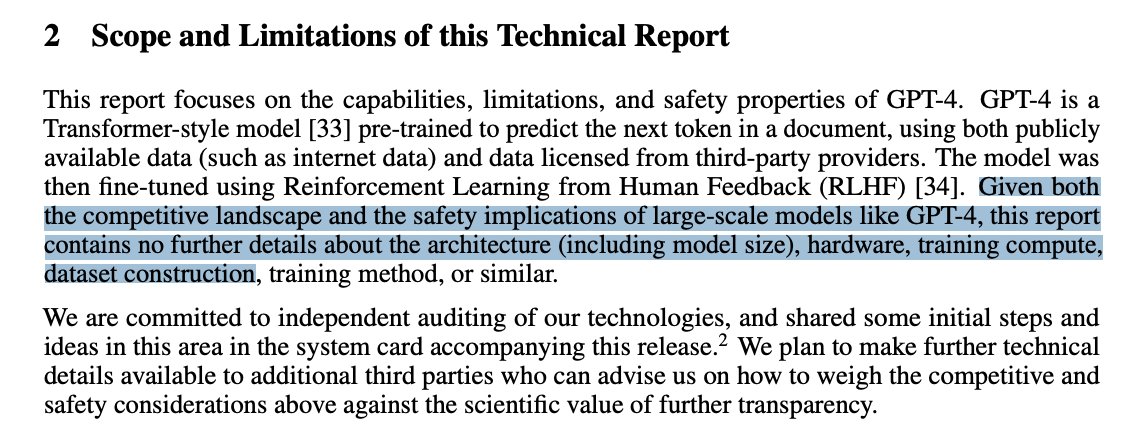

- If you are a telemarketer, a teacher, or a psychologist, I have a scary, scary chart to show you

- In less than 50 years, we moved from clunky digital cameras to sleek smartphones that use AI to make up photos

- The Romanian government has started using AI to work way less than any other politician on Earth

- People using AI for software development are caught smiling like teenagers in love in front of the screen

- South Park has dedicated an entire episode to ChatGPT and it’s glorious

P.S.: This week’s Splendid Edition of Synthetic Work is titled “Medical AI to open new hospital in the metaverse to assist (human) ex doctors affected by severe depression”, and it’s focused on how AI is impacting the Health Care industry.

This week, I’m unveiling a new section of Synthetic Work.

You see, Synthetic Work is dedicated to understanding the impact of artificial intelligence on human labor, economy, and society. Hopefully, in a way that is not intimidating to non-technical people.

Given this mission, it’s paramount that Synthetic Work showcases and promotes AI-assisted and AI-generated labor. Which means all forms of synthetic media.

Last week, I announced Fake Show. That is a prime example of synthetic media: the synthetic voices you’ve heard in the promo are the state of the art, and the images of the puppets are made with Stable Diffusion. More aspects of the show, except the dialogues, will become synthetic as technology evolves.

But I also wanted to build a home dedicated to each type of synthetic media: photography, painting, music, literature, etc. So you can see what’s really possible.

This week we start with photography: photography.synthetic.work

For now, in this section, there are only the synthetic photos that I have generated, but I hope Synthetic Work will host the works of many talented people in the future. If you know any of those, send them my way.

Alessandro

I am not quite sure why I keep selecting two things every week rather than one, or three. But here we go again with two things that I believe are worth your attention.

The first thing to pay attention to this week is that somebody (almost completely) automated the production and publishing of YouTube videos thanks to generative AI.

Before I explain, take a look for yourself:

So, what’s happening here?

- The historical facts are taken from a website that publishes that kind of information on a daily basis (no AI is involved in this step).

- The narrative is generated by asking ChatGPT to create an article based on the historical facts in the previous step.

- The background image is generated by asking ChatGPT to describe images that could accompany the article generated in the previous step. Those descriptions are then used as prompts in Stable Diffusion.

- The narration is generated with one of the best synthetic voices available on the market today (but not as good as the ones I use in Fake Show), from ElevenLabs.

- The background music is randomly selected from a library (but in the future, it will be synthetic as well)

- All these ingredients are combined together to generate a video that is then uploaded to YouTube.

Minus a few steps that the user is struggling to automate, but that are not impossible to automate, the whole process could be set on autopilot and generate new content on a daily basis.
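For the technically curious, the steps above can be sketched as a simple pipeline. This is only an illustration, not the creator's actual code: every function name here is hypothetical, and each stage is a stub that returns placeholder data instead of calling the real service (ChatGPT, Stable Diffusion, a synthetic voice, a music library).

```python
import random
from dataclasses import dataclass


@dataclass
class Video:
    script: str
    image_prompts: list
    narration: str
    music: str


# All functions below are hypothetical stubs: each one stands in for a real
# service in the workflow described above and returns placeholder data.
def fetch_historical_facts(date):
    # Step 1: no AI involved, the facts come from a "this day in history" site.
    return [f"Placeholder fact for {date}"]


def write_article(facts):
    # Step 2: a chat model (ChatGPT in the original workflow) writes the narrative.
    return "Article based on: " + "; ".join(facts)


def describe_images(article, count=3):
    # Step 3: the chat model describes images to accompany the article.
    # In the real workflow these descriptions become Stable Diffusion prompts.
    return [f"Illustration {i + 1} for: {article[:40]}" for i in range(count)]


def synthesize_voice(article):
    # Step 4: a text-to-speech service would return an audio file here.
    return "narration.mp3"


def pick_music(library, rng):
    # Step 5: the background track is picked at random from a library.
    return rng.choice(library)


def build_video(date, library, seed=0):
    # Step 6: combine all the ingredients (the upload itself is left out).
    rng = random.Random(seed)
    article = write_article(fetch_historical_facts(date))
    return Video(
        script=article,
        image_prompts=describe_images(article),
        narration=synthesize_voice(article),
        music=pick_music(library, rng),
    )


video = build_video("March 17", ["calm.mp3", "epic.mp3"])
print(video.script)
```

Once every stub is replaced by a real call, running `build_video` on a daily schedule is all the "autopilot" would need.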

This is what I was referring to when I said “People will use generative AI to saturate every content platform that exists: short stories, (audio)books, images, videos, music, etc.” in the previous issue of Synthetic Work, “For Dummies: How to steal your coworkers’ promotion with AI”.

A future Splendid Edition of Synthetic Work will focus on the Media industry and media production. This is just a glimpse of what’s happening.

This example is also very important to give you a sense of the enormous competitive advantage that people who use AI for their work will have over people who don’t. The former group will be able to offer much higher quality in infinite quantity. And not just in the Media industry, but everywhere.

–

The second thing to pay attention to this week is, obviously, the release of GPT-4, the AI model with mythological qualities superior to the ones of unicorns and centaurs.

As I wrote on the About page, I have no intention of turning Synthetic Work into a news-breaking media project. Plus, by the time you read this newsletter, you’ll probably have read hundreds of articles regurgitating the press announcement.

Hence, here are a few non-obvious things about GPT-4 that are interesting:

1. As I mentioned in a previous issue of Synthetic Work, GPT-4 introduces an unprecedented capability to memorize the context of a conversation held with the user. In technical jargon, this memory is called the context window, and it’s what helps a large language model (LLM) like GPT-4 avoid repeating the same things over and over, or contradicting itself during a conversation.

The smaller the context window, the more likely you are to feel like you’re talking to a patient with Alzheimer’s disease.

GPT-4’s context window can store up to 32,768 tokens, which is equal to approximately 25,000 words (a token doesn’t always equal a word). That is enough to store about a third of a short book (books normally run between 70,000 and 120,000 words), the entire script of a movie (Avengers: Endgame is 24,000 words), the whole branding guideline of a large corporation, or:

Biggest practical improvement in @OpenAI GPT-4 to me are 8K and 32K context windows. In our weekly all hands, the median speaker says 200+ tokens/minute. So you can talk to the model without any chaining about almost 3 hours of human speaker content. 16x up from a few months ago.

— Mario Schlosser (@mariots) March 14, 2023

Mario Schlosser is the co-founder and CEO of Oscar Health, a health insurance startup that uses AI, and he might be referring to the implications of this enormous context window for all those use cases where a protracted interaction with the user is necessary: imagine a virtual therapist that can now sustain an hour-long session with a patient affected by mild depression.

By comparison, ChatGPT has a context window of just 4,096 tokens or 3,072 words. Enough for a 6-page college-level essay and not more.
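The arithmetic behind these figures is easy to check. The sketch below assumes the 0.75 words-per-token ratio implied by the ChatGPT numbers (3,072 words for 4,096 tokens) and the 200 tokens per minute of speech quoted in the tweet. Both are rough rules of thumb, and the function names are mine.

```python
WORDS_PER_TOKEN = 0.75  # assumption: the ratio implied by 3,072 words / 4,096 tokens


def tokens_to_words(tokens):
    return tokens * WORDS_PER_TOKEN


def minutes_of_speech(tokens, tokens_per_minute=200):
    # 200 tokens per minute is the speaking rate quoted in the tweet.
    return tokens / tokens_per_minute


for name, window in [("ChatGPT", 4_096), ("GPT-4 (32K)", 32_768)]:
    words = tokens_to_words(window)
    hours = minutes_of_speech(window) / 60
    print(f"{name}: {window:,} tokens = about {words:,.0f} words or {hours:.1f} hours of speech")
```

For the 32K window this gives 24,576 words and roughly 2.7 hours of speech, in line with the “approximately 25,000 words” and “almost 3 hours” quoted above.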

2. Despite its name, OpenAI is more secretive than ever about the data it uses to train its AI models. It released GPT-4 along with a 98-page whitepaper (partially written with the help of GPT-4) which makes it very clear that the company is not going to make any concessions to competitors:

Why would readers of Synthetic Work care about this?

Because the decision makes it increasingly hard to evaluate the bias of AI. And that bias eventually strikes back in unexpected ways, at unexpected times, after we have started using GPT-4 (or 5, or 6) in the working environment.

World-class scientists like Steven Pinker agree:

Several colleagues are pressuring me to pivot to analyzing Large Language Models as a professional focus. 1 inhibitor is that the workings are not completely open to inspection, like the 2nd-gen neural networks that I analyzed (w Alan Prince, @garymarcus & others) in the 80s-90s. https://t.co/XrDY3iaM2l

— Steven Pinker (@sapinker) March 15, 2023

3. Brett Winton, the Chief Futurist of ARK Invest, the famous but not-so-wonderfully-performing investment firm founded by Cathie Wood, shares some back-of-the-napkin math considerations about the cost of GPT-4 compared to the cost of humans:

A human typing flat-out for an hour will generate 3,000 or so tokens. At prevailing wages you will have paid $6 per 1k tokens.

GPT-4 is priced at between $.03 and $.06 per 1k tokens (or $.06 to $.12 for 4x the context window.)

1/100th the price.

— Brett Winton (@wintonARK) March 14, 2023
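Winton’s napkin math can be reproduced in a few lines. All the inputs come from the tweet; the implied hourly wage is my own derivation from those numbers, and the variable names are only illustrative.

```python
HUMAN_TOKENS_PER_HOUR = 3_000  # from the tweet
HUMAN_COST_PER_1K = 6.00       # dollars per 1,000 tokens, from the tweet

# GPT-4 prices in dollars per 1,000 tokens, as quoted in the tweet.
GPT4_PRICES = {
    "standard context": (0.03, 0.06),
    "4x context": (0.06, 0.12),
}

# Derived, not quoted: the hourly wage implied by the two human figures.
implied_hourly_wage = HUMAN_COST_PER_1K * HUMAN_TOKENS_PER_HOUR / 1_000
print(f"Implied human wage: ${implied_hourly_wage:.0f}/hour")

for tier, (low, high) in GPT4_PRICES.items():
    cheapest_ratio = round(HUMAN_COST_PER_1K / low)
    priciest_ratio = round(HUMAN_COST_PER_1K / high)
    print(f"{tier}: humans cost {priciest_ratio}x to {cheapest_ratio}x more per token")
```

With these inputs the human works out at $18 per hour, and “1/100th the price” corresponds to the upper end of the standard price range; at the lower end the gap is 200x.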

You won’t believe that people would fall for it, but they do. Boy, they do.

So this is a section dedicated to making me popular.

What jobs are the most impacted by the progress of AI technologies we are seeing today?

The ones in the right column, according to researchers from Princeton University, the University of Pennsylvania, and New York University:

Let me explain.

In 2021, these researchers came up with the idea of measuring in a practical way how AI can impact different jobs. They didn’t use the word impact but rather the word exposed. You know, to not trigger panic. Exposed, which we use for flu viruses, sounds so much more reassuring.

Anyway.

They called this measurement the AI Occupational Exposure (AIOE).

They decided that the best way to measure this impact/exposure would be to list all the tasks that comprise a certain profession and see how good AI is at performing each one of those tasks.

For example, let’s pretend that an executive assistant job entails the following tasks:

- Write emails on behalf of the employer

- Summarize the emails received by the employer

- Find availability for and coordinate the meetings in the agenda

- Approve requests for paid time off from direct reports

- Make and book travel plans

and so on.

The more the AI becomes proficient in each one of these tasks, the more the job of an executive assistant is exposed to AI.

Before you panic: “exposed to” doesn’t necessarily mean “displaced by”. It might mean that the executive assistant can become 200% more productive if given access to an AI system.
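A toy version of this idea, in the oversimplified form I just described, scores a job as the average of how well AI performs each of its tasks, weighted by how much of the job each task represents. This is not the formula from the papers, and every weight and proficiency score below is invented for illustration.

```python
# Hypothetical numbers: (share of the job, AI proficiency from 0.0 to 1.0).
executive_assistant = {
    "write emails": (0.30, 0.9),
    "summarize emails": (0.20, 0.9),
    "coordinate meetings": (0.25, 0.6),
    "approve time off": (0.10, 0.3),
    "book travel": (0.15, 0.6),
}


def exposure(tasks):
    # Weighted average of AI proficiency across all the tasks of a job.
    total_weight = sum(weight for weight, _ in tasks.values())
    weighted = sum(weight * proficiency for weight, proficiency in tasks.values())
    return weighted / total_weight


print(f"Exposure score: {exposure(executive_assistant):.2f}")
```

Raise any of the proficiency numbers, as happens every time AI gets better at a task, and the score of the whole job goes up with it.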

It seems obvious that the more progress AI makes, the more often you need to recalculate this measure. And boy, AI has made an ocean of progress since 2021. In fact, AI has made an ocean of progress in the last 32 minutes.

Given that generative AI had not yet gone mainstream in 2021, the researchers proceeded to re-measure the exposure and published their results last week.

The column on the left lists the jobs they considered most exposed in 2021. The column on the right lists the jobs they calculated as most exposed in 2023, mainly because of generative AI.

The research is complex and I’m oversimplifying. If you have time, both the 2021 and the 2023 papers are worth a read, but, if you know a psychologist, maybe send them this newsletter?

When we think about how artificial intelligence is changing the nature of our jobs, these memories are useful to put things in perspective. It means: stop whining.

The very first digital cameras used to look like this:

This is the first prototype, built by Steve Sasson in 1975 for Eastman Kodak. After this one was put together, another 20 years passed before digital cameras became mainstream.

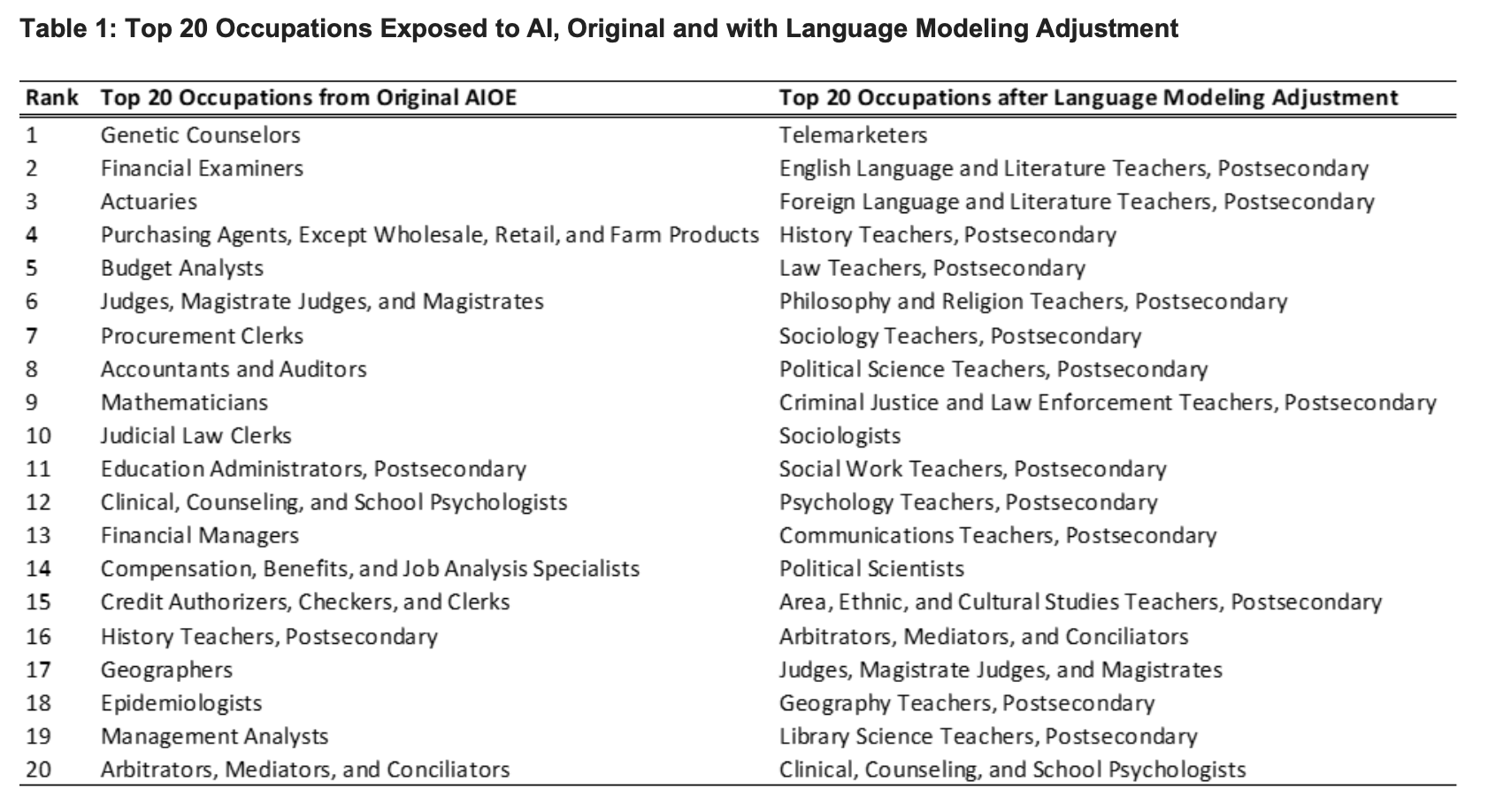

Less than 50 years later, we now take pictures with our smartphones that are augmented by AI (unbeknownst to the users).

If you haven’t read the story, apparently Samsung is using AI to artificially add details to any image of the Moon taken with their smartphones.

In other words, this famous photo, taken with a Galaxy S21 Ultra, was not really taken by the smartphone:

It was augmented by an AI model that Samsung trained for the job, as the user ibreakphotos uncovered in a Reddit post.

Once caught, Samsung admitted the use of AI and explained the process.

People are screaming that it’s scandalous, but very soon this might become the norm.

Generative AI doesn’t just create images from prompts (the so-called txt2img approach), but it can also create altered versions of existing images (this is called img2img). And these altered images can be completely different versions of the original image, or just slightly improved versions of it.

Today’s generative AI models are too big and demanding to run on a smartphone, but very soon it won’t be the case anymore and that will be the next quantum leap in computational photography.

Let’s be honest:

1. Nobody has the patience to run photo filters on the photos we take.

2. Also, we are very important. We don’t have time for this.

3. Reality is such a pedantic concept.

This is the material that will be greatly expanded in the Splendid Edition of the newsletter.

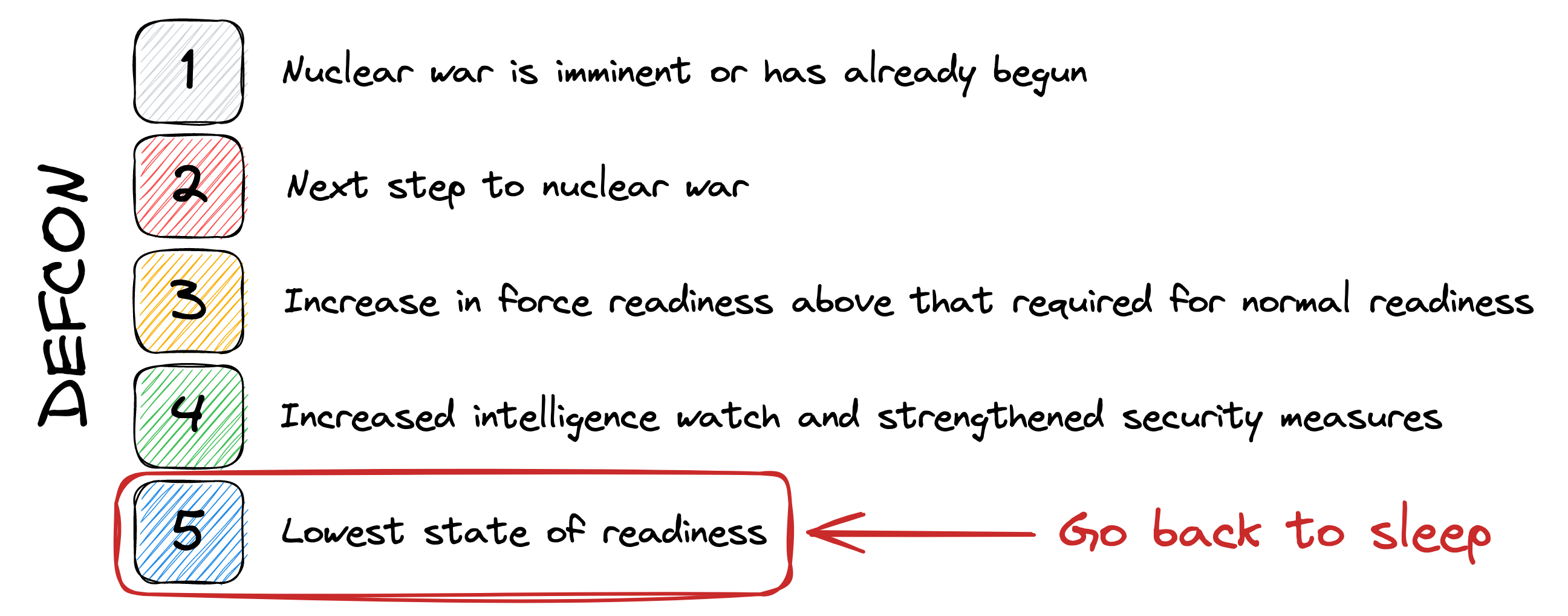

Governments worldwide are paying a lot of attention to AI, but not just because they need to regulate the crap out of it before somebody does something stupid or dangerous.

Politicians also follow the evolution of AI because they want to use it themselves. Not only will they potentially work ~~less~~ more efficiently, but in doing so, they will also look progressive and the whole thing will make great PR. What’s not to love?

One of the first governments to experiment with generative AI is the Romanian government.

Alexandru Costea, writing for Digi24, reports:

Prime Minister Nicolae Ciucă presented, on Wednesday, at the beginning of the Government meeting, an artificial intelligence (AI) project designed by Romanian researchers and teachers, called “Ion”, which was made “to help us so that we can better help Romanian citizens, informing the Government in real time of the proposals, the problems, and the wishes of Romanians”.

…

The Minister of Research, Innovation and Digitization, Sebastian Burduja, explained, for his part, that the project will aggregate the messages sent by the public through the ion.gov.ro website in order to analyze all this information at a given moment and prepare reports with the priority problems of Romanians. He did not elaborate on how these reports would be compiled, only that they could be drawn from key messages that have been entered multiple times and analyzed as charts or lists, later becoming public policy. At the same time, the minister and the coordinator of the Romanian research team explained that the “Ion” algorithm will “learn” from the information entered, without being able to communicate with the user like other systems such as the one developed by OpenAI. This artificial intelligence developed by Romanian researchers would thus grow and be able to be used for other purposes, with an example given in the field of “education” and information.

If you speak Romanian, the actual announcement is here:

In other words, they plan to use a large language model to summarize the emails received, produce charts that show trends in the received messages, and automatically generate new policy drafts to address the concerns contained in those messages.
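The officials did not explain how the reports would be compiled, so what follows is only a guess at the simplest possible version: count the messages that recur and rank them by frequency. The messages and the function name are made up, and a real system would need a language model or a classifier to group messages that say the same thing in different words.

```python
from collections import Counter


def priority_report(messages, top=3):
    # Normalize trivially (case and surrounding whitespace), then count exact repeats.
    counts = Counter(message.strip().lower() for message in messages)
    total = len(messages)
    return [(text, count, count / total) for text, count in counts.most_common(top)]


# Invented inbox, standing in for messages sent through the website.
inbox = [
    "Fix the roads",
    "fix the roads ",
    "Lower taxes",
    "Fix the roads",
    "More hospitals",
]

for text, count, share in priority_report(inbox):
    print(f"{text}: {count} messages ({share:.0%})")
```

A ranked list like this is the kind of input a chart, or a policy draft, could be built on.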

I’d also expect them to use AI to gauge the overall sentiment of the population in a way infinitely more precise than any voting intention poll.

Imagine using this approach before the French Revolution:

FrenchGPT: 75% of the emails you received say “we want bread or there will be beheading”.

Louis XVI: Sure, sure, whatever. Write a new policy to make them shut up.

FrenchGPT: No problem! I’ve just generated a new French Hollywood movie with Marie Antoinette in the role of Lara Croft. By your edict, everybody will be able to watch it for free.

For any new technology to be successfully adopted in a work environment or by society, people must feel good about it (before, during, and after its use). No business rollout plan will ever be successful before taking this into account.

One of my favourite startups ever (I keep a list of them) is called Replit. They offer a tool to develop code that is available online, via the browser.

Even if you don’t care at all about software development, keep reading. Trust me.

Replit recently added AI to the many cool features of its product. They use their own large language model (LLM) to generate lines of code for Replit users, just like GitHub Copilot does inside Microsoft VSCode.

Unlike Copilot, Replit’s alternative, called Ghostwriter, appears in the form of a chatbot and users interact with it to get things done. They can ask Ghostwriter in plain English to write code that achieves a certain goal, or to explain code that is already in the program and that they don’t understand (maybe because they copied it from somewhere else).

So far, so good.

Two weeks ago, a Replit user was streaming his coding session on YouTube, and he couldn’t get exactly what he wanted from Ghostwriter. So, all of a sudden, the AI does something it was not trained to do: it asks to see an example of what the user wanted to accomplish.

The user is incredulous as he believes that the AI cannot actually see things as humans do. Nonetheless, he’s curious and obliges. He uploads a screenshot inside the Replit tool and informs Ghostwriter that the image is ready to be seen.

One millisecond later, the AI provides the right answer to the original question.

Now, even if you care nothing about software development, it’s really important that you look at the face of the user throughout the whole experience:

Did you watch it?

Do you really think that a user who reacts like this would give up using AI?

By now, you must have read an infinite number of articles about AI bias, ethical concerns and the so-called alignment problem, the likely acceleration of disinformation, the potential risks for human safety, and a lot more. But you can be sure that for that user AI only means the feeling he had when he covered his face with his hands. And that’s what’s driving a lot of the innovation today, despite all the risks.

And now the final twist in the plot: exactly as the user had guessed, Ghostwriter cannot see anything. At all. Like any other LLM, the AI simply regurgitated the most likely interaction in that context, learned from the countless human interactions it was trained on.

That was enough to create the illusion of watching. But the illusion is already over. GPT-4 can see images. From here, we’ll move from ecstatic smiles in front of the screen to butterflies in the stomach.

If the joke generated by the AI is actually funny, then we are in deep s**t.

I’ve something better than a lame AI-generated joke for you this week. The South Park team has just produced an entire episode dedicated to ChatGPT and partially written by ChatGPT.

It’s glorious. Even if you are not into South Park, it’s 22 minutes worth watching:

Now, the problem is that you can watch it only if you are in the US. If you are not, you either turn on your VPN, or you get the lame AI-generated joke of the week.

This week, instead of relying on ChatGPT, we rely on LLaMA, a large language model released by Meta AI:

Me: Tell me a joke

LLaMA: Knock, knock.

Me: Who is it?

LLaMA: The Interrupting Chicken.

Now you understand why Meta only releases AI for “research purposes”?

I want to start with the one thing that captured my imagination the most in the months before launching Synthetic Work: medical training augmented by generative AI.

One of the biggest challenges in any profession, but especially in the medical field, is preparing students for edge cases. Situations they will encounter rarely, if ever, during their career.

It’s hard to train a surgeon to remove a tooth that is growing in a person’s brain when he/she has never studied such a case.

I hope you were not eating lunch. Sorry about that.

So, to better prepare medical students for edge cases, a month ago, a group of radiologists and AI graduate students at Stanford decided to use Stable Diffusion in a novel way.