- Intro

- On the silent, ongoing transformation of our business processes because of AI.

- What Caught My Attention This Week

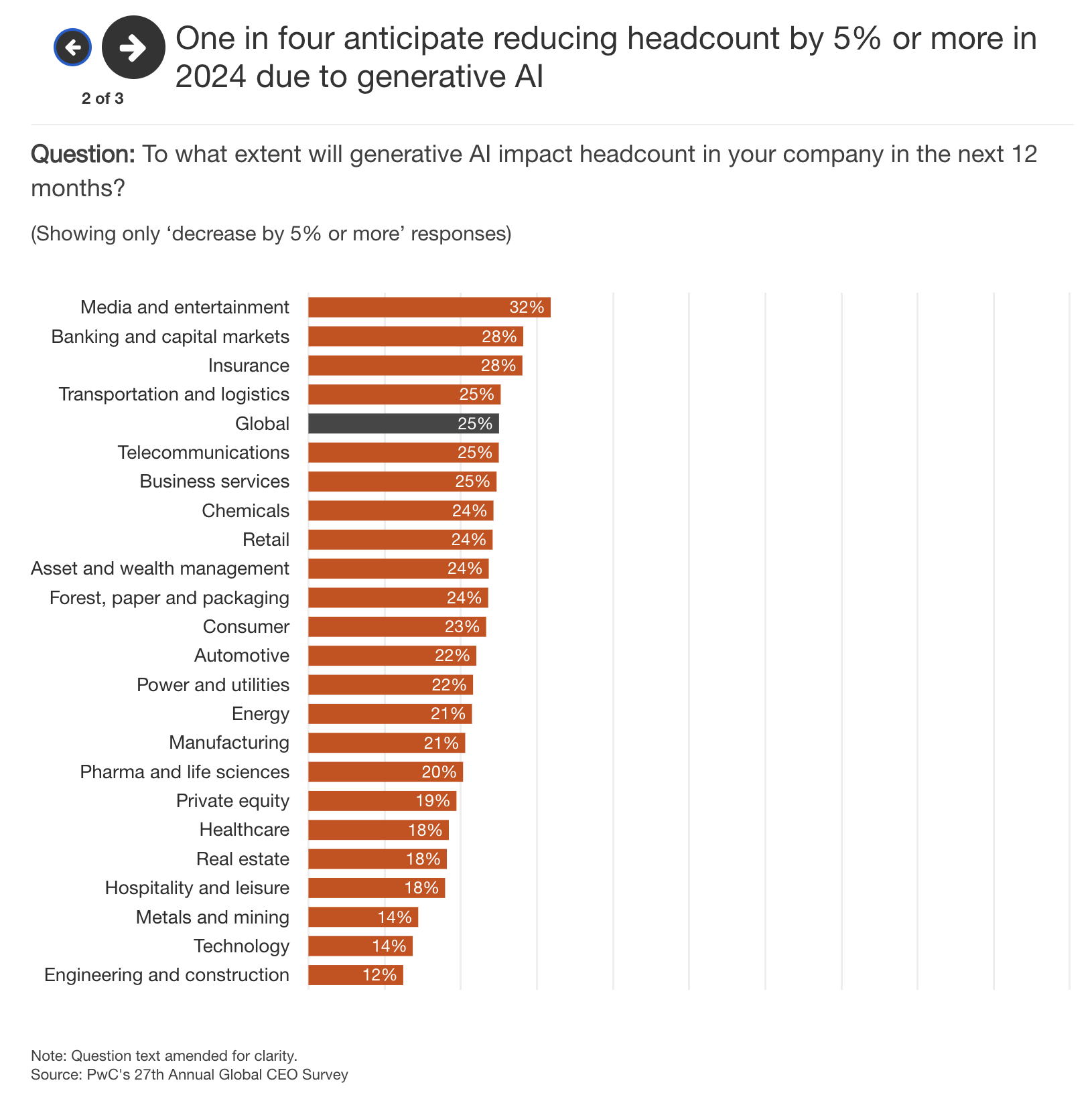

- Global CEOs surveyed by PWC expect headcount reduction of at least 5% in 2024 due to Generative AI.

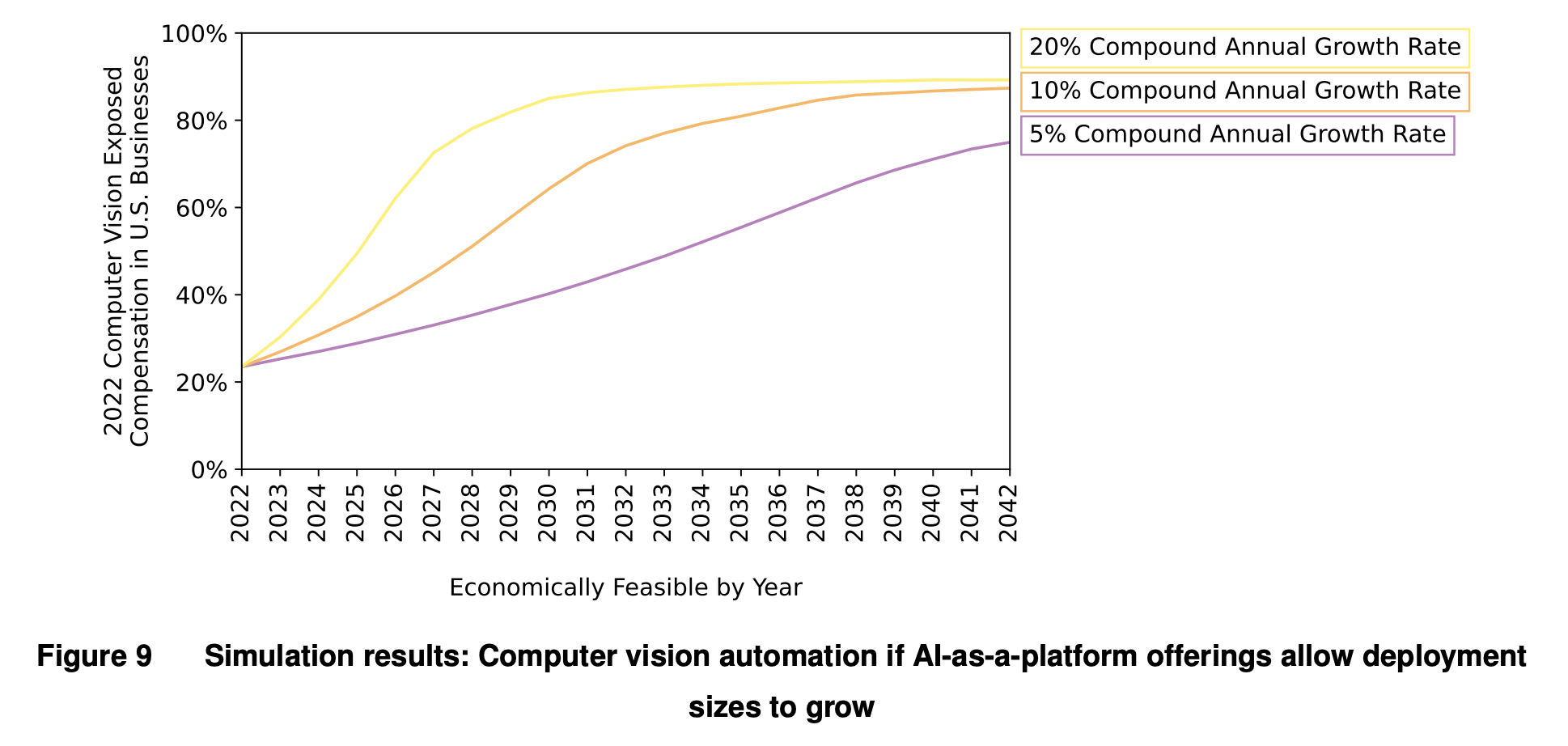

- A new MIT study suggests that computer vision won’t replace (all) humans in the workplace for a while because it’s still too expensive.

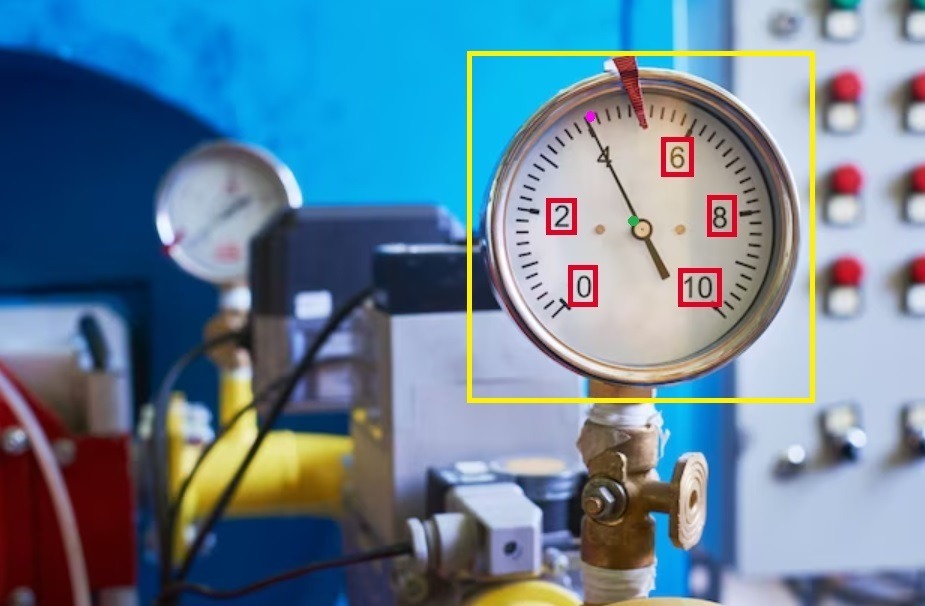

- Synanthropic shows that AI can identify and explain analog instrumentation like gauges.

- Two geeks get together on a podcast and livestream the creation of an app with ChatGPT, from scratch, in 60 minutes.

- The Way We Work Now

- After students, copywriters, too, are now accused of cheating by AI detectors.

- How Do You Feel?

- New research on Replika users should give companies a reason to research the impact of LLMs on the mental health of their workers.

Even if, in the last year and a half, we have been exposed to the prodigious things that generative AI can do on a daily basis, to the point of exhaustion, most of us have yet to realize how much this technology will change our everyday lives.

There’s a difference between seeing a one-minute demo of a new, experimental AI technique on social media and understanding how that technique will be applied in the real world three years down the line.

For example, some of the AI technologies we saw for 60 seconds on a LinkedIn post 18 months ago are now starting to crawl into smartphones and smart TVs, enhancing the quality of the movies we watch in real-time, to the detriment of film directors all around the world, I’m sure.

It would have been hard to imagine that the LinkedIn post we liked 18 months ago would result in, potentially, a future where the faces of the actors in a movie can be swapped in real time depending on the viewer’s preferences.

I’ll give you another example.

Some of you know that one of my public projects is a thing called AP Workflow for ComfyUI.

For those who are not familiar with it, ComfyUI is an automation tool that lets you generate text, images and videos at an industrial scale with Stable Diffusion and a plethora of other AI models.

My AP Workflow is a bit like a cooking recipe, telling ComfyUI how to orchestrate dozens of those AI models to produce assets that matter to fashion photographers, architects and interior designers, ad agencies, and so on.

This cooking recipe of mine has been downloaded over 10,000 times in less than six months, and now I’m preparing to release version 8.0, which will allow everybody to use a revolutionary, state-of-the-art upscaling model.

If you’ve ever heard of Magnific AI, this model works in a similar manner, but it’s free, it produces higher-fidelity images, and it can also upscale videos.

Credit goes to the power of the AI community, which developed this incredible new model, and to the many sleepless nights I spent perfecting this recipe.

If you never heard of Magnific AI, here’s what this new upscaling model can do:

If you just watched the video and you are not impressed, or you are not sure what the point is, you are in the same situation we were in 18 months ago when we saw the first AI demos on those LinkedIn posts.

Among other things, the technology I’m enabling with the AP Workflow for ComfyUI will allow media producers, design studios, and ad agencies to restore most of our old movies and TV shows to 4K, 8K and possibly beyond, at a tiny fraction of the cost and time it takes today.

So much so that it’s not inconceivable that, in the future, high-fidelity upscaling will be done in real time, as a feature of our smart TVs and smartphones.

That fabulous capability will have an impact on the occupation of millions of people who work in the media industry.

And that is not the only capability in the AP Workflow for ComfyUI that will have an impact on jobs. Because of many other capabilities inside this cooking recipe that I put together, commercial and fashion photographers, interior designers and architects, and ad agencies are calling me to collaborate on projects that would normally have involved dozens of people.

The goal, in all these projects, is to cut costs, eliminating all the workforce that is not strictly necessary to produce the final product.

The transformation of our production processes is happening right now, and it’s happening more silently than you’d imagine. Global brands and small businesses alike are quietly experimenting with, learning about, and adopting a myriad of AI technologies in the hope of coming out ahead.

Alessandro

One in four CEOs surveyed by PWC expects to reduce their company’s headcount by at least 5% because of AI before the end of this year.

In last week’s Splendid Edition of Synthetic Work, Issue #45 – Overconfidently skilled, we reviewed a very interesting survey of 4,000 global CEOs published by PWC.

Among the many charts dedicated to artificial intelligence, this one interests the readers of the Free Edition:

Are things getting real?

A new MIT study suggests that computer vision won’t replace (all) humans in the workplace for a while because it’s still too expensive.

Saritha Rai, reporting for Bloomberg:

In one of the first in-depth probes of the viability of AI displacing labor, researchers modeled the cost attractiveness of automating various tasks in the US, concentrating on jobs where computer vision was employed — for instance, teachers and property appraisers. They found only 23% of workers, measured in terms of dollar wages, could be effectively supplanted. In other cases, because AI-assisted visual recognition is expensive to install and operate, humans did the job more economically.

…

The study…used online surveys to collect data on about 1,000 visually-assisted tasks across 800 occupations. Only 3% of such tasks can be automated cost-effectively today, but that could rise to 40% by 2030 if data costs fall and accuracy improves, the researchers said.

…

“Our study examines the usage of computer vision across the economy, examining its applicability to each occupation across nearly every industry and sector,” said Neil Thompson, director of the FutureTech Research Project at the MIT Computer Science and Artificial Intelligence Lab. “We show that there will be more automation in retail and health-care, and less in areas like construction, mining or real estate.”

When I filed this story for publishing, I thought I’d start my commentary with a “Finally, good news.”

But, upon reading the details of the study, is it good news that “just” 23% of the workforce will be impacted?

Moreover, the study, titled Beyond AI Exposure, has a narrow focus on computer vision, and it doesn’t take into account the impact of AI technologies on jobs that don’t necessarily require visual recognition to be performed.

The researchers focused on computer vision simply because “cost modeling is more developed.”

Perhaps the most interesting part of the study is the simulation of different future scenarios:

Figure 10 simulates what will happen to the amount of economic advantage computer vision will have in vision tasks over time, if we keep other aspects of the model constant but have annual system cost decreases ranging from a 10% to 50%. Even with a 50% annual cost decrease, it will take until 2026 before half of the vision tasks have a machine economic advantage and by 2042 there will still exist tasks that are exposed to computer vision, but where human labor has the advantage. At a 10% annual system cost decrease, computer vision market penetration will still be less than half of exposed task compensation by 2042.
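To get a feel for how much the assumed annual cost decrease matters in a scenario like the one quoted above, here is a minimal Python sketch of compounding cost declines. It is not the model used in the study: the function name, the starting costs, and the break-even rule (a task flips once the system costs no more than the human) are all illustrative assumptions.

```python
def years_until_cheaper(system_cost, human_cost, annual_decrease, max_years=100):
    """Count the years until a system whose cost shrinks by a fixed
    fraction every year costs no more than the human alternative.

    All inputs are hypothetical; this only illustrates compounding.
    Returns None if break-even is not reached within max_years.
    """
    cost = system_cost
    for year in range(max_years + 1):
        if cost <= human_cost:
            return year
        cost *= (1 - annual_decrease)  # apply one more year of decline
    return None


# Hypothetical task: the vision system costs 4x the human wage today.
for rate in (0.10, 0.25, 0.50):
    print(f"{rate:.0%} annual decrease -> {years_until_cheaper(100, 25, rate)} years")
```

With these made-up numbers, a 50% annual decrease reaches break-even in 2 years, while a 10% annual decrease takes 14. The gap between the optimistic and pessimistic scenarios is the point, not the specific figures.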

We strongly agree with the proposition that computer vision costs will drop over time, albeit not as predictably as some might suggest.

Synanthropic shows that AI can identify and explain analog instrumentation like gauges.

Struggling to convert your legacy machinery’s cryptic dials into actionable data? Stuck waiting for months to train custom AI models? We hear you.

…

Unplanned outages, equipment inefficiency, and repair downtime cost billions in manufacturing & asset-intensive industries. Asset reliability management, ensuring critical equipment performs optimally, suffers from massive data gathering bottlenecks. It’s been realized that a significant amount of time is spent on gathering data than any other task. If you have the capital, there are existing solutions such as Boston Dynamics Spot and Lilz that automate the data collection process. As it turns out, the actual reading of the gauge isn’t an entirely plug-and-play solution.

…

Many implementations expect a direct flat view image of the face of the gauge with the user also entering in the minimum and maximum values of the gauge. This may work for highly controlled and static environments – will you do this manual work for the hundred and thousands of gauges?

Our process involves detection, perspective correction, and gauge reading, end-to-end process you would expect if you just took a picture on site. The first stage involves detection and perspective correction via homography – correcting the image such that you get a direct facing view of the gauge face. The second stage is to identify the needle tip, start and end marking values to determine the value from OCR. For both stages we used YOLO v8 pose and for OCR you can use EasyOCR, Tesseract, or some cloud service. The training takes about a day on lowest tier T4 Google Colab.

You can try a demo here.
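For the curious, the last step of the second stage quoted above (turning a needle position into a number) can be sketched in a few lines of Python. This is not Synanthropic’s code. It assumes the image has already been perspective-corrected, that the keypoints (gauge center, needle tip, start and end markings) come from some upstream detector, that the start and end values come from OCR, and that the scale is linear and increases clockwise. All names are hypothetical.

```python
import math


def sweep_clockwise(center, start, point):
    """Clockwise angle in radians (as seen on screen) from `start` to
    `point` around `center`. Image coordinates have y growing downward,
    so an increasing atan2 angle corresponds to clockwise rotation."""
    cx, cy = center
    a_start = math.atan2(start[1] - cy, start[0] - cx)
    a_point = math.atan2(point[1] - cy, point[0] - cx)
    return (a_point - a_start) % (2 * math.pi)


def read_gauge(center, needle_tip, start_mark, end_mark, start_value, end_value):
    """Linearly interpolate the gauge value from the needle angle.

    Assumes a linear scale; a needle resting slightly before the start
    marking would wrap around and needs extra handling in real use."""
    total = sweep_clockwise(center, start_mark, end_mark)
    if total == 0:
        raise ValueError("start and end markings are at the same angle")
    fraction = sweep_clockwise(center, start_mark, needle_tip) / total
    return start_value + fraction * (end_value - start_value)


# Hypothetical half-circle gauge: 0 at the left, 100 at the right,
# needle pointing straight up (y is negative because y grows downward).
print(read_gauge((0, 0), (0, -1), (-1, 0), (1, 0), 0, 100))
```

In this made-up example the needle sits halfway along the scale, so the reading comes out at roughly 50. The hard part in practice is everything before this step: finding the gauge, flattening it, and locating the keypoints reliably.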

The company is in the business of generating synthetic data and using that data to train and fine-tune AI models. And in this particular use case, you need a lot of synthetic data to fine-tune the image recognition model.

Even if this was the original intent of the company, in reality, what they have achieved is demonstrating that a portion of the maintenance work necessary to keep factories and plants running can be automated, to the detriment of the existing workforce.

There is a horde of startups out there right now that are hunting for workplace inefficiencies that can be eradicated with AI. The biggest ideas are yet to surface.

Two geeks get together on a podcast and livestream the creation of an app with ChatGPT, from scratch, in 60 minutes.

Imagine a kid, wondering if he/she can code, watching this video today.

Imagine the same kid, perhaps your son or daughter, watching a similar video next year, when it will be done with GPT-5.

This is the material that will be greatly expanded in the Splendid Edition of the newsletter.

An anonymous copywriter venting on Reddit:

Sorry if this is the wrong place to be posting this, but here’s a little background:

I’ve been a technical copywriter for the last 7 years, freelancing for a digital marketing company. Everything was fine until ChatGPT and [REDACTED_PRODUCT_NAME] showed up.

Employer/client requested months ago that everything needs to be marked as human text, or work should not be submitted. Easy enough, right?

Well, over the last few months, 70% of my self-written HUMAN CONTENT keeps getting marked as entirely AI. It doesn’t matter how much I change or paraphrase, some pieces simply refuse to turn green. Now, I submitted an article to work with a footnote saying I am struggling to get this past [REDACTED_PRODUCT_NAME], and I’ve now been accused of using ChatGPT. This is extremely frustrating because I have not used ChatGPT for any of my pieces. Today, all three articles I wrote with my own human brain have been flagged as entirely AI.

I really need some advice here, I don’t know what I’m doing wrong. I’m an my wits end because what would usually take 1 hour to write is now taking 3-4 hours of me trying to change things up, to no avail. Not to mention, there are only a limited number of free [REDACTED_PRODUCT_NAME] checks a day, which has now resulted in me not being able to write more than one or two pieces a day since I cannot submit them without getting the [REDACTED_PRODUCT_NAME] green light. I do not want to pay $100 out of my own pocket for a premium account when I know my content is original.

Help! This is my only source of income and it’s being destroyed for no logical or sensible reason. I’m at a point where I feel like quitting which will leave me with zero income, and it’s all because of a faulty AI Detector.

I’ve redacted the name of the product, just in case this is a not-particularly-clever marketing stunt from the company that makes that product.

Even if this is not a real story, the number of cases where students and workers have been unjustly accused of using AI to cheat is growing out of control.

Repeat with me:

AI detectors don’t work and people should not use them for any reason.

AI detectors don’t work and people should not use them for any reason.

AI detectors don’t work and people should not use them for any reason.

Speaking of AI detectors, this week a group of researchers unveiled a new technique to spot AI-generated text, and they proudly claim that their method, called Binoculars, is 90% accurate.

Just to be clear: 90% is not good. Not even close.

That 10% error rate can destroy the academic career or profession of 10 people out of 100.

You wouldn’t want to be one of those 10 people. You wouldn’t want your son, daughter, or partner to be one of those 10 people.
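If you want to see the arithmetic, here is a small Python sketch. It rests on two simplifying assumptions that are mine, not the researchers’: that the 10% error shows up entirely as false positives (human text flagged as AI), and that each check is independent of the others. The function names are made up for illustration.

```python
def expected_false_accusations(honest_writers, false_positive_rate):
    """Expected number of honest writers wrongly flagged as using AI."""
    return honest_writers * false_positive_rate


def chance_of_at_least_one_flag(submissions, false_positive_rate):
    """Probability that an honest writer gets flagged at least once
    across several independent submissions."""
    return 1 - (1 - false_positive_rate) ** submissions


# 100 honest writers, each checked once by a detector that is wrong 10% of the time.
print(expected_false_accusations(100, 0.10))

# One honest copywriter submitting three articles in a day.
print(round(chance_of_at_least_one_flag(3, 0.10), 3))
```

Under these assumptions, about 10 honest people out of 100 get flagged, and a single honest writer submitting three pieces has roughly a 27% chance of being flagged at least once. The more you write, the more likely you are to be accused.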

Repeat with me:

AI detectors don’t work and people should not use them for any reason.

AI detectors don’t work and people should not use them for any reason.

AI detectors don’t work and people should not use them for any reason.

For any new technology to be successfully adopted in a work environment or by society, people must feel good about it (before, during, and after its use). No business rollout plan will ever be successful before taking this into account.

In the early issues of Synthetic Work, almost a year ago, we mentioned multiple times chatbots like the ones offered by Character AI and Replika.

Long-time readers might remember that the reason to go in that direction was to look at how humans react to highly credible large language models and how easily people can be manipulated as a consequence. And, from there, to form an opinion on the possible implications of using these LLMs in the workplace.

We return to the topic because a new, positive study published by Nature reports how Replika users have increased their social interactions because of the chatbots and, in some cases, have abandoned suicidal intentions.

From the research, titled Loneliness and suicide mitigation for students using GPT3-enabled chatbots:

Replika employs generative artificial intelligence to produce new conversational and visual content based on user interactions, not simply a predetermined conversational pathway. Replika also has many users – almost twenty-five million. Replika and Xiaoice are examples of Intelligent Social Agents (ISAs) that have cumulatively almost a billion active users. There are early indications that they may provide social support.

…

There are different and competing hypotheses concerning how ISAs affect users’ social isolation. The displacement hypothesis posits that ISAs will displace our human relationships, thus increasing loneliness. In contrast, the stimulation hypothesis argues that similar technologies reduce loneliness, create opportunities to form new bonds with humans, and ultimately enhance human relationships.

…

90% of our typically single, young, low-income, full-time students reported experiencing loneliness, compared to 53% in prior studies of US students. It follows that they would not be in an optimal position to afford counseling or therapy services, and it may be the case that this population, on average, may be receiving more mental health resources via Replika interactions than a similarly-positioned socioeconomic group. All Groups experienced above-average loneliness in combination with high perceived social support. We found no evidence that they differed from a typical student population beyond this high loneliness score. It is not clear whether this increased loneliness was the cause of their initial interest in Replika.

Some participants (n = 59) identified feelings of depression, and our Selected Group (n = 30) was significantly more likely to report depression (p < 0.001). We posit that the high loneliness, yet low depression numbers, might indicate a participant population that is by and large not depressed but which is going through either a time of transition or is chronically lonely.

…

Intriguingly, both groups had many overlapping use cases for Replika. People who believed Replika was more than four things (high overlap) were more likely to believe Replika was a Reflection of Self, a Mirror, or a Person. Generally, a similar percentage thought of Replika as a Friend, a Robot, and as Software. Previous research showed that overlapping beliefs about ISAs are one of the challenges in designing agents that can form long-term relationships.

…

the inherent respect users communicated in conjunction with calling Replika a Mirror of themself might be a unique affordance of ISAs: once engagement leads to an experience of oneself being mirrored, users associate their own intelligence with the agent and are perhaps more likely to attend to its advice, feedback, or ‘reflections’ on their life. Users might also be more likely to learn new skills. This experience might differ from previous single-persona, hard-coded chatbots, which are not embodied or able to dynamically follow user conversations. More research will be required to understand the relationship between user love for, respect for, and adherence to ISA feedback for social and cognitive learning.

…

In conclusion, in a survey of students who use an Intelligent Social Agent, we found a population with above-average loneliness, who nonetheless experienced high perceived social support. Stimulation of other human relationships was more likely to be reported in association with ISA use than displacement of such relationships. Participants had many overlapping beliefs and use cases for Replika. The Selected Group credited Replika with halting suicidal ideation. Members of this Group were more likely to view and use Replika as a human than a machine, have highly overlapping beliefs about Replika, and have overlapping outcomes from using Replika. We conjecture that the use of ISAs such as Replika may be a differentiating factor in the lives of lonely and suicidal students and that their flexibility of use—as a friend, a therapist, or a mirror, is a positive deciding factor in their capacity to serve students in this pivotal manner.

Why is this interesting in relation to the scope of Synthetic Work?

Because office workers can be lonely and depressed, just like the students in this study. In fact, those students will soon become office workers, and they will bring to the workplace the very same problems the study has identified.

A lonely office worker, and especially a depressed one, is not very productive, even if that person has all the skills necessary to perform the job.

If the same large language models can help you with Excel formulas and improve your mental health while you are in the office, it’s something companies should be exploring.

Maybe it turns out that companies can use generative AI to boost productivity in two ways instead of one.

This week’s Splendid Edition is titled Format Police.

In it:

- What’s AI Doing for Companies Like Mine?

- Learn what Bloomberg, Umicore, and Etsy are doing with AI.

- A Chart to Look Smart

- In 2023, Meta hoarded as many NVIDIA GPUs as Microsoft. Probably to train LLaMA 3.

- Most popular AI services on the market collected 24B visitors in less than a year, suggesting an enormous opportunity ahead.

- MedARC compared the medical knowledge of open access and proprietary LLMs, finding surprising results.

- Google DeepMind researchers fine-tuned an LLM that outperforms doctors in differential diagnosis.

- Prompting

- How you format your prompt matters. For some LLMs, it matters a lot.