- It took just two months for 3,600 students in the third most prestigious university in the world to start cheating with AI.

- ChatGPT passes top exams designed for humans with (almost) flying colours

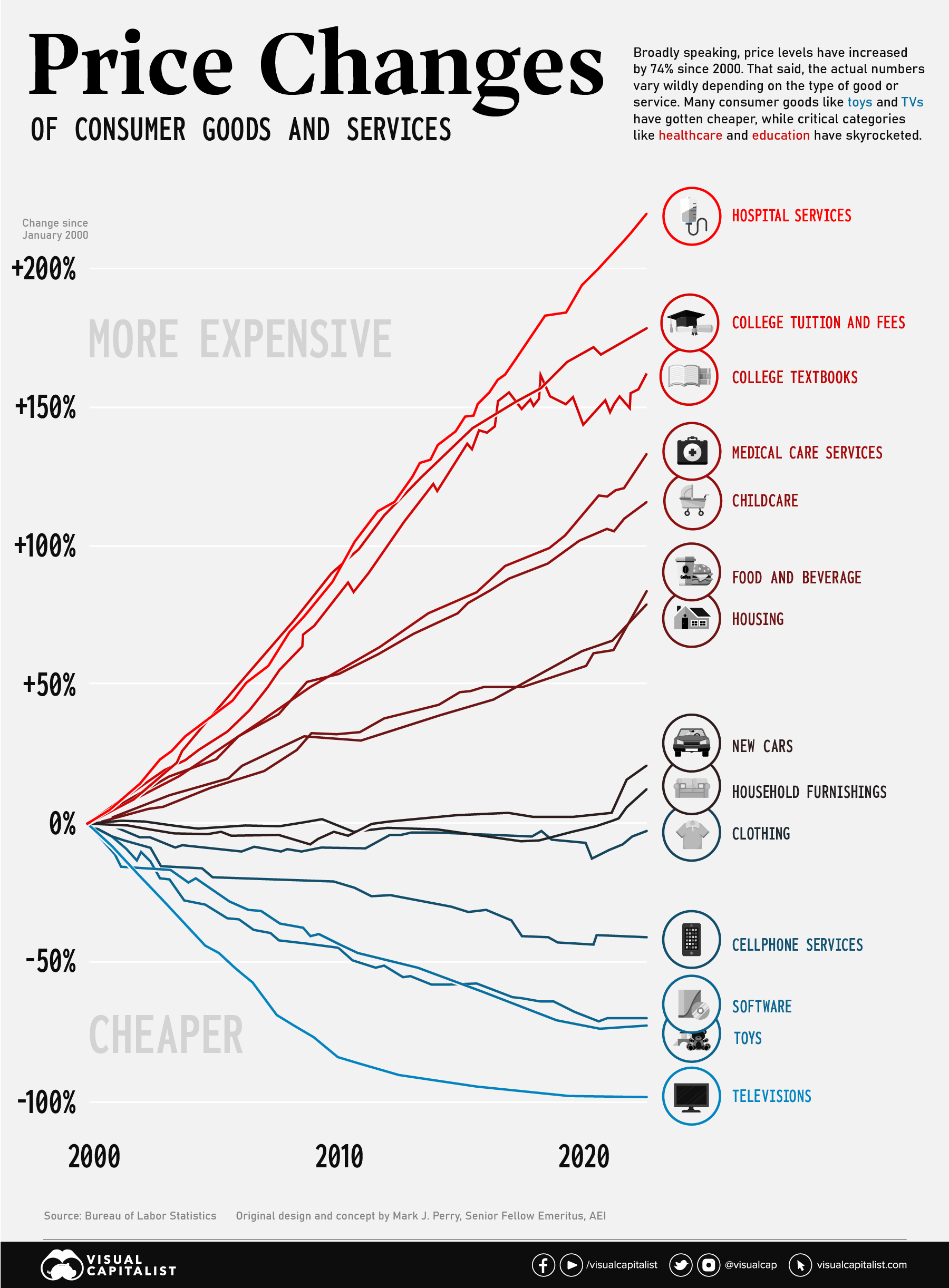

- Meanwhile, tuition and college textbook prices have skyrocketed in the last 22 years

- Of course, schools start banning ChatGPT across the world

- Unfortunately for them, watermarking is useless

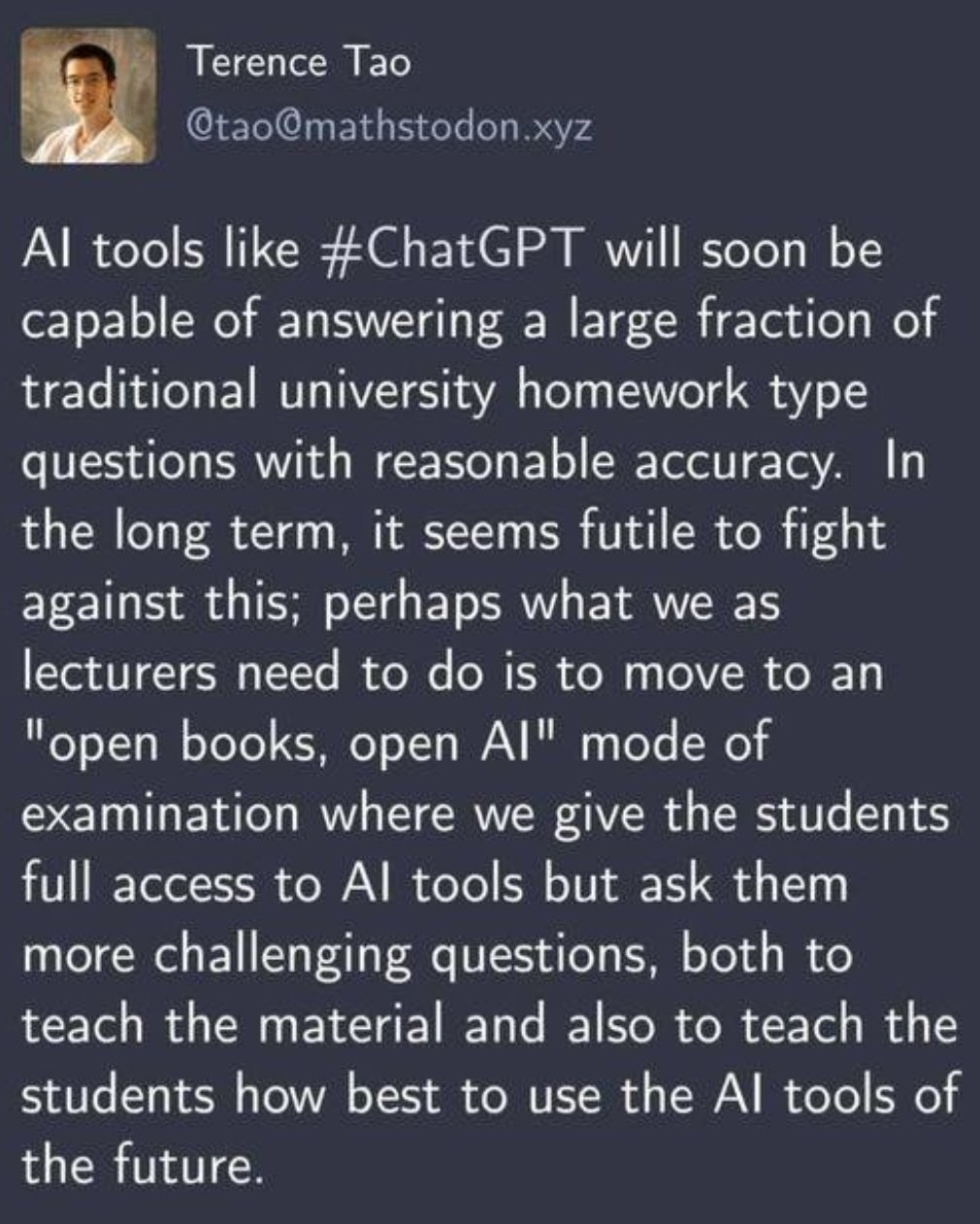

- Rather than having a knee-jerk reaction, some teachers incorporate ChatGPT in their classes

- Thankfully, Seth Godin weighs in with his usual wisdom

If you are not exhausted after reading the Free Edition of Synthetic Work Issue #1, don’t worry. There’s a lot more tedious AI stuff to talk about below.

The first industry where artificial intelligence is wreaking havoc is Education. And given that many of you have or will have kids, I thought it would be interesting to dedicate the Splendid Edition of Synthetic Work Issue #1 to exploring what’s happening in our learning institutions.

Alessandro

What we talk about here is not what could be, but what is happening today.

Every organization adopting AI that is mentioned in this section is recorded in the AI Adoption Tracker.

This is what’s happening in our learning institutions:

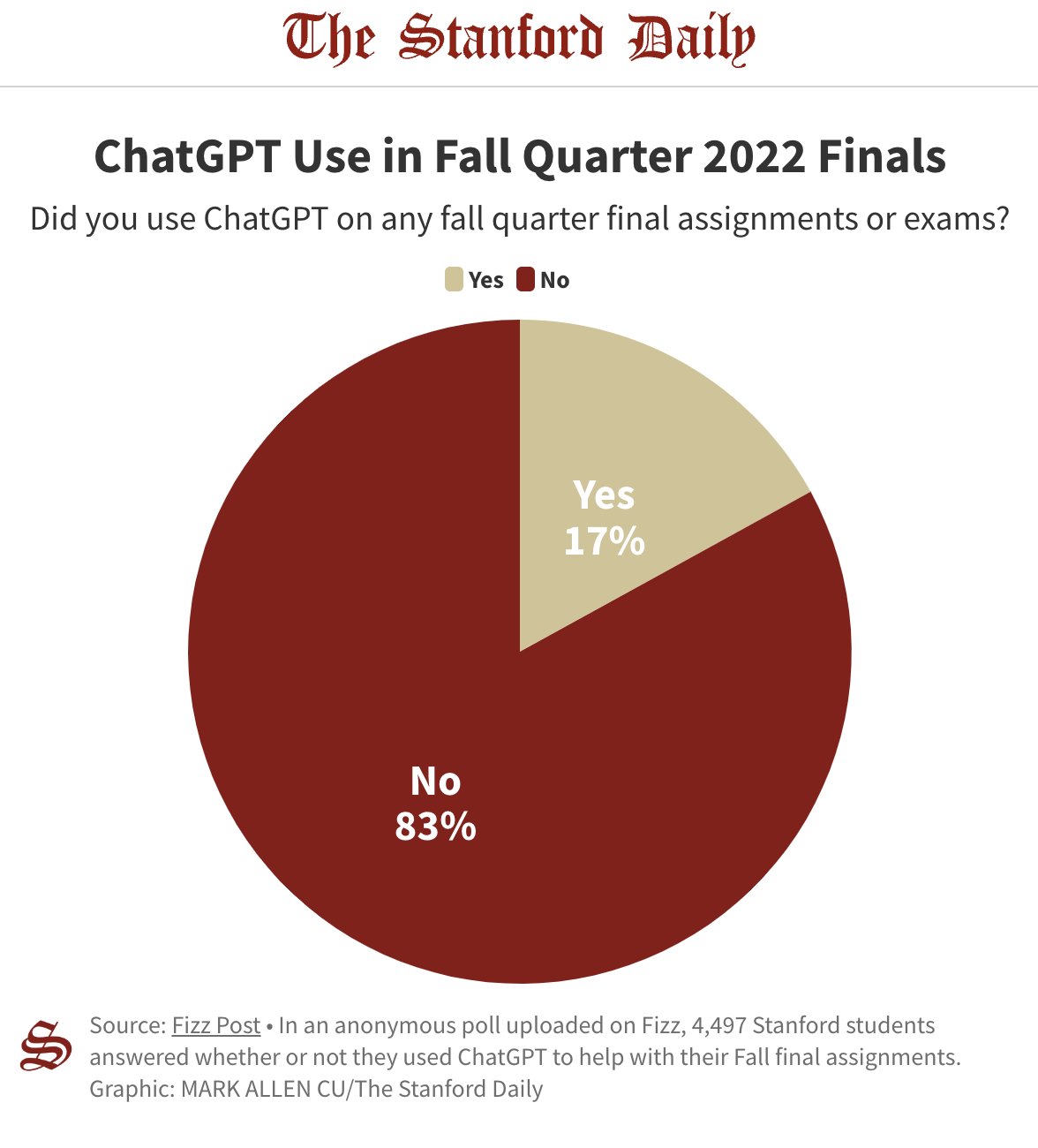

ChatGPT was launched in November 2022. This chart comes from an article published by The Stanford Daily in January 2023.

It took just two months for 3,600 students in the third most prestigious university in the world to start cheating with AI.

Yeah, yeah. 60% of the respondents said that they used ChatGPT only for “Brainstorming, outlining, and forming ideas”, but isn’t that one of the main things you are supposed to learn at school?

And this is a school with an Honor Code. Imagine what’s happening in other schools around the world. Actually, you don’t have to imagine:

Me while cheating in the exam with ChatGPT pic.twitter.com/3vejqaGW4E

— Joy | Emo Girl (@Vermoxiverse) February 22, 2023

“Wait a second,” you might object. “I read that ChatGPT often gives incredibly wrong answers in a very confident and credible way.”

Yep. And you should never forget that. Especially when you read that ChatGPT has performed surprisingly well in some big-deal exams:

- United States Medical Licensing Exam (USMLE), which it passed with a score of 68.0%, 58.3%, and 62.4% for the three respective exams

- Multistate Bar Examination (MBE) or Bar Exam, which it passed on average at the level of a C+ student

- Operations Management, a core exam of the MBA program at the world-famous business school Wharton, where it earned a B to B- grade

(if you want to read some deeper criticism about these performances, but can’t be bothered to read the long and tedious papers, just read this)

But none of this matters.

What do you think will happen now that people know that an AI system that costs nothing (or $20 / month, if you want to skip the queue) is so accurate that it can theoretically pass an MBA exam?

On top of this, you have to remember that:

- ChatGPT was released in November 2022. This is just the beginning.

- ChatGPT uses an AI that was NOT trained on specific medical, legal, or business material. And yet, it performed remarkably well in some cases.

What do you think will happen when we start to see AI systems that are specialized in the various fields of our economy?

The point: there are many jobs in the Education industry, but the two most important ones are the student and the teacher. And now both are changing because of AI.

A student might ask: “If AI can do it for free, why should I spend time and money learning this skill instead of things that I’ll truly need at work?”

Good question. I have personally worked with corporate executives who proudly mention their MBA in their LinkedIn profile or their email signature, but who don’t know how to write an “Executive Summary”.

Oh, the irony.

Maybe my hypothetical question is one that the students’ parents are also asking themselves, given that tuition prices have increased by 178% and the cost of college textbooks has increased by 162% in the last 22 years:

Meanwhile, a teacher might ask: “If a computer can pass the exam of a course, maybe we should remove the material from the curriculum.”

And how are these teachers reacting to this?

Kalley Huang, a technology reporter for The New York Times, writes:

Some public school systems, including in New York City and Seattle, have since banned the tool on school Wi-Fi networks and devices to prevent cheating, though students can easily find workarounds to access ChatGPT.

Of course, it’s not just American schools that are panicking. In India, to mention one, it’s the same:

#ChatGPT has officially become one of the cheating tools.

Congo @elonmusk 😂 Blessings from every student

ps: Admit Card of an exam in India pic.twitter.com/axtnmeF8qn

— Aditya Kanu (@AdityaKanu_) February 20, 2023

Good luck with the bans.

Those schools that ban access to ChatGPT fail to understand that ChatGPT is just the beginning. Today, it’s technically impossible to download a Large Language Model (LLM) like the one that underpins ChatGPT on your computer or your phone. But we have already started seeing alternative LLMs released for free and as open source projects that the AI community is optimizing to be more portable than ChatGPT.

Last week, a group of researchers announced a new method to run LLMs on a home computer with a single GPU (an acronym for “graphics processing unit”, the chip inside a graphics card – oddly enough, AI models need very powerful graphics cards to run, like the ones that gamers buy to play Elden Ring on Windows).

We’ll have thousands of websites with ChatGPT-like capabilities. And those capabilities will also creep into our phones.

Stable Diffusion, the generative AI system that creates synthetic images, is open source. It was launched in August 2022. By December of the same year, it was possible to install Stable Diffusion on an iPhone.

In other words, dear teachers, it seems that school as you know it is about to disappear. And that your job is about to change forever.

The New York Times article continues:

At schools including George Washington University in Washington, D.C., Rutgers University in New Brunswick, N.J., and Appalachian State University in Boone, N.C., professors are phasing out take-home, open-book assignments — which became a dominant method of assessment in the pandemic but now seem vulnerable to chatbots. They are instead opting for in-class assignments, handwritten papers, group work and oral exams.

…

Gone are prompts like “write five pages about this or that.” Some professors are instead crafting questions that they hope will be too clever for chatbots and asking students to write about their own lives and current events.

The change is bigger than this. There are also new tools to learn (assuming that the school can afford to pay for them).

The same people who invented ChatGPT, a company called OpenAI, are hard at work on a technique that can help identify text generated by their own AI. It’s a technique called watermarking.

Watermarking synthetic text requires that the AI favours a specific subset of words from its vast vocabulary, but uses them in a way that is invisible to the users.
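If you are curious about what that could look like in practice, here is a toy sketch in Python. To be clear, this is not OpenAI's method, which has not been described here. It loosely follows the "green list" idea discussed in public research, and every name in it (`is_green`, `toy_watermarked_text`, `green_z_score`, the 50-word vocabulary, the 0.5 share) is an assumption made up for illustration. The "AI" secretly prefers words that a hash function marks as green, and the detector simply counts how many green words turn up compared with pure chance.

```python
import hashlib
import math
import random

GREEN_SHARE = 0.5  # assumption: half of the vocabulary is "green" at every step


def is_green(previous_word, word):
    """Decide, from a hash of the word pair, whether `word` is on the green list."""
    digest = hashlib.sha256(f"{previous_word}|{word}".encode("utf-8")).digest()
    return digest[0] < 256 * GREEN_SHARE


def toy_watermarked_text(vocabulary, length, seed=0):
    """Stand-in for a watermarking AI: always pick the next word from the green list."""
    rng = random.Random(seed)
    words = [rng.choice(vocabulary)]
    while len(words) < length:
        green_words = [w for w in vocabulary if is_green(words[-1], w)]
        # Fall back to the full vocabulary in the unlikely case no word is green.
        words.append(rng.choice(green_words or vocabulary))
    return words


def green_z_score(words):
    """Standard deviations by which the green-word count exceeds pure chance."""
    pairs = list(zip(words, words[1:]))
    if not pairs:
        return 0.0
    green = sum(is_green(prev, word) for prev, word in pairs)
    expected = GREEN_SHARE * len(pairs)
    spread = math.sqrt(len(pairs) * GREEN_SHARE * (1 - GREEN_SHARE))
    return (green - expected) / spread


# Hypothetical 50-word vocabulary, 200-word "essays".
vocabulary = [f"word{i}" for i in range(50)]
marked = toy_watermarked_text(vocabulary, 200)
rng = random.Random(1)
unmarked = [rng.choice(vocabulary) for _ in range(200)]
print(round(green_z_score(marked), 1), round(green_z_score(unmarked), 1))
```

The first number should come out far above the second: the watermarked text is stuffed with green words, while ordinary text hits them only about half of the time. A reader sees nothing odd in either case, which is the whole point. It also hints at the weakness: rewrite enough words and the count drifts back towards chance.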

University teachers might have to learn how to use these watermarking identification systems. Last quote from the New York Times article:

More than 6,000 teachers from Harvard University, Yale University, the University of Rhode Island and others have also signed up to use GPTZero, a program that promises to quickly detect A.I.-generated text, said Edward Tian, its creator and a senior at Princeton University.

By January 25th, the total number of teachers on the waiting list for GPTZero was 23,000.

If you are a teacher reading this newsletter, before you rush to try the tool, you should know that there is a plot twist.

Same, if you are an entrepreneur and you are already planning a clone of GPTZero.

So far, not even OpenAI has been successful in identifying synthetic text. They created a tool to do that, and it does not work well, as they warned at the end of January:

Our classifier is not fully reliable. In our evaluations on a “challenge set” of English texts, our classifier correctly identifies 26% of AI-written text (true positives) as “likely AI-written,” while incorrectly labeling human-written text as AI-written 9% of the time (false positives).

…

Our classifier has a number of important limitations. It should not be used as a primary decision-making tool

…

The classifier is very unreliable on short texts (below 1,000 characters). Even longer texts are sometimes incorrectly labeled by the classifier.

…

Sometimes human-written text will be incorrectly but confidently labeled as AI-written by our classifier.

…

We recommend using the classifier only for English text. It performs significantly worse in other languages and it is unreliable on code.

Now back to the mad rush to use GPTZero: what are the chances that this tool performs better than the tool invented by top AI experts in the world? Also: can the school be dragged to court if a teacher unjustly accuses a student of cheating by submitting synthetic essays?
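To put OpenAI's own numbers in perspective, here is a back-of-the-envelope calculation in Python. It takes the 26% true positive rate and the 9% false positive rate quoted above and asks: when the classifier flags an essay, how likely is it that the essay really was written by AI? The answer depends on how many essays in the pile are AI-written to begin with, and that share is purely my assumption (the function name `chance_flag_is_right` and the 10% and 50% scenarios are made up for illustration). The quoted rates also come from OpenAI's "challenge set", so they may not carry over to real classroom essays.

```python
def chance_flag_is_right(ai_share, true_positive_rate=0.26, false_positive_rate=0.09):
    """Probability that an essay flagged as AI-written really is AI-written (Bayes' rule)."""
    flagged_ai = ai_share * true_positive_rate            # AI essays that get flagged
    flagged_human = (1 - ai_share) * false_positive_rate  # human essays wrongly flagged
    return flagged_ai / (flagged_ai + flagged_human)


# Assumed shares of AI-written essays in a class: 10% and 50%.
for ai_share in (0.10, 0.50):
    print(f"{ai_share:.0%} of essays AI-written -> "
          f"flag is right {chance_flag_is_right(ai_share):.0%} of the time")
```

If one essay in ten is synthetic, a flag is right only about one time in four. Even if half the class is cheating, roughly one flagged student in four is innocent.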

Alternatives: some teachers are forcing students to do homework exclusively in class and exclusively with pen and paper.

Makes sense. What better way to prepare people to enter a society that is completely reliant on the Internet, automation, and artificial intelligence than denying them access to all these technologies for their entire education period?

Also, let’s hope there’s not another global pandemic. Ever.

Also, what happens to those students with disabilities if schools go back to pen and paper?

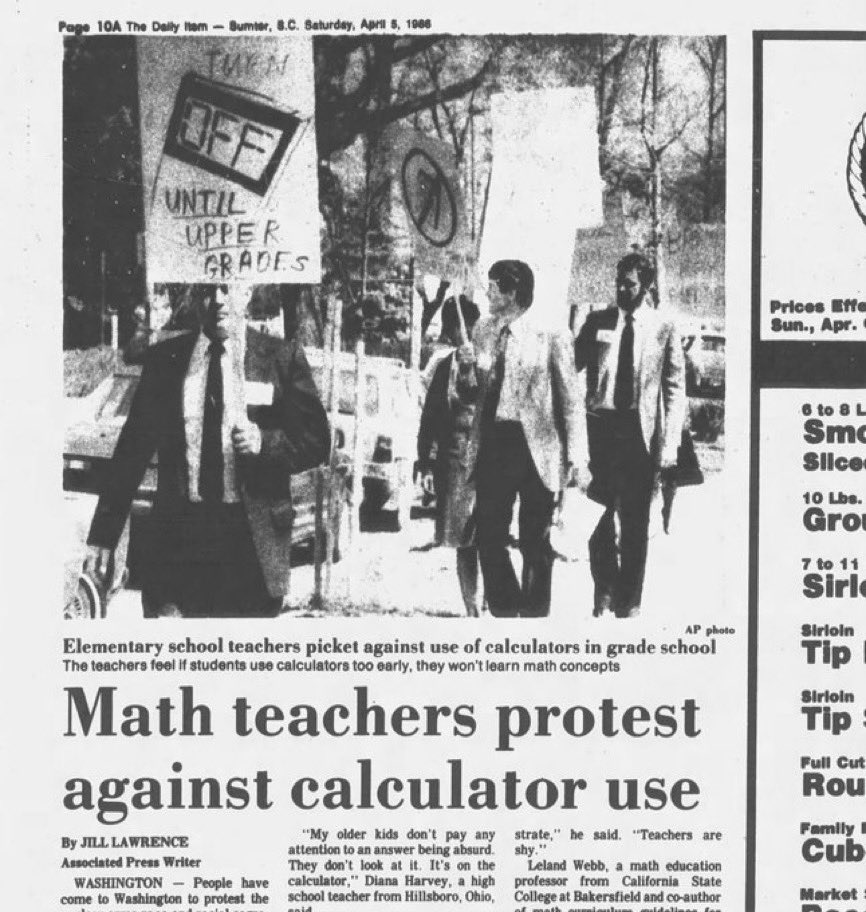

Also, do you see a pattern here?

Thankfully, on the opposite side of the spectrum, some teachers have embraced AI and are teaching the students how to use it.

Andrew Piper, Professor in the Department of Languages, Literatures, and Cultures at McGill University, in a Twitter thread, writes:

5/ I said they could use #chatGPT for their papers to help them with their prose when it comes to technical language, like writing up the results of a t.test or linear regression. This may be the type of writing they haven't mastered yet (it's a 200 level course). +

— Andrew Piper (@_akpiper) January 16, 2023

Terence Tao, Professor of Mathematics at the University of California, Los Angeles, writes:

I don’t know about you, but to me, it sounds like a lot more work for the same pay. If we are talking about a private school that can afford to pay more, maybe this is sustainable. But in public schools where salaries are already miserable? How many teachers will want to do that, now?

And then there is the darkest side of this revolution to consider: students empowered with AI will more easily expose mediocre teachers. What happens to the credibility of a teacher when their students openly challenge them in the classroom? How many teachers will want to do that, then?

I leave you with a must-read blog post on the potential impact of AI on the school system: The end of the high school essay

It’s written by the most famous (and my absolute favourite) marketer alive, Seth Godin. He writes:

When typing became commonplace, handwriting was suddenly no longer a useful clue about the background or sophistication of the writer. Some lamented this, others decided it opened the door for a whole new opportunity for humans to make an impact, regardless of whether they went to a prep school or not.

…

So, now that a simple chat interface can write a better-than-mediocre essay on just about any topic for just about any high school student, what should be done?

The answer is simple but difficult: Switch to the Sal Khan model. Lectures at home, classes are for homework.

When we’re on our own, our job is to watch the best lecture on the topic, on YouTube or at Khan Academy. And in the magic of the live classroom, we do our homework together.