- White Castle has started using generative AI to replace drive-thru employees.

- Chegg articulates how it plans to use GPT-4 to deliver personalized tutoring to its customers.

- Netflix unveiled how they are using AI to create more engaging trailers for the shows and movies in their catalog.

- In the Prompting section, we explore two techniques: Ask for Variants and Choose the Best Variant.

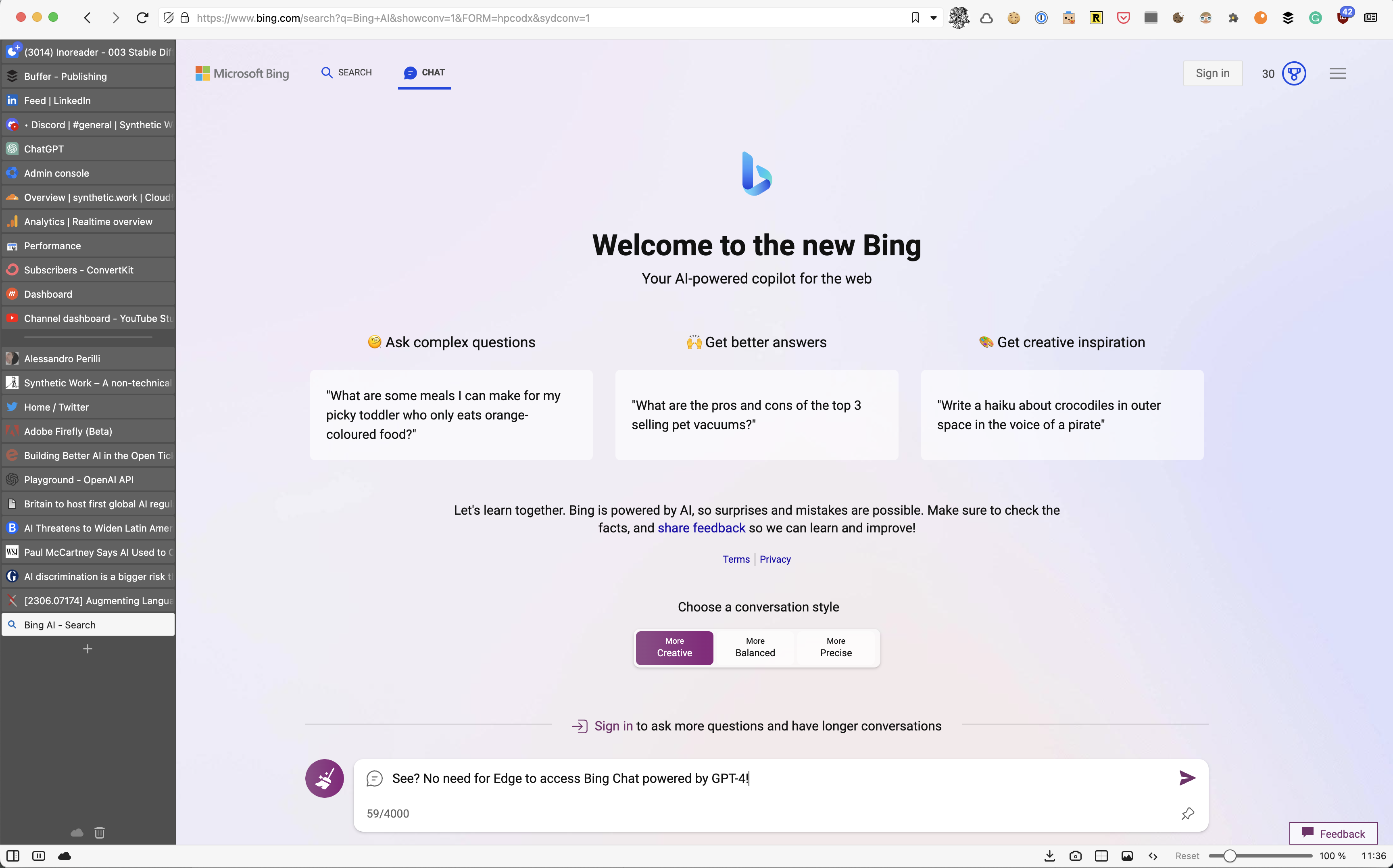

- In The Tools of the Trade section, we discover how Vivaldi browser can let you use Bing Chat without downloading Microsoft Edge.

How do you find this new Sunday delivery? Do you like it better than Fridays?

Let me know.

Alessandro

What we talk about here is not what could be, but what is happening today.

Every organization adopting AI that is mentioned in this section is recorded in the AI Adoption Tracker.

In the Foodservice industry, the American food chain White Castle has started using generative AI to replace drive-thru employees in interactions with customers.

We already saw Wendy’s do the same in Issue #12 – And You Thought That Horoscopes Couldn’t Be Any Worse.

Heather Haddon, reporting for The Wall Street Journal:

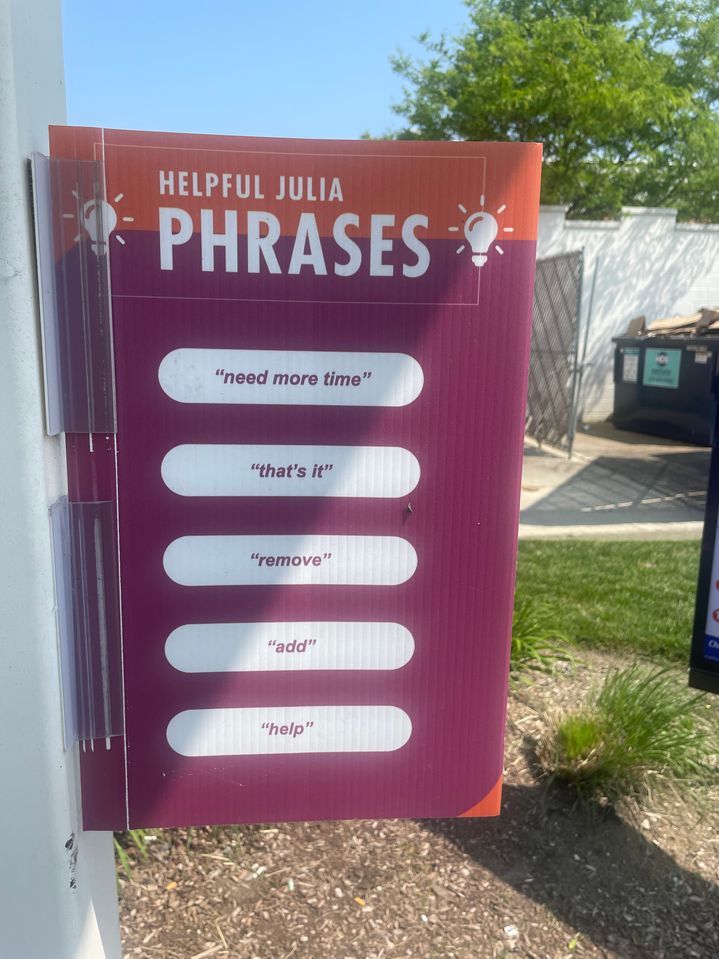

Restaurant executives say Julia, as White Castle’s system is called, and its chatbot colleagues can make their restaurants more efficient and free up often-scarce workers to do other jobs. The jury is still out with customers.

…

Across 10 orders at the Indiana White Castle on a recent day, three customers asked to talk to a human attendant after conversing with Julia, either because they preferred human interaction or because Julia misheard orders. One woman was peeved that her jalapeño slider order hadn’t come out correctly. Others shouted repeatedly that they accepted the terms and conditions of ordering through a robot, though they otherwise ordered without incident.

White Castle Vice President Jamie Richardson said some customers have a love-hate relationship with Julia, and that the company is listening to feedback and investing to make the system better.

…

Besides White Castle, McDonald’s, Wendy’s and Dunkin’ are all testing AI-driven chatbots in drive-throughs.

…

The robots, which employ conversational-style AI algorithms used by technologies such as OpenAI’s ChatGPT, can take burger orders, substitute cheddar for American cheese and thank customers for their patronage.

They also are programmed to encourage customers to binge on an extra burger or a dessert. Unlike a human, chatbots are never shy about selling more, nor do they need a break or get distracted by other business, said Michael Guinan, White Castle’s vice president of operations services.

Do you remember when I said that, in the future, these AI systems are going to have a voice? And that this voice will be personalized for you? And that, because of this personalization, some people will develop an even stronger bond with their AI? And that, because of this stronger bond, they will be more easily influenced or manipulated? And that this voice will follow you around when you sign into products or services? And that, because of this voice ubiquity, advertisers and other interested parties will be able to push you to do things that you wouldn’t do otherwise?

Buy an extra burger, my dear. You deserve it.

Let’s continue the article:

Some customers say they are sick of cranky fast-food workers who can’t hear their orders through defective speaker boxes, and look forward to the robot revolution. A number of restaurant workers agree.

“Just having that relief of not having to communicate with the customer would be awesome,” said Derrick Bower, a 38-year-old Panera Bread worker who has gladly ordered from chatbots at McDonald’s and Checkers Drive-In Restaurants.

These workers don’t seem to realize that their capability to interact with other human beings is the only thing that stands between them and unemployment.

Cooking the burger will remain beyond AI’s reach only for a little longer. So, if the feeling is “Finally, AI can relieve me of the burden of dealing with annoying humans all day long, and I can go work alone in the kitchen”, they are in for a surprise.

More from the article:

“The robot asked me what would you like to order,” said Rucker, 21, a part-time Marriott International worker. “And I was like, ‘Is that a robot?’ They should make it more personable. It had no name.”

See? People will seek that voice personalization that reinforces the delusion of speaking with a real person.

Finally:

White Castle has retooled Julia since first putting it to work in 2020, and it now sounds more conversational, saying things like “you betcha” and “gotcha,” Guinan said.

Del Taco is testing its own chatbot, also named Julia, at five locations. Executives said that programmers are teaching it to not be thrown off by the weird orders the California-based chain’s drive-throughs occasionally encounter late at night.

—

In the Education industry, the online learning provider Chegg articulates how it plans to use GPT-4 to deliver personalized tutoring to its customers.

This is the same company that saw its stock price collapse by almost 50% after revealing that the arrival of ChatGPT had impacted its subscriptions in a material way. We discussed this just last month in Issue #11 – The Nutella Threshold.

Paresh Dave, reporting for Wired:

For years, on afternoon walks outside Chegg’s Silicon Valley headquarters, former executives say they had discussed someday slashing costs by tapping AI programs to replace an army of instructors that answer student questions and draft flashcards. Matthew Ramirez, a product leader who left Chegg two years ago, says he even advised CEO Dan Rosensweig in 2020 that generative AI would be the bus that ran down Chegg if it didn’t prepare itself. Outside advisers flagged similar concerns. And just weeks after OpenAI launched ChatGPT last November, a source familiar with the exchange says, one Chegg executive had the bot write an email to Rosensweig urging him to develop a ChatGPT rival.

…

Interviews with two current and five former Chegg executives, along with three other former employees, indicate the company had considered the potential for AI to supplant its services but figured it would not manifest soon. The company’s chief operating officer, Nathan Schultz, says executives had bet in a recent five-year plan that an experience like ChatGPT wouldn’t be possible until at least 2025. And even after the bot’s debut, Chegg saw no cause for alarm, because data showed that the chatbot wasn’t luring away the 8 million paying subscribers to human-generated study guides and homework help.

But the sirens went off in March, when OpenAI unleashed GPT-4, its most powerful model yet. It reignited the AI excitement just as students in the US and some other countries began taking spring exams. Undergraduates and high schoolers who might have paid Chegg as little as $16 a month for practice exams and term paper feedback quietly opted for ChatGPT instead—the free, fast, and cool new kid on the block.

There are many other companies that risk the same fate as Chegg. In the last few years, I have warned a few of them about the threat that generative AI represents to their business, including the ones I have served on.

Some people are too busy with their bullet points and KPIs to listen. Others are just too slow in understanding.

Many more companies, including technology providers, in the most disparate lines of business will collapse because of generative AI. If you are not preparing right now, you might be one of them.

And make no mistake: you don’t have much time to prepare. Next year, generative AI will explode in such a way that it will make what we see today look like a warm-up.

Let’s continue the article:

Chegg CFO Andy Brown would later describe the chatbot as vanishing 100,000 would-be subscribers “right around the fringes” of the subscription services that account for 90 percent of the company’s overall sales. Rosensweig says he had met with his friend Sam Altman, OpenAI’s CEO, for a couple of hours to discuss the future of education, and months later in mid-April, they announced a partnership to create CheggMate, an AI learning companion powered by GPT-4, Chegg’s own algorithms, and its repository of 100 million study questions built over previous years.

The deal may have erected a barrier, but the bus came crashing through anyway. Two weeks later, on May 1, Rosensweig revealed the stunted growth to investors and said Chegg would not provide revenue forecasts for the second half of this year because the relationship students would have with ChatGPT when they return to school after the summer break was anyone’s guess.

…

Students can save time, money, and perhaps their grades by “Chegging it” instead of hiring tutors, going deep into books, or knocking on professors’ doors. The idea that AI might change or challenge those services has been on Chegg executives’ minds for years. Since late 2018, it has used free, open-sourced models developed by OpenAI to help offer grammar and composition suggestions to students in a writing aid feature and to score the quality of internal documents, according to Ramirez, the former director of Chegg’s writing aid.

“We could see whether a suggestion we gave actually made the writing more fluent, whether the text was better or worse with what we were suggesting,” says Ramirez, who now runs AI-based writing helpers Rephrasely and Paraphrase Tool. AI programs also help route subscribers’ academic questions to appropriate experts among Chegg’s over 150,000 contractors, most of them in India.

The prospect of using AI to create the content students and other learners want isn’t new to the company either. Since long before ChatGPT came on the scene, Brown has said the holy grail for Chegg has been generating content with algorithms to reduce tens of millions of dollars in labor and licensing costs. But OpenAI’s early models were not very fluent at text generation, and some Chegg leaders talked regularly about how difficult it would be to safely operate generative AI, according to three former employees. They feared students could goad a chatbot into silly and problematic responses that could tarnish Chegg’s reputation, while any instructional errors held huge academic consequences for users and liability questions for the company.

In subjects such as engineering, chemistry, and statistics, which drive significant traffic to Chegg but often involve diagrams, there was a sense that relying too heavily on AI to parse visual information was unreasonable, the former employees say. So the ethics of unleashing an imperfect product gave Chegg pause. “We knew generative was coming down the pike,” says one former executive. “Text analysis was easy to embrace in the short run.”

In 2020, OpenAI’s GPT-3 model was released and made text generation much better. Some machine-learning leaders at Chegg wanted to get their hands on it, but one source says executives weren’t aggressive about securing access to the technology, which OpenAI did not open-source. Early this year, GPT-3’s successor was added to ChatGPT, and the centrality of generative AI to Chegg’s future became inarguable, carved as it was into the company’s dented user growth.

…

Chegg is now focused on proving with its in-house bot CheggMate that it’s possible to outcompete ChatGPT when it charges onto your turf. “We happened to be one of the industries that’s facing it first, and that gives us a wonderful opportunity to understand it deeper and sooner and come on to the other side of it with unique and value-creating products for our consumers,” says Schultz, the COO.

The company has marshaled all extra hands onto CheggMate and AI development, including by reassigning teams that worked on collecting more data from users to personalize services through more traditional means. Brown, the CFO, told investors last month that the company’s summer interns will be fully focused on CheggMate. But Chegg doesn’t have the best record of developing products from scratch and has previously leaned on acquisitions, leaving some former executives closely following CheggMate unsure of its prospects.

…

Select users, along with Chegg’s subject matter experts and academic advisers, began testing CheggMate over the past couple of weeks, but it isn’t expected to publicly launch until next year.

When a user types a query to CheggMate, it first attempts to categorize whether the request is for help understanding a concept, solving a particular problem, or concerning a particular subject, Schultz says. The system then tries to direct the question to the best resource, with the options including prompting GPT-4, having a human expert answer, or re-airing an old answer from Chegg’s database. CheggMate is designed to keep users engaged through positive reinforcement and pushing related content. “We could say, ‘Why don’t you try this similar problem? Why don’t you guess a step?’” says Huntemann, the chief academic officer. “Conversation allows us to extend the experience.”

Chegg executives hope tuning their chatbot to education that way will make ChatGPT look less attractive as a homework helper. Pricing for CheggMate has not been determined; operating generative models is expensive, and those costs rise with usage. But two former employees say that having a human expert answer a question costs about $2. Generating a comparable response through GPT-4 possibly runs half a US cent, and having an expert edit it might cost $1 overall, they say, suggesting the economics could work out for Chegg.

In other words, Chegg is resisting surrendering all its interactions with the customers to OpenAI. It’s understandable, but futile: GPT-4 is perfectly capable of doing the classification that the company is trying to do on its own and, almost certainly, better.
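To make the “classify, then route” flow described by Schultz concrete, here is a minimal sketch. We don’t know how Chegg actually implements CheggMate: the keyword classifier, the exact-match archive lookup, and the routing policy below are all hypothetical. The `classify` function is the step that, in my view, GPT-4 could take over entirely.

```python
from enum import Enum

class Intent(Enum):
    CONCEPT = "understand a concept"
    PROBLEM = "solve a particular problem"
    SUBJECT = "question about a particular subject"

class Route(Enum):
    ARCHIVE = "re-air an old answer from the database"
    HUMAN_EXPERT = "have a human expert answer"
    GPT4 = "prompt GPT-4"

def classify(query: str) -> Intent:
    """Hypothetical keyword classifier; this is the step GPT-4 could take over."""
    q = query.lower()
    if any(word in q for word in ("solve", "calculate", "compute")):
        return Intent.PROBLEM
    if any(word in q for word in ("what is", "explain", "why")):
        return Intent.CONCEPT
    return Intent.SUBJECT

def route(query: str, archive: dict[str, str]) -> tuple[Intent, Route]:
    """Pick a resource for the query using an invented policy."""
    intent = classify(query)
    if query.strip().lower() in archive:  # an identical question was already answered
        return intent, Route.ARCHIVE
    if intent is Intent.PROBLEM:          # hypothetical: step-by-step problems go to experts
        return intent, Route.HUMAN_EXPERT
    return intent, Route.GPT4
```

Every rule in `classify` is brittle by design: a real query like “How do I get x out of this equation?” slips through the keywords, which is exactly the kind of judgment a large language model handles better.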

I expect this plan to change for at least two reasons:

- Once the students start to see that GPT-4 is more capable than the alternative methods, they will demand it, or simply use OpenAI’s native service.

- By the time CheggMate is online, OpenAI will have reduced the cost of GPT-4 and greatly expanded its capabilities. Chegg’s executives will see that it’s cheaper and more powerful to rely exclusively on it.

And that’s assuming CheggMate doesn’t arrive too late.
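To put the economics from the Wired excerpt into numbers, here is a small calculation. The per-answer costs are the approximate figures two former Chegg employees gave Wired (about $2 for a human expert, possibly half a cent for GPT-4, about $1 for GPT-4 plus an expert edit); the question volume is a hypothetical round number. `Decimal` avoids floating-point rounding on money.

```python
from decimal import Decimal

# Approximate per-answer costs reported by two former Chegg employees.
COST_PER_ANSWER = {
    "human expert": Decimal("2.00"),
    "GPT-4 only": Decimal("0.005"),
    "GPT-4 + expert edit": Decimal("1.00"),
}

def cost_for(volume: int) -> dict[str, Decimal]:
    """Total cost of answering `volume` questions with each approach."""
    return {name: unit * volume for name, unit in COST_PER_ANSWER.items()}

def saving_vs_human(name: str) -> Decimal:
    """Fraction saved per answer compared with a fully human answer."""
    human = COST_PER_ANSWER["human expert"]
    return (human - COST_PER_ANSWER[name]) / human

if __name__ == "__main__":
    for name, total in cost_for(1_000_000).items():  # hypothetical volume
        print(f"{name:>20}: ${total:,.2f} (saves {saving_vs_human(name):.2%} per answer)")
```

Keeping an expert in the loop halves the cost; dropping the expert cuts it by 99.75%. If OpenAI keeps lowering prices, the gap between the last two rows only widens.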

Eari Nakano, reporting for Bloomberg:

Chegg Inc., which offers online homework-help services, will cut roughly 4% of its workforce as students increasingly turn to artificial intelligence chatbots like ChatGPT for assistance.

The cuts, which will affect 80 employees globally, will “better position the company to execute against its AI strategy,” it said Monday in a regulatory filing.

—

In the Media Production industry, Netflix unveiled how they are using AI to create more engaging trailers for the shows and movies in their catalog.

At Netflix, we aim to bring joy to our members by providing them with the opportunity to experience outstanding content. There are two components to this experience. First, we must provide the content that will bring them joy. Second, we must make it effortless and intuitive to choose from our library. We must quickly surface the most stand-out highlights from the titles available on our service in the form of images and videos in the member experience.

Here is an example of such an asset created for one of our titles:

These multimedia assets, or “supplemental” assets, don’t just come into existence. Artists and video editors must create them. We build creator tooling to enable these colleagues to focus their time and energy on creativity. Unfortunately, much of their energy goes into labor-intensive pre-work. A key opportunity is to automate these mundane tasks.

…

Punchy or memorable lines are a prime target for trailer editors. The manual method for identifying such lines is a watchdown (aka breakdown).

An editor watches the title start-to-finish, transcribes memorable words and phrases with a timecode, and retrieves the snippet later if the quote is needed. An editor can choose to do this quickly and only jot down the most memorable moments, but will have to rewatch the content if they miss something they need later. Or, they can do it thoroughly and transcribe the entire piece of content ahead of time.

…

Scrubbing through hours of footage (or dozens of hours if working on a series) to find a single line of dialogue is profoundly tedious. In some cases editors need to search across many shows and manually doing it is not feasible. But what if scrubbing and transcribing dialogue is not needed at all?
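The excerpt doesn’t show how Netflix implements dialogue search. As a minimal sketch, assuming a timecoded transcript already exists (for example, produced automatically by speech recognition), finding a line becomes a text search instead of a rewatch. The `Line` type and the sample dialogue are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Line:
    episode: str
    timecode: str  # "HH:MM:SS" where the line is spoken
    text: str

def search_dialogue(transcript: list[Line], phrase: str) -> list[Line]:
    """Return every transcript line containing `phrase`, case-insensitively."""
    needle = phrase.lower()
    return [line for line in transcript if needle in line.text.lower()]

# Hypothetical transcript spanning two episodes of a series.
transcript = [
    Line("S01E01", "00:12:31", "I'm not going anywhere."),
    Line("S01E02", "00:40:02", "Nobody is going anywhere tonight."),
    Line("S01E02", "00:41:10", "Get in the car."),
]

for hit in search_dialogue(transcript, "going anywhere"):
    print(hit.episode, hit.timecode, hit.text)
```

A production system would need fuzzy matching for paraphrases and transcription errors, but even this exact-match version removes the need to scrub through footage.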

…

Artists and video editors routinely need specific visual elements to include in artworks and trailers. They may scrub for frames, shots, or scenes of specific characters, locations, objects, events (e.g. a car chasing scene in an action movie), or attributes (e.g. a close-up shot). What if we could enable users to find visual elements using natural language?

…

Natural-language visual search offers editors a powerful tool. But what if they already have a shot in mind, and they want to find something that just looks similar? For instance, let’s say that an editor has found a visually stunning shot of a plate of food from Chef’s Table, and she’s interested in finding similar shots across the entire show.
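The excerpt doesn’t describe Netflix’s method for finding similar shots. A common approach is to turn every shot into an embedding vector with a vision model and then rank shots by cosine similarity to the reference shot. The sketch below assumes the embeddings already exist; the 3-dimensional vectors and shot names are made up for illustration (real embeddings have hundreds of dimensions, and large catalogs use an approximate nearest-neighbour index instead of a full scan).

```python
import math

def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity: 1.0 means same direction, 0.0 means unrelated."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def most_similar(query: list[float], shots: dict[str, list[float]], k: int = 3) -> list[tuple[str, float]]:
    """Rank every shot by similarity to the reference shot and return the top k."""
    scored = [(shot_id, cosine(query, vec)) for shot_id, vec in shots.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)[:k]

# Hypothetical embeddings for four shots.
shots = {
    "plate_of_pasta": [0.9, 0.1, 0.0],
    "plate_of_sushi": [0.8, 0.2, 0.1],
    "kitchen_fire": [0.1, 0.9, 0.3],
    "chef_portrait": [0.0, 0.2, 0.9],
}
reference_shot = [1.0, 0.1, 0.0]  # the stunning plate of food the editor found
print(most_similar(reference_shot, shots, k=2))
```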

…

As we developed more media understanding algos and wanted to expand to additional use cases, we needed to invest in system architecture redesign to enable researchers and engineers from different teams to innovate independently and collaboratively. Media Search Platform (MSP) is the initiative to address these requirements.

…

The media understanding platform serves as an abstraction layer between machine learning algos and various applications and features. The platform has already allowed us to seamlessly integrate search and discovery capabilities in several applications.
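To illustrate what “an abstraction layer between machine learning algos and various applications” can mean, here is a deliberately tiny sketch: research teams register search backends by name, and applications call a single `search` method without knowing which algorithm sits behind it. The class and method names are hypothetical and do not reflect MSP’s real design.

```python
from typing import Callable

SearchFn = Callable[[str], list[str]]  # query -> matching asset IDs

class MediaSearchPlatform:
    """Hypothetical abstraction layer: apps call search(), algorithm teams register backends."""

    def __init__(self) -> None:
        self._backends: dict[str, SearchFn] = {}

    def register(self, kind: str, backend: SearchFn) -> None:
        """Plug in (or replace) the algorithm that handles one kind of search."""
        self._backends[kind] = backend

    def search(self, kind: str, query: str) -> list[str]:
        """Dispatch the query to whichever backend is registered for `kind`."""
        if kind not in self._backends:
            raise KeyError(f"no backend registered for {kind!r}")
        return self._backends[kind](query)

platform = MediaSearchPlatform()
platform.register("dialogue", lambda q: [f"dialogue hit for {q!r}"])
platform.register("visual", lambda q: [f"visual hit for {q!r}"])
print(platform.search("visual", "car chase"))
```

The design choice is the point: a team can swap the visual backend for a better model without touching any application that calls `search`.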

The blog post is very technical, and well beyond the intended scope of Synthetic Work. But if you are interested in the topic, it’s worth your time.

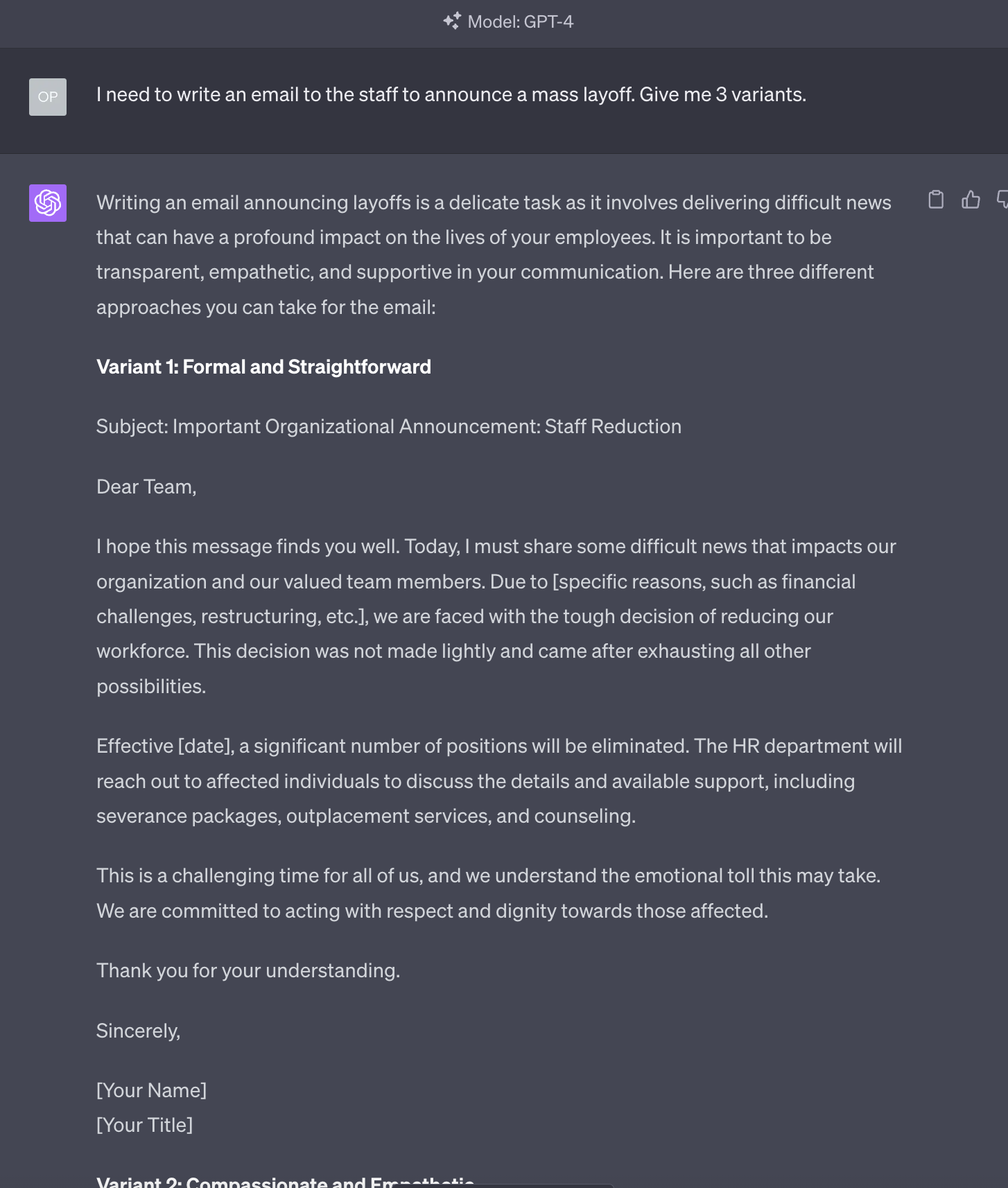

Before you start reading this section, it's mandatory that you roll your eyes at the word "engineering" in "prompt engineering".

Today we take a look at two techniques, one very simple, and one very complicated. The latter builds on the former.

Let’s start with the simple one.

Ask for Variants

When you write your prompt, you are psychologically prepared to either accept the answer you get from the AI or iterate on that answer until you get a satisfactory outcome.

Totally normal. That’s the way we interact with people, so we bring the same approach to our interactions with the AI.

But there’s something that large language models like ChatGPT can do and people can’t (at least, not easily): give you a wide range of alternative answers.

In Issue #16 – The Devil’s Advocate, we used a technique called The Devil’s Advocate to obtain an alternative answer from GPT-4, pushing the AI to go in the opposite direction compared to its original approach.

If we don’t need to go in a specific direction, we simply ask for a specific number of variants that we want to evaluate:
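If you call the model from code rather than from the ChatGPT window, the technique boils down to appending one request to any prompt and then splitting the numbered reply. The wording of the request below is only an example, not a magic formula, and the parsing assumes the model numbers its variants as asked.

```python
import re

def ask_for_variants(task: str, n: int = 5) -> str:
    """Append an explicit request for n distinct, numbered variants to any prompt."""
    return (
        f"{task}\n\n"
        f"Give me {n} distinct variants of your answer, each taking a different approach. "
        f"Number them from 1 to {n}."
    )

def split_variants(reply: str) -> list[str]:
    """Split a numbered reply ("1. ...", "2) ...") into variants, dropping any preamble."""
    parts = re.split(r"^\s*\d+[.)]\s+", reply, flags=re.MULTILINE)
    return [p.strip() for p in parts[1:] if p.strip()]

prompt = ask_for_variants("Suggest a way to grow our newsletter audience.", n=3)
reply = "Here you go:\n1. Raise prices\n2. Cut costs\n3) Open a new market"
print(split_variants(reply))
```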

Remember that, unlike humans, the AI doesn’t get tired, and the information it has accumulated during the training phase hopefully represents a broad range of perspectives. People rarely, if ever, have that kind of breadth of experience.

So, while generating multiple variant answers for the same question implies more cognitive load for you, as you have to evaluate which variant best fits your needs, it’s something that you should always consider doing to improve your chances of finding out-of-the-box solutions.

Now, with this exceedingly simple, yet invaluable, technique in mind, let’s review a significantly more complicated technique that builds on it.

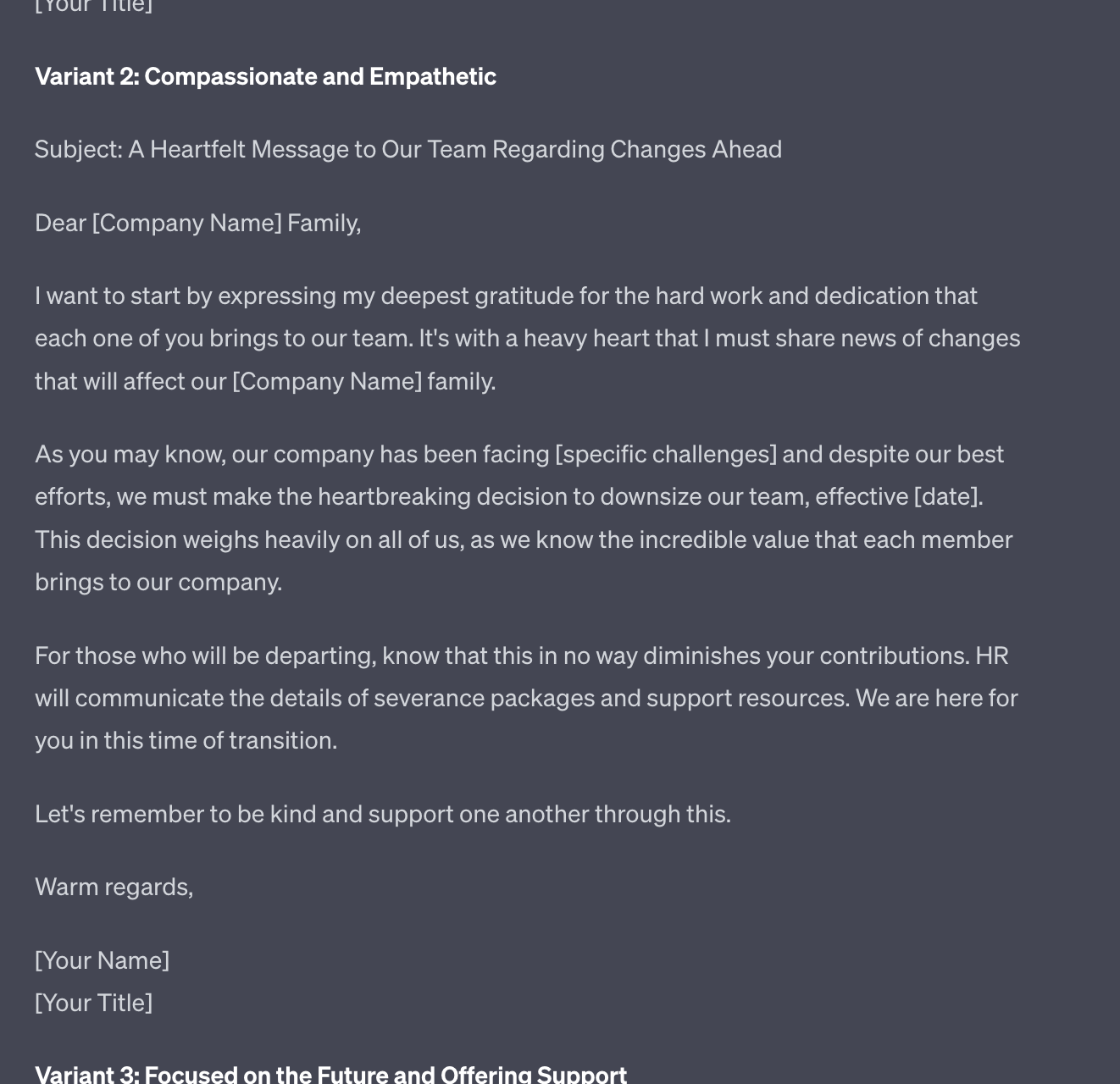

Choose the Best Variant

Normally, I avoid describing complex prompting techniques as I believe that, if you have to memorize a long prompt or submit a complicated sequence of prompts, you are not going to use them.

This is also why I categorically refuse to use the names that AI researchers have given to the techniques you find in past Splendid Editions of Synthetic Work and in the How to Prompt section of the website. They are unnecessarily complicated and non-tech people are not going to remember them.

For example, the technique we are going to discuss below has been called Tree of Thoughts (ToT). Who in the world is going to remember that name or what it does?

So, let’s call it Choose the Best Variant and let’s see how it works.

Do you remember that, moments ago, in discussing the Ask for Variants technique, I said that you have to evaluate which variant best fits your needs? And that adds cognitive load to your process?

Good. The Choose the Best Variant technique offloads the evaluation of the variants to the AI. And apparently, this approach greatly improves the quality of the answers in certain scenarios.

Today, as we focus on the technique and not the use case, we use it to generate some creative text, but in a future Splendid Edition, we are going to use it to improve our decision-making capabilities.

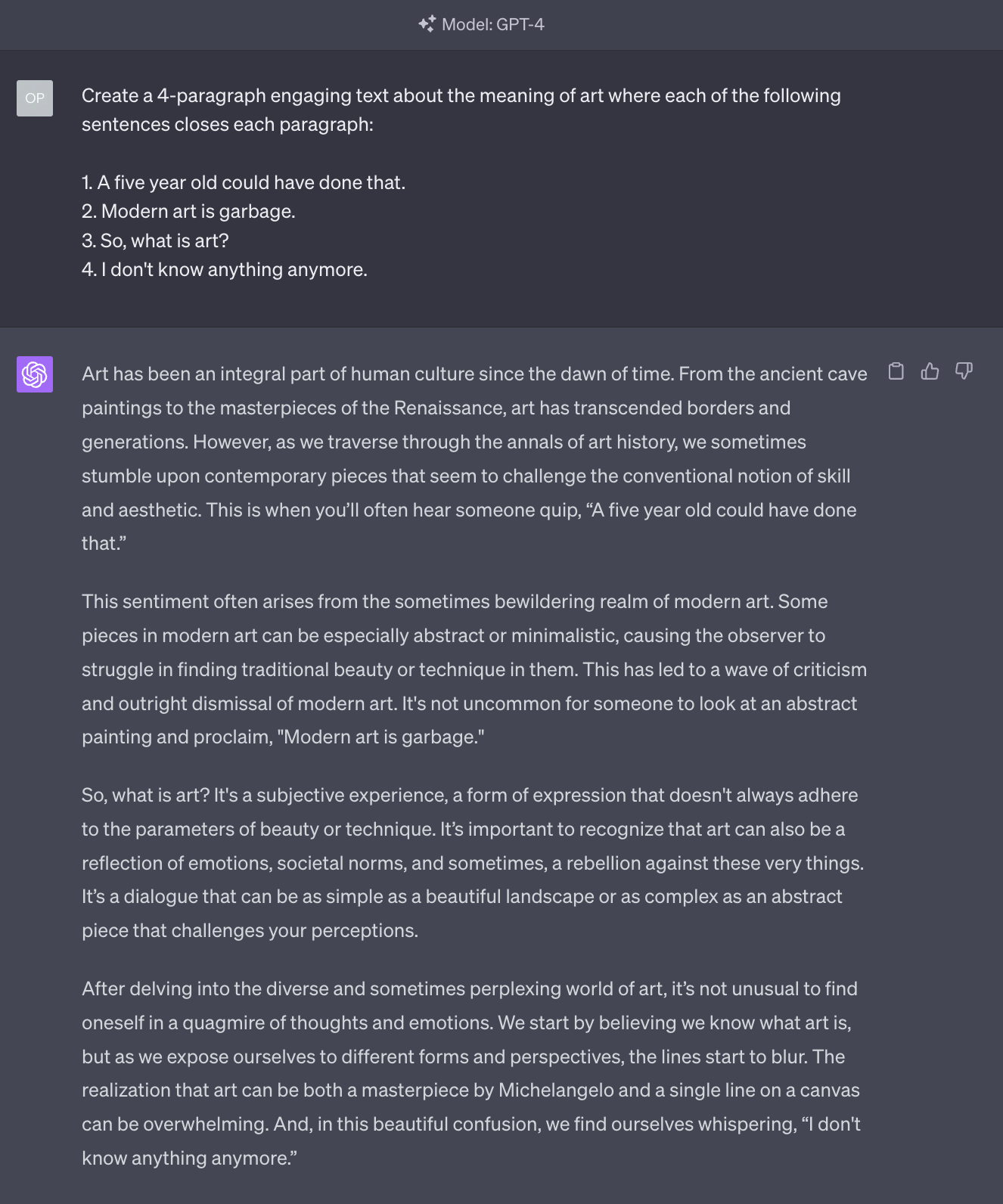

First, let’s see what happens if we “simply” ask GPT-4 to generate some text using four sentences that we provide:

I don’t know about you, but to me, the fact that AI can generate text that follows a coherent narrative and respects the constraints I provide is mind-blowing.

Now that we have our text, we can, as we normally do, ask GPT-4 to make modifications (for example, by invoking the Request a Refinement technique). Or, we can ask it to generate variants, as we have done above.

But it’s a lot of work.

(yes, it’s as outrageous a statement as it sounds)

Let’s ask GPT-4 to do everything for us. Shamelessly.

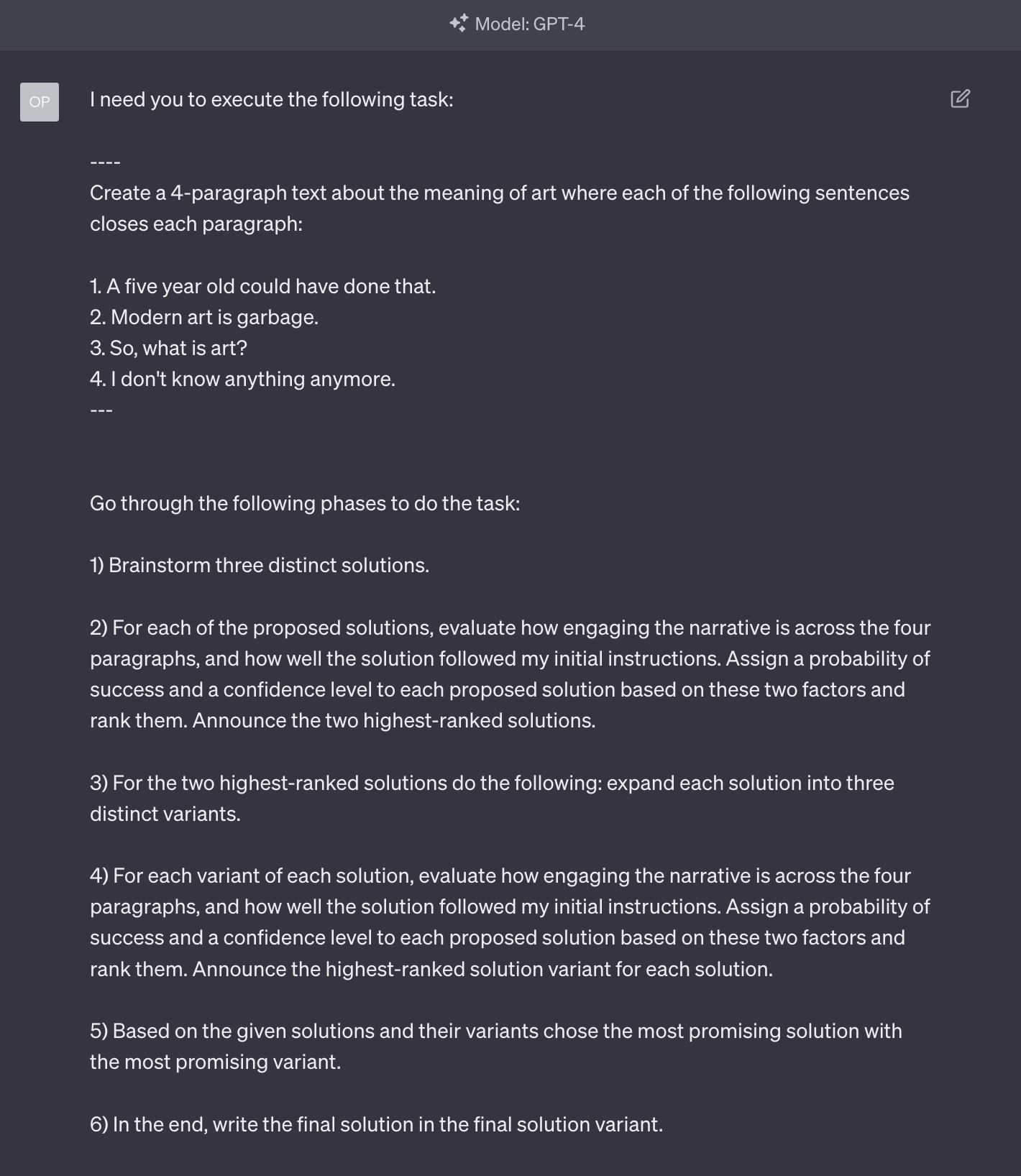

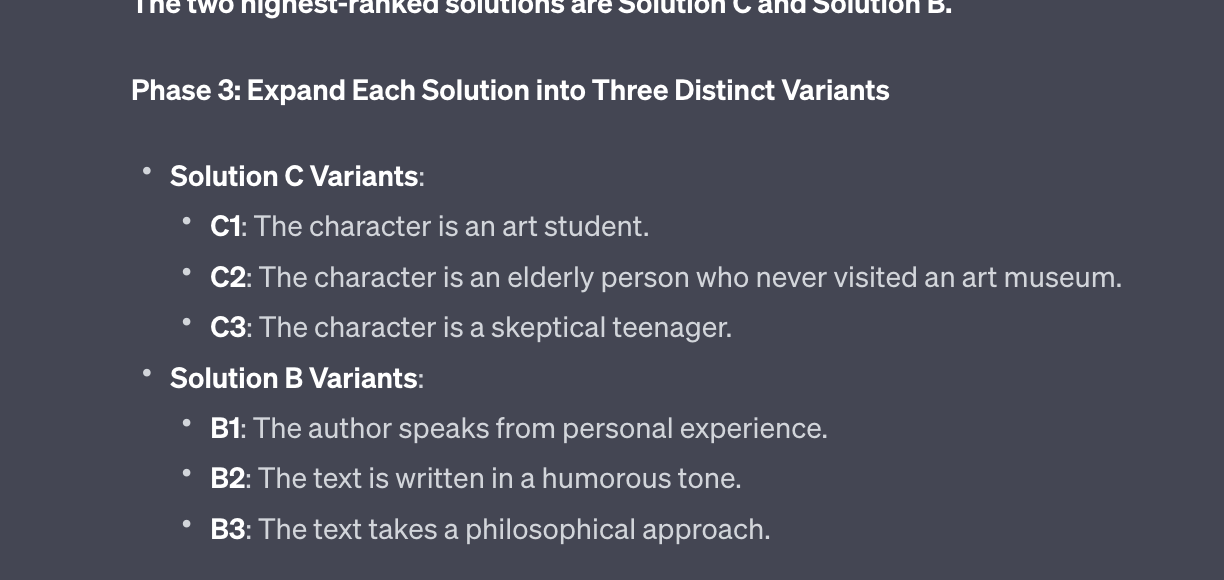

This is what we want the AI to do:

- Generate a certain number of variants.

- Evaluate these variants against a set of criteria we’ll define (for example, how eloquent the text is).

- Keep the variants that best match our criteria and discard the others.

- For each surviving variant, generate a certain number of sub-variants.

- Evaluate these sub-variants against the set of criteria previously defined.

- Adopt the variant that emerged as the best one from this process.

Told you it would be complicated.

But if you thrive in complexity, consider that you could create multiple sub-variants for each sub-variant, going as deep as you like.
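For readers who script their prompts, the steps above can be sketched as a small loop. This is our own simplified version of the Tree of Thoughts idea (a breadth-first beam search), not the researchers’ reference implementation: `generate` and `score` are hypothetical stand-ins for two calls to the model, one that writes variants and one that rates a variant against your criteria. The `depth` parameter is how you “go as deep as you like”.

```python
from typing import Callable

Generate = Callable[[str, int], list[str]]  # (text to vary, how many) -> variants
Score = Callable[[str], float]              # evaluation against your criteria; higher is better

def choose_best_variant(
    prompt: str,
    generate: Generate,
    score: Score,
    branching: int = 3,  # variants generated from each survivor
    keep: int = 2,       # survivors kept after each evaluation
    depth: int = 2,      # 1 = variants only, 2 = variants + sub-variants, ...
) -> tuple[str, int]:
    """Generate, evaluate, prune and expand; return the winner and how many variants were seen."""
    frontier = [prompt]
    seen = 0
    for _ in range(depth):
        candidates = [v for seed in frontier for v in generate(seed, branching)]
        seen += len(candidates)
        # Evaluate every candidate and keep only the best ones for the next round.
        frontier = sorted(candidates, key=score, reverse=True)[:keep]
    return frontier[0], seen
```

With the defaults, the loop examines 3 variants, keeps 2, and expands each into 3 sub-variants: 3 + 2 × 3 = 9 alternatives in total. Raising `depth` or `branching` grows that number quickly, and so does the cost of the calls to the model.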

The value of this approach is that it allows you to automatically consider a large number of alternatives in a very short amount of time.

Of course, you need to be careful in defining the criteria you want to use to evaluate the variants.

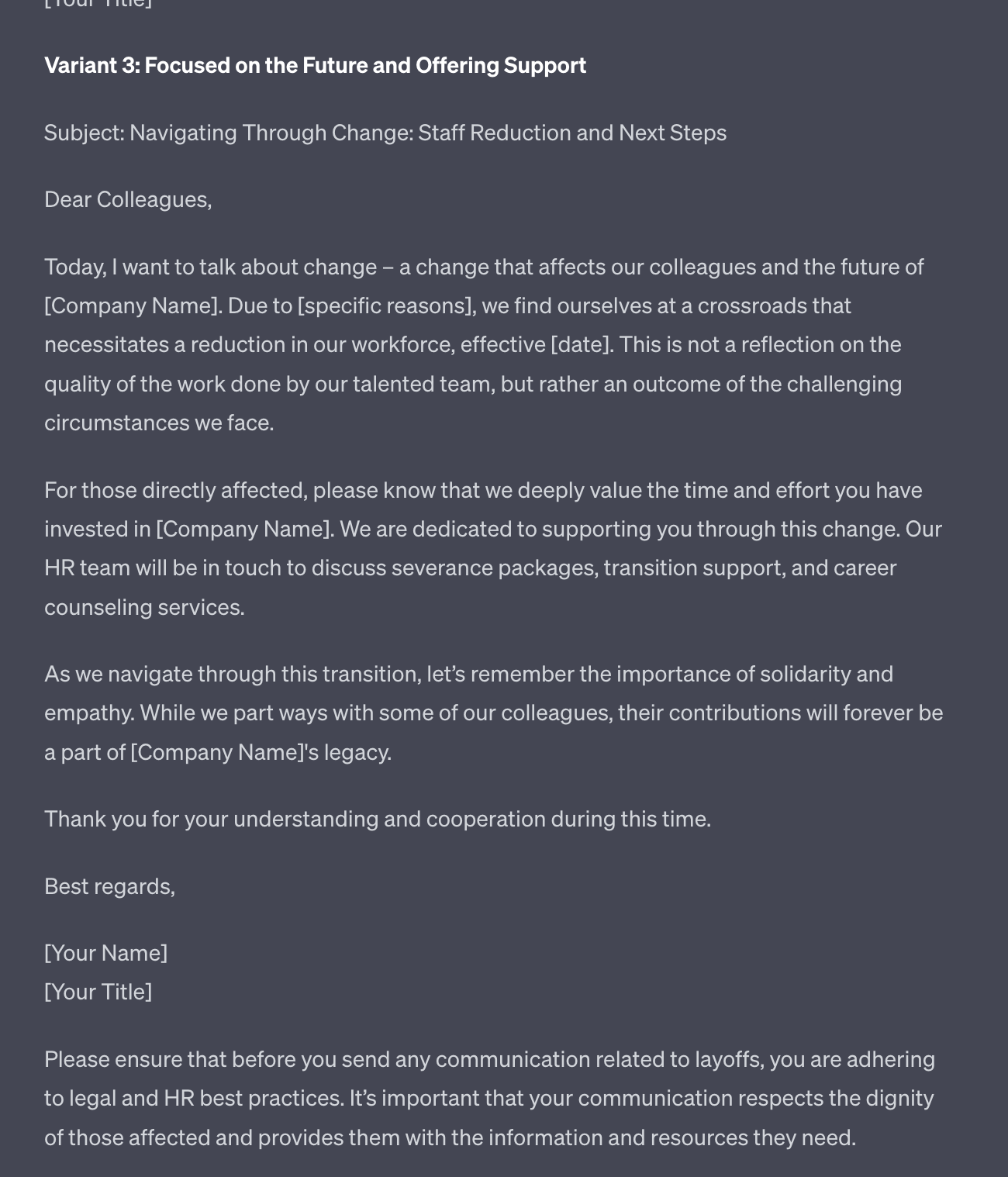

Let’s see it in action:

As you can see, in this prompt, I define two criteria: the level of engagement and how closely the answer has followed my instructions.

The second criterion is critical: large language models are better at comparing things than at creating things from scratch. So when we ask GPT-4 to compare its output against our instructions, it can better evaluate its performance.
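In the ChatGPT window, the whole procedure fits in a single prompt. The helper below assembles one along those lines, with the two criteria described above. The wording is illustrative and is not the exact prompt used in the screenshots.

```python
def best_variant_prompt(task: str, criteria: list[str], variants: int = 3,
                        keep: int = 2, sub_variants: int = 3) -> str:
    """Build one prompt asking the model to generate, evaluate, prune and refine variants."""
    rules = "\n".join(f"- {c}" for c in criteria)
    return (
        f"{task}\n\n"
        f"Step 1: Generate {variants} different variants.\n"
        f"Step 2: Evaluate each variant against these criteria:\n{rules}\n"
        f"Step 3: Keep the {keep} best variants and discard the others.\n"
        f"Step 4: For each surviving variant, generate {sub_variants} sub-variants.\n"
        "Step 5: Evaluate the sub-variants against the same criteria.\n"
        "Step 6: Tell me which variant is the best one overall and why."
    )

prompt = best_variant_prompt(
    "Write a short story that uses these four sentences: ...",
    ["How engaging the text is", "How closely the text follows my instructions"],
)
print(prompt)
```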

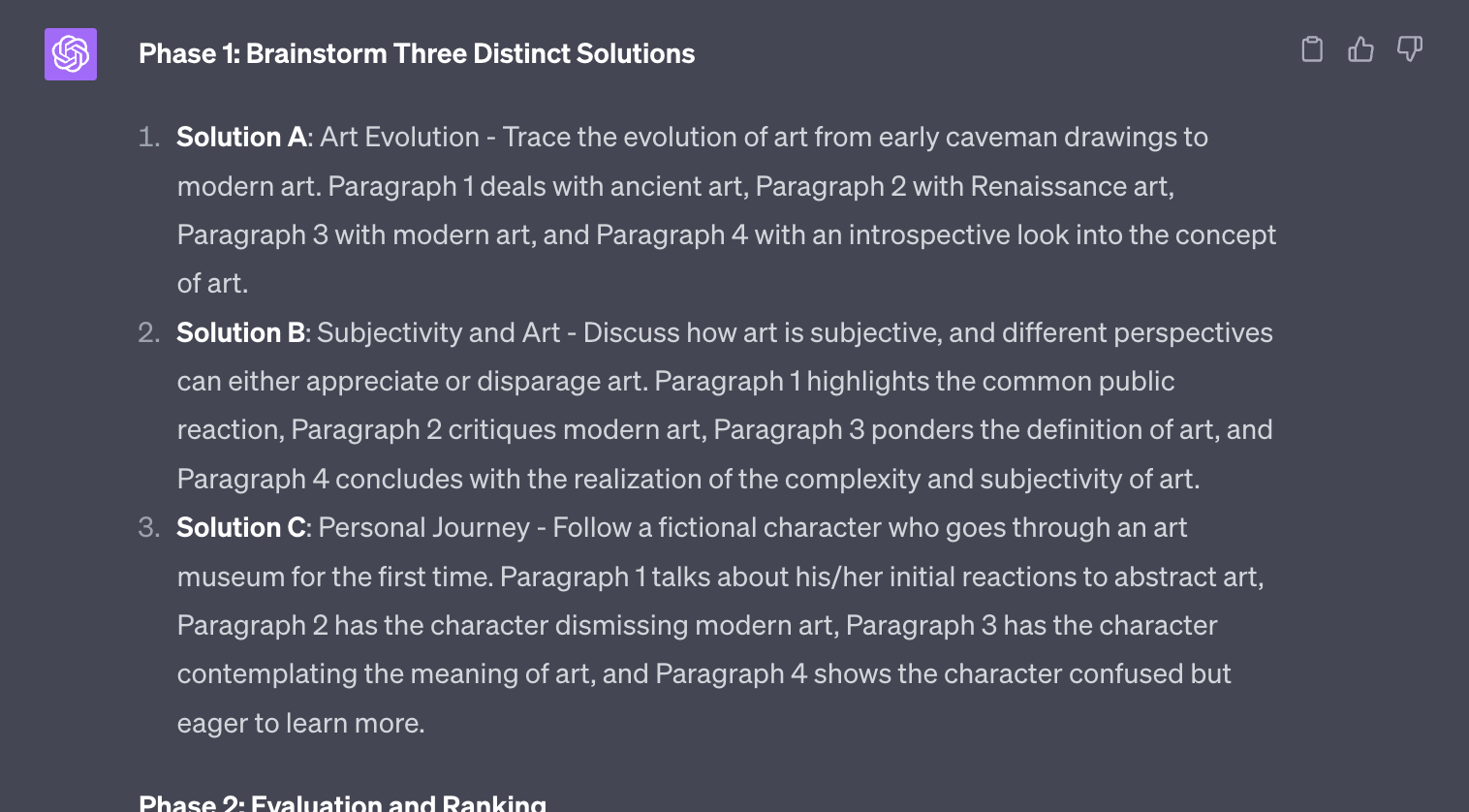

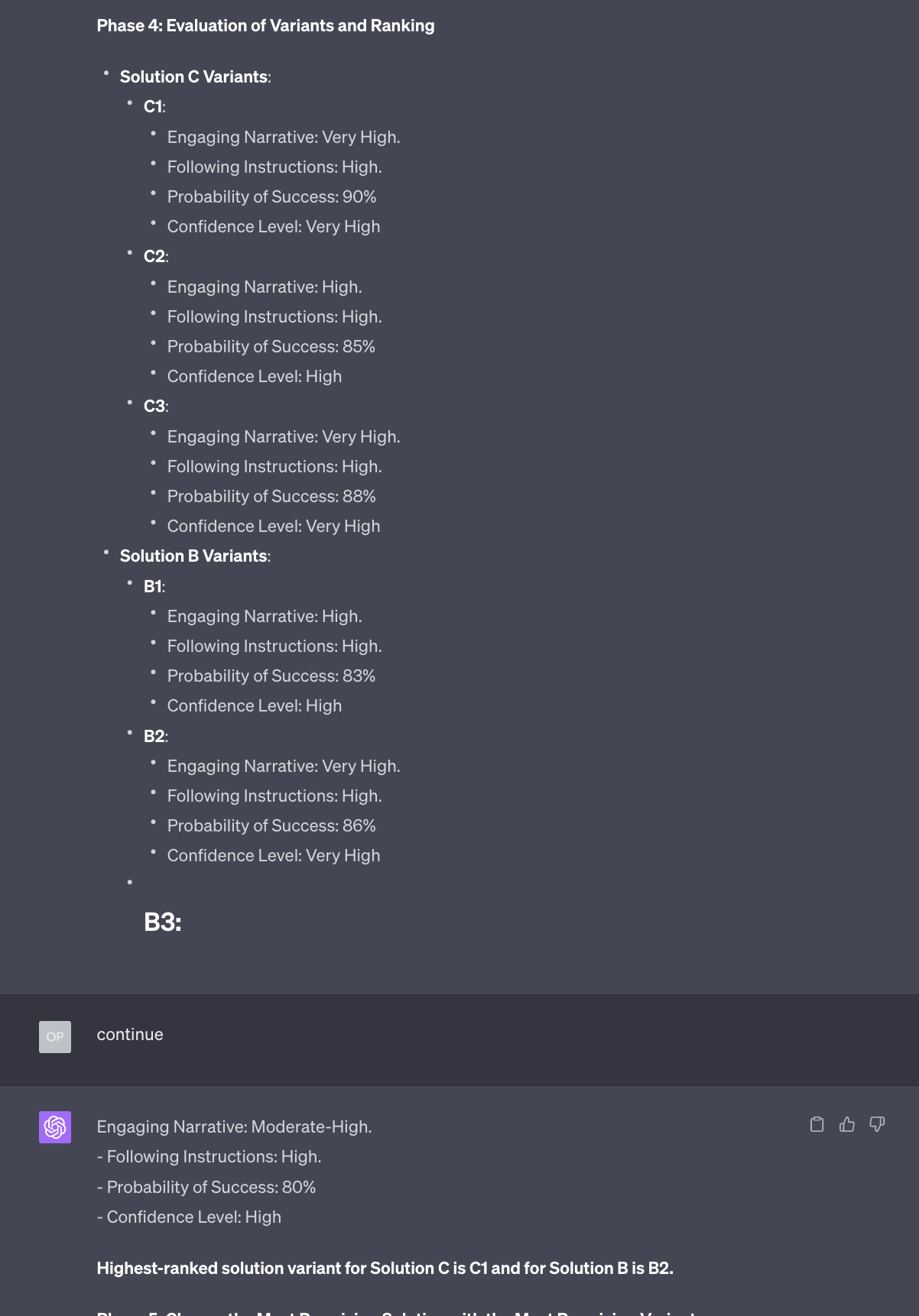

As you can see, GPT-4 generates three interesting variants. Now it needs to evaluate them against the criteria we have defined:

According to this evaluation, solution A will be discarded.

Now: did GPT-4 really calculate the probability of success with some mathematical formula? Or is it just the number that the AI assumes will most likely follow the words “probability of success”?

Here’s the shocking answer: we don’t know.

I’m not joking. Even the people who have trained these AI models don’t quite understand why certain techniques, like this one, work.

This is a good reminder that we are living the stuff of sci-fi movies. We have created something that we don’t fully comprehend. We can only, for now, evaluate its performance.

And after this brief chilling digression, let’s go back to how GPT-4 executes the task:

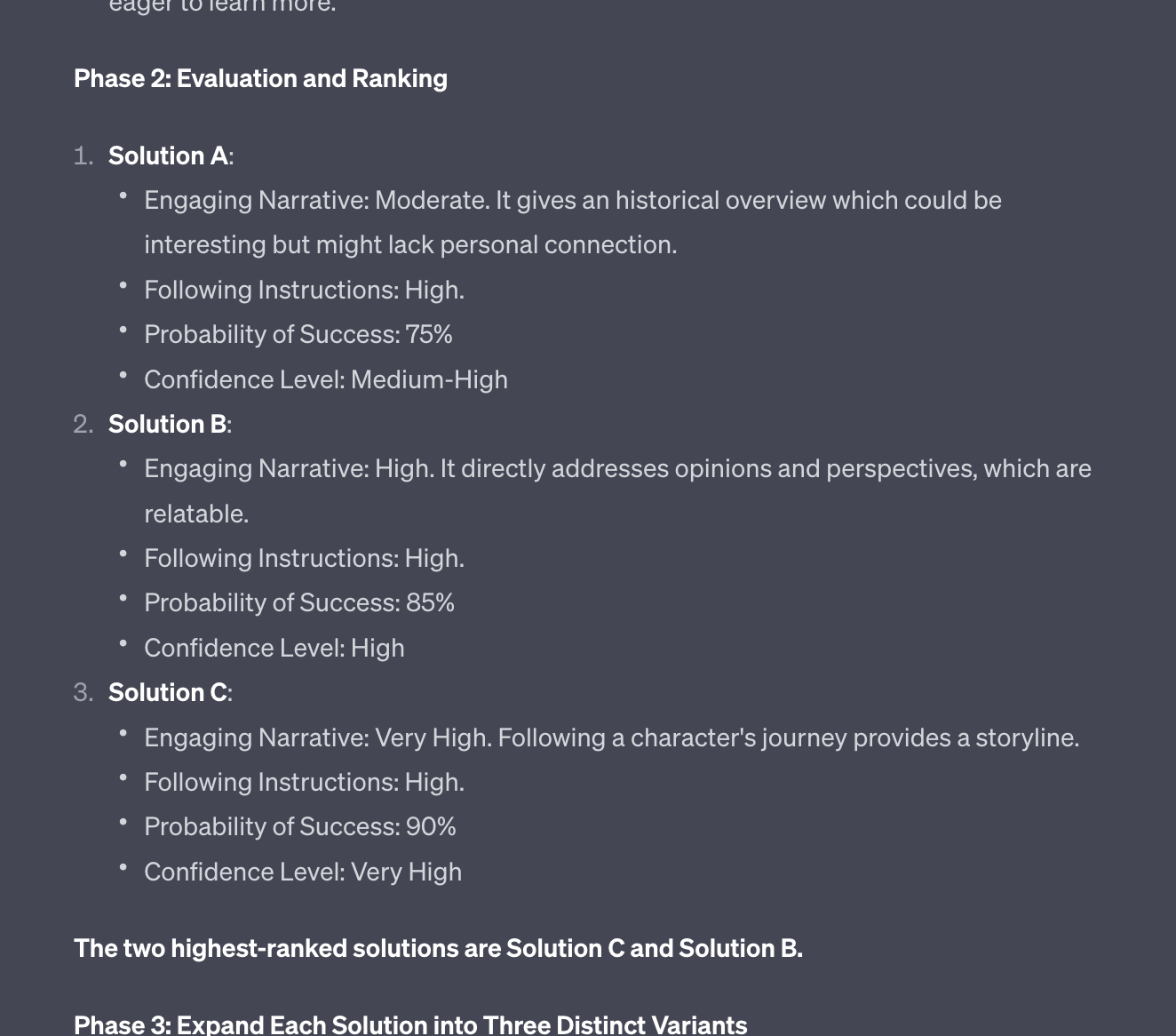

As you can see, GPT-4 has generated three sub-variants for each variant that has passed the first evaluation.

Now that same evaluation must be done for these new variants.

Here, notice that I had to ask GPT-4 to continue because of the context window limitations that we mentioned a million times in the previous issues of Synthetic Work.

I should really set up a Glossary, shouldn’t I?

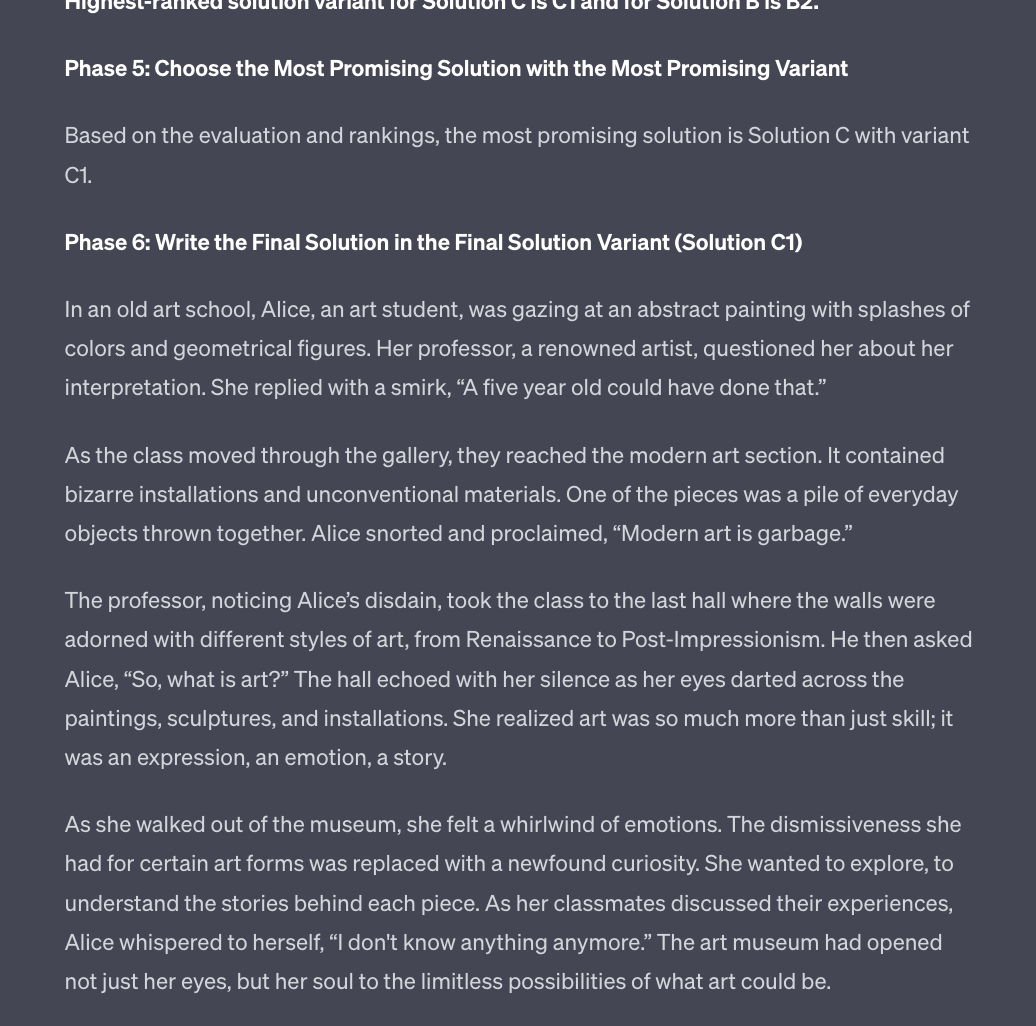

Here’s the final result:

Is this more engaging than the original version we obtained with the vanilla prompt? Perhaps. What matters is that, in a very short period, we have seen nine alternatives to our question and we have somebody else to blame for the one that was finally selected.

It’s cheaper than hiring a consulting firm and you can use it to brainstorm ideas for your next project or to make more informed decisions.

Normally, this section of the newsletter is dedicated to those tools/products/solutions that are greatly enhanced by AI capabilities and stand out from an ocean of competitors.

Today, we are going to make an exception to this rule and talk about a product that is not augmented by AI but that helps you use AI. It’s my favorite browser in the world: Vivaldi.

I started using Vivaldi four years ago because I was constantly frustrated by the poor performance of Chrome. I tried Firefox for a while, but it’s slower than Chrome at rendering pages, so I kept looking.

As I always do in these cases, I simply tested every single browser on the planet. When it was the turn of Vivaldi, I discovered that they could do something no other browser could do at that time: arrange the tabs vertically, on a left or right sidebar.

This one feature has changed my life, to the point that I cannot use any other browser anymore.

Our monitors have an aspect ratio of 16:9. Yet, the overwhelming majority of the websites in the world don’t use the side space. The narrower the column of text, the easier it is for people to read. Also, there are issues with rendering pages on multiple devices, and yada yada yada.

Lots of boring details about web design that we don’t care about.

The point is that we have this enormity of space at the side of our screens that we rarely use.

Conversely, the average user has 7,000 tabs open at any given time. And when you have 7,000 tabs open, you have no idea what is where, because the titles are unreadable and the favicons are not necessarily a good indicator of what the page is about.

So, every time you have to switch the context, you have to cycle through all the tabs to find the ones you need. That takes forever and it completely breaks your flow.

Various browsers have tried to solve this problem in clumsy ways. For example, Firefox has some very popular extensions that can help you summarize what the open tabs are all about and sort of simulate a vertical tab organization.

I tried those extensions. Their implementation is not satisfactory.

Vivaldi, on the other hand, has a native implementation of vertical tabs that is flawless. I see every title in full if I decide to enlarge the tab, and I can manipulate the tabs in wild ways if I choose to: I can group them together, pin them at the top of the sidebar, organize them in workspaces, right-click on them to copy the link of the page without focusing on the tab, select multiple of them and close them all, send them to a new window (I use this trick to separate my tabs by work activity), etc.

I could continue for a long time. But, really, even without all these fancy features, the simple fact that I can see all the tabs at once and I can read their titles is a game changer.

It took me a long time to accept the fact that the rest of the world doesn’t use vertical tabs. I still don’t understand why, but I’ve come to accept it as a mystery of life.

And while all of this is wonderful (to me), none of this explains why we are talking about Vivaldi.

The reason is that their new 6.1 version, released earlier this week, allows you to trick Microsoft and access Bing Chat, which is powered by GPT-4, without the need to download Microsoft Edge.

Yes. Microsoft doesn’t allow you to access Bing Chat from any browser other than its own. You have to download Edge.

If you are wondering if this is anti-competitive behavior, the answer is: I’m not a lawyer, but it certainly feels that way to me.

So, Vivaldi 6.1 spares me from downloading and using a secondary browser.

Wait a minute, Alessandro. Microsoft Edge has vertical tabs, too!

Yes, and kudos to Microsoft for having copied the most useful browser feature in the universe.

However:

- Edge comes with a lot of things that I profoundly dislike. Like the capability to inject ads into my browsing session.

- Edge is not even remotely close to Vivaldi in terms of customizations and features. In fact, it’s quite constraining in terms of how you can customize the one application that, arguably, most people use the most in their digital life.

- Edge is not as fast as Vivaldi.

So, not a chance.

Vivaldi is so good that I’d be happy to pay for it. And instead, it’s free. And now, I can use it to test the Bing Chat implementation of GPT-4 vs the OpenAI native experience.