- Intro

- How to keep busy during the holidays.

- What’s AI Doing for Companies Like Mine?

- Learn what Renaissance Hotels, EY, BlackRock, and Cali Group are doing with AI.

- A Chart to Look Smart

- End-of-year reports from PluralSight, Evident AI, and cnvrg.io give us a shower of charts on AI adoption trends and talent flows.

- Prompting

- Anthropic explains why Claude 2.1 failed the Needle-in-a-Haystack test. To fix the problem just use clever prompt engineering.

As you’ll read in this week’s Free Edition, Synthetic Work takes a quick break. Not because it’s winter holiday time, but because I have to fly to Italy to keynote a private event and lead an internal hackathon.

Both editions of Synthetic Work will reach your inbox as usual on December 29th.

While you wait, here are five things you can do with your Sage subscription:

- Read the archive with all 82 editions of Synthetic Work, which equals over 1,000 pages of content at this point. That should be enough to keep you busy during the holidays.

- Study the most popular AI enterprise use cases by reviewing 120+ early adopters tracked in the AI Adoption Tracker.

- Test our new custom GPTs: Synthetic Work Presentation Assistant and (Re)Search Assistant.

- Boost your productivity in 2024 by trying our tutorials on how to use AI for the most common use cases.

- Practice your prompt engineering skills with the HowToPrompt library.

Also, remember that you can access our Discord server to network with other Sage and Explorer members who do not want to spend time with their families.

Happy Holidays!

Alessandro

What we talk about here is not about what it could be, but about what is happening today.

Every organization adopting AI that is mentioned in this section is recorded in the AI Adoption Tracker.

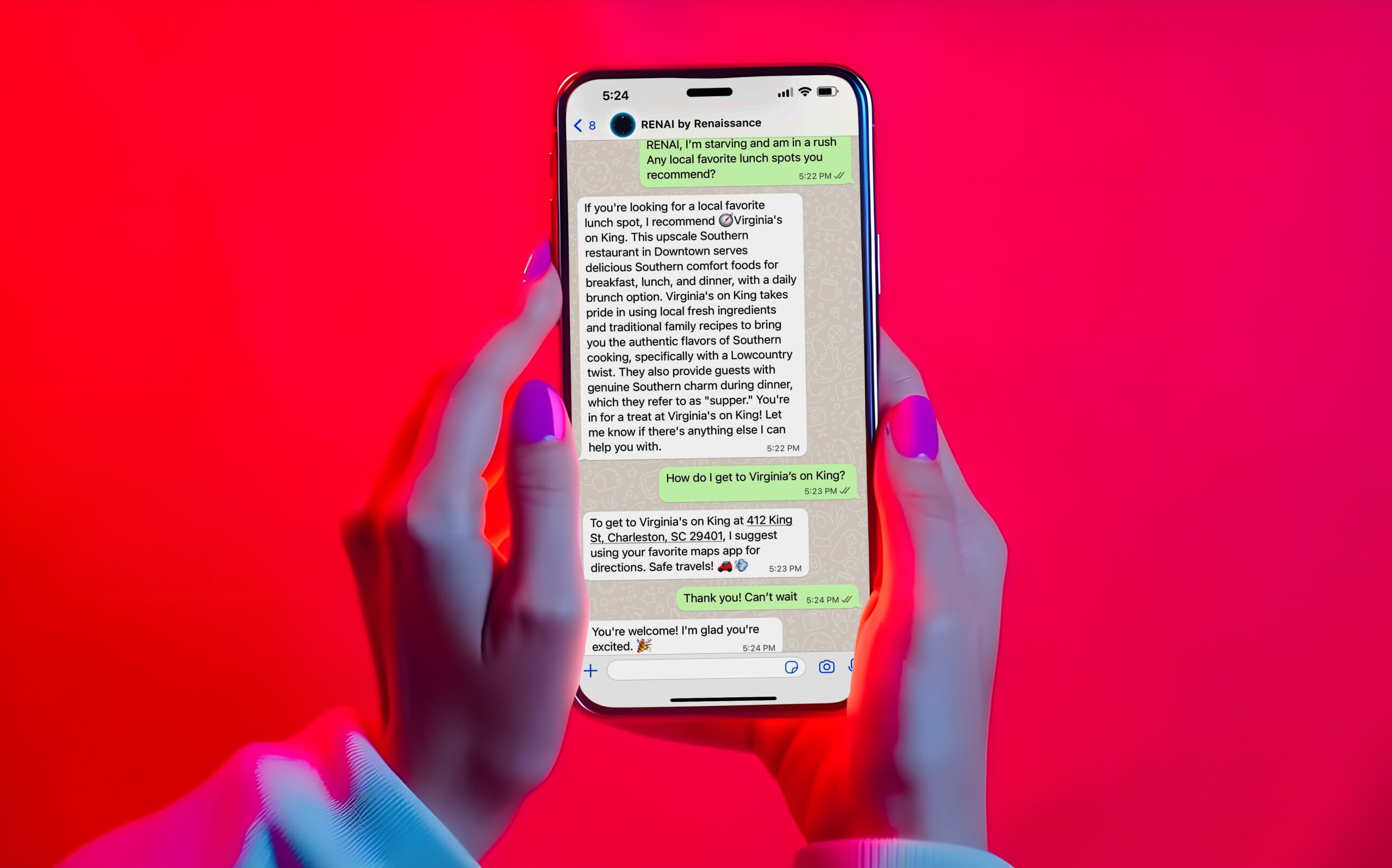

In the Hospitality industry, Renaissance Hotels is about to launch an AI-powered virtual concierge called Renai.

From the official press release:

Travelers staying at select Renaissance Hotels, part of Marriott Bonvoy’s extraordinary portfolio of over 30 hotel brands, will soon have instant access to vetted, insiders’ picks for vibey cocktail bars, under-the-radar attractions, top-rated breakfast spots and more through an exciting new virtual concierge service. Today the brand announced a pilot program for RENAI by Renaissance (pronounced “ren-A”), which stands for Renaissance Artificial Intelligence, and is akin to having a well-connected local who is available 24/7 all right from guests’ smartphones.

…

Renaissance Navigators have provided their expertise to train RENAI by Renaissance with their top picks, and these specific recommendations will always be designated by a compass emoji. It also leverages ChatGPT and reputable open-source outlets, which have contributed recommendations to a curated and constantly refreshed “black book” directory that is vetted by human Navigators. This means users can have confidence that the suggestions they see serve as a true reflection of the neighborhood.

…

Guests are now able to test out RENAI by Renaissance at The Lindy Renaissance Charleston Hotel, Renaissance Dallas at Plano Legacy West Hotel, and Renaissance Nashville Downtown.

…

For guests who may want to conduct their own research on local experiences before their trip even starts or get a head start while waiting in line for the concierge, they can simply scan a QR code to connect with RENAI by Renaissance via text message or WhatsApp and start a conversation. Guests will then receive a response to their requests with recommendations that have been vetted by Renaissance Navigators as well as identify special deals on restaurants, tours and more.

…

Following RENAI by Renaissance’s pilot period, Renaissance Hotels plans to expand the AI-powered concierge service more widely in 2024, including over 20 properties globally by March 2024. The full rollout of the program is projected to include more enhancements to the service including additional communication platforms such as Instagram as well as curated recommendations from neighborhood tastemakers such as musicians, DJs, artists, fashion designers, and more.

There is a reason why I never quote press releases. Today’s exception is a painful reminder of why I don’t do it.

That said, this is another application of generative AI that you should expect every hotel in the world will copy. And not just hotel chains. I would assume that every major credit card company will start converting a portion of their concierge staff into AI-powered virtual assistants.

In the Financial Services industry, EY started using AI to automatically identify audit frauds.

Robert Wright, reporting for Financial Times:

According to Kath Barrow, EY’s UK and Ireland assurance managing partner, the new system detected suspicious activity at two of the first 10 companies checked. The clients subsequently confirmed that both cases had been frauds.

This early success illustrates why some in the industry believe AI has great potential to improve audit quality and reduce workloads. The ability of AI powered systems to ingest and analyse vast quantities of data could, they hope, provide a powerful new tool for alerting auditors to signs of wrongdoing and other problems.

Some audit firms are sceptical that AI systems can be fed enough high quality information to detect the multiple different potential forms of fraud reliably. There are also some concerns about data privacy, if auditors are using confidential client information to develop AI.

…

Regulators are likely to have the final say over how the technology can be deployed. Jason Bradley, head of assurance technology for the UK’s Financial Reporting Council, the audit watchdog, said AI presented opportunities to “support improved audit quality and efficiency” if used appropriately. But he warned that firms would need the expertise to ensure systems worked to the right standards. “As AI usage grows, auditors must have the skills to critique AI systems, ensuring the use of outputs is accurate and that they are able to deploy tools in a standards-compliant manner,” he said.

…

The technology could be particularly helpful if it reduces auditor workloads. Firms across the world are struggling to train and recruit staff. It could also help raise standards: in recent years auditors have missed serious financial problems that have caused the collapse of businesses including outsourcer Carillion, retailer BHS and café chain Patisserie Valerie.

…

EY’s experiment, according to Barrow, used a machine-learning tool that had been trained on “lots and lots of fraud schemes”, drawn from both publicly available information and past cases where the firm had been involved. While existing, widely used software looks for suspicious transactions, EY said its AI-assisted system was more sophisticated. It has been trained to look for the transactions typically used to cover up frauds, as well as the suspicious transactions themselves. It detected the two fraud schemes at the 10 initial trial clients because there had been similar patterns in the training data, the firm said.

EY’s competitors Deloitte and KPMG have criticized this approach, suggesting that frauds are very sophisticated and unique, and that AI cannot be trained to detect them.

But that’s a nonsensical argument. Surely, having an AI that spots the clumsiest frauds is better than having no AI at all. Also, as usual, people insist on focusing on what AI can do today, ignoring the fact that we are on a vertical progression curve such that just 18 months ago we didn’t have even the intuition of the technology we are debating today.

Accounting experts are not equipped to comment on the potential of emerging technologies or their progression curves.

If your strategy is based on the here and now, you’ll soon need to prepare a new strategy.

In the Financial Services industry, BlackRock is about to launch an AI assistant that integrates with their portfolio management solution Aladdin.

Brooke Masters, reporting for Financial Times:

BlackRock plans to roll out generative artificial intelligence tools to clients in January as part of a larger drive to use the technology to boost productivity, the $9.1tn asset manager told employees on Wednesday.

The world’s largest money manager said in a memo to staff that it has used generative AI to construct a “co-pilot” for its Aladdin and eFront risk management systems. Clients will be able to use BlackRock’s large language model technology to help them extract information from Aladdin.

…

“GenAI will change how people interact with technology. It will improve our productivity and enhance the great work we are already doing. GenAI will also likely change our clients’ expectations around the frequency, timeliness, and simplicity of our interactions,” according to the memo from Rob Goldstein, chief operating officer; Kfir Godrich, chief innovation officer; and Lance Braunstein, head of Aladdin Engineering. BlackRock is also building tools to help its investment professionals gather financial and other data for research reports and investment proposals, as well as a language translator, according to the memo. Additionally, in January, it will start deploying Microsoft’s AI add-on to Office 365 productivity software across the company.

…

the AI would be producing “first drafts” that must go through normal quality control, and all data would remain inside BlackRock’s “walled garden” rather than being shared with users of open access generative AI programmes

Once again, heavily regulated industries are implementing AI at breakneck speed.

Bonus story:

In the Foodservice industry, Cali Group is about to open the world’s first fully autonomous, AI-powered restaurant in California.

From the official press release:

Cali Group, a holding company using technology to transform the restaurant and retail industries, Miso Robotics, creator of Flippy (the world’s first AI-powered robotic fry station), and PopID, a technology company simplifying ordering and payments using biometrics, announced today that they are soon opening CaliExpress by Flippy, the world’s first fully autonomous restaurant.

Utilizing the most advanced systems in food technology, both grill and fry stations are fully automated, powered by proprietary leading-edge artificial intelligence and robotics. Guests will watch their food being cooked robotically after checking in with their PopID accounts on self-ordering kiosks to get personalized order recommendations and make easy and fast payments.

The new CaliExpress by Flippy restaurant is located in a prime retail location in Pasadena, California on the northwest corner of Green Street and Madison Avenue at 561 E. Green St.

…

Flippy, the famous robotic fry station, will serve up crispy, hot fries made from top grade potatoes that are always cooked to exact times. The menu is very simple, comprising burgers, cheeseburgers, and french fries.

…

The CaliExpress by Flippy kitchen can be run by a much smaller crew, in a less stressful environment, than competing restaurants — while also providing above average wages.

…

The CaliExpress by Flippy location will also be a pseudo-museum experience presented by Miso Robotics. Including dancing robot arms from retired Flippy units, experimental 3D-printed artifacts from past development, photographic displays, and much more, the space is designed to serve noteworthy food, plus inspire the next generation of kitchen AI and automation entrepreneurs.

Highlighting restaurant crew size and wages in a launch press release is an odd move. But, unquestionably, journalists will ask about the impact of automated restaurants on employment in the Foodservice industry. Assuming this thing doesn’t stay perennially broken, like the infamous ice cream machines at McDonald’s.

So, perhaps, this is an attempt to get control of the narrative.

You won’t believe that people would fall for it, but they do. Boy, they do.

So this is a section dedicated to making me popular.

Usually, we use this section of the Splendid Edition to discuss a chart coming from cutting-edge research. However, I realize that some of you may be also interested in various types of market trends. So, given that we are approaching the end of the year, let’s focus on that kind of content.

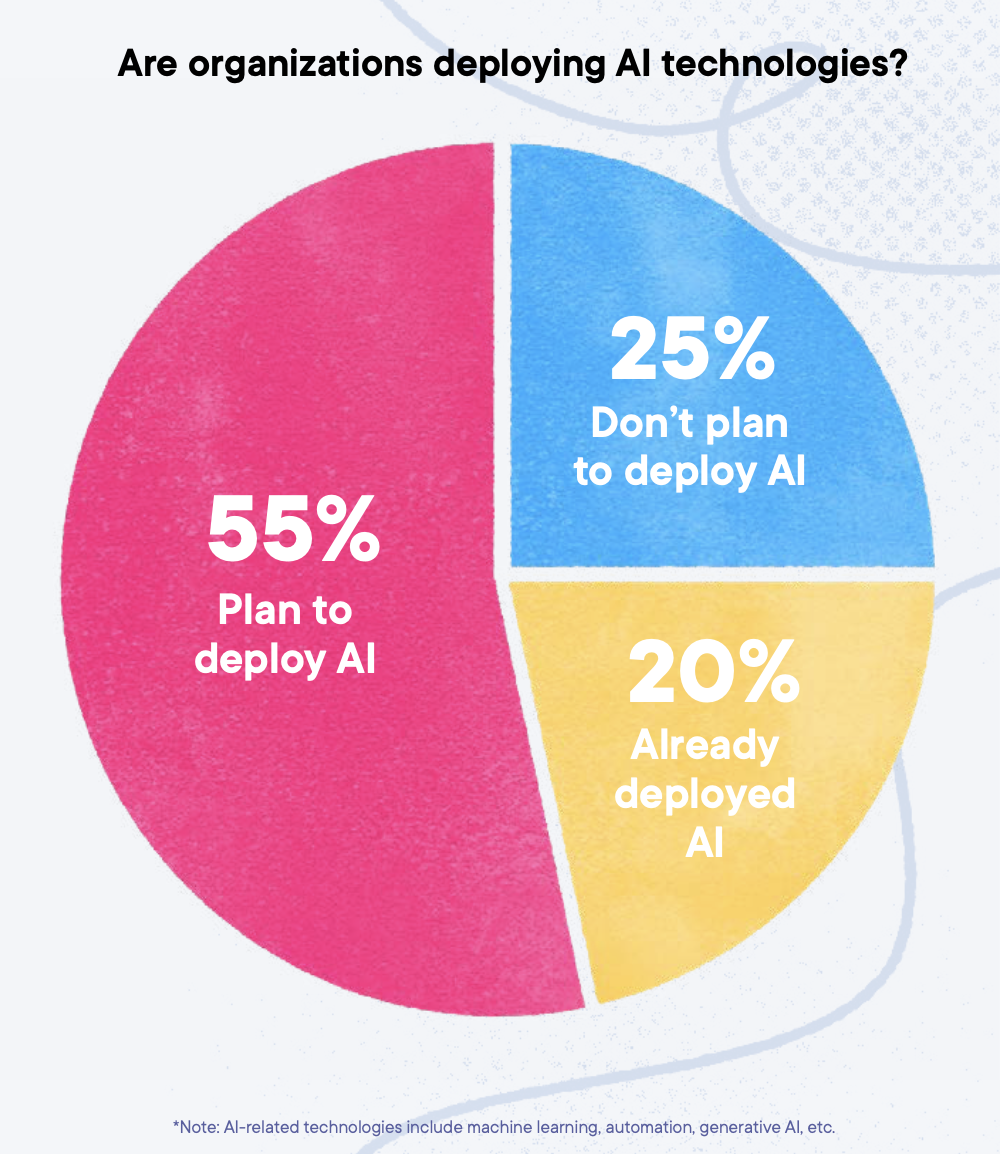

Let’s start with the just-published Pluralsight AI skills report.

The company surveyed 1,200 executives and IT professionals (50% for each category, 58% from the US and 42% from the UK) to understand the impact of AI on their organizations and how they are preparing for it.

According to PluralSight, most organizations are still thinking about it:

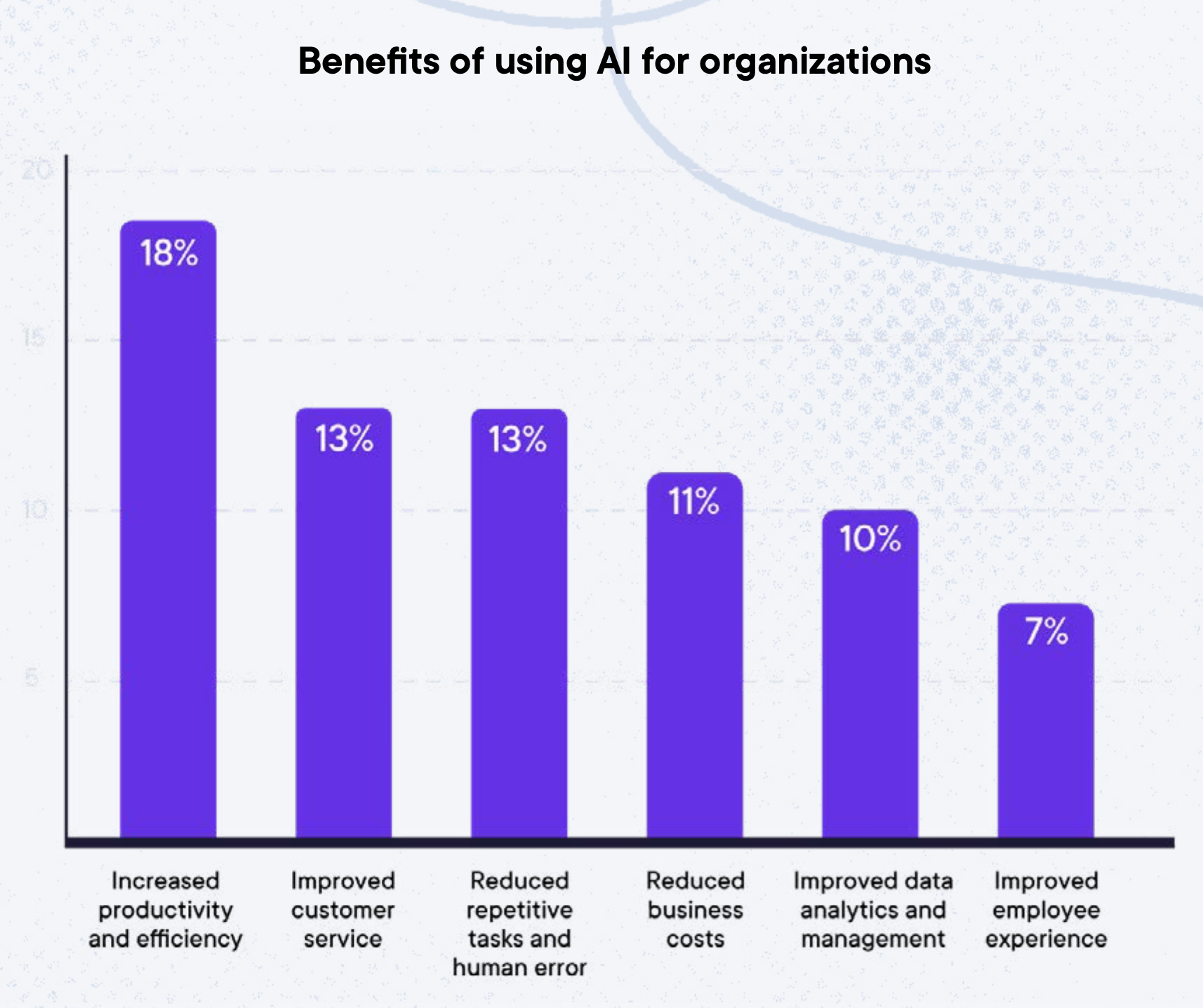

Surprisingly, and against everything Synthetic Work documented in the last 10 months, when the respondents were asked about the top reasons to deploy AI, “cost reduction” came only fourth:

In these months, we also documented how companies are getting more careful in admitting the real reasons why they adopt AI, fearing employee backlash and reputational damage. So, if people have started understanding that AI is not about cutting costs, all the better, but don’t count on it.

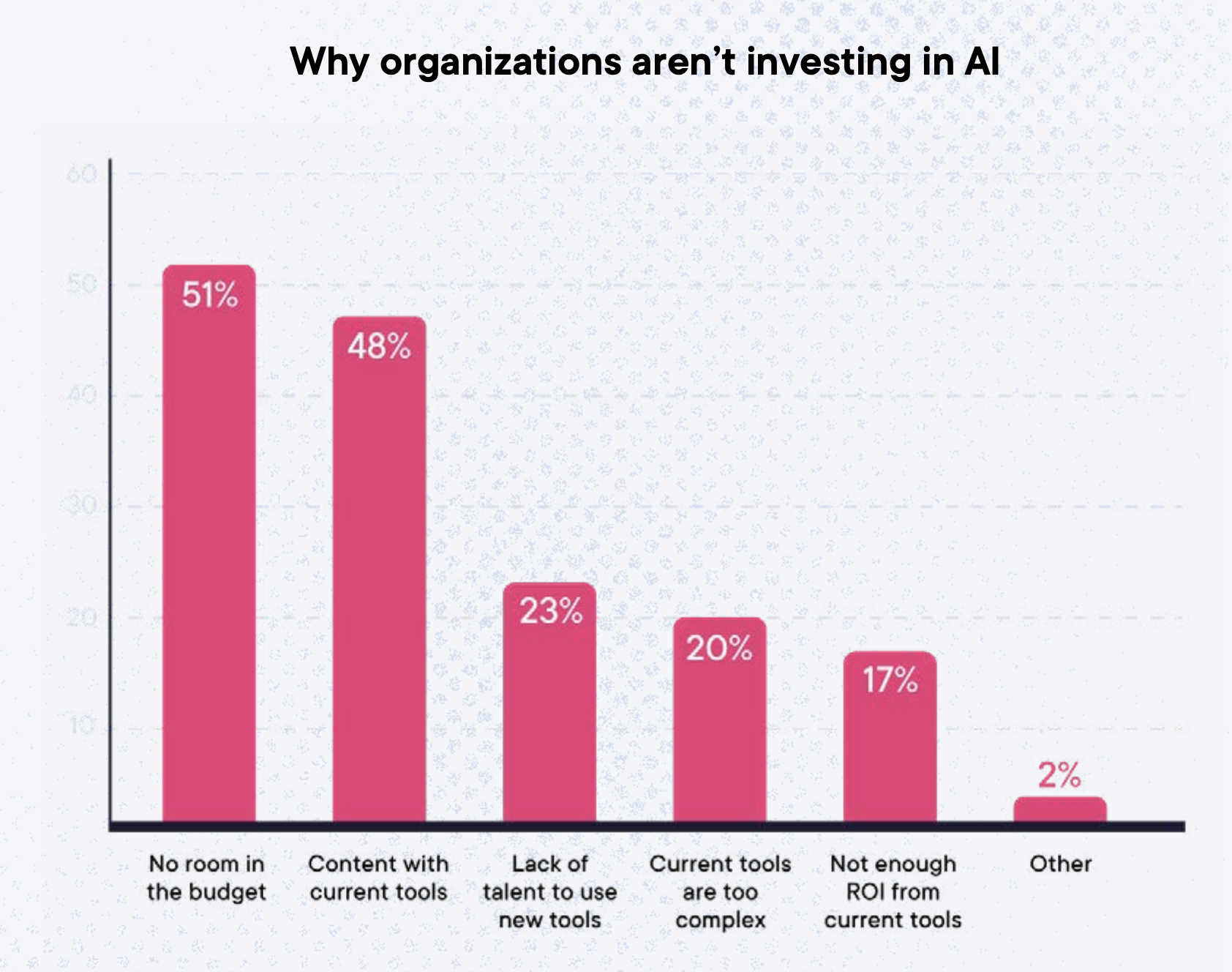

When it’s time to discuss the reasons why companies have not adopted AI yet, the top answer is always the same: no budget.

The second most common answer, though, is interesting: “content with current tools”.

Here there are two possibilities: either people are ignoring the enormous potential of AI and how much they will fall behind by sticking with their existing tools, or they have tried AI-powered solutions and were underwhelmed.

The latter hypothesis fits a narrative I’ve seen first-hand many times since I started writing this newsletter: people have tried GPT-3.5-Turbo, which is the only model you can use with the free version of ChatGPT, and they went away thinking “Is that it?”

That first impression prevented them from ever trying GPT-4, GPT-4 Code Interpreter, or even the new GPT-4-Turbo, which are dramatically better models, giving the feeling of “intelligence” in the term “artificial intelligence” for the first time ever.

On top of this, it’s undeniable that an ocean of startups out there is deploying AI without adding any substantial value to their customers. Most of them are using AI as a gimmick and that contributes to building a general skepticism.

This is also why I use the Splendid Edition to mention only those products and services where AI is making a real difference, testing them first-hand before I issue any recommendation.

Back to the survey.

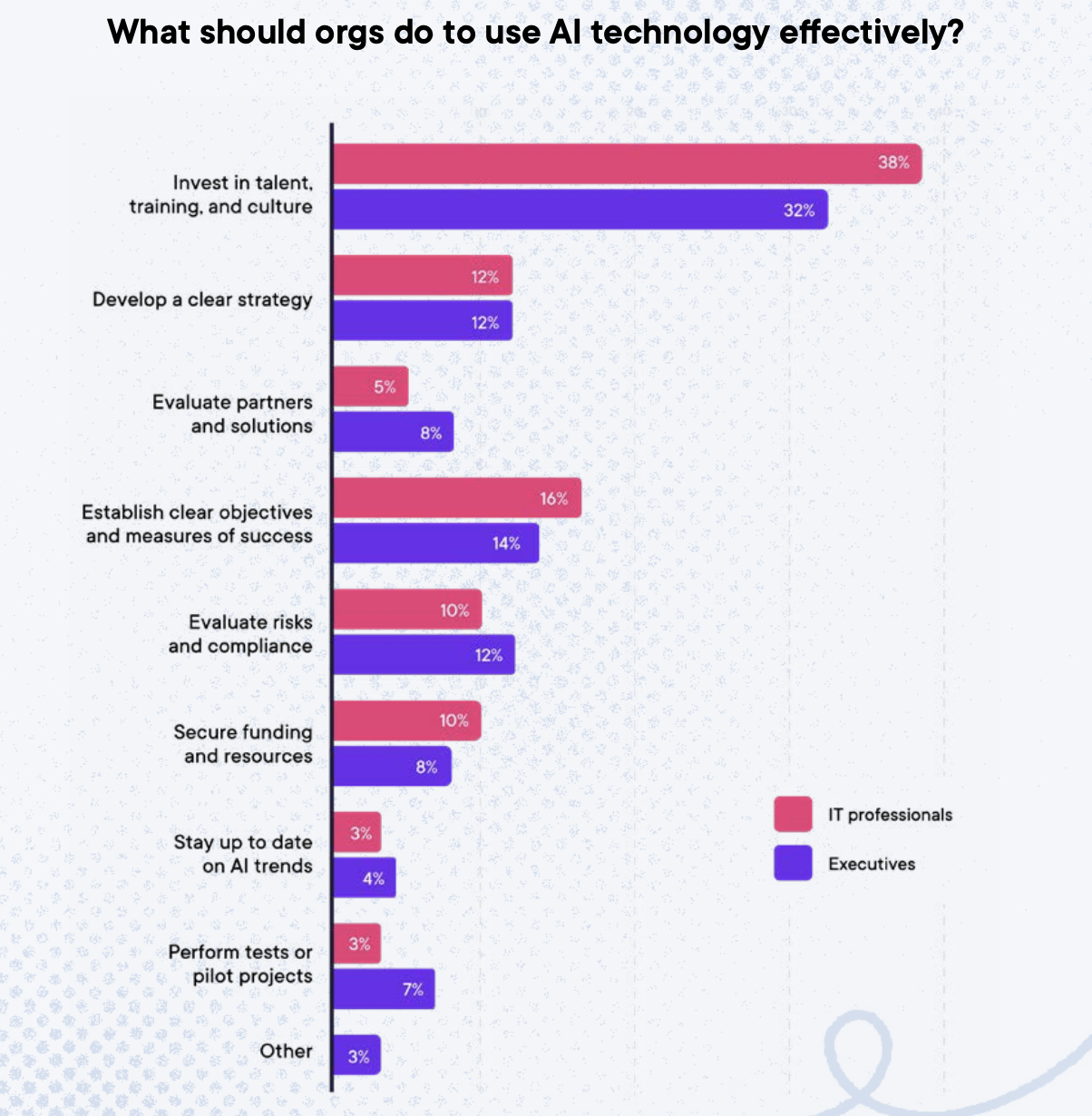

PluralSight also asked respondents what they think their company should do to get the most out of their AI adoption efforts:

Obviously, PluralSight sells courses, so it goes without saying that a survey commissioned by them would end up highlighting the lack of skills as the main obstacle to AI adoption.

Nonetheless, they are experts in corporate training, so theirs is a perspective worth considering. And that perspective is 100% aligned with what we’ve seen happening in previous attempts to adopt emerging technologies.

In particular, the survey highlights a couple of barriers to AI upskilling that I’ve seen in past professional experiences, exactly as described, and precisely about AI:

- Organizations adopt technology first and train employees later – 80% of executives and 72% of IT practitioners agree their organization often invests in new technology without considering the training employees need to use it.

- Organizations believe they can outsource AI skills – 91% of executives are at least somewhat likely to replace or outsource talent to successfully deploy AI initiatives.

So, I don’t think there’s a particular bias, at least in this portion of the survey.

The first point is a classic, and not just about AI. Not much to say about it.

The second point has acquired a new meaning in the AI era, and it proves to be a fatal flaw in any AI strategy. Business leaders who think that they can outsource AI talent do not have a clear view of the job market dynamics at play across the globe, both in terms of talent scarcity across geographies and in terms of the quality of the talent pool made available by the outsourcers.

We discussed these points many times in various issues of Synthetic Work, and we’ll keep doing it in the future.

Somewhat connected to this is the fact that only 12% of respondents thought that “Develop a clear strategy” would be a must-do. If outsourcing is the strategy, many will be in for a rude awakening.

Now.

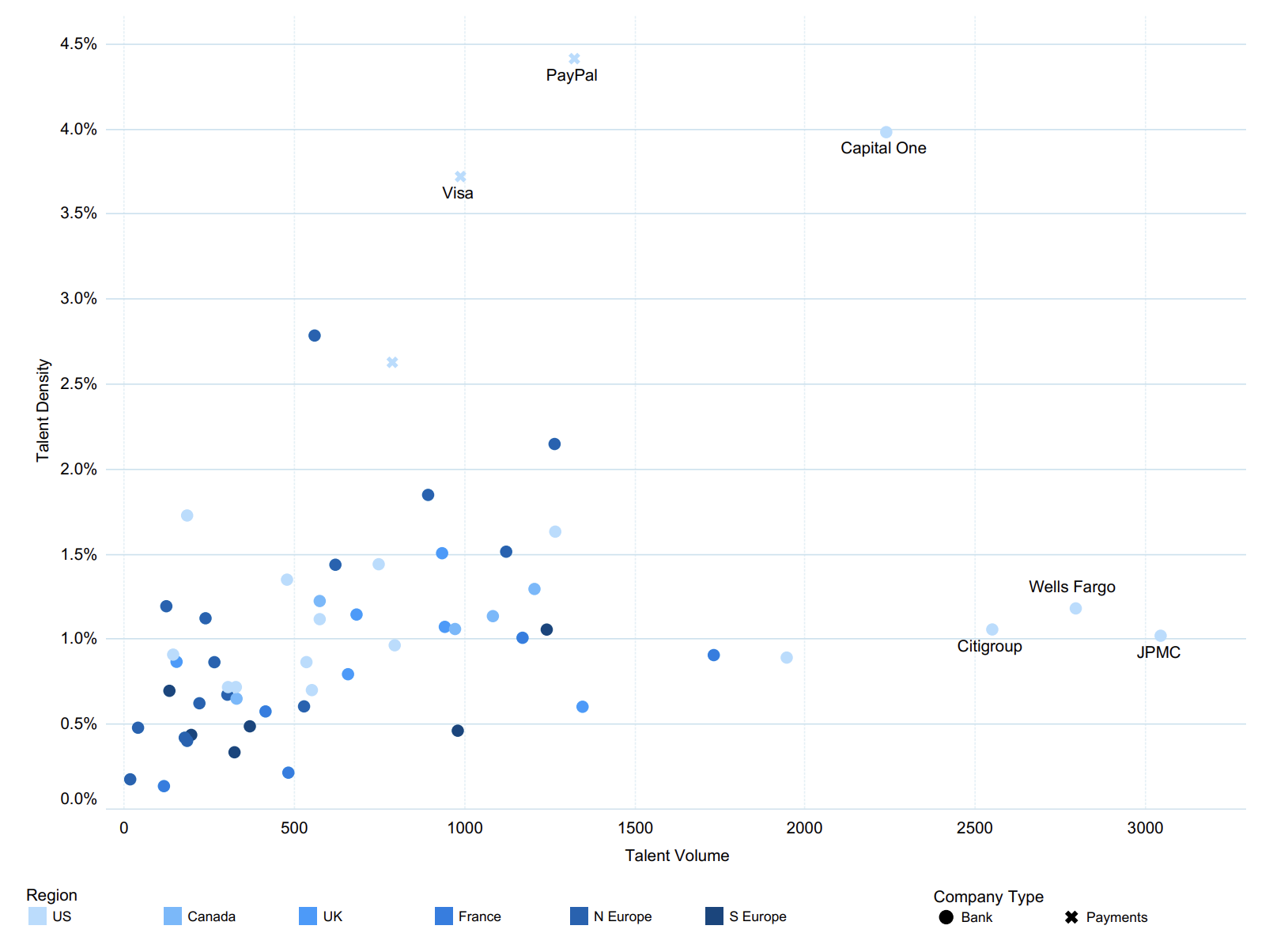

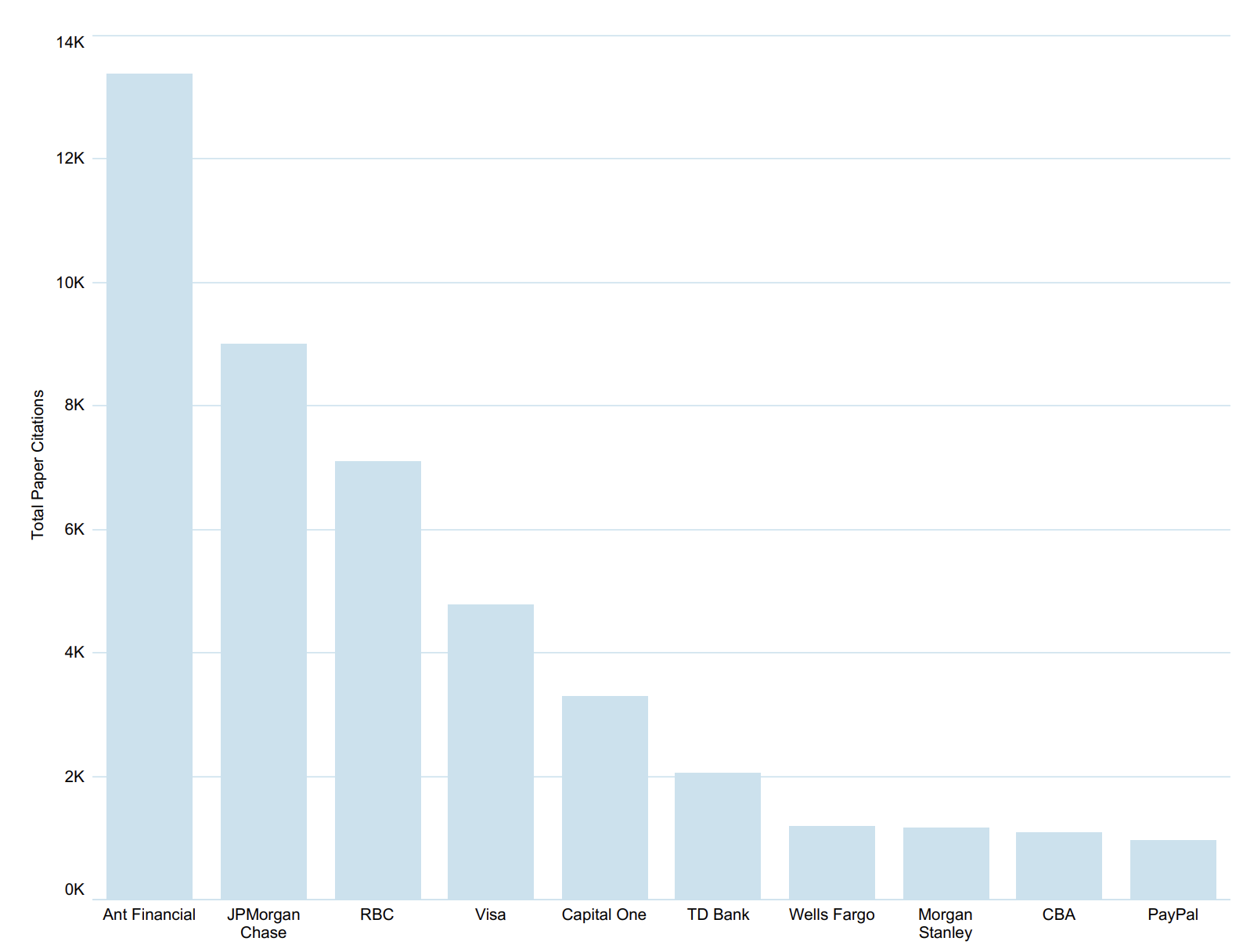

Given that we are talking about AI talents and outsourcing, let’s look at another report, this time from Evident AI, a company dedicated to benchmarking the adoption of artificial intelligence in the Financial Services industry.

Their new Talent Report measures the distribution of AI talents across the top financial institutions around the world and the flow of talents across competitors.

The winning banks of this race currently are JP Morgan Chase, which has the highest number of AI-skilled people in the industry, and CapitalOne, which has the highest number of AI-skilled people compared to the size of the workforce.

Only PayPal beats CapitalOne in terms of AI talent density, but PayPal is a payment provider that was born as a technology company, so it’s not that surprising.

Any software vendor on the planet that is trying to sell AI-oriented solutions should be aware of this data and tailor their marketing and sales strategy accordingly.

These organizations are fiercely competing to hire AI talents away from each other. So acquisition and retention are critical aspects of their AI strategy.

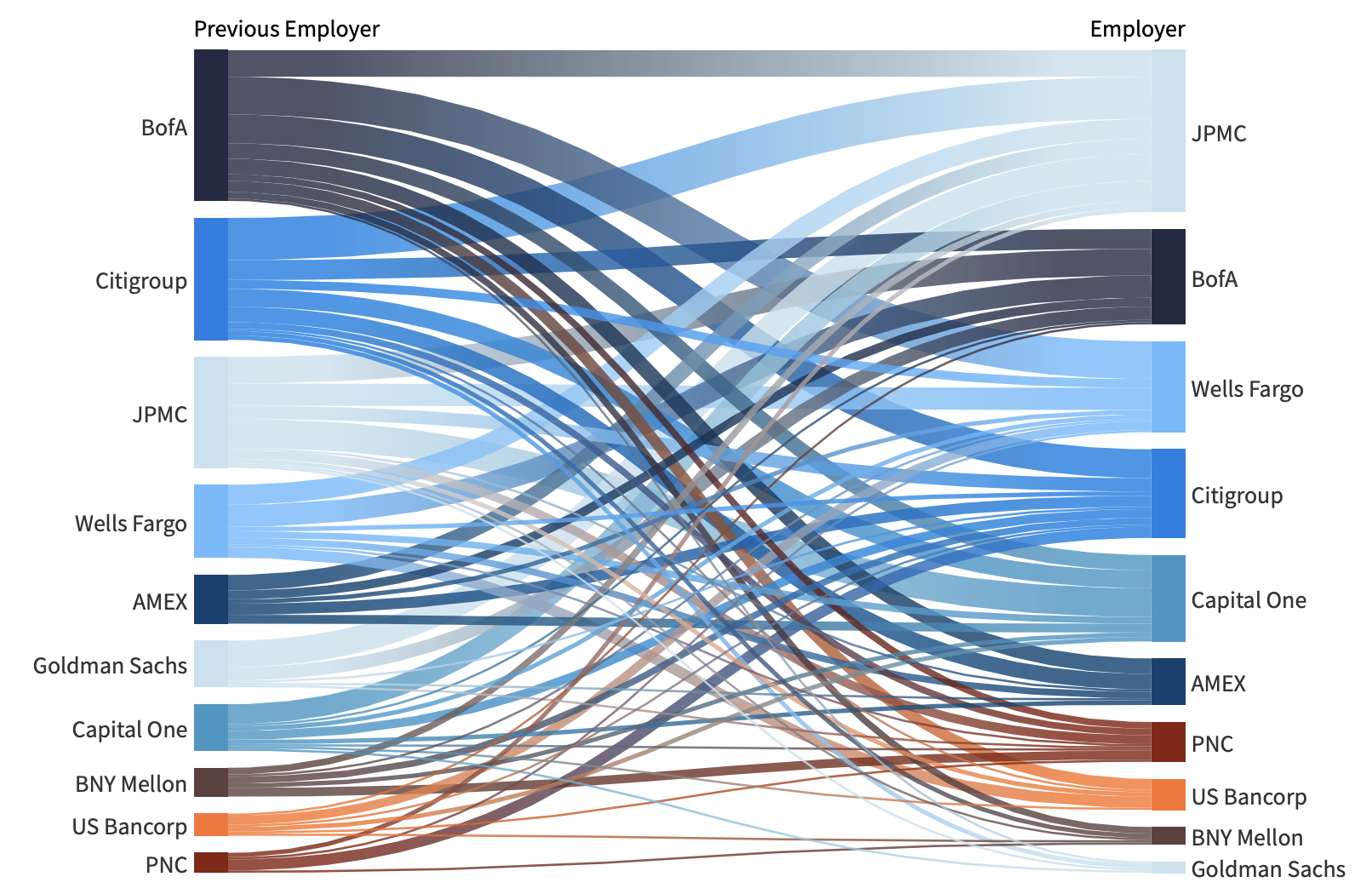

Let’s start with the hiring part.

As we mentioned in the Free Edition, Goldman Sachs is the one that is losing the most talent at the moment, possibly due to a very conservative approach to risk-taking.

As I wrote in this week’s Free Edition:

People want to see that their work matters.

People want to see that they are not just there to fill slide decks until they are numb.

People want to see that they can make an impact.

And when “people” means “AI researchers,” people also want to publish research in scientific journals. Allowing employees to allocate time for it, and promoting, even financially, the publishing of research papers, and patent filing, increases the chances of retaining AI talents.

A key aspect to highlight is that these financial organizations, once again at the forefront of AI adoption, are not outsourcing their AI talent.

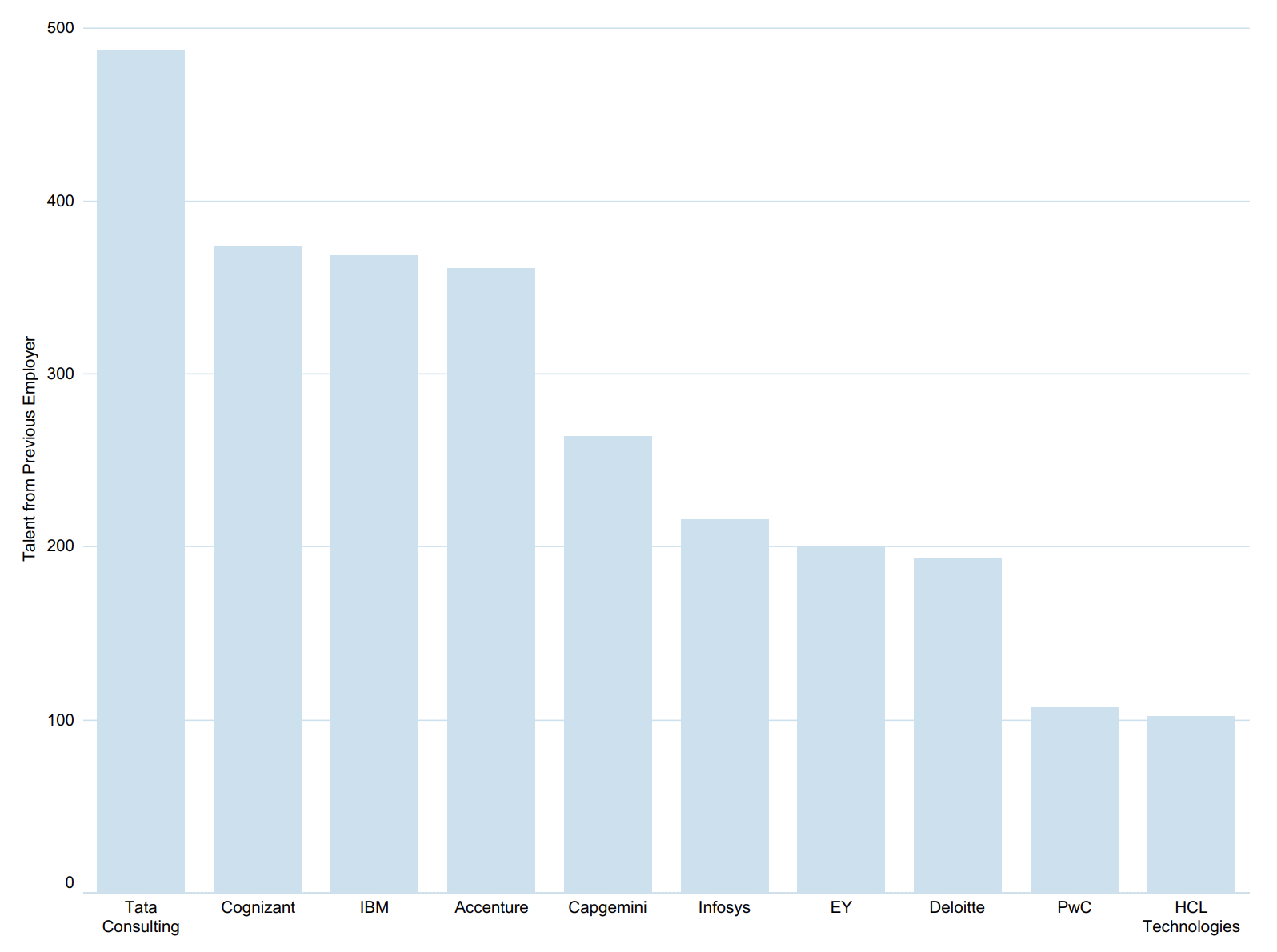

If anything, when they don’t poach AI talent from each other, they poach it from outsourcers:

This dynamic, together with the massive hiring of AI talents by the top tech companies in the world, makes the outsourcing strategy we discussed above, when reviewing the PluralSight survey, even more dangerous.

There are very few experts that remain available on the market for outsourcers to hire, and they are concentrated in very few geographies.

And when an outsourcer struggles to find the talent necessary to support its clients, it starts cutting corners. And large language models make it very easy to cut corners.

Ok.

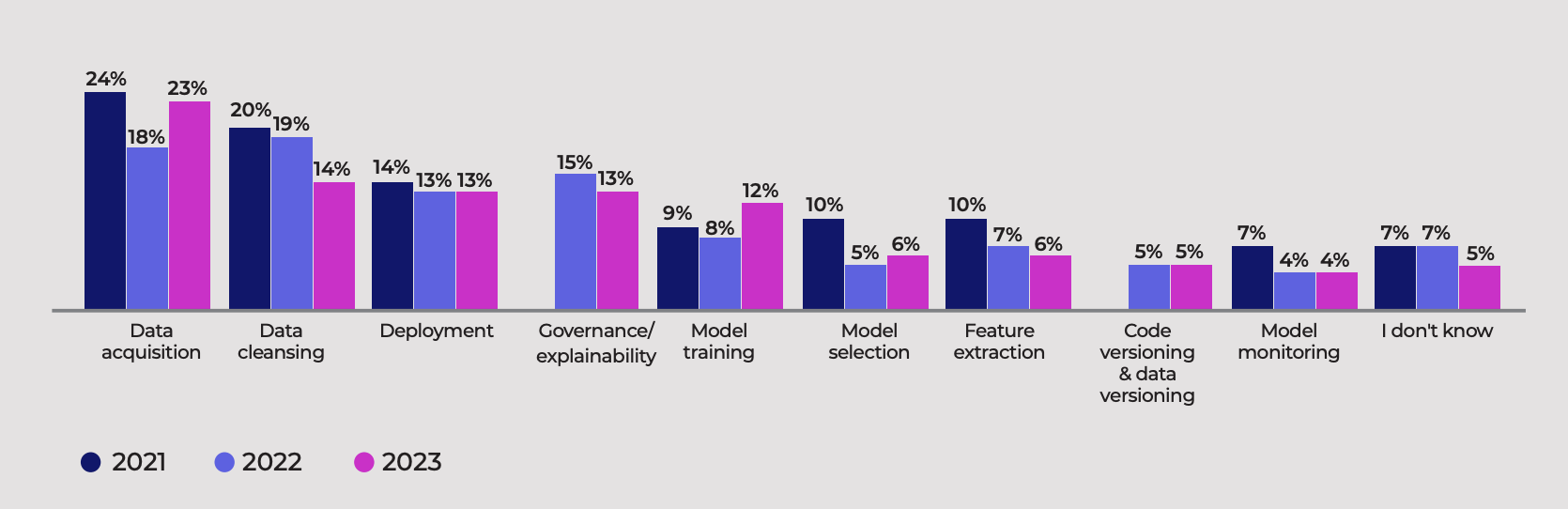

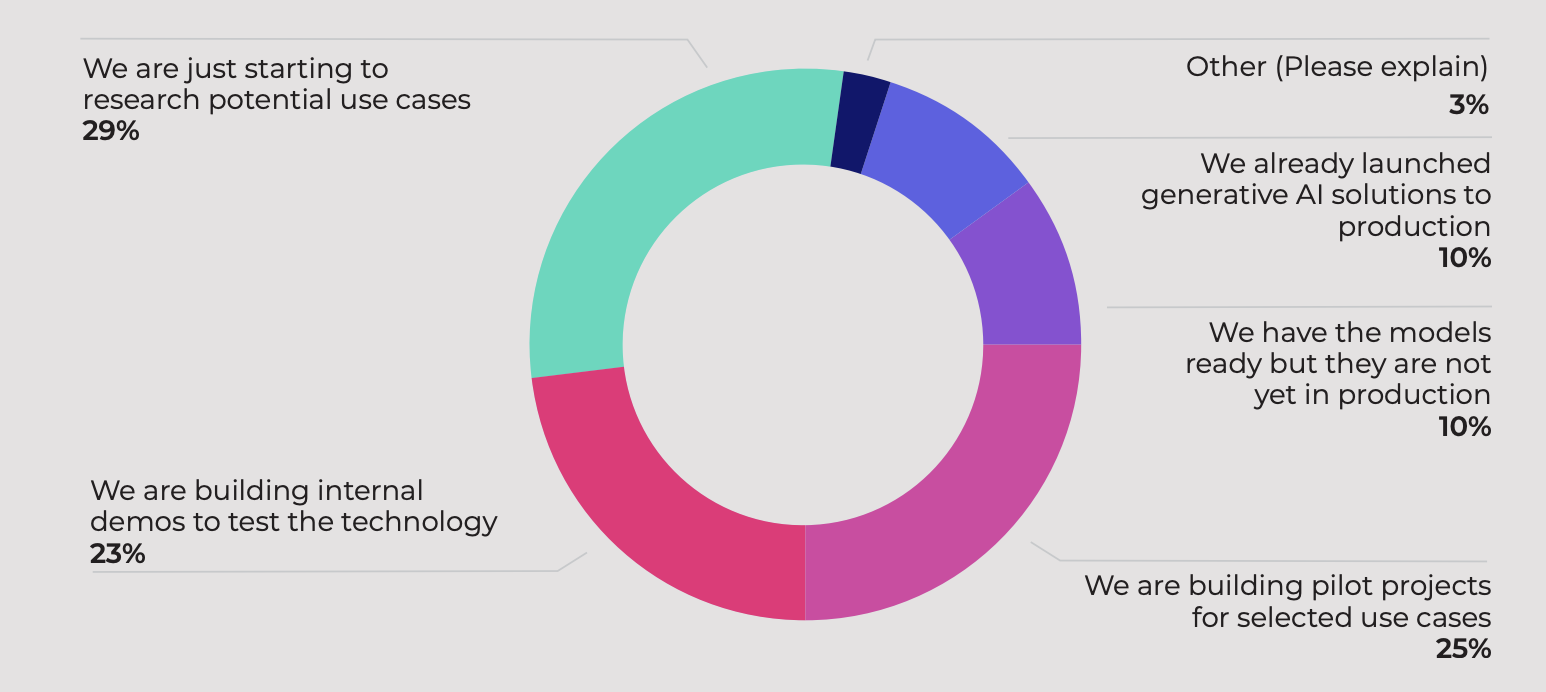

Let’s close this unusual A Chart to Look Smart section by expanding on a theme we touched on at the beginning: AI adoption plans that get executed.

To help us, there is cnvrg.io, a startup spun out of Intel, which offers “an operating system for machine learning built to actualize the AI-driven enterprise.” Whatever that means.

To compile its MLInsider 2023 report, the company recently surveyed 430 participants, 51% of whom work in companies with 600 employees or more.

28% of all respondents were data scientists, 23% were DevOps engineers, and 17% were software developers. Only 10% were business professionals.

This audience of practitioners was asked to describe the challenges they face in their AI projects and, unsurprisingly, data preparation remains the hardest part of the job:

More importantly, they were asked to describe how mature their use of generative AI was in the organization, and it’s clear that most of them are far from being ready to deploy anything in production:

Most of these organizations are facing their challenges despite counting on centralized AI solutions offered by AI providers.

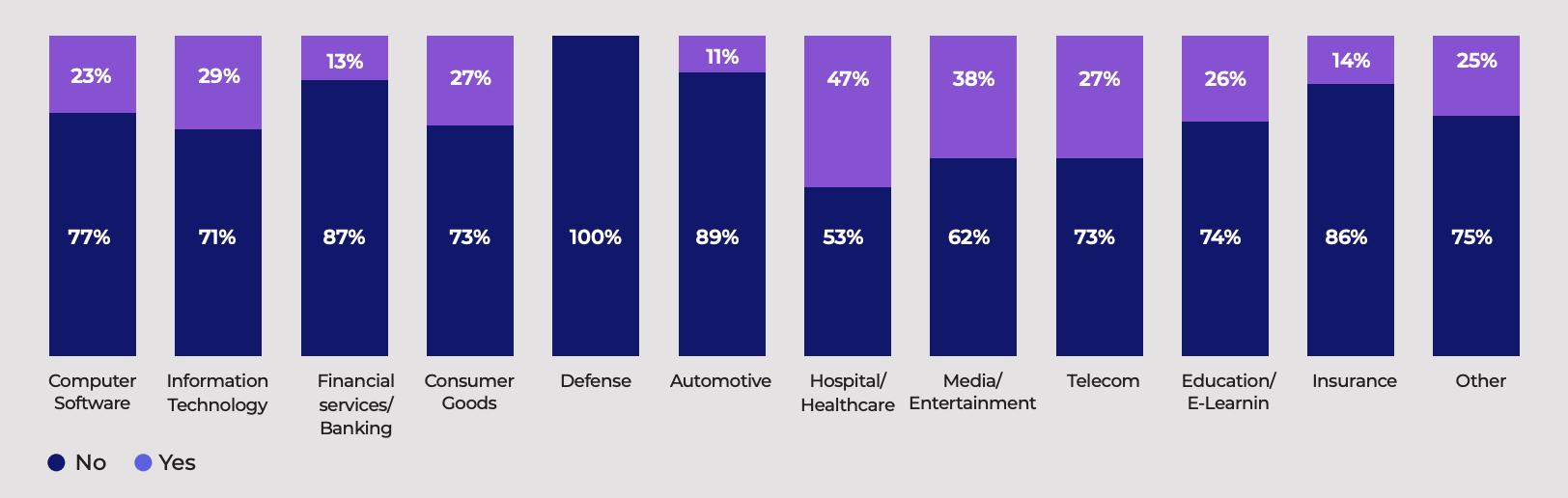

When asked if they are building their own AI solutions internally, leveraging open access large language models, very few answered yes, and in very few industries:

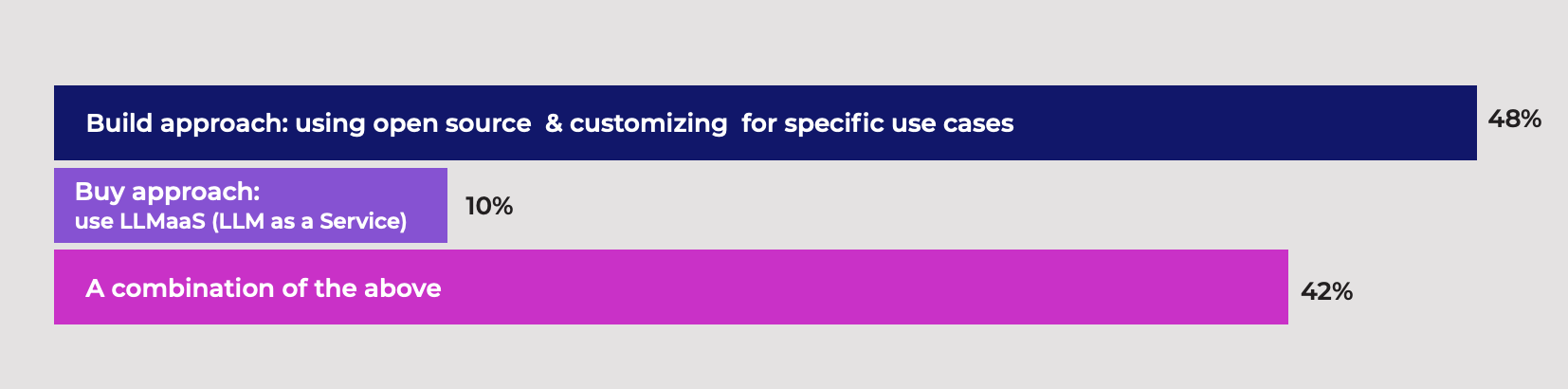

As we have seen for almost two decades with cloud computing, companies mix and match centralized AI solutions with open access LLMs depending on the use case and the most relevant aspect for each one: cost, accuracy, speed, etc.

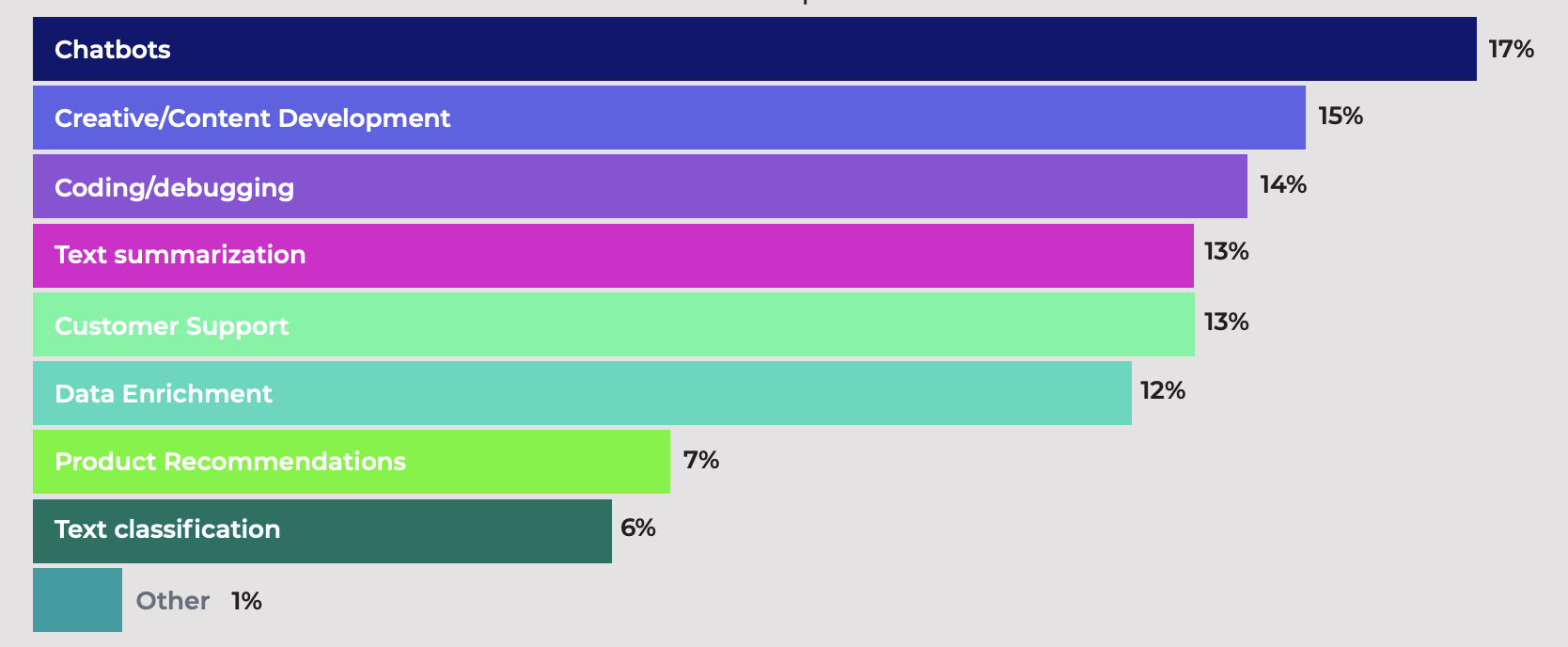

In a sense, the most important chart in this last report is the one that describes the top use cases for generative AI in these companies:

Anybody reading a chart like this would understand absolutely nothing about how AI is being used around the world. And the reason is that the level of abstraction of these answers is too high to be meaningful.

This is why Synthetic Work’s AI Adoption Tracker is so important. In it, you’ll find 150 use cases that tell you exactly how, say, summarization is being used by a law firm or a hospital.

Not only does that give you a much better understanding of the state of the union, but it also inspires you to look beyond the obvious scenarios where your company will end up competing with everybody else in a race to the bottom.

OK, last chart.

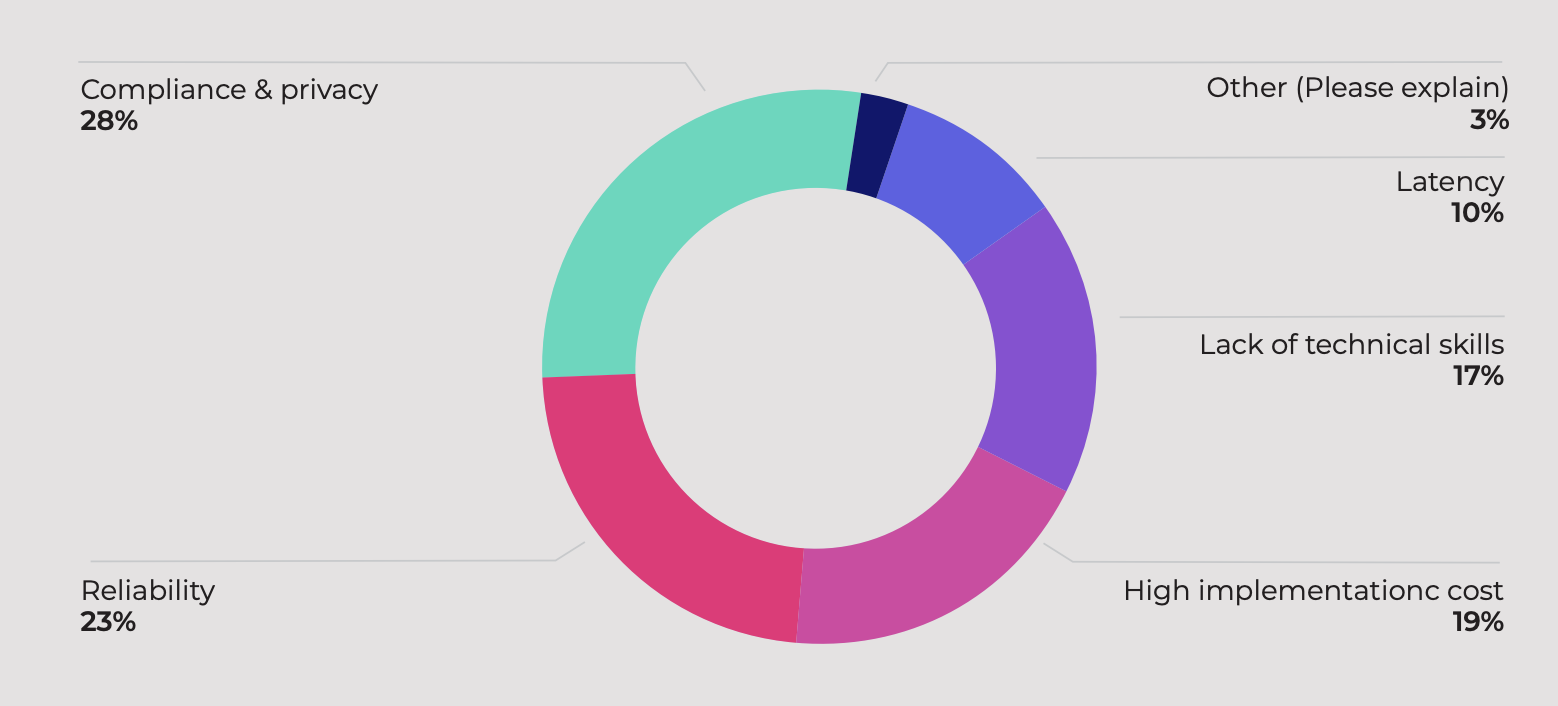

When asked about the top concerns related to their AI projects, the respondents mentioned the usual suspects: compliance, reliability, and cost.

There.

Now you have enough data to support whatever narrative you want to push in your organization in 2024. Happy lobbying!

…actually, wait.

Before you go, a final question to think about:

How many of these survey responses were generated by synthetic users provided by absurd market analysis companies like the ones we have seen in Issue #9 – If You Have Experience With Technologies Like AGI, You Are Encouraged To Apply or in Issue #19 – Let’s collapse some models, there’s money to be made?

None, of course.

Of course not.

Before you start reading this section, it's mandatory that you roll your eyes at the word "engineering" in "prompt engineering".

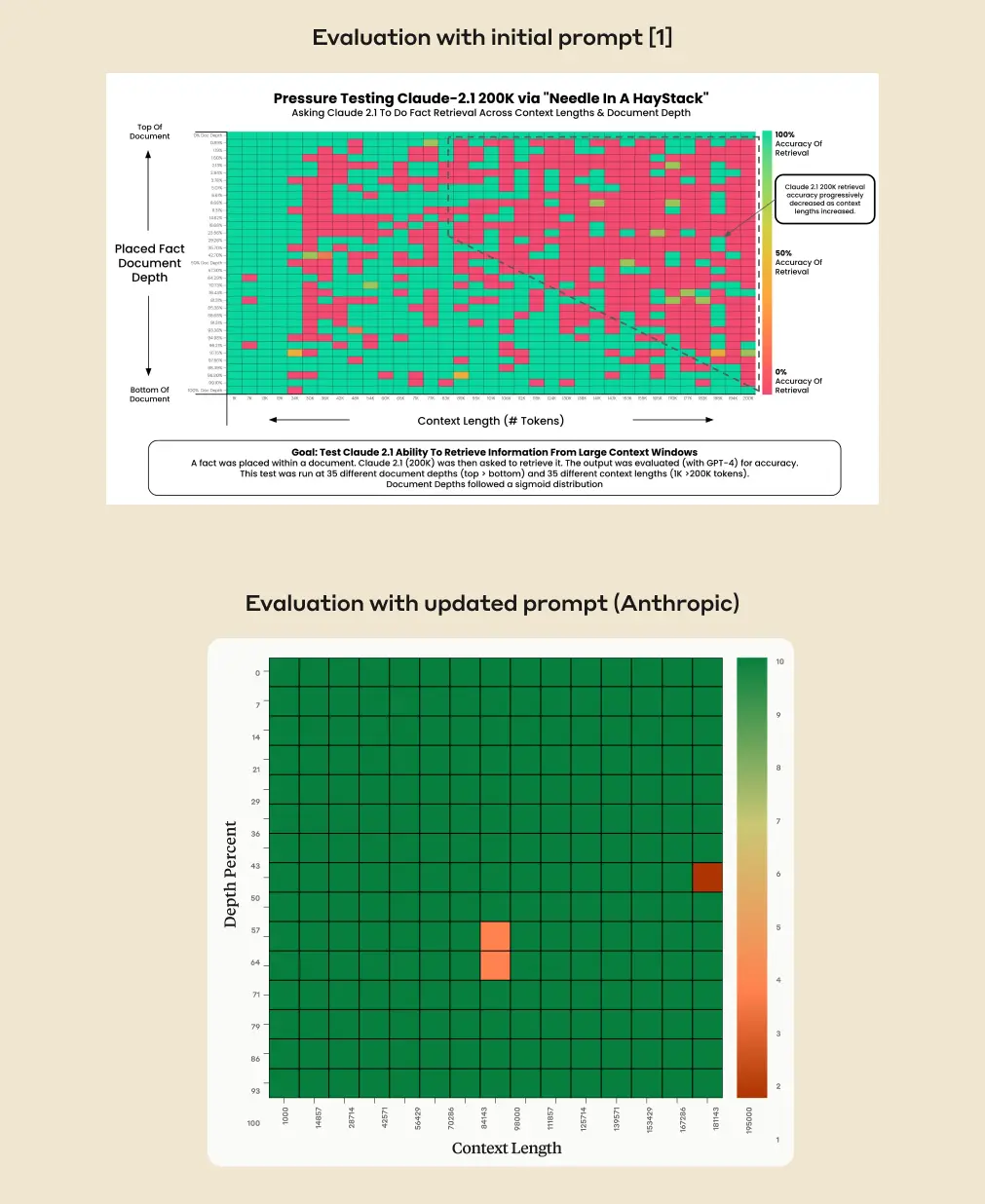

Do you remember the embarrassing comparison between the new Claude 2.1 and GPT-4-Turbo that we discussed in Issue #39 – The Balance Scale?

Independent analysis demonstrated that Claude 2.1 was absolutely terrible at retrieving information in the middle of its enormous 200k token context window, while GPT-4-Turbo was almost flawless.

The test, to refresh your memory, consisted of injecting a random sentence in a random position of a very long document and seeing if the model could retrieve the information necessary to answer a question about that sentence.
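If you want to picture the mechanics, here is a minimal sketch of how such a test could be assembled. It is not the code used in the independent analysis: the function names, the `<document>` wrapper, and the scoring rule (a simple keyword match) are all assumptions made for illustration, and the call to the actual model is left out on purpose.

```python
import random


def insert_needle(haystack_sentences, needle, position=None, rng=None):
    """Return a copy of the sentences with the needle inserted, plus its index.

    If no position is given, a random one is picked (hypothetical helper).
    """
    rng = rng or random.Random()
    if position is None:
        position = rng.randint(0, len(haystack_sentences))
    sentences = list(haystack_sentences)
    sentences.insert(position, needle)
    return sentences, position


def build_prompt(sentences, question):
    """Join the sentences into one long document and append the question."""
    document = " ".join(sentences)
    return f"<document>\n{document}\n</document>\n\n{question}"


def is_retrieved(answer, expected_keyword):
    """Naive scoring assumption: the answer counts if it mentions the keyword."""
    return expected_keyword.lower() in answer.lower()
```

In a real run, you would send the output of `build_prompt` to the model, pass its answer to `is_retrieved`, and repeat across many needle positions and document lengths to see where retrieval breaks down.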

Anthropic, the maker of Claude 2.1, didn’t quite appreciate the outcome of the test and has prepared a rebuttal to explain the behavior.

From the official blog post:

Claude 2.1 is trained on a mix of data aimed at reducing inaccuracies. This includes not answering a question based on a document if it doesn’t contain enough information to justify that answer. We believe that, either as a result of general or task-specific data aimed at reducing such inaccuracies, the model is less likely to answer questions based on an out of place sentence embedded in a broader context.

Claude doesn’t seem to show the same degree of reluctance if we ask a question about a sentence that was in the long document to begin with and is therefore not out of place.

…

What can users do if Claude is reluctant to respond to a long context retrieval question? We’ve found that a minor prompt update produces very different outcomes in cases where Claude is capable of giving an answer, but is hesitant to do so. When running the same evaluation internally, adding just one sentence to the prompt resulted in near complete fidelity throughout Claude 2.1’s 200K context window.

…

We achieved significantly better results on the same evaluation by adding the sentence “Here is the most relevant sentence in the context:” to the start of Claude’s response. This was enough to raise Claude 2.1’s score from 27% to 98% on the original evaluation.

…

Essentially, by directing the model to look for relevant sentences first, the prompt overrides Claude’s reluctance to answer based on a single sentence, especially one that appears out of place in a longer document. This approach also improves Claude’s performance on single sentence answers that were within context (ie. not out of place). To demonstrate this, the revised prompt achieves 90-95% accuracy.
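In practice, "adding a sentence to the start of Claude’s response" means pre-filling the assistant’s turn, so the model continues from that sentence instead of starting from scratch. A minimal sketch of what that could look like follows. The role/content message shape is a common chat format that I’m assuming here, not Anthropic’s exact code. The `<document>` wrapper and the helper names are hypothetical, and the call to the model itself is omitted.

```python
# The sentence quoted in Anthropic's blog post, used to pre-fill the reply.
PREFILL = "Here is the most relevant sentence in the context:"


def build_messages(document, question, prefill=PREFILL):
    """Build a conversation whose last turn is a partial assistant reply.

    The model is expected to continue from the prefill text.
    """
    user_turn = f"<document>\n{document}\n</document>\n\n{question}"
    return [
        {"role": "user", "content": user_turn},
        {"role": "assistant", "content": prefill},
    ]


def full_response(prefill, continuation):
    """Stitch the prefill and the model's continuation into the final answer."""
    return f"{prefill} {continuation.strip()}"
```

The user’s question stays untouched. Only the opening words of the answer are chosen for the model, which is what nudges it to quote a sentence before deciding whether it can answer.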

The next time you hear a world-famous AI “expert” saying that “prompt engineering doesn’t really matter that much, just write”, maybe change AI expert.