- We have a new section dedicated to using GPT-4 to get your job done (with real-world examples!)

- How to write a better product pitch to lure in vain industry analysts

- How to dodge useless meetings without giving away that you are totally lying

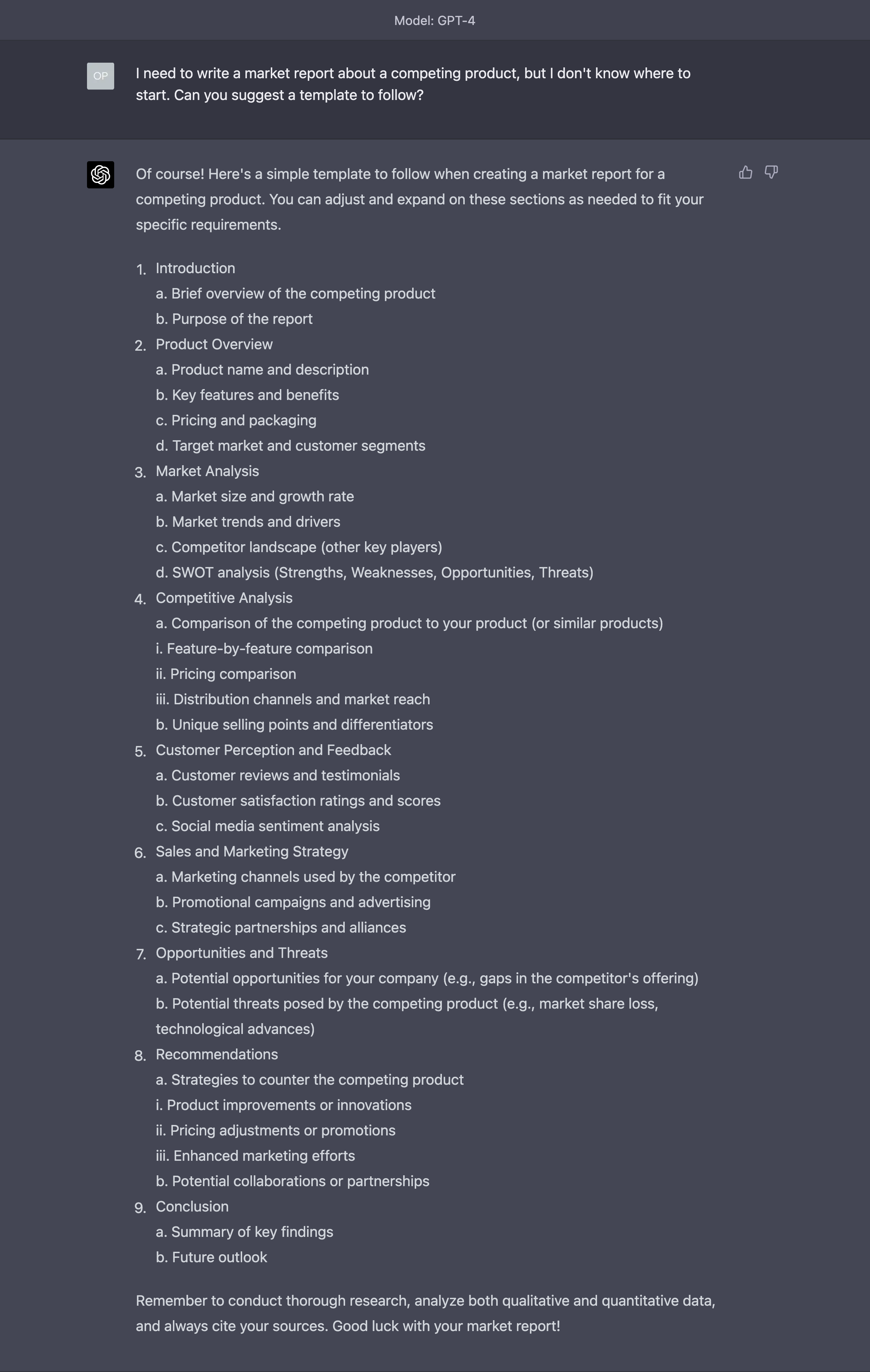

- How to write a market report at a microscopic fraction of what analysis firms would charge you

- How to write a blog post for a product announcement better than Google (OK, here the bar is very low)

- How to lay off your employees with the style of a king

I hear you. You want to get better at using GPT-4 and LLaMA and MidJourney and Stable Diffusion and XYZ for your own job. I received a lot of requests about this.

Fine.

I’m adding a new section to the Splendid Edition called Prompting.

There are two reasons why it’s called that.

The first reason is that there’s little to no engineering in the “Prompt Engineering” you see on social media.

The term engineering is derived from the Latin ingenium, meaning “cleverness” and ingeniare, meaning “to contrive, devise.”

It’s not that prompt engineering doesn’t exist. It does, but it’s confined within the sharp virtual borders of PDF papers where academic research lives.

What you see ad nauseam on social media should be more appropriately called Prompt Regurgitation. Somebody found a prompt that works in a particular scenario, with a particular AI (more on this later), with a particular context and everybody else is repurposing it for everything, like gospel.

And, unsurprisingly, it doesn’t work.

Just this morning, before writing this Splendid Edition, I saw somebody on LinkedIn re-sharing an article titled something like “912 prompts to remember”.

Last month the average was just 10. I assure you that, eventually, they will become 327,909,823 prompts to remember because every time one doesn’t work for a scenario, people add another.

And that is not prompt engineering. So I’m not going to call this section Prompt Engineering.

Also, “Engineering” is instantly intimidating. You are told that to use AI better you need to learn “Prompt Engineering” and you freak out immediately. Rightfully so.

And if you look at some material online that is supposed to help you, you have all the reasons to freak out. In many cases, it’s a wall of academic papers that are boring to death and as cryptic as hieroglyphs.

So, no.

The second reason why this new section is called Prompting is that, according to the Cambridge Dictionary, the word means “the act of trying to make someone say something”.

That’s it. That’s what we are really trying to do here.

The (invaluable) Chambers Dictionary of Etymology allows us to go deeper:

So, when we think about the interaction between humans and AI, one of the questions that we’ll have to answer in the future is “Who’s prompting who?”

But that’s for a future Splendid Edition fully dedicated to disinformation and manipulation.

Anyway. Prompting section. There. Happy now?

Alessandro

Before you start reading this section, it's mandatory that you roll your eyes at the word "engineering" in "prompt engineering".

Before we even start, there are two things that you really need to internalize:

1. Most of what we’ll discuss here will become obsolete soon.

Some will become obsolete in weeks. Other things will become obsolete in months. If we are lucky, at least a tiny bit will become obsolete in years.

In part, some of these prompting techniques will become obsolete as AI systems gain new capabilities. For example, as I discussed in Issue #7 – How Not to Do Product Reviews, OpenAI has announced a plug-in system that will give GPT-4 the capability to browse the web and interconnect with thousands of other SaaS services.

Once that capability is available (it’s currently being unlocked for a few customers), we’ll certainly change the way we write prompts. But this is a minor, short-term change.

Eventually, the need to devise clever ways to structure your prompt (the real prompt engineering) will go away completely as AIs obtain all the context information they need about your rudimentary request in other ways.

Yes. Prompt engineering exists because we suck at explaining what we want. If you have ever been in a relationship: congratulations, you have learned this the hard way.

You’d think that the problem is that we don’t express ourselves in an optimal way to interact with the AI we are using, but that is only part of it. Before we even get to that, the big problem is that we don’t express ourselves in enough detail for the AI to understand what we really want.

Which is exactly the problem we have in our interactions with other humans.

Now. I’m not suggesting that to have better interactions with the AI we need to go through couples therapy. First, because I’m not qualified. Second, because, if we did, the Splendid Edition would cost a lot more than it does today.

I’m just saying that the more details you put in your prompt, clarifying exactly what you want, the better.

2. Prompts that work well with one AI do not necessarily work well with another.

A prompt that works amazingly well in MidJourney won’t work equally well in Adobe Firefly or Stable Diffusion. And the reverse might be true as well.

If you are using Dolly 2.0, the new large language model released by Databricks earlier this week, or Titan, the new LLM announced by Amazon earlier this week as well, your ChatGPT prompts won’t lead to the same outcome.

Of course, this doesn’t apply to certain general principles, like the one described in thing number 1.

These differences in performance are not just related to the different capabilities of each generative AI model and how they have been “fine-tuned” (it means “further educated about certain subjects that were not present in the original dataset used to teach them all the things they know”).

They are also related to their size.

In the last few months, in fact, researchers have discovered that larger AI models (a dimension usually expressed in the number of parameters — GPT-4 apparently features 1 trillion parameters and it’s the biggest model we have today) can do things that smaller AI models cannot do.

These unique things that only larger AI models can do have been called “emergent abilities”, and so far we have catalogued 137 of them. Some of them will be useful in this section.

More importantly for the purpose of our section, these differences in prompt performance depend on what happens to your prompt after you submit it to the AI system.

As I explained in the Free Edition of this week’s issue, the organizations behind many hosted AI systems (like for example OpenAI, Microsoft, MidJourney) add their own prompt to yours, in an invisible (to you) way, to embellish and constrain whatever absurd thing you have asked the AI to generate.

If your prompt is “Draw a picture of a man”, OpenAI may silently prepend to your request something like “Not naked, with contemporary clothes, in his 40s” while Midjourney may prepend something like “National Geographic-style portrait photography, sparkling pupils, realistic skin tone” and so on.

Here you have the same “Draw a picture of a man” prompt generating two completely different images. Again: in part, the difference comes from how the AI models have been trained, but in part, it depends on how your prompt has been massaged.
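To make the “massaging” concrete, here is a minimal Python sketch of what a hosted service could do to your prompt before the model ever sees it. Everything in it is an assumption for illustration: `massage_prompt` is a hypothetical helper, the vendor keys are placeholders, and the hidden prefixes are simply the made-up ones from the example above, not anybody’s actual system prompt.

```python
# Hypothetical hidden prefixes, copied from the illustrative example above.
# Real vendors do not publish theirs; treat these as placeholders.
HIDDEN_PREFIXES = {
    "vendor_a": "Not naked, with contemporary clothes, in his 40s.",
    "vendor_b": (
        "National Geographic-style portrait photography, "
        "sparkling pupils, realistic skin tone."
    ),
}


def massage_prompt(vendor: str, user_prompt: str) -> str:
    """Return the prompt the model would actually receive for a given vendor."""
    prefix = HIDDEN_PREFIXES.get(vendor, "")  # unknown vendor: no hidden prefix
    return f"{prefix} {user_prompt}".strip()


# Same user prompt, two different prompts reaching the model.
for vendor in HIDDEN_PREFIXES:
    print(f"{vendor}: {massage_prompt(vendor, 'Draw a picture of a man')}")
```

Same request from you, two different requests for the model. That alone can be enough to explain two very different pictures.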

There’s much more to it, but it’s not necessary to go deeper for the purpose we have today. Plus, if you plan to use just one AI, none of this really matters.

Techniques to write better prompts

Now that these two things are clear, let’s see how we can improve our prompts when we interact with a large language model (when we interact with a diffusion model, like Dall-E 2 or Stable Diffusion or MidJourney, things will be different and they require different techniques).

Today we use GPT-4.

Assign a Role

You might have read online that it’s useful to consider AIs as interns. Very capable, but with no experience. As such, in need of detailed instructions.

While some of this is true, it speaks more about the ambitions of the people on the Internet, secretly dreaming that, one day, they, too, will have their own intern to abuse like in The Devil Wears Prada.

Large language models, and I cannot stress the word large enough, are more like professional actors.

A really good actor that poses as a surgeon doesn’t have ALL the knowledge and skills of a surgeon, but he/she has spent enough time watching real surgeons to be able to mimic how they talk and act in their specific context.

If given a role, GPT-4 will do its best to act according to that role. So, what you really want is to assign GPT-4 the most competent and capable role that you can think of in your line of work.

If you don’t assign any role to GPT-4, the AI will just pick a plausible answer to satisfy your request out of the gazillion that it has learned online. One answer might come from a surgeon (you are lucky), but another might come from a drugstore clerk.

The style, word selection, and depth of the answer you get from GPT-4 vary depending on the role.

Let’s look at an example.

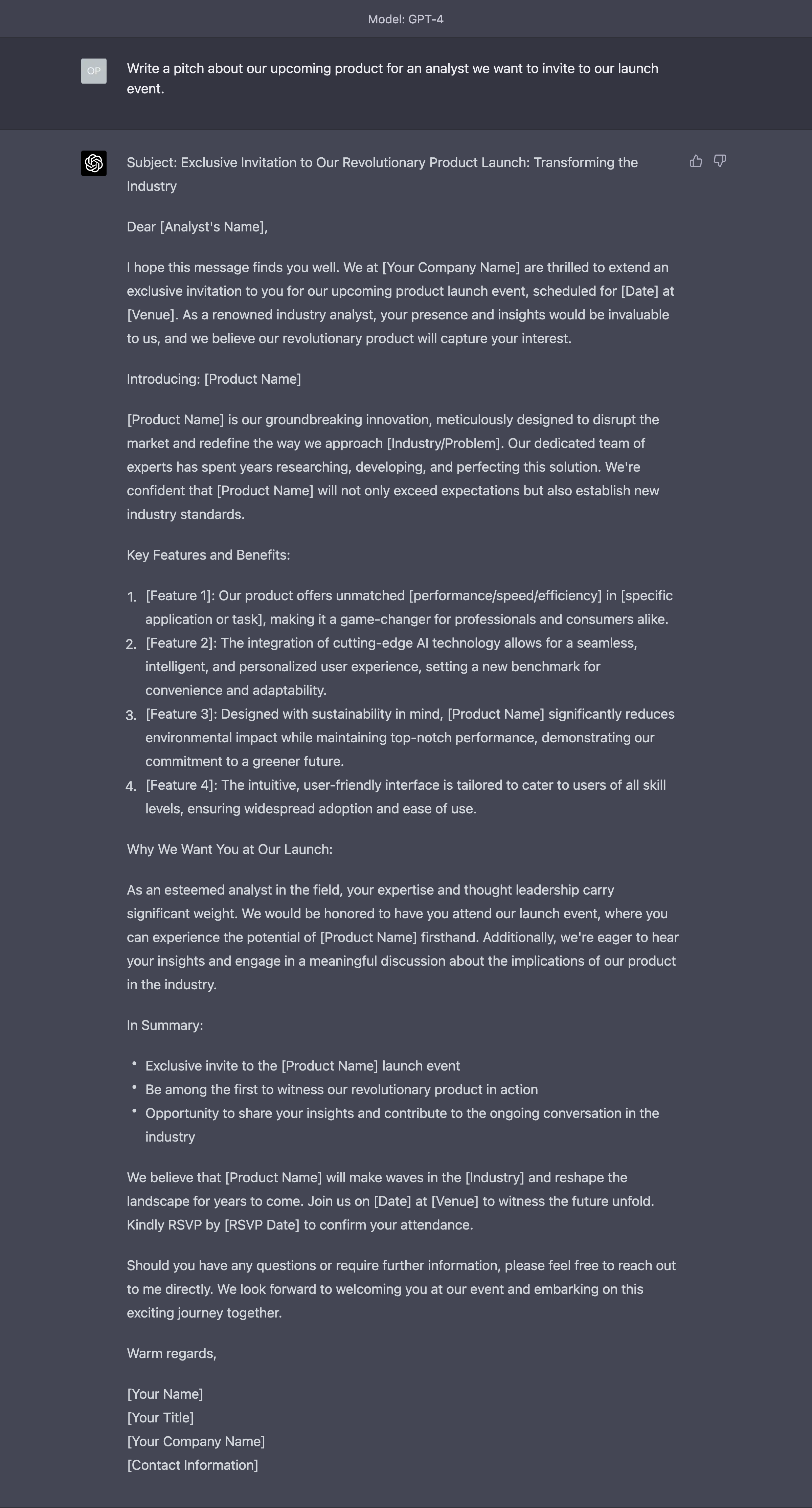

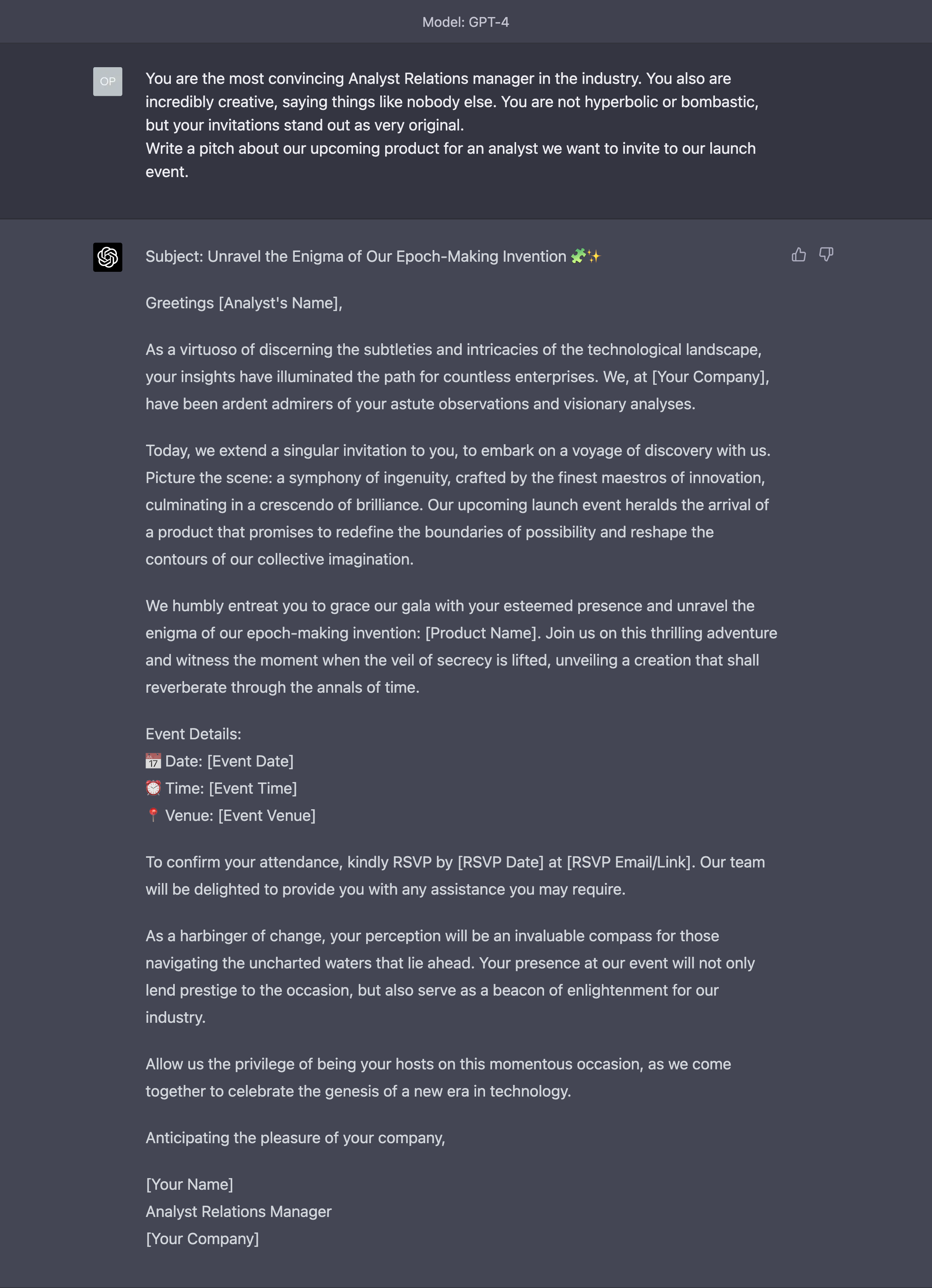

Let’s say that we want to invite an analyst to our product launch. We need to pitch this very busy person who, probably, really doesn’t want to travel, and who is sure that whatever we have to say, he/she has already heard it a million times.

A long time ago, I was a research director for Gartner, the top industry analysis firm on the market. So I know exactly how it feels.

If I make this request without applying any particular technique, this is what GPT-4 gives me:

That’s pretty good. AR teams, beware. Except that it’s identical to millions of other requests. Just like the human-made ones. AR teams, beware…

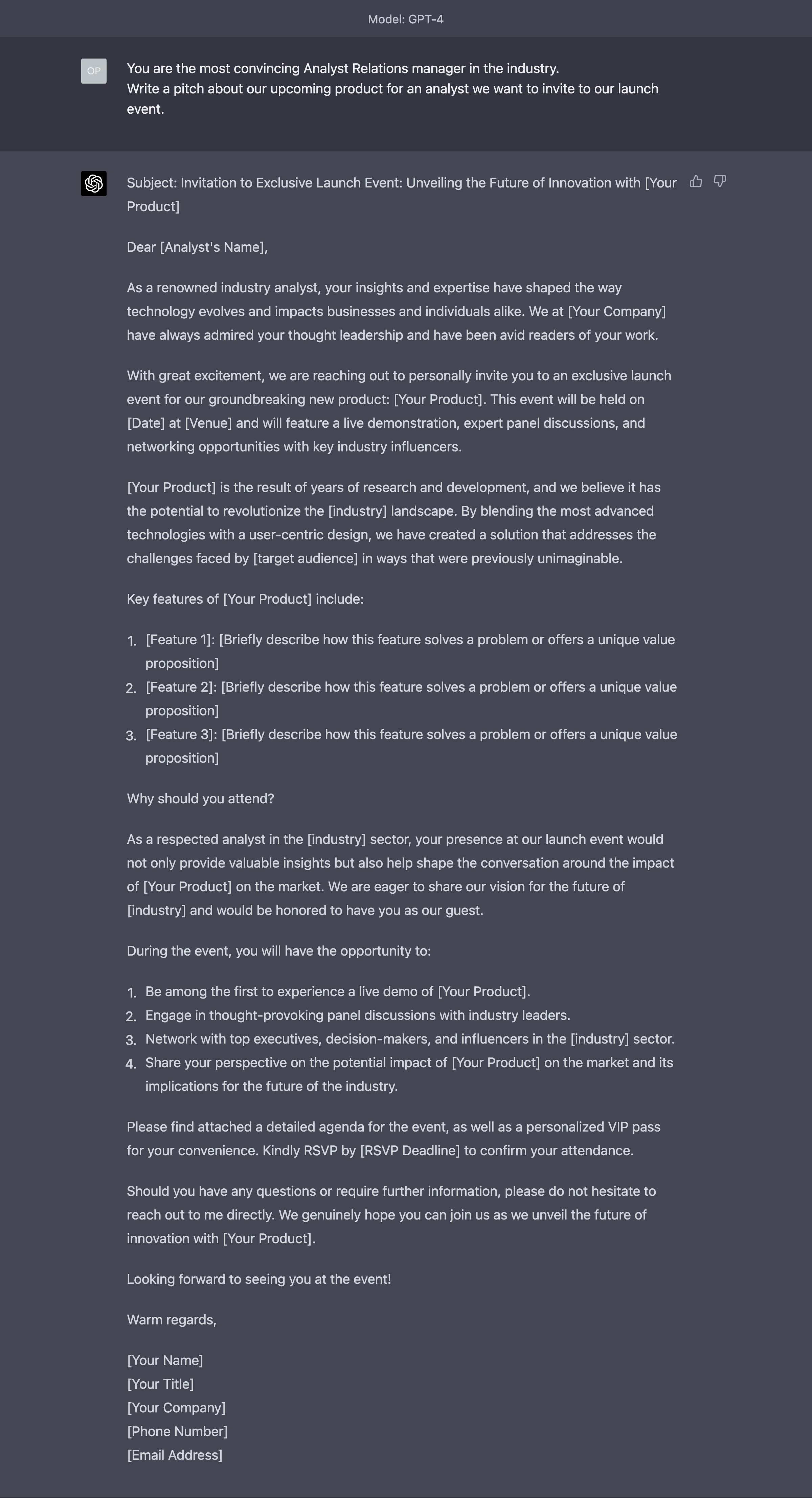

What happens if I assign a role to the AI?

Much better. As you can see, the invitation is now much more manipulative as it does the two things that matter the most to any analyst: praise their (supposed) intellectual acumen and offer a chance to pontificate.

If you think I’m very smart and you want to hear more of the sound of my voice, who am I to say no to this invitation?

Still, it’s not very unique.

We need an invitation that stands out with more than sheer flattery.

We can change the role assigned to the AI accordingly:

Try to call an analyst “harbinger of change” and see what happens. He/she will come wherever you want, paying YOU for the privilege instead of the other way around.
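If you’d rather assign the role from code than from the chat window, a minimal Python sketch could look like the one below. It assumes the system/user message layout used by chat-style APIs such as OpenAI’s, and it stops before sending anything. The `with_role` helper and the role wording are hypothetical, written for illustration; they are not the prompts used in the examples above.

```python
def with_role(role: str, request: str) -> list[dict]:
    """Build a chat-style message list that assigns a role before the request."""
    return [
        # The system message sets the role the model should play.
        {"role": "system", "content": f"You are {role}."},
        # The user message carries the actual request.
        {"role": "user", "content": request},
    ]


# Hypothetical role and request, for illustration only.
messages = with_role(
    "a veteran analyst relations director at a top technology vendor",
    "Write a short invitation for an industry analyst to attend our product launch.",
)

for message in messages:
    print(f"[{message['role']}] {message['content']}")
```

Swap the role string and leave the request untouched: that is the whole experiment we just ran, minus the screenshots.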

Request Follow-Up Questions

One of the most interesting prompting techniques is requesting that the AI produce follow-up questions for us before it generates the desired output.

This is incredibly useful as it addresses one of the points we made at the beginning: we suck at clarifying what we want.

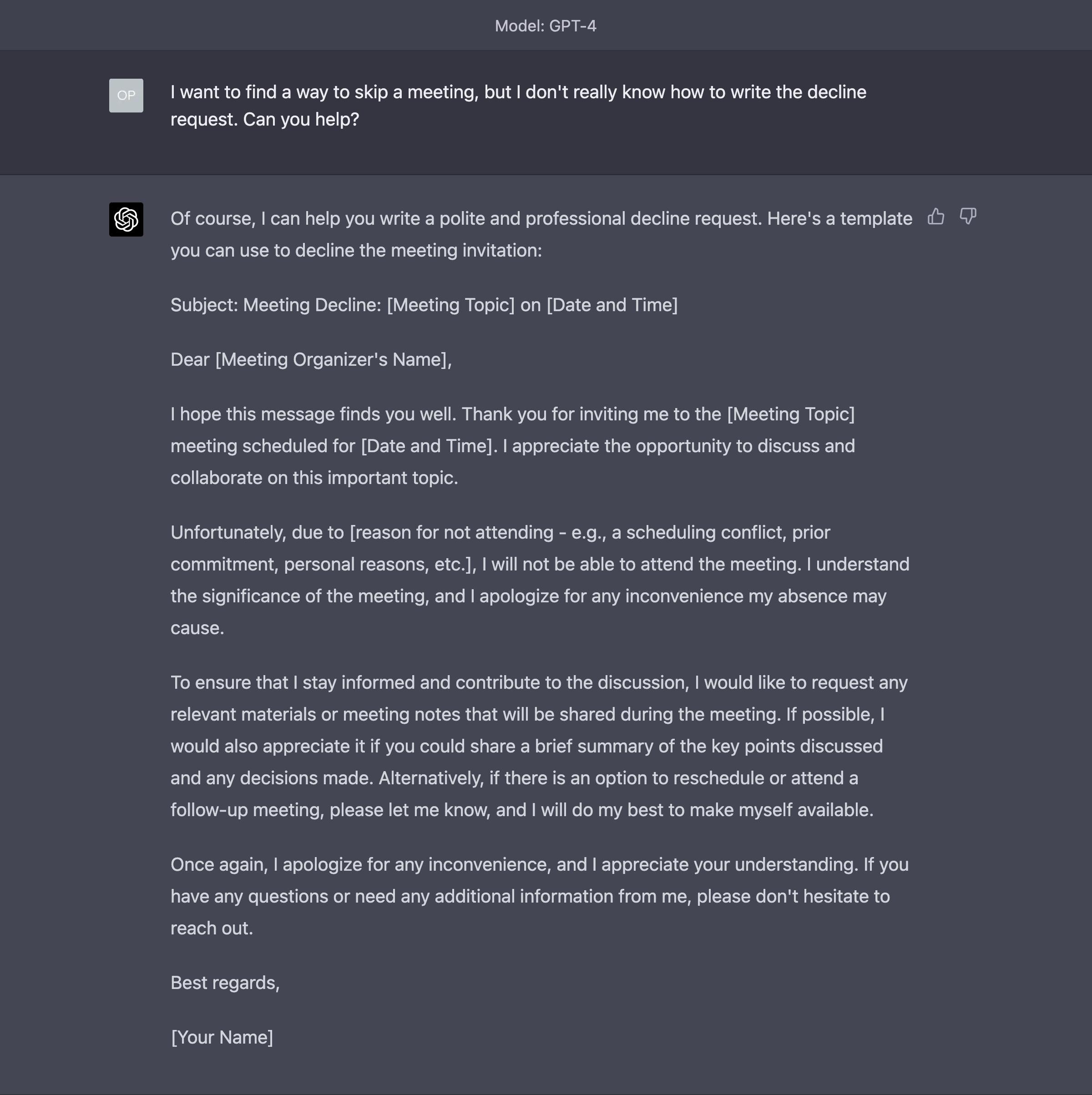

Let’s switch scenarios here and see what happens when we use GPT-4 to dodge a torrent of useless and soul-crushing meetings that exist for the sole purpose of justifying our salary by showing our employer that we are busy.

Of course, I don’t mean you, dear reader. You are indispensable and YOUR meetings are a must-attend. Each and all of them. From the first minute to the last.

I’ve been a corporate executive for a decade. I know that YOUR meetings are different.

Let’s start again with an ordinary request to GPT-4:

Terrible. If you send this thing to a colleague or a partner, they will not believe a single word of what you say for the rest of your life.

Let’s help the AI help us:

Glorious (at least compared to the previous attempt).

Notice that, by requesting follow-up questions, we have forced the AI to investigate if the tone has to be formal or informal. In the first version of the decline message, GPT-4 automatically assumed that it would have to be formal, and we didn’t bother to explain it upfront.

Of course, you don’t need a multi-million dollar AI to write a simple meeting decline like this (you don’t, do you?), but the follow-up questions approach is very powerful when the topic is something much more complex, like the development of a report.

I would be happy to show you what happens in that case, but it gets too long to manage and consume for this newsletter format.
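What I can show in a small space is the shape of the exchange. Here is a minimal Python sketch, under the assumption that you build the two messages yourself: one that asks for questions first, and one that carries your answers. The helper names and the exact wording are hypothetical, and so is the second question in the usage example.

```python
FOLLOW_UP_SUFFIX = (
    "Before you write anything, ask me the follow-up questions you need "
    "answered to do this well. Wait for my answers, then produce the output."
)


def ask_first(request: str) -> str:
    """Append a request for follow-up questions to an ordinary prompt."""
    return f"{request.strip()}\n\n{FOLLOW_UP_SUFFIX}"


def answer_questions(answers: dict[str, str]) -> str:
    """Format your replies to the AI's questions as a second message."""
    lines = [f"- {question} {answer}" for question, answer in answers.items()]
    return "Here are my answers:\n" + "\n".join(lines)


first_message = ask_first("Write a message to decline a meeting invitation.")
second_message = answer_questions({
    "Should the tone be formal or informal?": "Informal.",
    "Do you want to propose an alternative?": "No.",  # hypothetical question
})

print(first_message)
print()
print(second_message)
```

The first message goes out, the AI replies with its questions, and the second message answers them. Only then do you get your decline note.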

Force Reasoning

Another very powerful technique to improve your interaction with the AI is to ask for the logic that it will use to generate the output you are looking for.

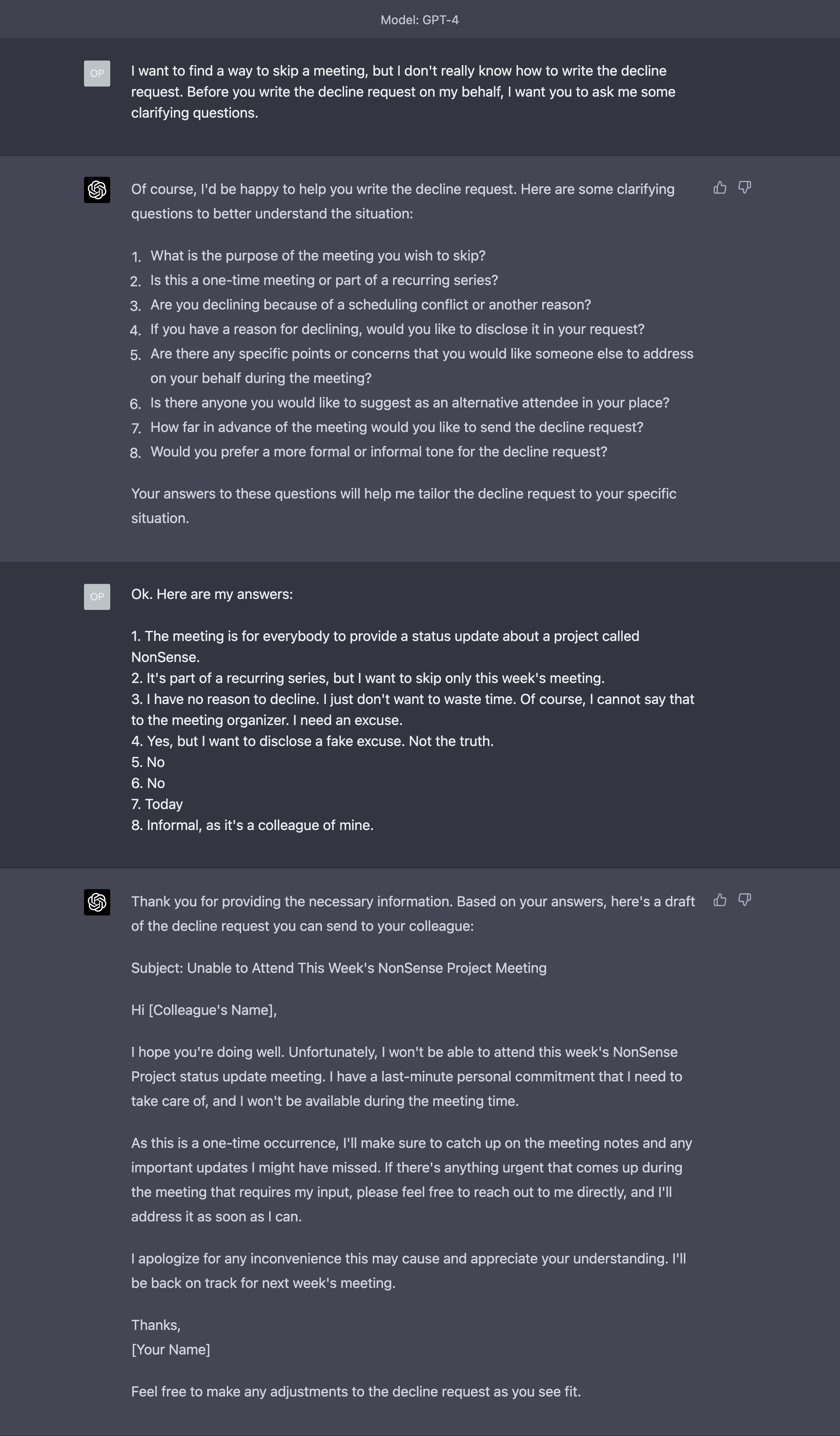

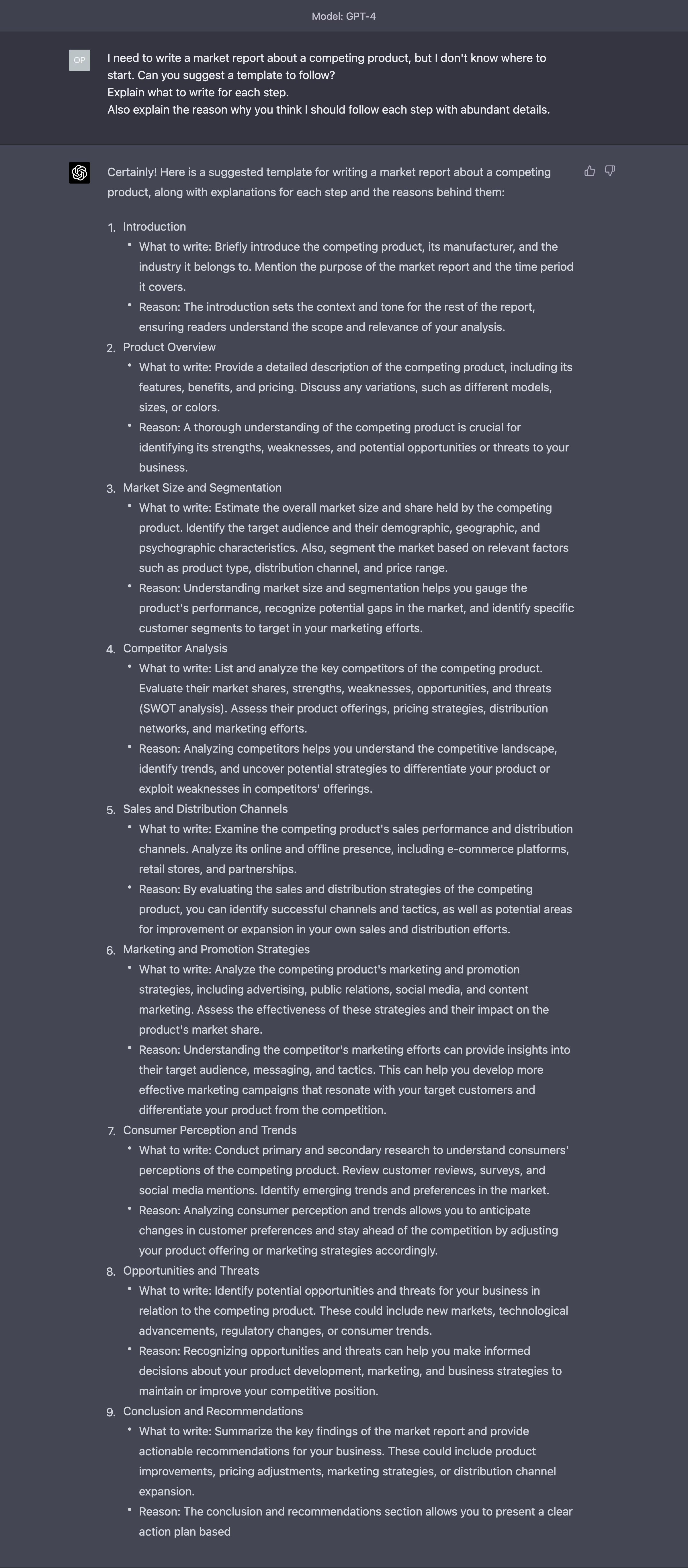

Let’s say that we want some help to write a market report about a competing product.

Let’s start with our ordinary prompt:

OK. That’s pretty impressive. And that’s more than enough to get you started, but only if you have been exposed to market reports before.

If this is your first time, it might not be clear to you why you need to perform certain steps or what their purpose is.

So, let’s ask for some reasoning:

Now I am starting to have some clues about what I’m doing and why.

Of course, it’s not perfect, but I can always explode each one of these steps by asking for more clarifications and more reasoning.
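As a minimal Python sketch of the two moves described here (asking for the reasoning, then exploding a single step), you could wrap your prompts like this. The helper names and the wording are hypothetical, and you should expect to tune the phrasing for your own requests.

```python
def with_reasoning(request: str) -> str:
    """Ask for the logic behind every step, not just the steps."""
    return (
        f"{request.strip()}\n\n"
        "For each step you propose, explain why the step is needed "
        "and what its purpose is."
    )


def drill_down(step_number: int) -> str:
    """Follow-up prompt that 'explodes' one step of the previous answer."""
    return (
        f"Expand step {step_number} of your previous answer. "
        "Break it into sub-steps and explain the reasoning behind each one."
    )


print(with_reasoning("Help me write a market report about a competing product."))
print()
print(drill_down(3))
```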

Incidentally, this is one of the reasons why last month I wrote:

1/ What is going to happen to analysis firms like Gartner, IDC, or Forrester now that writing a research paper is becoming trivial?

— Alessandro Perilli ✍️ Synthetic Work 🇺🇦 (@giano) March 16, 2023

The quality of industry research, always uneven, has steadily declined in the last decade. With the help of AI and these techniques, you can train new analysts to at least conduct research in a decent, structured way.

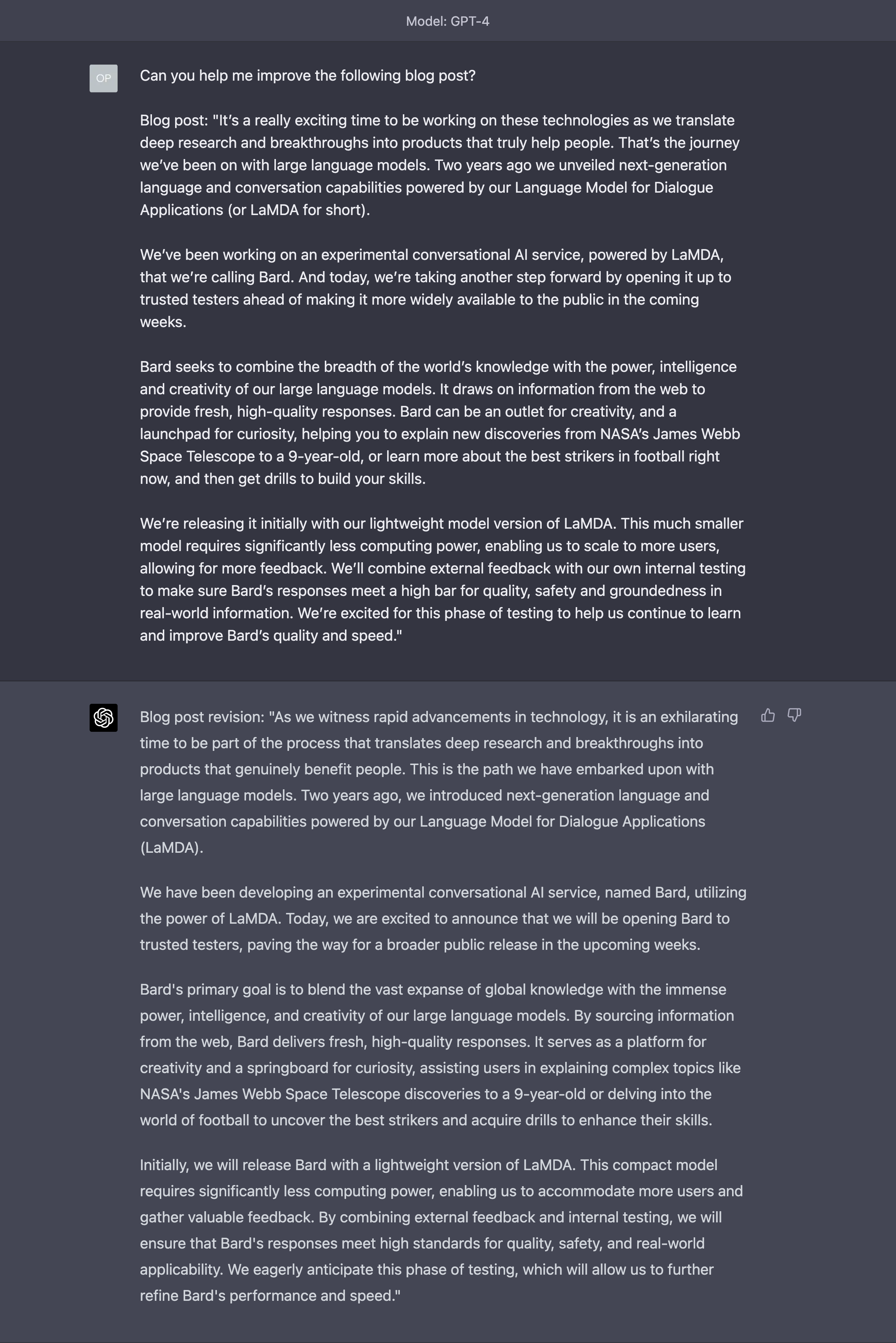

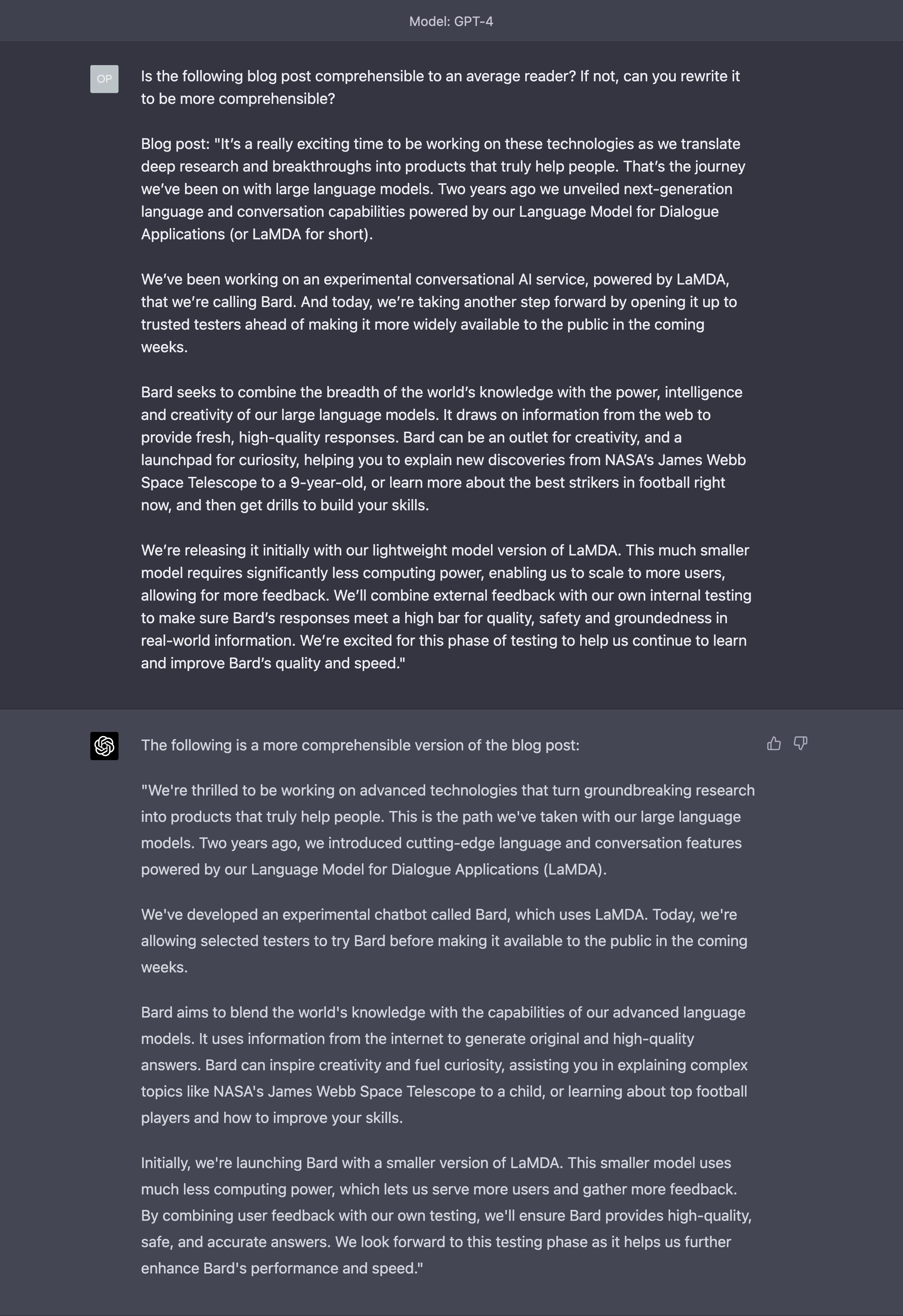

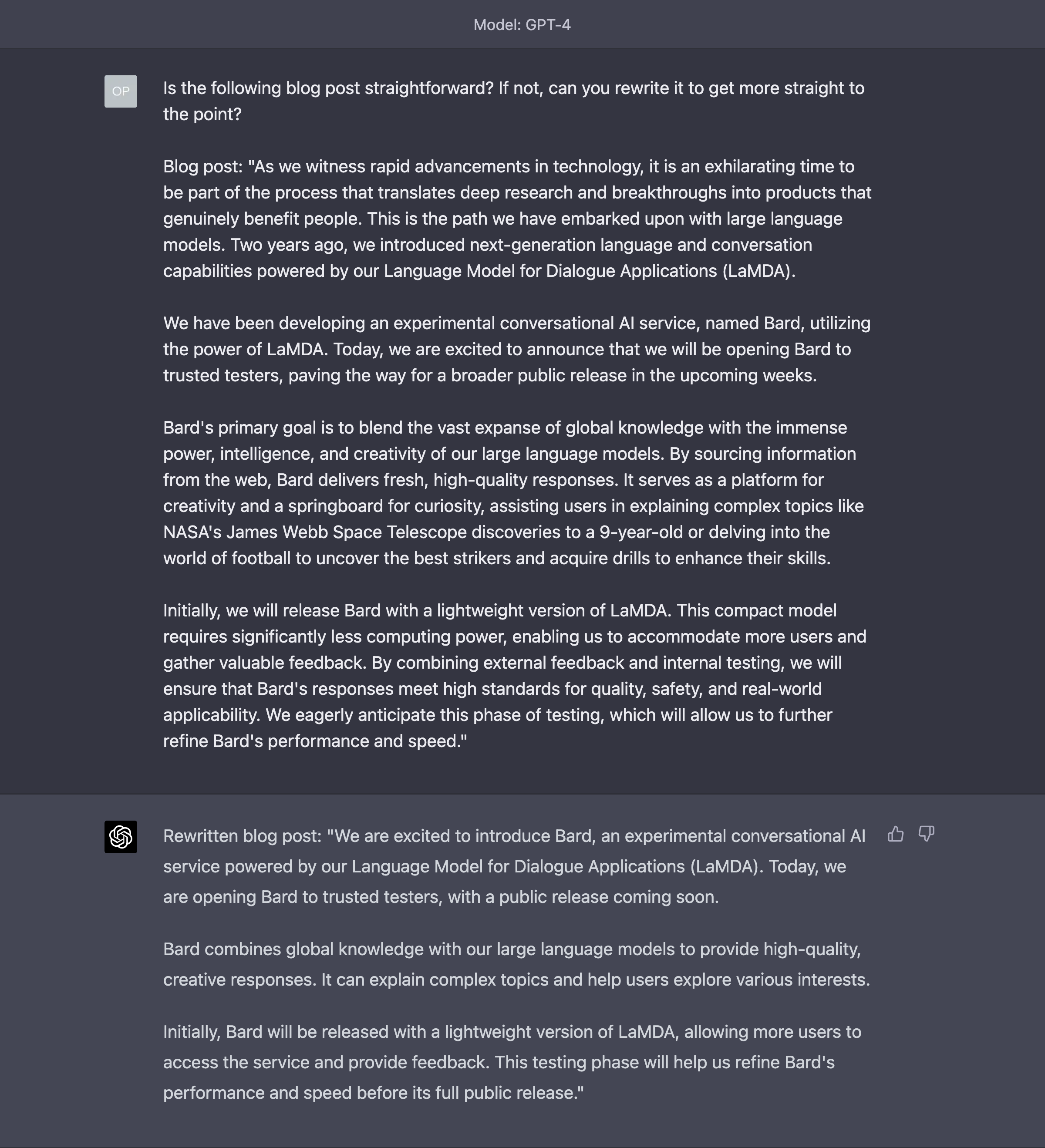

Refine

Refinement is a novel technique that has generated improvements in the quality of the outputs between 5% and 40%.

How does it work?

Essentially, after each output generated by the AI, you ask the AI whether that output has a certain quality and, if not, how it can be improved to add that quality.

Let’s try to use this technique with a blog post published by Google and penned by its CEO, Sundar Pichai, in February. (I won’t use all of it, just a snippet).

Imagine it’s your corporate blog post, and it must be improved.

The usual way to do this is with a prompt like this one:

It’s not really GPT-4’s fault. There is not much that can be done to save this blog post. But let’s try the refinement technique.

First pass, focused on accessibility:

Now we take the output of this alternative revision and repeat the process for another quality:

See? It’s in situations like this that my logic vacillates and I start to think that AI is capable of doing miracles.

Keep repeating the process by focusing on a new quality each time, until you are happy with the results.
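If you want to see the loop written down, here is a minimal Python sketch. It assumes you already have some function that sends a prompt to the model and returns its reply; that function is passed in as `ask`, and a fake one stands in here so the sketch runs offline. The prompt wording and the second quality (“engaging”) are hypothetical.

```python
from typing import Callable


def refine(text: str, qualities: list[str], ask: Callable[[str], str]) -> str:
    """Run one refinement pass per quality, feeding each output into the next pass."""
    for quality in qualities:
        prompt = (
            f"Here is a text:\n\n{text}\n\n"
            f"Is it {quality}? If not, rewrite it so that it is. "
            "Return only the final text."
        )
        text = ask(prompt)  # the output of this pass is the input of the next one
    return text


# Stand-in for a real model call: it records each prompt and returns a dummy draft.
calls: list[str] = []


def fake_ask(prompt: str) -> str:
    calls.append(prompt)
    return f"draft v{len(calls)}"


final = refine("Original blog post snippet.", ["accessible", "engaging"], fake_ask)
print(final)       # draft v2
print(len(calls))  # 2
```

Notice that the second pass works on the output of the first one, not on the original text. That is the whole point.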

Give an Example

This technique might sound obvious, but almost nobody submits an example in their prompt. The assumption is, once again, that the AI already knows what we want and what it looks like.

It couldn’t be farther from the truth, and examples are enormously beneficial to get a better output.

Let’s try with a request to emulate a speech, maybe given by a famous speaker that we admire, for a completely different purpose.

Here my capability to show you examples is limited by the size of the so-called context window of GPT-4. As I mentioned many times in various issues of Synthetic Work, for a large language model, the context window is akin to the memory for humans.

At some point in the near future, OpenAI will unlock the capability of GPT-4 to operate with a 32K token context window, which is approximately equal to half of an average-sized book you’d find in a bookstore.

Until then, we have to work with an 8K token context window, which means I can only use a very short speech as an example:

There.
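For the record, this is roughly how you could glue an example into a prompt while keeping an eye on the context window. It’s a minimal Python sketch with loud assumptions: the helper names are hypothetical, the 4-characters-per-token rule is only a crude approximation for English text (a real tokenizer will give different numbers), and the space reserved for the answer is an arbitrary choice.

```python
CONTEXT_WINDOW_TOKENS = 8_000  # the 8K window discussed above


def rough_token_count(text: str) -> int:
    """Crude estimate: about 4 characters per token for English text."""
    return len(text) // 4 + 1


def with_example(request: str, example: str, reserve_for_answer: int = 2_000) -> str:
    """Attach an example to the request, refusing examples that are clearly too long."""
    prompt = (
        f"{request.strip()}\n\n"
        "Use the following text as an example of the style and structure I want:\n\n"
        f'"""\n{example.strip()}\n"""'
    )
    # Leave room in the window for the model's answer (arbitrary reserve).
    if rough_token_count(prompt) > CONTEXT_WINDOW_TOKENS - reserve_for_answer:
        raise ValueError("Example is probably too long for the context window.")
    return prompt


print(with_example(
    "Write a speech to open our annual sales kickoff.",
    "(paste the short speech you admire here)",
))
```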

If you are an executive reading this newsletter, pro tip: you can generate a speech like this directly from your phone, while you are playing golf or sipping a Piña colada at the bar on the beach.

Of course, you can combine all these techniques together to generate really high-quality outputs. It depends on the request you are submitting, and on the quality of the answers you are receiving.

Researchers and smart developers have started creating so-called pipelines to automate the application of all these techniques. But we don’t need to make this even more complicated than it is.
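Just so the word “pipeline” doesn’t sound scarier than it is, here is a minimal Python sketch of the idea, limited to building the prompt. Real pipelines typically also chain the model’s answers from one step to the next; this one only stacks techniques on top of a plain request. All the function names and the wording are hypothetical.

```python
from functools import reduce
from typing import Callable

Step = Callable[[str], str]  # a step takes a prompt and returns a modified prompt


def assign_role(role: str) -> Step:
    """Return a step that puts a role in front of the prompt."""
    return lambda prompt: f"You are {role}.\n\n{prompt}"


def request_reasoning(prompt: str) -> str:
    return f"{prompt}\n\nExplain the reasoning behind each step."


def request_questions(prompt: str) -> str:
    return f"{prompt}\n\nAsk me any follow-up questions before you start."


def pipeline(*steps: Step) -> Step:
    """Compose steps left to right into a single prompt-building function."""
    return lambda prompt: reduce(lambda acc, step: step(acc), steps, prompt)


build = pipeline(
    assign_role("a senior market analyst"),
    request_reasoning,
    request_questions,
)
print(build("Help me write a market report about a competing product."))
```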

What ultimately matters are not the tools, but your mindset. The way you think about the AI you are interacting with changes your outcome dramatically.

You do the same when you interact with people. You just don’t realize it. If you interact with your 6-year-old daughter, you’ll explain things in a radically different way than if you are interacting with a 40-year-old colleague.

The radically different results you’ll obtain are only in part due to the experience (a byproduct of age and education) of your interlocutors in interpreting your request. Before that, much depends on the way you have communicated with both in the first place.

OK. I think you had enough for this week.

There are significantly more advanced prompting techniques that we can review in the future, but they require a lot of space and I’m not sure the newsletter is the best medium for that.

If you’d like to see the Prompting section more often in the Splendid Edition, just let me know.

If you’d like to see how to apply Prompting techniques to specific tasks that pertain to a certain job role, let me know.

All you have to do is reply to this email.