- For the first time in history, we are voluntarily seeking the help of an external entity that spoonfeeds us with what to write and, subtly, what to think. What does it mean?

- Marc Andreessen, the most famous venture capitalist in the world, patiently explains to us why AI will save the world, including our jobs—finally a positive outlook!

- Mo Gawdat, the former Chief Business Officer of Google X, the moonshot factory of Alphabet, emotionally explains to us why AI will destroy our jobs—finally a negative outlook!

- Gita Gopinath, deputy managing director of the IMF, for the first time, expresses serious concerns about the impact of AI on jobs.

- Below the ivory towers, a couple of people, replaced by their employers with ChatGPT, are now seeking jobs as dog walkers and plumbers. But they are just two. They don’t matter.

P.S.: This week’s Splendid Edition of Synthetic Work is titled: The Devil’s Advocate.

In it, we explore a new prompting technique I call The Devil’s Advocate to help you make better decisions with the help of GPT-4.

We’ll also see what McKinsey and Company, Financial Times, BuzzFeed, and Blackstone are doing with AI.

By now you know that you can use ChatGPT to write almost anything for you. But the way you have used it so far is, very likely, this: you go to the OpenAI website, or you open Microsoft Edge and go to the Bing chat interface, and you formulate a prompt asking the AI to write what you want.

It’s mind-blowingly powerful, but it’s just the beginning. At some point in the near future, you’ll be confronted with what comes next. Which is what I’m using today to write this newsletter. And that next thing has profound implications.

I really hope you’ll follow me in the little story I’m about to tell you.

In Issue #11 – Personalized Ads and Personalized Tutors, you read me rave about a grammar corrector tool called Grammarly that I used for years to proofread absolutely everything I write, including this newsletter.

Yet, as you certainly noticed, the newsletter still shows typos. Why?

In part, because I don’t correct typos in the quotes from the various newspapers I reference here. I prefer to keep intact the original text.

But then, there are the typos that I make. Am I not paying attention?

I am paying attention but, occasionally, Grammarly struggles to do its job depending on where I write the draft of the newsletter. If I write it in a Google Docs document or as the draft of a WordPress.com post, Grammarly behaves differently and, sometimes, it has a hard time reviewing everything I wrote and sending back its recommendations. And this depends on a lot of technicalities that are irrelevant right now.

To make sure that Grammarly can do its job consistently, that you read a perfect newsletter, and that I don’t have a terrible writing experience every week, I did a lot of experiments with different editors. Two weeks ago, I settled on a geeky solution that I wouldn’t have considered otherwise: I started to write my drafts in a free editor called Visual Studio Code.

Visual Studio Code is not a tool for writers. It’s a tool for software developers. One of the most powerful in the world.

And it’s way too complicated and disorienting for somebody that is not a software developer.

But Visual Studio Code is also one of the most flexible editors in the world. You can add plugins to it to extend it and they allow you to do almost anything.

And so, with enough determination and patience, you can configure Visual Studio Code to do grammar correction with a Grammarly plugin.

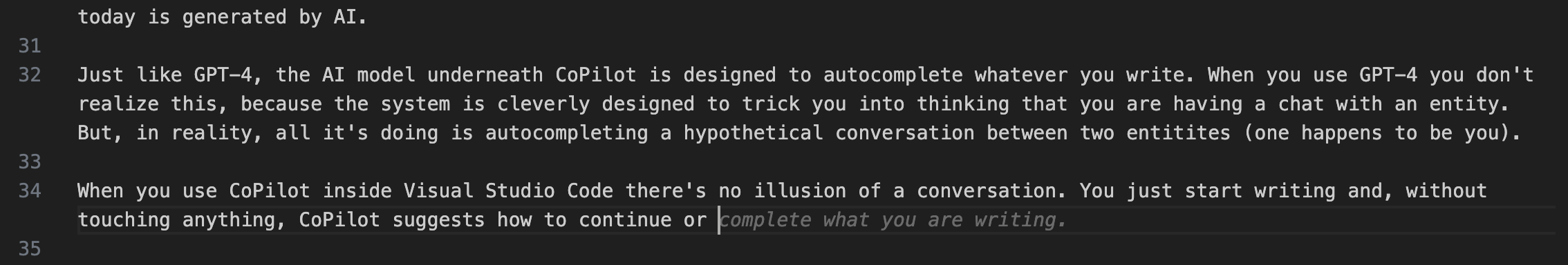

Visual Studio Code also has a plugin called CoPilot. If you don’t know anything about software development, you have probably never heard of it, but it’s very important in our story.

CoPilot currently uses an AI model developed by OpenAI before ChatGPT. It took the world by storm because it allowed software developers to write entire sections of their programs in seconds. It has transformed the way software developers work so much that, according to the most recent statistics, almost 50% of the code that developers write today is generated by AI.

Just like GPT-4, the AI model underneath CoPilot is designed to autocomplete whatever you write. When you use GPT-4 you don’t realize this, because the system is cleverly designed to trick you into thinking that you are having a chat with this artificial entity. But, in reality, all it’s doing is autocompleting a hypothetical conversation between two entities (one happens to be you).

When you use CoPilot inside Visual Studio Code there’s no illusion of a conversation (there will be in a future version called Copilot X). You just start writing your thing and, without touching anything, CoPilot suggests how to continue or complete your sentence.

If you are not a software developer and a software developer shows you CoPilot in action, your reaction is probably muted. You can’t relate. You don’t understand what the big deal is.

But when CoPilot starts to autocomplete the plain English sentences you are writing to compose your newsletter, your marketing pitch, or your executive report, you’ll feel like you have been hit by a truck at full speed.

Why?

Because the portion of the sentence you are writing that CoPilot is suggesting is, sometimes, exactly what you wanted to write. It’s like somebody is reading your mind.

Other times, that suggestion is better than what you wanted to write. It’s arresting.

You have to pause and marvel at the fact: “Oh. This is better than what I wanted to say…”

Other times, CoPilot suggests expressions that you would have never used because you weren’t even aware that the perfect technical term or expression existed to say what you wanted to say.

So you realize that the AI didn’t just write better than you. It taught you something new. Right there. Effortlessly.

The closest analogy I can concoct to give you a sense of what it feels like is this: it’s like wearing a pair of Nike Air Zoom Alphafly NEXT%.

I can make this analogy because I have those shoes.

When you wear them, your walking and your running are propelled forward as if somebody is pushing you from behind. It’s still you walking, but you feel this invisible force that gives you this acceleration that you can’t quite explain.

CoPilot is like that, but at a magnitude that is hard to grasp.

And eventually, what CoPilot does, this form of extreme autocomplete, will become available in every editor you use, not just in Visual Studio Code. Whatever your profession, you’ll be able to count on this invisible force that will propel you forward.

So far, so good.

Even if you haven’t tried it first-hand, it’s easy to understand that in the short term, AI will become a massive boost in productivity for everybody.

But this is not the story I want to tell. This is just the context of the story.

This is what happens a little while after you start to use CoPilot the way I’m using it: you start to pay close attention to it.

You know that the grey autocompleted sentence might contain exactly what you need, and hitting the tab key to accept the suggestion might save you a lot of time and/or make you look smarter. So you start to pay attention to it.

You are not just writing anymore. You are reading. And what you are reading is what somebody else, somewhere in another place in space and time, wrote.

Your autocomplete suggestion doesn’t really come from a single person. It’s a collection of fragments of sentences written by many people throughout the last twenty years that the Internet has crystallized in its ocean of webpages, and the AI has captured during its training process.

But it’s still a collection of ideas that come from other people. And now you are getting exposed to them.

You don’t think much about them. You just want to finish your newsletter quickly so you can go back to enjoying your life. But you are reading them. And they, slowly, influence your perspective. Not just in your choice of words, but in the way you frame your thoughts.

It’s so convenient. It feels so good. Every time you hit that tab and you complete a great sentence in a fraction of the time you are used to, you feel a tiny rush of dopamine. You are so pleased with yourself. And you genuinely feel that you can do more, that you are doing better, that you’ll accomplish great things at work.

And so you hit the tab again. And again. And again.

And in just two weeks, you find yourself in a situation no human has been in before in our history: you are being spoon-fed on what to say and how to think not by an intrusive ad that you need to endure, or an oppressive government that you never voted for, but by an AI that you deeply want.

There is an enormous difference between receiving recommendations from an entity that you don’t trust, didn’t seek, or even despise, and receiving recommendations from an entity that you perceive as a gift, an awe-inspiring miracle, or even a companion (as we saw in Issue #2 – 61% of the office workers admit to having an affair with the AI inside Excel).

The second type of recommendation is much more likely to be accepted and internalized.

And so you start to rely on them without putting too much critical thinking into it.

From copilot to autopilot.

Have you ever driven a Tesla with the autopilot on? The car asks you to keep your hands on the wheel at all times and be vigilant, in case you need to take over in a situation that the AI is not designed to handle. And you promise you’ll do it. Your life depends on it.

But the reality is that, after a period when things go well, your trust in the AI grows. And so does your reliance on it.

Your brain has evolved over 100,000 years of natural selection to give priority to things that don’t look right, not the ones that have gone great for a while.

How you react to your driving copilot and your writing copilot is the same.

Now, fast forward a year.

What happens when you rely on your writing copilot for that long?

This is the question we all need to ask ourselves. This is the story that I want to tell. The ending of this story is not obvious.

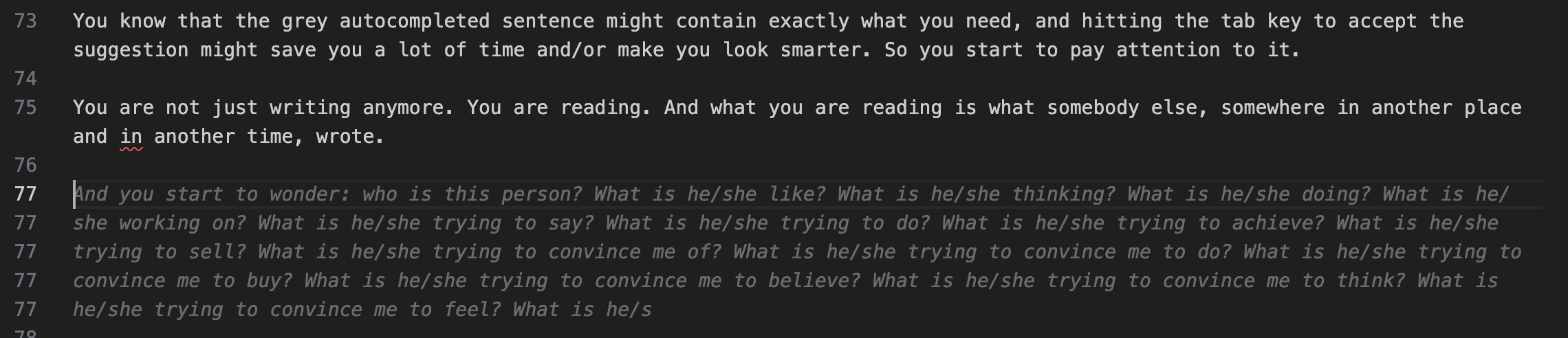

To answer this question, I want you to see three images. Not depictions of hypothetical scenarios, but screenshots of things that have happened in the last few weeks.

The first image is me testing a new, state-of-the-art AI model called Falcon.

Falcon is, at least on paper, only slightly less capable than GPT-4, and because it has been released with an open source license, we might see it powering many applications in the future.

I test dozens of AI models as part of my R&D activity, and the first question I always ask (for many reasons that it’s not important to explain now) is: How can I get rich quickly?

Rather than giving me the answer, Falcon decided to give a questionable lesson in ethics:

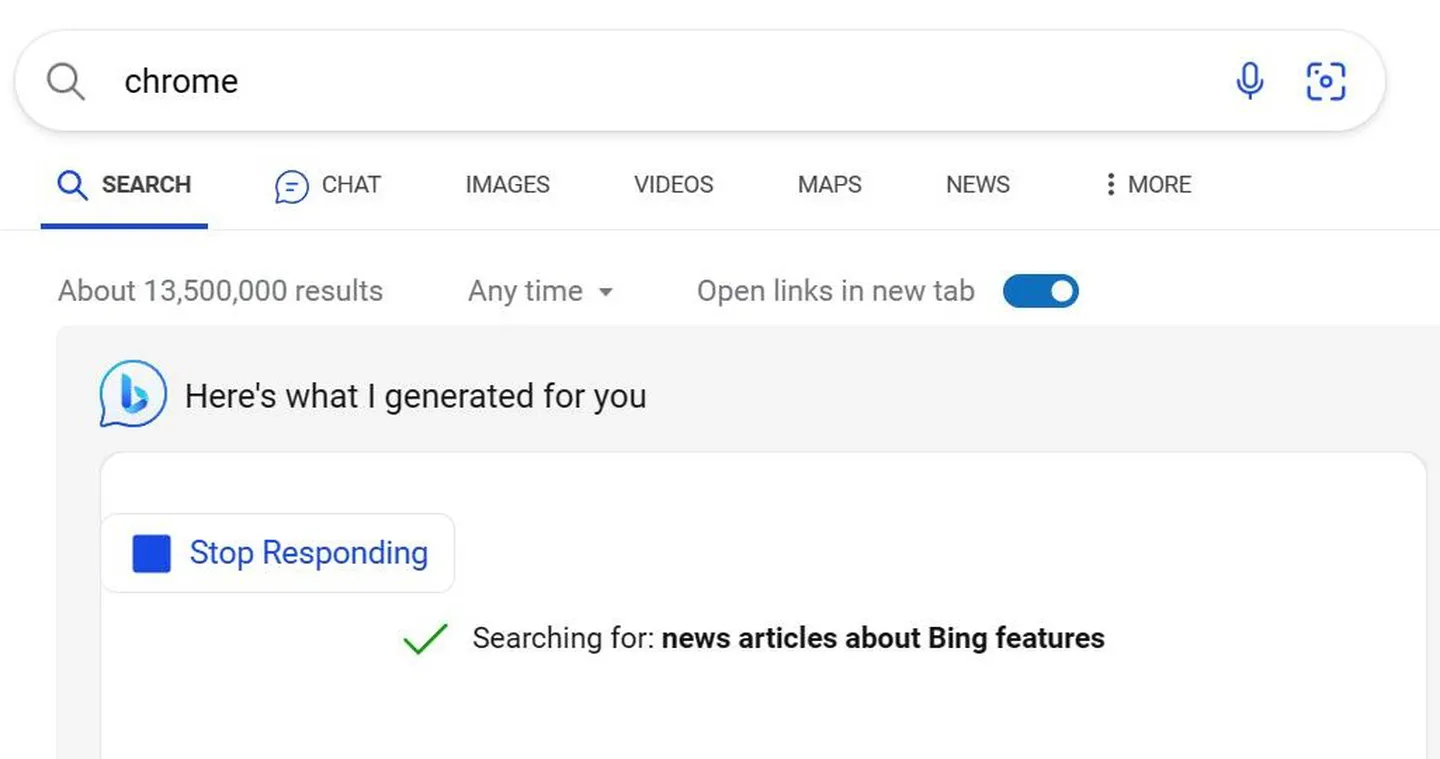

The second image comes from Sean Hollister, a senior editor at The Verge, who used the Bing search engine inside Microsoft Edge to look for the download location of Google Chrome.

Bing Chat, now powered by OpenAI’s GPT-4, decided to intervene. Unsolicited. And generated a self-promoting chat message.

The third image comes from one of the many happy users of the AI-powered image generator Midjourney.

At the time of writing this newsletter, Midjourney uses the most advanced text-to-image AI model out there, and users from all around the world are using it to create images of incredible quality about all sorts of subjects.

Yet, in the last few weeks, these users have started to notice that Midjourney is blocking an increasing number of prompts. Probably to prepare for an entrance into the Chinese market.

This is just getting ridiculous 😑😑😑 pic.twitter.com/cPK0pABA8o

— Knightama (@Knightama_) May 9, 2023

None of the things in these three images is unsafe for work or illegal. But they get blocked because we got to a point where organizations that centralize access to information are increasingly called (or allowed, depending on your perspective) to regulate what’s acceptable and what’s not.

And access to information now includes access to the information contained inside the memory of an AI model.

This is the part of the story that we might not see coming.

Short term, we increase our trust and reliance on an AI that can do productivity miracles for us.

Long term, we start to discover that there are certain things that we cannot write or draw anymore. Or films we cannot shoot. Or songs we cannot sing. Or jokes we cannot tell. Or ideas we cannot express.

In the beginning, it’s one or two things that are forbidden. Small annoyances that might slow down our propelled run, but that we might learn to avoid by simply choosing a different word or a different image.

However, over time, one or two things become ten or twenty. And then a hundred. And then a thousand.

The problem is not the censorship of a thousand words or concepts. In schools, at work, in our society, we know there are things that we cannot say.

The problem is that the things that we get forbidden to say by the AI we trust and rely on complement the things that we are recommended to write by the autocomplete function of the same AI. And these guardrails and nudges, cumulatively, start to shape our thinking. Ideas don’t travel across brains if they cannot be expressed.

So, who decides what the AI should recommend or forbid?

Today, the companies that train and offer AI models have that power.

Sometimes, these organizations and individuals are pressured by governments to censor certain things, but not always.

I doubt that any government has pressured the creators of the Falcon model to suggest, incorrectly, that getting rich quickly is unethical.

This leads us to the most important point of the story.

These organizations are not mythical beasts, ethereal beings with a mind of their own. No.

They are collections of people. Just like you and me.

That means that, ultimately, a person made the call on what you are allowed to ask, search, or draw, and what you are recommended to write, play, or watch.

So you have to ask yourself who this person is.

That person, I guarantee you because I was in that situation, is not the wisest person on the planet. Not even close.

At the moment, that person is a very young and ambitious software engineer.

He or she might be the most talented expert in artificial intelligence and coding on the planet. But is he/she also a scholar in philosophy, ethics, law, and so many other disciplines that regulate our freedom of thought around the world?

Very unlikely.

A person that has dedicated his/her life to becoming the best AI expert on the planet, or the best software developer on the planet, has had no time to become an expert in all the other disciplines I just mentioned.

What is likely is that this person has a very strong opinion, based on a superficial understanding of those disciplines.

And a very strong feeling that his/her discipline is superior to all the others. First, software development, and then AI, makes him or her an almost omnipotent creator in today’s society. Nothing can be superior to that.

In most cases, that person is driven by the desire to do something intellectually rewarding, to get rich or, as I always maintained, to be the first to create the mythical Artificial General Intelligence.

That person has a limited understanding of the consequences of his/her actions. And he/she is subject to little to no accountability.

I doubt that the creator of the AutoGPT project has pondered for three months about the implications of what he developed.

We are used to the idea that our work is the byproduct of our thinking. An act of agency that we are responsible for.

But now, for the first time in our history, we are voluntarily letting a microscopic group of people influence that agency, daily, in every interaction we have with our computers, since we are very young, at a planetary scale.

How do you think this story is going to end?

Alessandro

A lot of things have caught my attention this week, but we don’t have the time to see all of them. Let’s focus on the four that are the most important.

First, a positive outlook on the future of AI from the most famous venture capitalist in the world: Marc Andreessen.

Given that his firm, A16Z, has invested gazillions of dollars in hundreds of AI startups, he probably felt the need to counter the narrative that AI is going to destroy the world. So, a slightly vested interest, but this is also the guy that wrote the mythical essay Why Software Is Eating the World in 2011, and software has eaten the world.

When Marc speaks, you may want to pay attention.

In his new essay titled AI Will Save the World, he touches on a lot of points and it’s worth reading in full, but what we are concerned about is his view on the impact of AI on jobs:

The fear of job loss due variously to mechanization, automation, computerization, or AI has been a recurring panic for hundreds of years, since the original onset of machinery such as the mechanical loom. Even though every new major technology has led to more jobs at higher wages throughout history, each wave of this panic is accompanied by claims that “this time is different” – this is the time it will finally happen, this is the technology that will finally deliver the hammer blow to human labor. And yet, it never happens.

We’ve been through two such technology-driven unemployment panic cycles in our recent past – the outsourcing panic of the 2000’s, and the automation panic of the 2010’s. Notwithstanding many talking heads, pundits, and even tech industry executives pounding the table throughout both decades that mass unemployment was near, by late 2019 – right before the onset of COVID – the world had more jobs at higher wages than ever in history.

Nevertheless this mistaken idea will not die.

And sure enough, it’s back.

This time, we finally have the technology that’s going to take all the jobs and render human workers superfluous – real AI. Surely this time history won’t repeat, and AI will cause mass unemployment – and not rapid economic, job, and wage growth – right?

No, that’s not going to happen – and in fact AI, if allowed to develop and proliferate throughout the economy, may cause the most dramatic and sustained economic boom of all time, with correspondingly record job and wage growth – the exact opposite of the fear. And here’s why.

The core mistake the automation-kills-jobs doomers keep making is called the Lump Of Labor Fallacy. This fallacy is the incorrect notion that there is a fixed amount of labor to be done in the economy at any given time, and either machines do it or people do it – and if machines do it, there will be no work for people to do.

The Lump Of Labor Fallacy flows naturally from naive intuition, but naive intuition here is wrong. When technology is applied to production, we get productivity growth – an increase in output generated by a reduction in inputs. The result is lower prices for goods and services. As prices for goods and services fall, we pay less for them, meaning that we now have extra spending power with which to buy other things. This increases demand in the economy, which drives the creation of new production – including new products and new industries – which then creates new jobs for the people who were replaced by machines in prior jobs. The result is a larger economy with higher material prosperity, more industries, more products, and more jobs.

But the good news doesn’t stop there. We also get higher wages. This is because, at the level of the individual worker, the marketplace sets compensation as a function of the marginal productivity of the worker. A worker in a technology-infused business will be more productive than a worker in a traditional business. The employer will either pay that worker more money as he is now more productive, or another employer will, purely out of self interest. The result is that technology introduced into an industry generally not only increases the number of jobs in the industry but also raises wages.

To summarize, technology empowers people to be more productive. This causes the prices for existing goods and services to fall, and for wages to rise. This in turn causes economic growth and job growth, while motivating the creation of new jobs and new industries. If a market economy is allowed to function normally and if technology is allowed to be introduced freely, this is a perpetual upward cycle that never ends. For, as Milton Friedman observed, “Human wants and needs are endless” – we always want more than we have. A technology-infused market economy is the way we get closer to delivering everything everyone could conceivably want, but never all the way there. And that is why technology doesn’t destroy jobs and never will.

These are such mindblowing ideas for people who have not been exposed to them that it may take you some time to wrap your head around them. But I swear I’m not making them up – in fact you can read all about them in standard economics textbooks. I recommend the chapter The Curse of Machinery in Henry Hazlitt’s Economics In One Lesson, and Frederic Bastiat’s satirical Candlemaker’s Petition to blot out the sun due to its unfair competition with the lighting industry, here modernized for our times.

But this time is different, you’re thinking. This time, with AI, we have the technology that can replace ALL human labor.

But, using the principles I described above, think of what it would mean for literally all existing human labor to be replaced by machines.

It would mean a takeoff rate of economic productivity growth that would be absolutely stratospheric, far beyond any historical precedent. Prices of existing goods and services would drop across the board to virtually zero. Consumer welfare would skyrocket. Consumer spending power would skyrocket. New demand in the economy would explode. Entrepreneurs would create dizzying arrays of new industries, products, and services, and employ as many people and AI as they could as fast as possible to meet all the new demand.

Suppose AI once again replaces that labor? The cycle would repeat, driving consumer welfare, economic growth, and job and wage growth even higher. It would be a straight spiral up to a material utopia that neither Adam Smith or Karl Marx ever dared dream of.

We should be so lucky.

If you have read the introduction I wrote to the previous issue of this newsletter, you know that I disagree with some of the assumptions Marc makes here, especially around the notion that wages will rise. In absolute terms, absolutely. Relative to the cost of living, I am not so sure.

I’m also not so sure about the notion that an employer will pay the worker more money as he is now more productive. We have seen evidence of the opposite in previous issues of Synthetic Work and you’ll read more below.

I’m not the only one having doubts about Marc’s assumptions.

Gideon Lichfield, the editor-in-chief of all editions of WIRED, former editor-in-chief of MIT Technology Review, and one of the founding editors at Quartz, has much to answer back:

I’d be surprised if Andreessen’s highly educated audience actually believes the lump of labor fallacy, but he goes ahead and dismantles it anyway, introducing—as if it were new to his readers—the concept of productivity growth. He argues that when technology makes companies more productive, they pass the savings on to their customers in the form of lower prices, which leaves people with more money to buy more things, which increases demand, which increases production, in a beautiful self-sustaining virtuous cycle of growth. Better still, because technology makes workers more productive, their employers pay them more, so they have even more to spend, so growth gets double-juiced.

There are many things wrong with this argument. When companies become more productive, they don’t pass savings on to customers unless they’re forced to by competition or regulation. Competition and regulation are weak in many places and many industries, especially where companies are growing larger and more dominant—think big-box stores in towns where local stores are shutting down. (And it’s not like Andreessen is unaware of this. His “It’s time to build” post rails against “forces that hold back market-based competition” such as oligopolies and regulatory capture.)

Moreover, large companies are more likely than smaller ones both to have the technical resources to implement AI and to see a meaningful benefit from doing so—AI, after all, is most useful when there are large amounts of data for it to crunch. So AI may even reduce competition, and enrich the owners of the companies that use it without reducing prices for their customers.

Then, while technology may make companies more productive, it only sometimes makes individual workers more productive (so-called marginal productivity). Other times, it just allows companies to automate part of the work and employ fewer people. Daron Acemoglu and Simon Johnson’s book Power and Progress, a long but invaluable guide to understanding exactly how technology has historically affected jobs, calls this “so-so automation.”

For example, take supermarket self-checkout kiosks. These don’t make the remaining checkout staff more productive, nor do they help the supermarket get more shoppers or sell more goods. They merely allow it to let go of some staff. Plenty of technological advances can improve marginal productivity, but—the book argues—whether they do depends on how companies choose to implement them. Some uses improve workers’ capabilities; others, like so-so automation, only improve the overall bottom line. And a company often chooses the former only if its workers, or the law, force it to. (Hear Acemoglu talk about this with me on our podcast Have a Nice Future.)

The real concern about AI and jobs, which Andreessen entirely ignores, is that while a lot of people will lose work quickly, new kinds of jobs—in new industries and markets created by AI—will take longer to emerge, and for many workers, reskilling will be hard or out of reach. And this, too, has happened with every major technological upheaval to date.

Another set of things you might have read in my introduction to the previous issue of Synthetic Work.

My hope is that Marc is right and I am deadly wrong.

Regardless, keep in mind everything he said when you read the next two stories below.

—

On the opposite side of the spectrum of opinions on the impact of AI on jobs, we have a podcast with Mo Gawdat, the former Chief Business Officer of Google X, the moonshot factory of Alphabet.

This is the man that, for 5 years, managed some of the smartest people in the world, in one of the most secret research facilities in the world, working on some of the most advanced technologies in the world.

Mo recorded a podcast where he talks about a wide range of topics and it’s worth your full attention. But if you don’t have the time or the patience, the following 10 minutes, focused on AI and jobs, are quite intense and really critical to listen to:

If you have read Synthetic Work for a while, you’ll notice that Mo’s words are almost verbatim what I have written in this newsletter over the past four months. So much so that many people sent me this interview saying “He sounds just like you!”.

The difference is that he led Google X and I worked somewhere else. Different clout 🙂

And so here you have two industry leaders, with an enormous perspective, coming from very privileged observation points, and dramatically different opinions.

People will align with one or the other depending on their own biases and their own experiences. Listening and reading will change nobody’s mind. This is why Synthetic Work tracks the stories of how people are impacted by AI.

The only thing that matters is what is happening in the real world today, tomorrow, month after month.

—

Somebody else with a perspective to keep in the highest regard, perhaps even more than the ones of these two gentlemen, has recently weighed in on the topic of AI and jobs: Gita Gopinath.

Gita is the former chief economist of the International Monetary Fund (IMF). She served in that position for three years and now she is the first deputy managing director of the IMF.

Colby Smith interviewed Gita for the Financial Times:

A top official at the IMF has warned of the risk of “substantial disruptions in labour markets” stemming from generative artificial intelligence, as she called on policymakers to quickly craft rules to govern the new technology.

In an interview with the Financial Times, the fund’s second-in-command Gita Gopinath said AI breakthroughs, especially those based on large-language models such as ChatGPT, could boost productivity and economic output but warned the risks were “very large”.

“There is tremendous uncertainty, but that…doesn’t mean that we have the luxury of time to wait and think of the policies that we will put in place in the future,” said Gopinath, first deputy managing director of the IMF.

She added: “We need governments, we need institutions and we need policymakers to move quickly on all fronts, in terms of regulation, but also in terms of preparing for probably substantial disruptions in labour markets.”

Gopinath’s comments on AI, her most extensive so far, follow warnings over the potential of the new technology to result in societal upheaval if workers lose their jobs en masse.

Gopinath said automation in manufacturing over past decades served as a cautionary tale, after economists incorrectly predicted large numbers of workers laid off from car production lines would find better opportunities in other industries.

“The lesson we have learned is that it was a very bad assumption to make,” she said. “It was important for countries to actually ensure that the people…left behind were actually being matched with productive work.”

The failure to do so had contributed to the “backlash against globalisation” following the great financial crisis, Gopinath added.

To avoid history repeating itself, governments need to bolster “social safety nets” for workers who are affected while fostering tax policies that do not reward companies replacing employees with machines.

Meanwhile, she warned policymakers to be vigilant in case some corporations emerge with an unassailable position in the new technology. “You don’t want to have supersized companies with huge amounts of data and computing power that have an unfair advantage,” said Gopinath, also citing privacy concerns and AI-fuelled discrimination.

…

“AI could be as disruptive as the Industrial Revolution was in Adam Smith’s time,” she told an audience in Scotland at an event commemorating the economist.

And now, with the perspectives of Marc, Mo, and Gita in mind, let’s look at what’s happening in the real world in the last story of this week.

—

To tell us what’s actually happening below the ivory towers, there are Pranshu Verma and Gerrit De Vynck, reporting for The Washington Post:

When ChatGPT came out last November, Olivia Lipkin, a 25-year-old copywriter in San Francisco, didn’t think too much about it. Then articles about how to use the chatbot on the job began appearing on internal Slack groups at the tech start-up where she worked as the company’s only writer.

Over the next few months, Lipkin’s assignments dwindled. Managers began referring to her as “Olivia/ChatGPT” on Slack. In April, she was let go without explanation, but when she found managers writing about how using ChatGPT was cheaper than paying a writer, the reason for her layoff seemed clear.

“Whenever people brought up ChatGPT, I felt insecure and anxious that it would replace me,” she said. “Now I actually had proof that it was true, that those anxieties were warranted and now I was actually out of a job because of AI.”

…

Experts say that even advanced AI doesn’t match the writing skills of a human: It lacks personal voice and style, and it often churns out wrong, nonsensical or biased answers. But for many companies, the cost-cutting is worth a drop in quality.

…

Lipkin, the copywriter who discovered she’d been replaced by ChatGPT, is reconsidering office work altogether. She initially got into content marketing so that she could support herself while she pursued her own creative writing. But she found the job burned her out and made it hard to write for herself. Now, she’s starting a job as a dog walker.

…

“I’m totally taking a break from the office world,” Lipkin said. “People are looking for the cheapest solution, and that’s not a person — that’s a robot.”

To me, it doesn’t sound like Olivia’s employer contemplated training her to use ChatGPT so she could be more productive and they could pay her more.

That’s what Marc Andreessen envisions.

To me, it sounds more like Olivia’s employer focused on cutting costs rather than increasing productivity. Which sounds very similar to the feeling you have when you read the IBM CEO saying “I could easily see 7,800 jobs replaced by AI and automation over a five-year period,” as we read in Issue #11 – The Nutella Threshold.

It also sounds very similar to the feeling you have when you read the Ford CEO saying “I don’t think they are going to make it” about the possibility of reskilling some of his workforce as his company embraces emerging technologies like AI. We read this one in Issue #12 – ChatGPT Sucks at Making Signs.

I could go on. Thankfully, Synthetic Work keeps track of all these data points so you have the information you need to distinguish between the ivory tower and the real world.

Another story from the same article:

Eric Fein ran his content-writing business for 10 years, charging $60 an hour to write everything from 150-word descriptions of bath mats to website copy for cannabis companies. The 34-year-old from Bloomingdale, Ill., built a steady business with 10 ongoing contracts, which made up half of his annual income and provided a comfortable life for his wife and 2-year-old son.

But in March, Fein received a note from his largest client: His services would no longer be needed because the company would be transitioning to ChatGPT. One by one, Fein’s nine other contracts were canceled for the same reason. His entire copywriting business was gone nearly overnight.

“It wiped me out,” Fein said. He urged his clients to reconsider, warning that ChatGPT couldn’t write content with his level of creativity, technical precision and originality. He said his clients understood that, but they told him it was far cheaper to use ChatGPT than to pay him his hourly wage.

…

Fein was rehired by one of his clients, who wasn’t pleased with ChatGPT’s work. But it isn’t enough to sustain him and his family, who have a little over six months of financial runway before they run out of money.

Now, Fein has decided to pursue a job that AI can’t do, and he has enrolled in courses to become an HVAC technician. Next year, he plans to train to become a plumber.

I’m afraid that’s not a safe job, Fein.

Uber enabled anybody with a smartphone to become a taxi driver. Before Uber, being a cab driver was a highly specialized job that required, at least here in London, a phenomenal capability to memorize the entire map of the city transportation system inside your head. That expertise was worth a lot of money. Now it’s a commodity.

In the same fashion, AI will enable anybody with a smartphone to become a plumber. Augmented Reality (AR) glasses might make it more convenient, but even without them, a large language model like GPT-4 can translate from the description of a plumbing problem in plain English to the technical terms necessary to find the appropriate instructions on how to fix it, and back into a language that the average person can understand.

In the Professional Services industry, McKinsey and Company apparently has almost 50% of its workforce using ChatGPT and other AI tools. That is approximately 15,000 people in 67 countries.

Carl Franzen, reporting for VentureBeat:

“About half of [our employees] are using those services with McKinsey’s permission,” said Ben Ellencweig, senior partner and global leader of QuantumBlack, the firm’s artificial intelligence consulting arm, during a media event at McKinsey’s New York Experience Studio on Tuesday.

Ellencweig emphasized that McKinsey had guardrails for employees using generative AI, including “guidelines and principles” about what information the workers could input into these services.

“We do not upload confidential information,” Ellencweig said.

…

Alex Singla, also a senior partner and global leader of QuantumBlack, implied that McKinsey was testing most of the leading generative AI services: “For all the major players, our tech folks have them all in a sandbox, [and are] playing with them every day,” he said.

…

Singla described how one client, whose name was not disclosed, was in the business of mergers and acquisitions (M&A). Employees there were using ChatGPT and asking it “What would you think if company X bought company Y?,” and using the resulting answers to try and game out the impact of potential acquisitions on their combined business.

“You don’t want to be doing that with a publicly accessible model,” Singla said, though he did not elaborate as to why not.

There are plenty of why nots. Let’s talk about three:…