- A rare interview with the Anthropic CEO Dario Amodei and his view on the integration of AI in business environments.

- Billionaire investor Chamath Palihapitiya on the impact of AI on software development and the implications for public companies pressured by activist investors.

- A leaked conversation during an Adobe staff meeting about the risk of cannibalizing the company’s own business model with AI.

- The New England Journal Of Medicine published a very interesting article on the adoption of AI in the Health Care industry.

- The collaboration between a rapper, Lupe Fiasco, and Google is a great example of collaboration between humans and AI. For now.

- A new report from the UK House of Commons Committee touches on how workers might feel in a workplace where they are surveilled and judged by tech and AI.

P.s.: this week’s Splendid Edition of Synthetic Work is out, titled The Ideas Vending Machine.

In it, you’ll read what WPP, the London Stock Exchange Group, Tinder, and Australia’s Home Affairs Department are doing with AI.

Also, in the What Can AI Do for Me? section, you’ll read how GPT-4 can be used to generate perfectly legit business ideas. For real.

Synthetic Work is now 6 months old.

This milestone comes with a few insights that I’d like to share with you:

- You, dear first-edition reader, have stuck around for this entire time. I’m thankful for (and astonished by) that. Synthetic Work has a churn rate of zero. While there is a seemingly infinite number of resources to read about AI, the particular angle we focus on in this newsletter seems to matter to all of you. I hope you’ll continue to see value in this project as I work to build and scale it in the future.

- You, collectively, are a readership of business leaders. I’ve never seen such a concentration of CEOs, CIOs, and other C-level executives, SVPs/VPs, and Managing Directors adopt a service focused on an emerging technology as quickly as I’ve seen for Synthetic Work. You are an audience of truly outstanding thought leaders across a wide range of industries. It’s my privilege to write for you, and do business with you, every week.

- You have financially supported Synthetic Work from day one by subscribing to the Splendid Edition. You, better than anybody else, know how hard it is to start a business. Especially an independent one like this. But you are helping make this project viable thanks to your enthusiastic support. That said, more support is always welcome as I scale the business to offer you even more value (read below).

- Synthetic Work has evolved to be way more than a newsletter. Information primarily comes to you through the two editions of the newsletter every week but, at this point, the project encompasses multiple web assets, like the AI Adoption Tracker and the How to Prompt section. More of these assets are under construction, like a Vendor Database, a Research Database, and a long-overdue database of recommended AI-powered solutions.

- It’s still very early. The number of people who have realized how much their business, their workforce, and their career will be impacted by AI is still small. In my interactions with clients during consultation days, I still see some questions that would have been important two years ago. Other experts in other sub-fields of AI, like AI law, tell me the same. Synthetic Work has the potential to reach a much bigger audience and it will.

- This is just a timid first step. The way we are seeing AI being used by early adopters in the Splendid Edition is nothing compared to what’s coming. It’s hard to see the possibilities if you don’t read research papers all day. I do it on your behalf, and I promise that what we have seen so far pales in comparison with what’s in the innovation pipeline. Some of the things that I’m building for you depend on AI technology that is maturing rapidly, but is not quite there yet. The idea is that Synthetic Work becomes a showcase for AI applied to business problems.

So, thank you for your support and your trust thus far. If you think Synthetic Work could be valuable for other leaders like you, please consider sharing it with your network. It’s the best way to help it grow.

Alessandro

The first thing that I’d like to point your attention to this week is a rare interview with the Anthropic CEO Dario Amodei.

None of the questions were focused on the impact of AI on jobs, but there are two reasons why the interview is important.

First, Amodei is one of the few human beings who truly understand how far generative AI has come, as his company is busy developing frontier models that can rival OpenAI’s. At some point during the interview, he suggests that AI will reach the ability levels of educated humans in 2-3 years. And yet, he replies “I don’t know” 17 times to the interviewer’s questions.

If the top-of-the-world experts are not certain about what will happen, you should be cautious when you hear a pundit’s prediction about how generative AI will evolve in the next 5 or 10 years.

This doesn’t just mean that the predictions might be too optimistic. It also means that the predictions might be too conservative.

The second reason why the interview is important is that Amodei is one of the few who have highlighted the critical difference between the proofs of concept that you see constantly promoted on social media and the reality of an enterprise implementation:

Q: Why would it be the case that it could pass a Turing Test for an educated person but not be able to contribute or substitute for human involvement in the economy?

A: A couple of reasons. One is just that the threshold of skill isn’t high enough, comparative advantage. It doesn’t matter that I have someone who’s better than the average human at every task. What I really need for AI research is to find something that is strong enough to substantially accelerate the labor of the thousand experts who are best at it.

We might reach a point where the comparative advantage of these systems is not great.

Another thing that could be the case is that there are these mysterious frictions that don’t show up in naive economic models but you see it whenever you go to a customer or something.

You’re like — “Hey, I have this cool chat bot.” In principle, it can do everything that your customer service bot does or this part of your company does, but the actual friction of how do we slot it in? How do we make it work? That includes both just the question of how it works in a human sense within the company, how things happen in the economy and overcome frictions, and also just, what is the workflow? How do you actually interact with it?

It’s very different to say, here’s a chat bot that looks like it’s doing this task or helping the human to do some task as it is to say, okay, this thing is deployed and 100,000 people are using it.

Right now lots of folks are rushing to deploy these systems but in many cases, they’re not using them anywhere close to the most efficient way that they could. Not because they’re not smart, but because it takes time to work these things out.

That’s a key reason why the Splendid Edition of Synthetic Work focuses exclusively on applied AI (even if it’s just in the testing phase).

The interview is dense with insights, but keep in mind that it’s two hours long and the first part is quite technical:

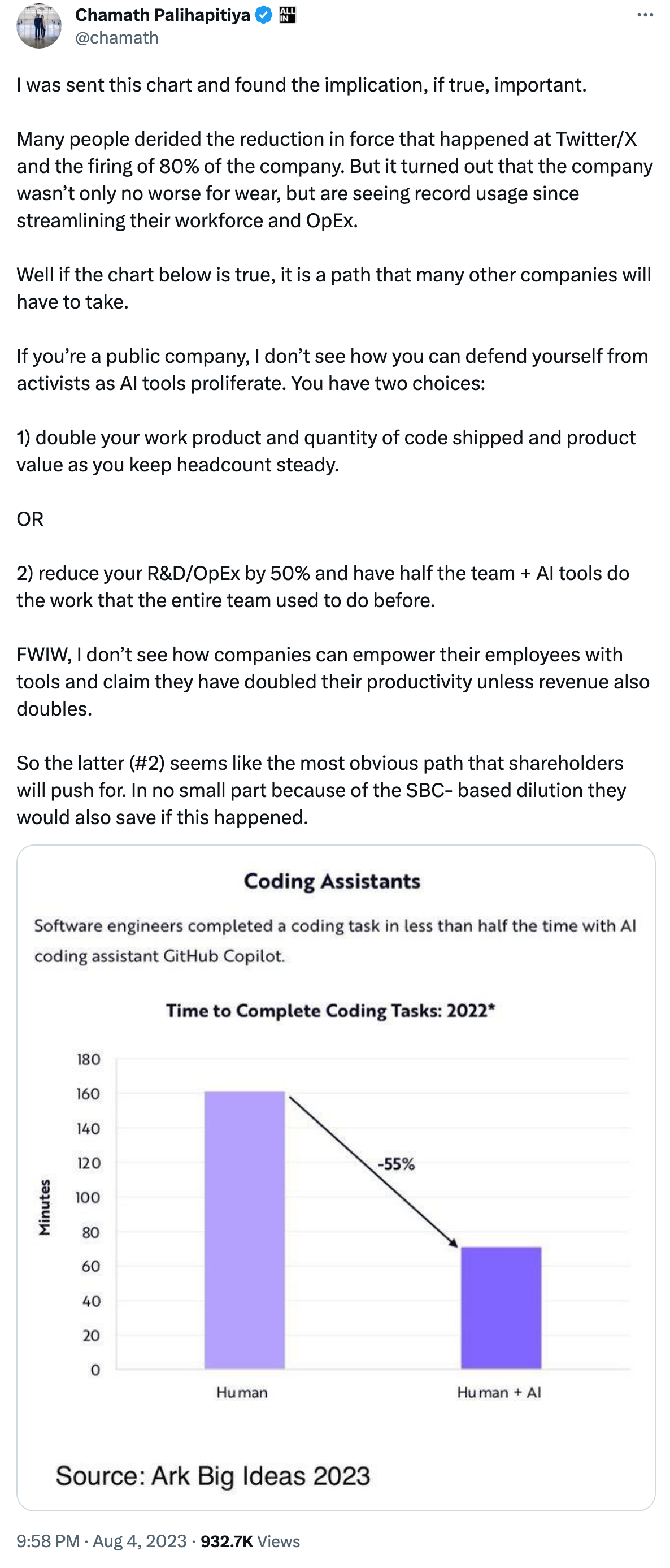

The second thing worth your attention is an observation from the famed billionaire investor Chamath Palihapitiya on the impact of AI on software development.

Palihapitiya doesn’t exactly have an immaculate track record. The investments he led in the last few years, mainly through the SPAC vehicle, have all crashed and burned, leaving retail investors with heavy losses.

Nonetheless, the following comment is interesting and aligns perfectly with what I’ve been writing in multiple issues of Synthetic Work:

The idea that AI shrinking corporate workloads will lead to companies producing more and of higher quality (scenario #1), as Marc Andreessen recently suggested, is an exceptionally optimistic one. My personal experience in corporate environments, and the trends we have been tracking in the Splendid Edition of Synthetic Work, suggest that scenario #2 is much more likely.

The particular angle that Palihapitiya focuses on, the pressure from activist investors, makes me think that this might become a playbook for Private Equity (PE) firms: acquire distressed companies that have more-than-average business functions ideal for AI-driven automation, and then deploy AI models to radically shrink the workforce and increase margins.

The third thing that I would focus on this week is a leaked conversation during an Adobe staff meeting about the risk of cannibalizing the company’s own business model with AI.

Eugene Kim, reporting for Business Insider:

One senior designer at Adobe recently wrote in an internal AI ethics Slack channel that a billboard and advertising business he knows plans to reduce the size of its graphic design team because of Photoshop’s new text-to-image features.

“Is this what we want?” the person wrote.

…

Other messages in the Adobe Slack channel were more critical of the AI revolution, calling it “depressing” and an “existential crisis” for many designers. One person said some artists now feel like they are “slaves” to the AI algorithm, since their jobs will mostly involve just touching-up AI-generated work.

Some had a more positive view. Photoshop made artists more productive, and AI will only increase their efficiency, they said. One person said many freelancers and hobbyists will benefit from the increased output, even if some companies reduce their design workforce.

“I don’t think we should feel guilty for providing better and faster tools, as long as it’s done ethically,” that person wrote in the Slack channel.

…

During an internal staff meeting in June, one employee asked whether generative AI was putting Adobe “in danger of cannibalizing” its lucrative business that targets corporate customers, in exchange for individual users “who want it free or cheap,” according to a screenshot of the question submitted online.

…

A similar question was broached during an Adobe earnings call in June. Jefferies analyst Brent Thill said the “number one question” he gets from investors is whether AI will reduce Adobe’s “seats available.”

This is a closely watched measure of the company’s customer base. Adobe often sells cloud software subscriptions based on the number of seats, or licenses, which give customers access to the technology. A company with, say, 5 graphic designers in-house would buy five licenses. So if designers are getting laid off, demand for licenses might fall, cutting into Adobe’s revenue, or slowing sales growth.

In response to Thill’s question, David Wadhwani, Adobe’s president of digital media, said the company has a history of introducing new technology that leads to more productivity and jobs.

…

Some employees are not sold on this idea. In the internal Slack channel, a group of employees discussed how new generative AI technology is fundamentally different from prior disruptive innovations.

Cameras, for example, still required skill and expertise to produce good photography, they said. In contrast, generating AI images requires almost no skill, raising concerns over losing “craft and expertise that can only be gained through continued practice and personal creativity,” one of the people wrote in the Slack channel.

…

“It does not innovate in the way a camera does in that it replaces people in the mediums that it draws data from instead of opening up new means of expression,” one of the people wrote.

Adobe’s Firefly text-to-image mode is not yet great, but it soon will be. And the new Photoshop features called Generative Fill and Generative Expand, powered by the same AI model, are already impressive.

So, there’s no question that this technology significantly impacts the amount of time a designer needs to spend on a project.

But if Adobe had not done it, somebody else would have. Open source alternatives to those features have existed since the launch of Stable Diffusion 1.0 last summer, and you should expect that these capabilities will become table stakes in photo manipulation software going forward.

This dilemma will soon concern many other technology providers, and not just in the graphic design space.

Every software company that is productizing AI to automate a task that was previously done by humans will eventually reach a threshold where more AI might hurt the business model rather than make the life of the customers better.

That’s what I usually refer to with the expression “It might get better before it gets worse” in the context of artificial intelligence.

The alternative view is that, following Marc Andreessen’s argument again, technology providers will become significantly more profitable because these AI technologies are lowering the cost of entry for new experts.

If, to put it like the Adobe employees of our story, AI will turn a highly sophisticated skill into a button-push exercise, it means that more people will be able to become graphic designers, or software developers, or copywriters. Those words won’t mean the things that they mean today, but will still be jobs that people can do without studying for years.

So we might go from, let’s say, 100,000 graphic designers to 10 million.

The problem will, at that point, be finding enough customers to keep those 10 million designers busy. Or paying them enough to make a living.

It might get better before it gets worse.

You won’t believe that people would fall for it, but they do. Boy, they do.

So this is a section dedicated to making me popular.

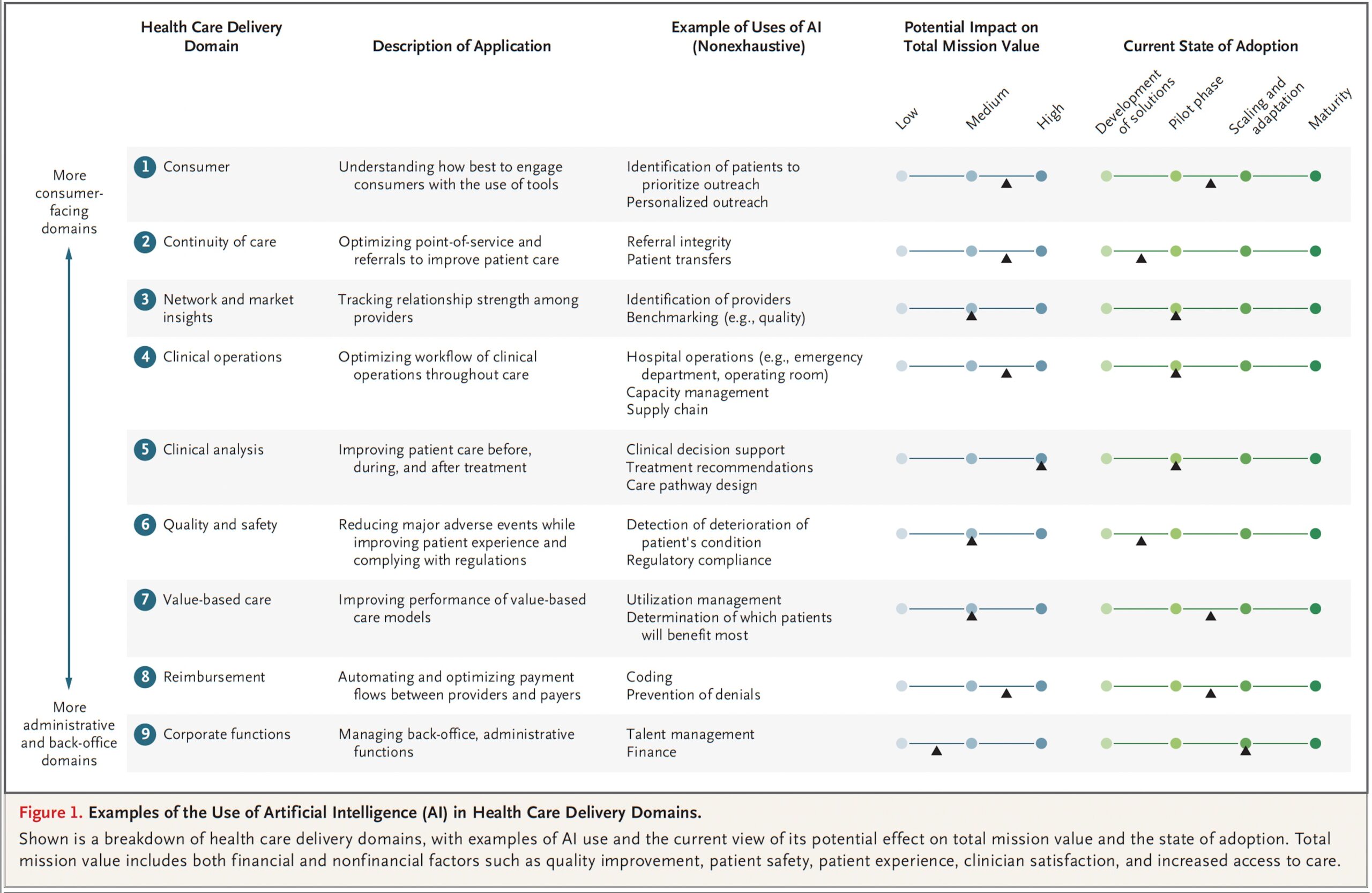

The New England Journal Of Medicine published a very interesting article on the adoption of AI in the Health Care industry. It comes with an important chart:

From the research:

AI adoption in health care delivery lags behind the use of AI in other business sectors for multiple reasons. Early AI took root in business sectors in which large amounts of structured, quantitative data were available and the computer algorithms, which are the heart of AI, could be trained on discrete outcomes — for example, a customer looked at a product and bought it or did not buy it. Qualitative information, such as clinical notes and patients’ reports, are generally harder to interpret, and multifactorial outcomes associated with clinical decision making make algorithm training more difficult.

In last week’s Splendid Edition, Issue #23 – Your Own, Personal, Genie, we saw how three different companies are using a new AI technology developed by AWS to automatically generate clinical notes from patient-doctor conversations.

So something is moving in that direction.

Let’s continue with the most important insight:

We think that the need for AI to help improve health care delivery should no longer be questioned, for many reasons. Take the case of the exponential increase in the collective body of medical knowledge required to treat a patient. In 1980, this knowledge doubled every 7 years; in 2010, the doubling period was fewer than 75 days.1 Today, what medical students learn in their first 3 years would be only 6 percent of known medical information at the time of their graduation. Their knowledge could still be relevant but might not always be complete, and some of what they were taught will be outdated. AI has the potential to supplement a clinical team’s knowledge in order to ensure that patients everywhere receive the best care possible.

Somewhat similarly, in my previous job, and for years, I kept repeating that no human team, no matter how skilled or how numerous, can keep up with the number of events that occur in a large, complex IT environment. Because of this, monitoring dashboards full of blinking lights and numbers are completely useless, and only feed a delusion of control.

The commonality between the above quote and my position on monitoring dashboards is that it’s time to accept that human beings cannot scale to the complexity of the world we have created. And if we cannot scale, the number of errors we make is only destined to increase.

Let’s go back to the article for some more data on AI adoption:

In health care delivery, the role of AI in improving clinical judgment has garnered the most attention, with a particular focus on prognosis, diagnosis, treatment, clinician workflow, and expansion of clinical expertise. Specialties such as radiology, pathology, dermatology, and cardiology are already using AI in the process of image analysis. In radiologic screening, for example, up to 30% of radiology practices that responded to a survey indicated that they had adopted AI by 2020, and another 20% of radiology practices indicated that they planned to begin using AI in the near future.

…

We have found that uses of AI are emerging in nine domains of health care delivery. However, most uses of AI in health care delivery have not been subject to randomized, controlled trials. Therefore, the usual level of evidence required for medical decision making may be lacking.

…

Adoption of AI in health care delivery is lagging behind for several reasons. First, given the many different sources and types of health care data needed, they are known to be more heterogeneous and variable than data in other business sectors (e.g., data to make a movie recommendation in Netflix). This creates challenges in applying AI. Another major reason is the fee-for-service model of payment as compared with a value-based payment model. The latter payment structure would fund measures that improve care or make it safer, which is where the benefit of AI in health care delivery could be of substantial importance. Under a fee-for-service model, these incentives are substantially less prominent or absent altogether. Other documented reasons for the slow adoption of AI in health care delivery are lack of patient confidence, including concerns about privacy and trust in the output; regulatory issues such as Food and Drug Administration approval and reimbursement; methodologic concerns such as validation and communication of the uncertainty of a given AI-based recommendation or decision; and reporting difficulties such as explanations of assumptions and dissemination. These factors will have to be addressed before long-term adoption of AI and full realization of the opportunity that it provides.

Issues within health care organizations may also account for the slow adoption of AI.

I close with a key point that resonates with Dario Amodei’s comment in the first story of this newsletter:

Finally, implementation is critical for AI adoption within an organization. This category takes the most time and effort, and it is often shortchanged by organizations. One challenge is change management. For example, there may be agreement to move to prescriptive scheduling in the operating room, but the implications of this decision are quite different for a hospital administrator, the chief of surgery, individual surgeons, and the operating-room team. Thus, successful AI adoption is likely to require intentional actions that both help to effect behavioral change and address the details holistically, such as creating AI output visualizations that make interpretation easy for clinicians.

Another implementation challenge is workflow integration. The use of AI in clinical operations is more successful when it is treated as a routine part of the clinical workflow. In essence, AI output is more effective when viewed as a member of the team rather than as a substitute for clinical judgment.

Change management. Another drum I’ve been beating for years when explaining what the key ingredient is for a successful rollout of automation technologies.

It turns out that technologies don’t understand and don’t care about change management. It’s up to the business leadership to pay attention to it and align the organization for awareness and cooperation.

This is the material that will be greatly expanded in the Splendid Edition of the newsletter.

This week I invite you to watch a fascinating video about the collaboration between a rapper, Lupe Fiasco, and Google.

The former used a large language model, probably a customized version of Google’s AI assistant Bard, which is powered by the AI model called PaLM 2, but not in the way you would expect:

It’s a great, even emotional, story that reinforces the idea that AI is not a threat to human creativity, but a tool that can help us be more creative.

That certainly was Google’s intent.

Except that… 🙂

…the part that most people don’t want to see is that a generative AI model can now also capture the thought process of the rapper in selecting which words were the best fit for the song.

Today, AI models are not very good at that. But one year ago, they were not able to write a mathematical theorem like a Shakespeare sonnet, and now they can.

Seeing the trajectory of our actions and their long-term consequences is the hardest thing.

For any new technology to be successfully adopted in a work environment or by society, people must feel good about it (before, during, and after its use). No business rollout plan will ever be successful before taking this into account.

The UK House of Commons Committee for culture, media and sport just published a 79-page report titled Connected tech: smart or sinister? in which they dedicate some space to how workers might feel in a workplace where they are surveilled and judged by tech (and that tech is powered by AI):

the introduction of connected tech in workplace environments can also have negative impacts on employees. As the ICO notes, “the key difference is the nature of the employer/employee relationship and its inherent power imbalance”

Dr Tabaghdehi and Dr Matthew Cole, post-doctoral researcher at the Fairwork Project based at the OII, described to us instances where the micro-determination of time and movement tracking through connected devices, which had been introduced to improve productivity, such as in warehouses had also led to workers feeling alienated and experiencing increased stress and anxiety.

Dr Sarah Buckingham similarly described Devon & Cornwall and Dorset Police Services’ trial of a “mobile health (mHealth)” intervention, which consisted of giving officers FitBit activity monitors and Bupa Boost smartphone apps to promote physical activity and reduce sedentary time. The trial increased physical activity on average but also led to “feelings of failure and guilt when goals were not met, and anxiety and cognitive rumination resulting from tracking [physical activity] and sleep”.

A Report on Royal Mail published earlier this year by the then-Business, Energy and Industrial Strategy Committee concluded that data from handheld devices called Postal Digital Assistants (PDAs) had “been used to track the speed at which postal workers deliver their post and, subsequently, for performance management, both explicitly in disciplinary cases and as a tool by local managers to dissuade staff from stopping during their rounds” despite an agreement of joint understanding between Royal Mail and the Communication Workers Union (CWU) in April 2018 to the contrary.

…

Dr Cole also argued that, more broadly, technological transformation would likely lead to a change in task composition and a deskilling of many roles as complex tasks are broken up into simpler ones to allow machines to perform them.

Dr Tabaghdehi cited the education sector as one profession likely to experience disruption due to technological transformation.

…

The ICO had noted that respondents to a recent call for evidence on employment practices “raised concerns around the use of connected tech in workplace scenarios including the increased use of monitoring technologies, as well as the ways in which AI and machine learning are impacting how decisions are made about workers” and said it “will provide more clarity on data protection in the employment context as part of this work”. Dr Cole also called for greater observation and monitoring of AI system deployments, empowered labour inspectorates and a greater role for the Health and Safety Executive (HSE), the UK regulator for workplace health and safety, in regulating workplace AI systems and upholding standards of deployment.

As a result, the Committee recommends:

The monitoring of employees in smart workplaces should be done only in consultation with, and with the consent of, those being monitored. The Government should commission research to improve the evidence base regarding the deployment of automated and data collection systems at work. It should also clarify whether proposals for the regulation of AI will extend to the Health and Safety Executive (HSE) and detail in its response to this report how HSE can be supported in fulfilling this remit.

AI-induced stress and anxiety might become a common topic mentioned by unions in the next few years.

Perhaps we should ask China’s citizens how they feel about it.

This week we attempt something that I genuinely would have not considered worth a minute of my (or your) time just one year ago: we’ll ask the AI to come up with a list of business ideas.

Before you click the unsubscribe button of this newsletter, let me give you some context. If there was no science behind this, I guarantee you that I wouldn’t bother you.

…

Their first discovery is how quickly a human can generate 100 ideas with the help of GPT-4:

Two hundred ideas can be generated by one human interacting with ChatGPT-4 in about 15 minutes. A human working alone can generate about five ideas in 15 minutes. Humans working in groups do even worse.

…

A professional working with ChatGPT-4 can generate ideas at a rate of about 800 ideas per hour. At a cost of USD 500 per hour of human effort, a figure representing an estimate of the fully loaded cost of a skilled professional, ideas are generated at a cost of about USD 0.63 each, or USD 7.50 (75 dimes) per dozen. At the time we used ChatGPT-4, the API fee for 800 ideas was about USD 20. For that same USD 500 per hour, a human working alone, without assistance from an LLM, only generates 20 ideas at a cost of roughly USD 25 each, hardly a dime a dozen. For the focused idea generation task itself, a human using ChatGPT-4 is thus about 40 times more productive than a human working alone.
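If you want to check the researchers’ math, or plug in your own hourly cost, the arithmetic can be sketched in a few lines of Python. The figures are the ones quoted above; the variable names and the last calculation (which folds the USD 20 API fee into the cost per idea) are my own assumptions and not part of the research.

```python
# Figures quoted in the research excerpt above.
HOURLY_COST_USD = 500          # fully loaded cost of a skilled professional
IDEAS_PER_HOUR_WITH_LLM = 800  # one human working with ChatGPT-4
IDEAS_PER_HOUR_ALONE = 20      # one human working alone
API_FEE_USD = 20               # quoted API fee for 800 ideas


def cost_per_idea(hourly_cost: float, ideas_per_hour: float) -> float:
    """Human cost of a single idea, in USD."""
    return hourly_cost / ideas_per_hour


with_llm = cost_per_idea(HOURLY_COST_USD, IDEAS_PER_HOUR_WITH_LLM)
alone = cost_per_idea(HOURLY_COST_USD, IDEAS_PER_HOUR_ALONE)
per_dozen_with_llm = with_llm * 12
productivity_ratio = IDEAS_PER_HOUR_WITH_LLM / IDEAS_PER_HOUR_ALONE

# Assumption (not in the research excerpt): add the API fee to the hourly cost.
with_llm_and_api = cost_per_idea(
    HOURLY_COST_USD + API_FEE_USD, IDEAS_PER_HOUR_WITH_LLM
)

print(f"With ChatGPT-4: USD {with_llm:.3f} per idea")
print(f"With ChatGPT-4: USD {per_dozen_with_llm:.2f} per dozen")
print(f"Human alone: USD {alone:.2f} per idea")
print(f"Productivity ratio: {productivity_ratio:.0f}x")
print(f"With ChatGPT-4, API fee included: USD {with_llm_and_api:.2f} per idea")
```

The USD 0.63 in the quote is simply USD 0.625 rounded up. Even with the API fee included, the cost per idea barely moves, which is why the human hourly cost dominates the comparison.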

Breathtaking, but only as long as these AI-generated ideas are not complete crap. In fact, per the researchers’ premise, to be useful, an LLM has to generate a few truly exceptional ideas rather than a lot of not-completely-crap ones.

So, how did they evaluate the ideas generated by GPT-4?

…

OK.

It’s time to try this ourselves, using a slightly modified version of the prompt used by the researchers.

Given that it’s the 6th monthiversary of Synthetic Work, I think it’s the perfect time to try to generate 100 ideas to evolve this project.

…

Here’s the first batch of 10 ideas:

…

Idea #1 is already in motion. As you know, I’ve started offering consulting services to companies that want to adopt AI, and I certainly thought about expanding the service to offer access to fellow experts in adjacent areas (like AI and legal).

I’m not going to comment on idea #2. You can tell me if this is a Synthetic Work service that you would be interested in.

I never thought about idea #3. Is it an exceptional one? Perhaps not.

I think about idea #4 every day, even under the shower. Technology is not there yet, but it’s coming.

Ideas #5, #9, and #10 are very difficult to implement. Idea #9 is not adjacent to Synthetic Work, in my opinion. Also: are they exceptional?

Ideas #6 and #7 are adjacent media projects tailored to provide education and cybersecurity content. As Synthetic Work evolves, you should expect more vertical content. Nothing groundbreaking.

Idea #8 is confusing in its description. It’s a mix of content tailored for the Pharmaceutical industry, similar to ideas #6 and #7, and an actual drug discovery platform, which is what Google DeepMind is doing.

…

Remember: we said that the value of using an LLM as a business idea generator is that there’s judgment involved to bias the process.

OK.

I’ll leave you with another two batches of 10 ideas each.

…

Especially in this last batch, I’m sure you’ll recognize a few things that Synthetic Work already does. So, I wouldn’t say that GPT-4 is doing a worse job than a human (me, in this case) at idea generation.

Of course, to have a chance to stumble on a truly exceptional idea, just like the researchers said, we’ll have to generate thousands of them. 30 is nowhere near enough.

If you try this experiment yourself, in the areas that matter to you, and you stumble on something truly exceptional, please let me know.

Now, to close.

Why is this research so incredibly important?

…