- Intro

- What’s left for artists to do?

- What Caught My Attention This Week

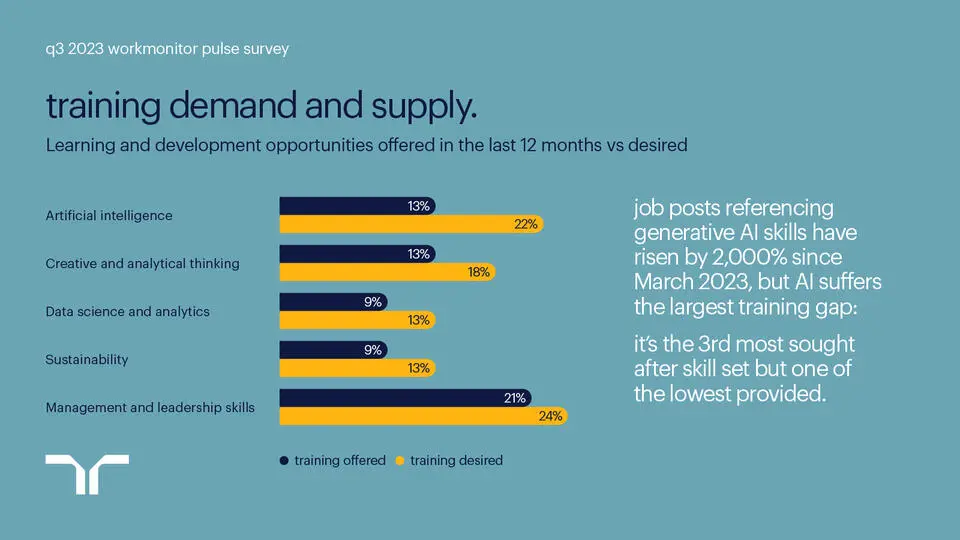

- A survey conducted by Randstad highlights the lack of upskilling for the global workforce on AI.

- The organizers of Eurovision are considering banning AI-generated songs from the competition.

- UK publishers are urging the Prime Minister to protect works ingested by AI models.

- The Way We Work Now

- The US Securities and Exchange Commission Chair Gary Gensler on how AI might impact the way people trade.

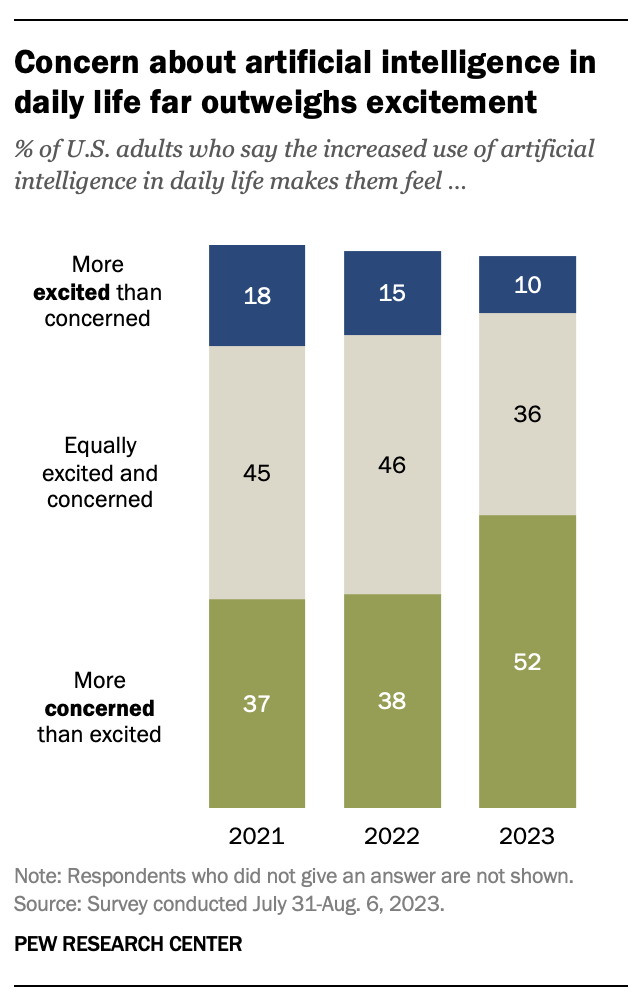

- How Do You Feel?

- According to Pew Research, 52% of Americans feel more concerned than excited about the increased use of artificial intelligence.

- Putting Lipstick on a Pig

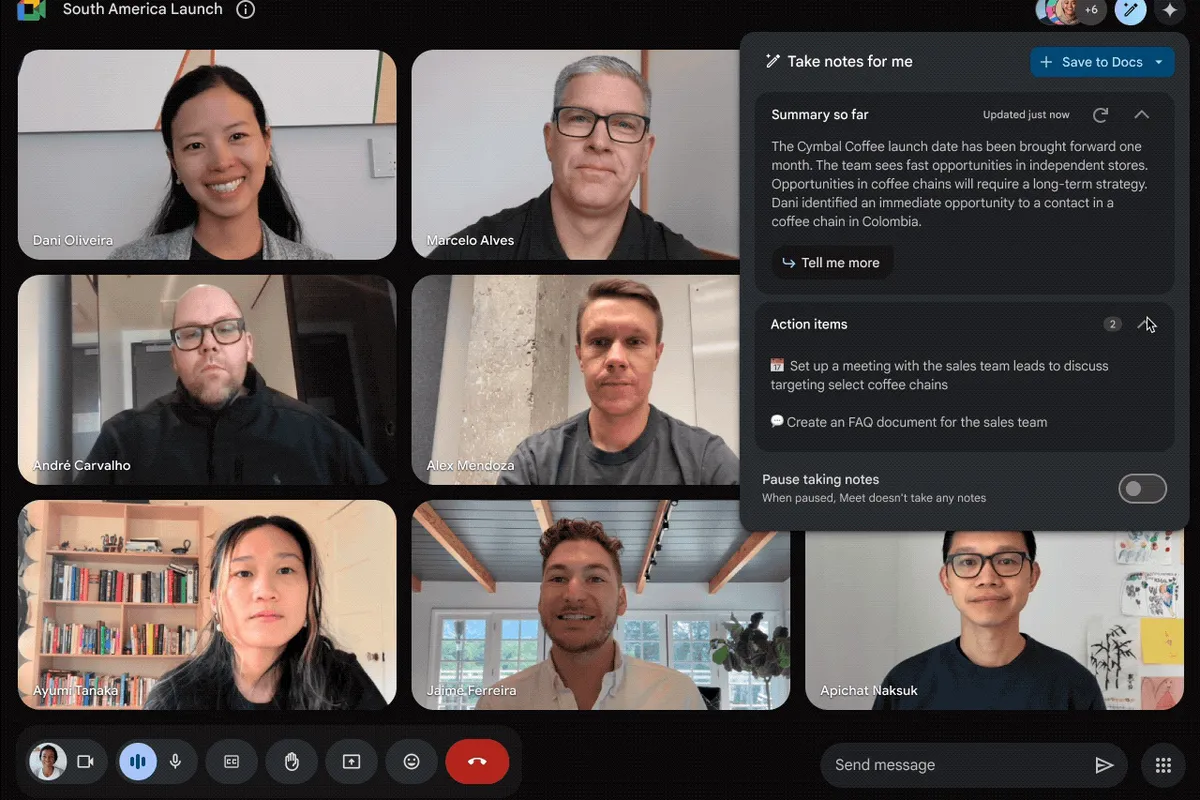

- Microsoft, Google, and Zoom are now ready to help you reach the maximum level of alienation during corporate meetings.

This is a special issue of Synthetic Work: I’m experimentally introducing an audio version, powered by ElevenLabs technology.

If you want to know more about why and how I did it, read this week’s Splendid Edition.

If you like it and you’d like this to become a permanent feature of Synthetic Work, reply to this email and let me know.

Notice: This is not my voice. In fact, it’s nobody’s voice.

Earlier this week, I went to a print shop to print a large-scale version of an AI image. While I was waiting for the job to be completed, I had a chat with one of the employees, who is a young artist.

He was aghast to discover that I used Stable Diffusion to generate the artwork he was preparing to print.

As in dozens of other conversations I’ve had with artists like him, he told me:

The only way I could potentially consider using AI is by feeding it my past works so it can generate more things in my style. But if I do so, then what’s left to do for me?

It’s a comment I hear often from artists who have not spent enough time learning how text2image (t2i) AI systems work, as was the case here.

I explained to him that, at least for now, his role as an artist is more important than ever. These AI models might take over the production of the artwork, but his taste and experience are still essential to improve the composition (for example, via inpainting) and, more importantly, to curate: to pick the one variant of an image that the audience should see and that should go to market.

Eventually, AI models will surpass humans even in those tasks, especially if we integrate them with all sorts of metrics about how people feel about each generated image and how much they are willing to pay for them.

But not yet.

For a few more years, the importance of the human artist will still be critical.

Yet, rather than embracing the new technology and seeing if it could elevate his work, he refused to touch it.

His plan, he told me, was to simply wait for everybody to embrace AI and then sell his human craftsmanship at a high price, like we do today with hand-made tailored suits or artisanal shoes.

It’s a great plan, I told him, as long as you can afford to wait for the day when human craftsmanship is so rare and so in demand that you can command a price high enough to make a living.

He didn’t reply.

If you want to wait for AI to go away in one way or another, be sure you have the resources to survive until that day comes.

Alessandro

The first thing that I thought was interesting this week is a survey conducted in October, 2022 by the company Randstad, which highlights the lack of upskilling for the global workforce on AI.

Shubham Sharma, reporting for VentureBeat:

according to a new Workmonitor Pulse survey from staffing company Randstad, despite this surge, AI training efforts continue to lag.

Randstad analyzed job postings and the views of over 7,000 employees around the world and discovered that even though there’s been a 20-fold increase in roles demanding AI skills, only about one in ten (13%) workers have been offered any AI training by their employers in the last year. The findings highlight a major imbalance that enterprises need to address to truly harness the opportunities of AI and succeed.

…

In the survey, 52% of the respondents said they believe being skilled in AI tools will improve their career and promotion prospects, while 53% said that the technology will have an impact on their industries and roles.

Similar stats were noted in the U.S., where 29% of the workers are already using AI in their jobs. In the country, 51% said they see AI influencing their industry and role and 42% expressed excitement about the prospects it will bring to their workplace. For India and Australia, the figures were even higher.

…

As of now, the survey found, AI handling is the third most sought after skillset – expected by 22% of the participants over the next 12 months – after management and leadership (24%) and wellbeing and mindfulness (23%), but only 13% of the workers claim they have been given opportunities to upskill in this area in the last 12 months.

The gap between expected and offered AI training was found to be highest in Germany (13 percentage points) and the UK (12 percentage points), followed by the US (8 percentage points).

Interesting, but not surprising.

I’ve seen first-hand how little big corporations invest in training and upskilling, even for their most valuable employees.

I am working with some organizations on their upskilling programs, but I remain skeptical that the majority of companies will do the right thing.

This is why it’s so critical that you don’t wait for your employer to give you the tools you need to succeed in a future dominated by AI.

The Splendid Edition of Synthetic Work is meant to help you with that. It’s meant to help you understand what you can do with AI, and how to do it, at work, in your line of business, while you learn what other companies like yours are doing as well.

The second thing that I thought was interesting this week is the news that the Eurovision organization is considering banning AI-generated songs from the competition.

Thomas Seal, reporting for Bloomberg:

Eurovision, the kitschy annual pop song contest, is debating a ban on artificial intelligence, the latest sign of the entertainment industry’s concerns over the emerging technology.

“What if at the Eurovision Song Contest we suddenly get an AI-created song?” said Jean-Philip de Tender, deputy director general of the European Broadcasting Union, an alliance of TV companies that oversees the contest. The EBU is “reflecting on how do we need this in the rulebook, that the creativity should come from humans and not from machines.”

While new rules require a discussion with the EBU’s members and governing bodies, the competition should reward “people on stage, who have achieved something in writing a song and performing a song,” de Tender said in an interview with Bloomberg Thursday at the Edinburgh TV Festival.

…

Eurovision has inspired AI experiments in the past. In 2019, algorithms developed in part by Oracle Corp. analyzed hundreds of past submissions to create the melody and lyrics for “Blue Jeans and Bloody Tears,” a duet by 1978 Eurovision winner Izhar Cohen and a pink robot.

This year, Eurovision reached an audience of 162 million, according to the contest’s website.

Nothing. Absolutely nothing will prevent the music industry from generating songs with AI. Those songs will be the most popular songs ever created. And the ones producing them will be the same music labels that today are fighting against anonymous people cloning the voices of famous singers to create spoof songs, along with the music streaming services.

That’s because these two industry constituencies, music labels and streaming services, are the ones that have the most data on what people have liked in the last 100 years of music.

If you think that they are not going to use that data to generate the most wanted songs ever, I have a bridge to sell you.

Do you remember the popular synthetic song Heart on My Sleeve featuring the unauthorized voice of Drake that we mentioned in Issue #10 – The Memory of an Elephant?

The anonymous author published a new song titled Whiplash on TikTok.

I can’t show you the original clip on its own because it has since been removed, but I can show it to you next to the clip of a famous TikToker reacting to it:

I urge you to listen to the song, even if you hate rap.

I urge you to read the comments and do a bit of exploring until you realize that people are crazy for this song, and they care exactly zero that it’s made with AI.

But the anonymous creator isn’t content with that. They have to stir the pot even more with the accompanying text:

The future of music is here. Artists now have the ability to let their voice work for them without lifting a finger. That being said, ghostwriter is open for business.

@travisscott @21savage it’s clear that people want this song. DM me on Instagram if you are interested in allowing me to release this record, or if you’d like me to remove this post.

If you’re down to put it out, I will clearly label it as A.I., and I’ll direct royalties to you. Respect either way.

Do you realize how many kids all around the world will now flock to generative AI music models in an attempt to emulate this?

And here’s the inevitable evolution, documented by Joe Coscarelli, reporting for The New York Times:

Behind the scenes, however, the shadowy act and its team were making overtures to the very industry figures “Heart on My Sleeve” had unnerved. In the months since, those behind the project have met with record labels, tech leaders, music platforms and artists about how to best harness the powers of A.I., including at a virtual round-table discussion this summer organized by the Recording Academy, the organization behind the Grammy Awards.

“I knew right away as soon as I heard that record that it was going to be something that we had to grapple with from an Academy standpoint, but also from a music community and industry standpoint,” Harvey Mason Jr., a producer who is the chief executive of the Recording Academy, said in an interview. “When you start seeing A.I. involved in something so creative and so cool, relevant and of-the-moment, it immediately starts you thinking, ‘OK, where is this going? How is this going to affect creativity? What’s the business implication for monetization?’”

Mason said he had contacted Ghostwriter directly on social media after being impressed with “Heart on My Sleeve.” He added that Ghostwriter attended the meeting in character, including using a distorted voice.

…

A representative for Ghostwriter, who requested anonymity to not expose those behind the project — acknowledging that much of its marketing power comes from its mystery — confirmed that “Whiplash,” like “Heart on My Sleeve,” was an original composition written and recorded by humans. Ghostwriter attempted to match the content, delivery, tone and phrasing of the established stars before using A.I. components.

“As far as the creative side, it’s absolutely eligible because it was written by a human,” said Mason of the Recording Academy.

He added that the Academy would also look at whether the song was commercially available, with Grammy rules stating that a track must have “general distribution,” meaning “the broad release of a recording, available nationwide via brick-and-mortar stores, third-party online retailers and/or streaming services.”

If a Grammy goes to either of these songs, it will open the gates to a new era of music.

The music streaming services are especially primed to attempt creating hits with AI. They are struggling to compete against each other and grow their subscriber base. The music labels cost them a lot of money in royalties, but they can’t get rid of them. Human artists can say or do nasty things that force them to break multimillion-dollar contracts. And so on.

They have been handed, on a silver platter, the opportunity to get rid of all of these problems by using AI to generate synthetic singers and incredibly popular songs.

The technology is maturing quickly, and this is one of the jobs that might be most impacted by AI in the years to come.

I know that many of you believe AI will never be able to replace human creativity. My answer to all of you is always the same: watch Everything is a Remix.

The third interesting thing of the week is the news that UK publishers are urging the Prime Minister to protect works ingested by AI models.

Dan Milmo, reporting for The Guardian:

UK publishers have urged the prime minister to protect authors’ and other content makers’ intellectual property rights as part of a summit on artificial intelligence.

The intervention came as OpenAI, the company behind the ChatGPT chatbot, argued in a legal filing that authors suing the business over its use of their work to train powerful AI systems “misconceived the scope” of US copyright law.

The letter from the Publishers Association, which represents publishers of digital and print books as well as research journals and educational content, asks Rishi Sunak to make clear at the November summit that intellectual property law must be respected when AI systems absorb content produced by the UK’s creative industries.

…

In its letter, the Publishers Association said: “On behalf of our industry and the wider content industries, we ask that your government makes a strong statement either as part of, or in parallel with, your summit to make clear that UK intellectual property law should be respected when any content is ingested by AI systems and a licence obtained in advance.”

…

In the UK, the government has backtracked on an initial proposal to allow AI developers free use of copyrighted books and music for training AI models. The exemption was raised by the Intellectual Property Office in June 2022 but ministers have since rowed back on it. In a report published on Wednesday, MPs said the handling of the exemption proposal showed a “clear lack of understanding of the needs of the UK’s creative industries”.

…

The letter from the publishers’ trade body said the UK’s “world-leading” creative industries should be supported in parallel with AI development. It pointed to research that estimated the publishing industry to be worth £7bn to the UK economy, while employing 70,000 people and supporting hundreds of thousands of authors.

A lot of people’s jobs are on the line if we end up being able to publish a bestselling book with a single prompt and the push of a button.

As I wrote before, for now, it’s strikes and calls to the politicians. The next logical step is sabotage.

Don’t expect these people to go down without a fight.

Actors, musicians, writers. One by one, every category is rising up against the use of AI to generate synthetic content. When will corporate employees join them?

Are we ready to address that?

This is the material that will be greatly expanded in the Splendid Edition of the newsletter.

Long overdue, I’d like to draw your attention to a comment that the Chair of the US Securities and Exchange Commission (SEC), Gary Gensler, has reiterated about the impact of AI on the way people trade.

From the transcript:

A lot of the recent buzz has been about such generative AI models, particularly large language models. AI, though, is much broader. I believe it’s the most transformative technology of our time, on par with the internet and mass production of automobiles.

…

The possibility of one or even a small number of AI platforms dominating raises issues with regard to financial stability. While at MIT, Lily Bailey and I wrote a paper about some of these issues called “Deep Learning and Financial Stability.”[30] The recent advances in generative AI models make these challenges more likely.

AI may heighten financial fragility as it could promote herding with individual actors making similar decisions because they are getting the same signal from a base model or data aggregator. This could encourage monocultures. It also could exacerbate the inherent network interconnectedness of the global financial system.

Thus, AI may play a central role in the after-action reports of a future financial crisis.

While current model risk management guidance—generally written prior to this new wave of data analytics—will need to be updated, it will not be sufficient. Model risk management tools, while lowering overall risk, primarily address firm-level, or so-called micro-prudential, risks. Many of the challenges to financial stability that AI may pose in the future, though, will require new thinking on system-wide or macro-prudential policy interventions.

The paper Gensler refers to, Deep Learning and Financial Stability, was published in November 2020.

When we imagine how AI could impact the world of finance, we tend to think about it with optimism, imagining easier-to-use and more intelligent tools that, among other things, could further democratize access to retail trading.

The SEC Chair’s perspective turns this idea on its head. Interesting.
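To make the herding mechanism in Gensler’s remarks a bit more concrete, here is a toy simulation. It is a minimal sketch under loudly stated assumptions, not the model from the Gensler and Bailey paper: each hypothetical trader buys or sells based on a blend of one signal shared by everyone (a stand-in for a common base model or data aggregator) and a private signal of their own. The function name `average_agreement` and the `shared_weight` parameter are illustrative inventions.

```python
import random


def average_agreement(shared_weight, n_traders=1000, n_rounds=200, seed=0):
    """Average share of traders siding with the majority in each round.

    Hypothetical toy model: every trader acts on a weighted blend of a
    signal shared by all traders (think: one base model everyone uses)
    and an independent private signal. shared_weight is in [0, 1].
    """
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n_rounds):
        common = rng.gauss(0, 1)  # the same signal for every trader this round
        buys = 0
        for _ in range(n_traders):
            private = rng.gauss(0, 1)  # each trader's own view
            signal = shared_weight * common + (1 - shared_weight) * private
            if signal > 0:
                buys += 1
        # Fraction of traders on the majority side (buy or sell).
        total += max(buys, n_traders - buys) / n_traders
    return total / n_rounds


if __name__ == "__main__":
    for w in (0.0, 0.5, 0.9, 1.0):
        print(f"shared_weight={w:.1f} -> agreement={average_agreement(w):.3f}")
```

With independent signals (`shared_weight=0.0`) traders split roughly half and half; as the shared component grows, agreement climbs, and at `shared_weight=1.0` everyone receives an identical signal, so the whole market moves as one block. That is the monoculture risk in miniature.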

For any new technology to be successfully adopted in a work environment or by society, people must feel good about it (before, during, and after its use). No business rollout plan will ever be successful before taking this into account.

We are a little more than a quarter away from 2024, a date that sounds futuristic for people of my age.

We have all the evidence we need that the artificial intelligence technologies we use today have a lot of room to evolve and mature. Even if our current approach eventually hits a wall, that wall seems far away. And even if that wall exists, what can be done with today’s approach is enough to reshape quite a few jobs and industries.

All of this is to say that the adoption of AI at a planetary scale is inevitable. If you read the Splendid Edition of Synthetic Work, you know this probably better than the overwhelming majority of people in the industry, including most AI experts.

As the perceived destiny of AI has shifted from “It’s another hype cycle before a new AI winter comes” to “It’s inevitable”, it’s important to understand how people’s feelings about AI have evolved as well.

Pew Research offers some help, at least as far as Americans are concerned, with a new report published last week.

Alec Tyson and Emma Kikuchi write about their research:

Overall, 52% of Americans say they feel more concerned than excited about the increased use of artificial intelligence.

…

The share of Americans who are mostly concerned about AI in daily life is up 14 percentage points since December 2022, when 38% expressed this view.

…

Still, there are some notable differences, particularly by age. About six-in-ten adults ages 65 and older (61%) are mostly concerned about the growing use of AI in daily life, while 4% are mostly excited. That gap is much smaller among those ages 18 to 29: 42% are more concerned and 17% are more excited.

…

The rise in concern about AI has taken place alongside growing public awareness. Nine-in-ten adults have heard either a lot (33%) or a little (56%) about artificial intelligence. The share who have heard a lot about AI is up 7 points since December 2022.

Those who have heard a lot about AI are 16 points more likely now than they were in December 2022 to express greater concern than excitement about it.

Now.

In Issue #15 – Well, worst case, I’ll take a job as cowboy, we learned from another Pew Research survey that a lot of Americans talk about ChatGPT but have never used it.

So one might wonder if a lot of people are expressing an opinion on something they don’t know much about.

I know. It would be the first time. An unprecedented event in the history of humanity. But let’s keep our minds open to the possibility.

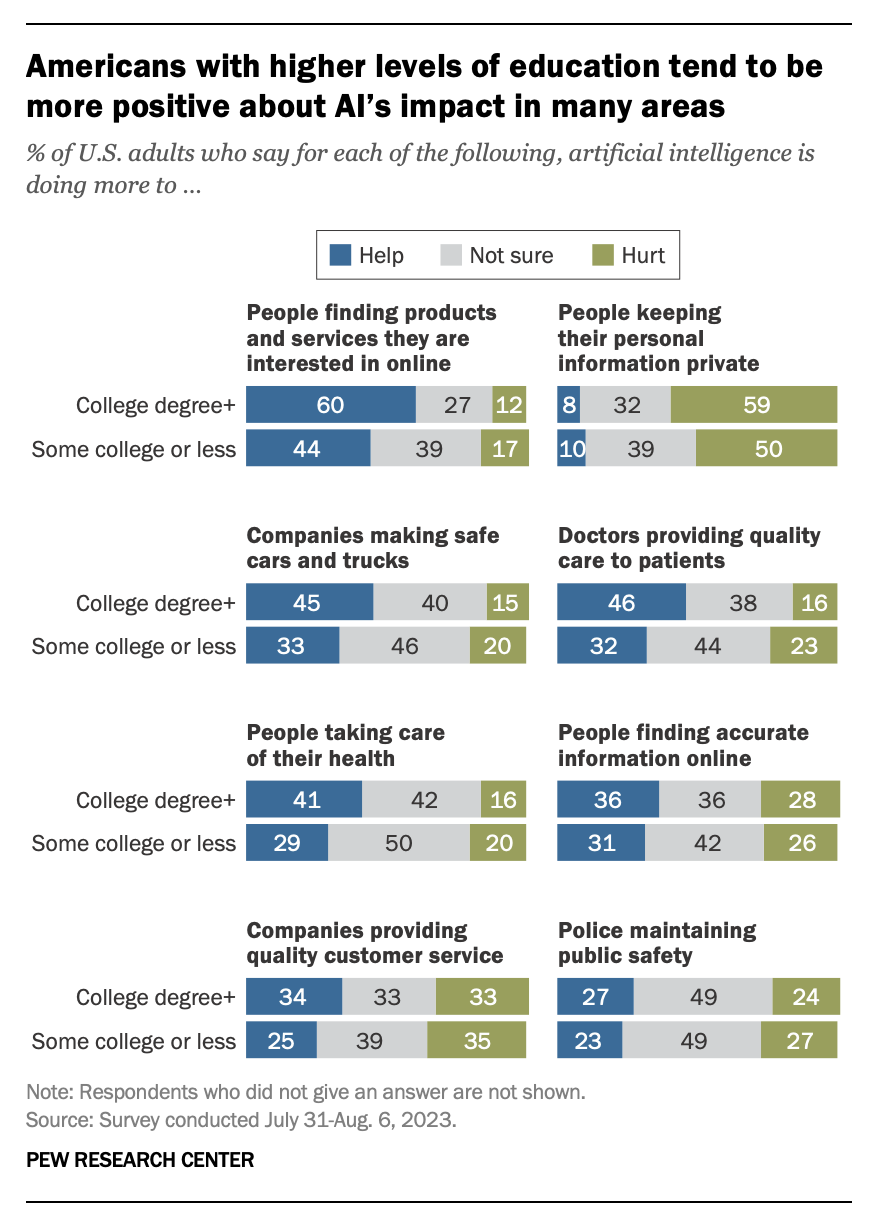

People are not just concerned. They are also confused:

There are several uses of AI where the public sees a more positive than negative impact.

For instance, 49% say AI helps more than hurts when people want to find products and services they are interested in online.

…

Other uses of AI where opinions tilt more positive than negative include helping companies make safe cars and trucks and helping people take care of their health.

In contrast, public views of AI’s impact on privacy are much more negative. Overall, 53% of Americans say AI is doing more to hurt than help people keep their personal information private. Only 10% say AI helps more than it hurts, and 37% aren’t sure.

So, people are happy about receiving help in finding the things they want to buy, but they don’t want to tell anybody about their taste.

“Somebody else can train those AI models, not me. I’ll just enjoy them very much.”

So, here’s the problem, people:

The only way to find somebody (a seller, a lover, etc.) who makes you feel like “Wow! This is exactly what I was looking for! It feels like he/she is reading my mind!” is to let them read your mind.

Hoping to stumble, by pure chance and within your lifetime, on the perfect match (for a product, a service, a lover, etc.) leads to a life of disappointment.

Back to the report with a final data point about the influence of education:

Americans with higher levels of education are more likely than others to say AI is having a positive impact across most uses included in the survey. For example, 46% of college graduates say AI is doing more to help than hurt doctors in providing quality care to patients. Among adults with less education, 32% take this view.

Keep this in mind the next time a tech optimist on X tells you that AI will free us to do greater things.

A few months ago, Microsoft announced the upcoming integration of OpenAI models with Microsoft 365, including the ability to automatically take notes on your behalf during meetings. At the time, I wrote that the immediate consequence might be even less engagement. Which is hard, given that most people in corporate meetings stare at their screens instead of paying attention to the meeting.

It can still happen if AI turns the whole exercise of attending a meeting into a mere formality.

In my experience, people attend meetings primarily to make sure they are there to defend their interests: clinging to their existing resources, fighting for more budget, securing new requisitions, and, most importantly, making sure they can defend themselves if somebody badmouths their jobs in their absence.

If the AI takes all the notes, and people can ask it something like: “send me a message on the corporate chat if any of these situations happen during the conversation”, then the urgency to attend meetings will be reduced to near zero.

All of these considerations, of course, equally apply to Google, which announced the integration of Bard with Google Meet.

Jay Peters, reporting for The Verge:

If Google Meet’s new AI tools are as good as advertised, you might never need to pay attention to another meeting again — or even show up at all. At its Cloud Next conference today, Google revealed a handful of new AI-powered features coming soon to Meet.

One of the biggest new AI-enabled features is the ability for Google’s Duet AI to take notes in real time: click “take notes for me,” and the app will capture a summary and action items as the meeting is going on. If you’re late to a meeting, Google will be able to show you a mid-meeting summary so that you can catch up on what happened. During the call, you’ll be able to talk privately with a Google chatbot to go over details you might have missed. And when the meeting is over, you can save the summary to Docs and come back to it after the fact; it can even include video clips of important moments.

Wait. The best is yet to come:

Another new Meet feature lets Duet “attend” a meeting on your behalf. On a meeting invite, you can click an “attend for me” button, and Google can auto-generate some text about what you might want to discuss. Those notes will be viewable to attendees during the meeting so that they can discuss them.

What could possibly go wrong?

And before you start wondering if this is the worst possible way to solve the meeting problem, please take note of how strong the cognitive dissonance is inside Google:

Ultimately, [Dave Citron, Google’s senior director of product for Meet] says Meet is still working on the same overall goal as before. “We really want meetings to feel like they’re bringing people together into the same room regardless of where you are and your device,” he says.

Of course, now everybody thinks this is a great idea that must be pursued. Hence Zoom’s uncanny timing:

Preparing for that big meeting. Writing emails. Catching up on a backlog of chat messages. Repetitive tasks like these can take up 62% of your workday, not to mention sap your productivity and hurt your ability to collaborate with your team. But now, you’re empowered to do more using Zoom AI Companion.

If communicating with others is not collaboration, what is it? Each one sitting at their desk, in silence, with headphones on, and a screen in front of them, doing their own thing and sending that thing when it’s done?

Even developers who “collaborate” on an open source project, each writing pieces of code, eventually have to communicate with each other and with the group to transfer ideas from one brain to another.

If you strip away the communication part from human interaction, offloading that job to an AI, all that’s left is people doing their atomic tasks based on an input and a set of rules.

Humans as programming functions of a program we call society.

Let’s continue with Zoom’s announcement:

In line with our commitment to responsible AI, Zoom does not use any of your audio, video, chat, screen sharing, attachments, or other communications-like customer content (such as poll results, whiteboard, and reactions) to train Zoom’s or third-party artificial intelligence models.

…

You hop on your computer and see a bunch of chats. No problem — AI Companion will help you compose chat responses with the right tone and length based on your prompts. That time saved can help you focus on a project you need to share with your team right away.

Humans as programming functions.

You grab a cup of coffee and arrive at your first meeting a few minutes late. Instead of interrupting your teammates to find out what you missed, just ask AI Companion to catch you up and it will recap what’s been discussed so far.

…

You can also ask AI Companion more specific questions about the meeting content. After the meeting, AI Companion smart recordings can automatically divide cloud recordings into smart chapters for easy review, highlight important information, and create next steps for attendees to take action.

You missed a meeting yesterday because you were busy or traveling. Now, you no longer need to find someone to fill you in. You can just read the meeting summary generated by AI Companion, which tells you who said what, highlights important topics, and outlines next steps.

Humans as programming functions.

Pressed for time and have important emails to respond to? Later this month, AI Companion will be able to help you compose email messages with the right tone and length.

After several back-to-back calls, you have a ton of unread chats. Coming later this month, AI Companion will be able to summarize your chat messages instantly so you can see the big picture more easily. This fall, you’ll be able to respond to those messages quickly with AI Companion suggestions to help you complete your sentences and responses.

…

In a chat channel, your teammates discuss the marketing plan for a new product but you need to clarify a few key points and want to talk through it with them. This fall, AI Companion will be able to automatically detect meeting intent in chat messages and display a scheduling button to streamline the scheduling process.

Humans as programming functions.

More importantly, if the communication part is offloaded to an AI, you, my dear fellow human, are entirely replaceable.

I know you think your brilliance will emerge from the output that you’ll produce (and that the AI will communicate and promote on your behalf), but it won’t. Very few people move up the ladder because of the quality of their output, and only up to a point.

We are successful because of how we communicate with others.

This week’s Splendid Edition is titled Use AI, save 500 lives per year.

In it:

- Intro

- What is software?

- What’s AI Doing for Companies Like Mine?

- Learn what DoorDash, Kaiser Permanente, and BHP Group are doing with AI.

- A Chart to Look Smart

- Researchers have used large language models to analyze the impact of corporate culture on financial analyst reports. Can this approach be used elsewhere?

- The Tools of the Trade

- Let’s use a voice generation tool to produce an audio version of Synthetic Work