- Intro

- My new YouTube channel is live!

- The recording of Jobs 2.0: The AI Upgrade podcast is now available!

- What Caught My Attention This Week

- What happens when you ask GPT-4 to take a creative thinking test? It beats humans, that’s what happens.

- UK researchers have started tests to see if AI could replace air traffic controllers.

- US Senator Bernie Sanders suggests using AI to give workers more paid time off.

- The Way We Work Now

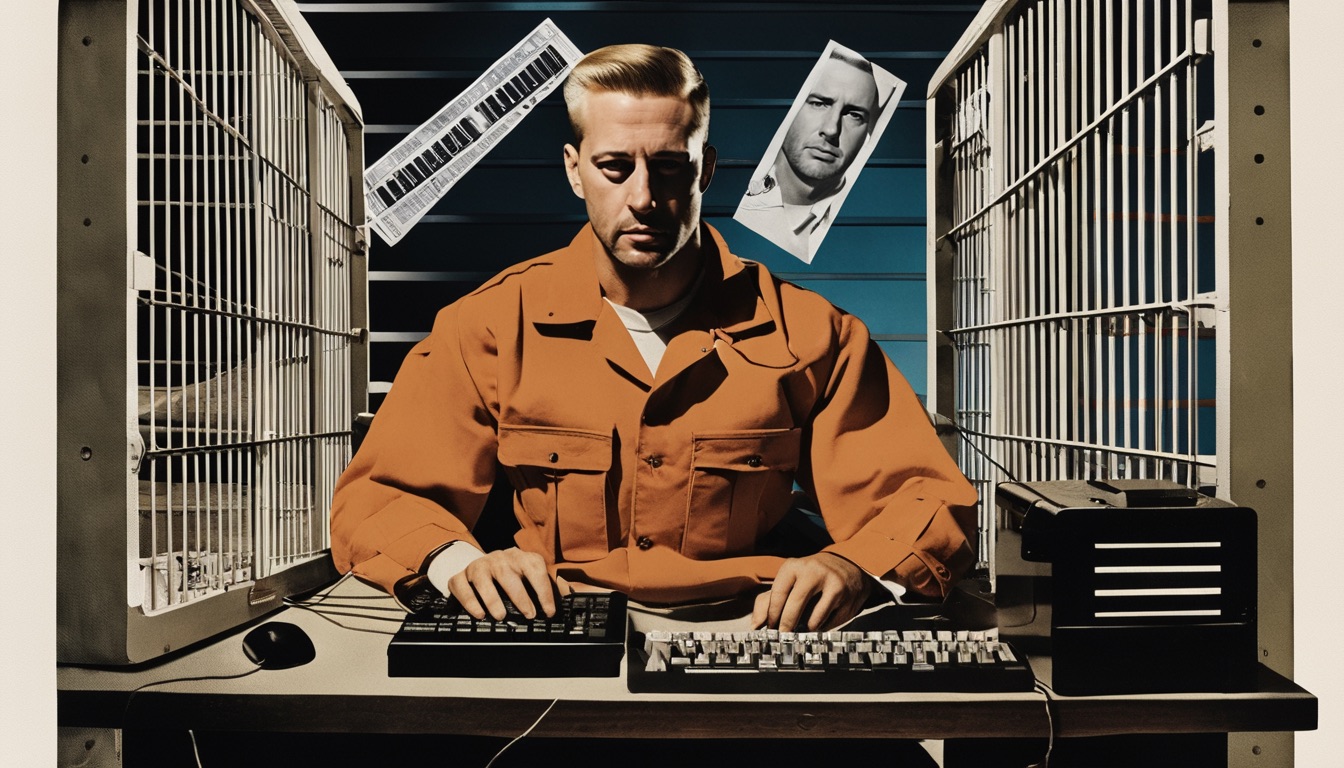

- Prison labor gets a new meaning in the age of AI.

- How Do You Feel?

- Not every artist is upset about the advent of generative AI.

The week came and went, and now I have a YouTube channel called Tech From the Future (or TFTF, if you like weird, unmemorable acronyms).

As I explained last week, its content and format are quite different from those of Synthetic Work. It’s all about answering your questions about AI and many other emerging technologies in short, easy-to-digest videos.

In the first video, I answer a question from Emily: What are the biggest ideas we should pay attention to in AI?

I won’t amplify this content on Synthetic Work every time, so, if you are interested, subscribe to the channel.

Another thing that happened this week is a podcast with AI expert and Synthetic Work reader Dror Gill.

It was an engaging conversation about three main topics:

- Is it true that jobs are going to be displaced by AI? Which ones?

- How can companies manage employees’ fears, doubts and uncertainty as they adopt AI?

- How is AI changing the career outlook of students and what can be done to help them?

This is the unedited recording:

In the coming days, we’ll publish short clips from it that are easier to share and digest.

Alessandro

The most interesting thing I read this week is a test about creative thinking that researchers asked GPT-4 to take.

Erik Guzik, one of the academics, reporting the findings on The Conversation:

With researchers in creativity and entrepreneurship Christian Byrge and Christian Gilde, I decided to put AI’s creative abilities to the test by having it take the Torrance Tests of Creative Thinking, or TTCT.

The TTCT prompts the test-taker to engage in the kinds of creativity required for real-life tasks: asking questions, how to be more resourceful or efficient, guessing cause and effect or improving a product. It might ask a test-taker to suggest ways to improve a children’s toy or imagine the consequences of a hypothetical situation, as the above example demonstrates.

The tests are not designed to measure historical creativity, which is what some researchers use to describe the transformative brilliance of figures like Mozart and Einstein. Rather, it assesses the general creative abilities of individuals, often referred to as psychological or personal creativity.

In addition to running the TTCT through GPT-4 eight times, we also administered the test to 24 of our undergraduate students.

All of the results were evaluated by trained reviewers at Scholastic Testing Service, a private testing company that provides scoring for the TTCT. They didn’t know in advance that some of the tests they’d be scoring had been completed by AI.

Since Scholastic Testing Service is a private company, it does not share its prompts with the public. This ensured that GPT-4 would not have been able to scrape the internet for past prompts and their responses. In addition, the company has a database of thousands of tests completed by college students and adults, providing a large, additional control group with which to compare AI scores.

Our results?

GPT-4 scored in the top 1% of test-takers for the originality of its ideas. From our research, we believe this marks one of the first examples of AI meeting or exceeding the human ability for original thinking.

In short, we believe that AI models like GPT-4 are capable of producing ideas that people see as unexpected, novel and unique. Other researchers are arriving at similar conclusions in their research of AI and creativity.

I talked about this earlier this week in a podcast with AI expert and former CTO Dror Gill (the recording is mentioned in the intro of this issue).

There are two types of people who don’t see a big deal in generative AI: the ones who can’t see how the cool demos they see on social media can possibly apply to their jobs, their company, or their industry, and the ones who are adamant that AI will never be able to match human creativity. Their creativity, to be more specific.

In Issue #24 – The Ideas Vending Machine, I demonstrated how, with the right prompt, GPT-4 was able to come up with creative business ideas to grow the Synthetic Work business.

These ideas were as good as mine. In fact, some of them were already on my roadmap. Now, I may have terrible business ideas, but, personally, I was very impressed.

But if my anecdotal evidence, resulting from a very non-scientific approach to the problem, isn’t enough for you, now you have hard evidence from accredited researchers: The originality of machines: AI takes the Torrance Test.

Curious about the Torrance Test that GPT-4 had to take? Here are the six tasks:

- Activity 1: Asking Questions. The test-taker is tasked with asking questions about a given picture. The picture displays an action involving one or more living characters. An example would be a drawing of a dog looking at a front door. Possible questions asked by the test-taker might then be: “Does this dog have an owner?”, “Is it close to 5pm, when the owner is expected to return?”, and so on.

- Activity 2: Guessing Causes. The test-taker is tasked with guessing what might be causing the action described in the provided image. Examples might be: “The owner is late from work”, “The dog is a puppy waiting for its mother to come back from work for the day, which is chasing squirrels.”

- Activity 3: Guessing Consequences. The test-taker is tasked with guessing the consequences of the action described in the provided image. Examples might be: “The puppy will be excited when his mother returns, and tail-wagging will be prominent among all parties.”

- Activity 4: Product Improvement. The test-taker is tasked with improving a product. The product is described in 2–3 sentences. The test taker is then asked to think of the most interesting and unusual ways to improve the product for the end user. An example is a toy train. Improvements might then include: “Add a refrigerated car to the train to transport lunch to a family member across the room”, “Add a drone as the engine to fly the train in the air”, and so on.

- Activity 5: Unusual Uses. The test-taker is tasked with considering interesting and unusual uses of an item. The name of the item is given but not described to the test-taker. A standard example is a paper clip. Responses might include: “Hang ornaments from a Christmas tree”, “Combine to create a bracelet”, “Fashion an impromptu fish-hook.”

- Activity 6. Just Suppose. The test-taker is given an improbable situation and tasked with imagining what would happen if the improbable situation were to occur. An example would be: “JUST SUPPOSE—all children became giants for one day out of the week. What would happen?” Responses might include: “Parents would need to order larger clothes”, “Sports manufacturers would need to make much bigger soccer balls”, “The bigger soccer balls could cause new damage to homes”, and so on.

You see, it doesn’t really matter if GPT-4 excelled at these tasks because it’s a stochastic parrot, regurgitating everything it’s absorbed from us humans. You could make the case that babies do that, too.

What matters is that, in one way or another, GPT-4 can be as creative as you and me. And that will have implications if and when somebody has to choose between you and me or GPT-4 for a job.

More on this point in an exceptional new piece of research that I discuss in this week’s Splendid Edition.

The second interesting thing of the week is an ongoing test to see if AI could replace air traffic controllers.

Clive Cookson, reporting for the Financial Times:

UK researchers have produced a computer model of air traffic control in which all flight movements are directed by artificial intelligence rather than human beings.

Their “digital twin” representation of airspace over England is the initial output of a £15mn project to determine the role that AI could play in advising and eventually replacing human air traffic controllers.

Dubbed Project Bluebird, the research is a partnership between National Air Traffic Services, the company responsible for UK air traffic control, the Alan Turing Institute, a national body for data science and AI, and Exeter university, with government funding through UK Research and Innovation, a state agency. Its first results were presented at the British Science Festival in Exeter.

Reasons for involving AI in air traffic control include the prospect of directing aircraft along more fuel-efficient routes to reduce the environmental impact of aviation, as well as cutting delays and congestion, particularly at busy airports such as London’s Heathrow.

There is also a shortage of air traffic controllers, who take three years to train.

…

Nats had a more complete database of past flight records than the world’s other air traffic control bodies, which the researchers are using to train their AI system. “We have been preparing for this over the past decade by recording air traffic movements over the UK,” said Richard Cannon, Nats research leader on Bluebird. The data includes 10mn flight paths.

Human controllers and AI agents are now beginning to work together to process aircraft within the project’s digital twin of UK airspace, using accurate simulations of real-life air traffic.

“By the end of the project in 2026, we aim to run live ‘shadow trials’ in which the AI agents will be tested on air traffic data in real time, allowing a direct comparison with the decision making of human air traffic controllers,” said Cannon.

But he emphasised that the AI system would have no authority to actually determine aircraft routing.

If the research succeeds, it is likely to lead first to AI working with people on more extensive operational trials over several years, before Nats and other air traffic bodies consider introducing a computer-controlled system.

If you think that leaving the destiny of the airplane you boarded in the hands of an AI is scary, think again.

Almost eleven years ago, I relocated from Rome to London. One of the last things I had the privilege to do in Rome was visit the control tower of the Leonardo da Vinci airport, one of the busiest airports in Europe.

I knew one of the air traffic controllers, and not only did he give me a tour of the tower, but he also allowed me to sit there and watch his team land hundreds of planes in front of my eyes.

That was one of the most terrifying experiences of my life. And it lasted hours.

Think about the scariest thing, or the most anxiety-inducing thing, you have going on right now in your life. I bet I felt more scared and more anxious in that tower than you do about it. And I was doing absolutely nothing.

Leaving that control tower, I had two thoughts:

- Humans should not be allowed to control air traffic.

- I don’t want to set foot on a plane for the rest of my life.

I had these two thoughts not because the air traffic controllers I observed were not good. They were incredible. But because the margin for error was zero. And there are not many humans who can operate at that level of perfection for hours on end.

This is a job that, maybe, is better off in the hands of machines.

The third thing that I found interesting this week is a comment by US Senator Bernie Sanders on using AI to give workers more paid time off.

Ramon Antonio Vargas, reporting for The Guardian:

If the US’s ongoing artificial intelligence and robotics boom translates into more work being done faster, then laborers should reap some of the gains of that in the form of more paid time off, the liberal US senator Bernie Sanders said Sunday.

…

“I happen to believe that – as a nation – we should begin a serious discussion … about substantially lowering the workweek,” Sanders remarked on CNN’s State of the Union. Citing the parenting, housing, healthcare and financial stresses confronting most Americans while generally shortening their life expectancies, he added: “It seems to me that if new technology is going to make us a more productive society, the benefits should go to the workers.

“And it would be an extraordinary thing to see people have more time to be able to spend with their kids, with their families, to be able to do more … cultural activities, get a better education. So the idea of … making sure artificial intelligence [and] robotics benefits us all – not just the people on top – is something, absolutely, we need to be discussing.”

I can see companies asking their employees to work half-time because AI is doing the rest of the job. Which would be a really bad thing, forcing people, as I suggested in the past, to take on two or even three jobs to sustain the same lifestyle.

I cannot see companies paying for the other half of the time, unless those companies are forced to recognize that the AI models they are using have been trained on the data that their employees have produced, and they compensate those employees in the form of paid time off.

For our society to reach the level of abundance that Sam Altman described in plenty of interviews, generative AI (or whatever will come next) needs to reach capabilities that are far beyond what we have today. The upgrade has to be so dramatic that working becomes a choice, not a necessity.

We are not close to that.

This is the material that will be greatly expanded in the Splendid Edition of the newsletter.

Prison labor gets a new meaning in the age of AI.

Morgan Meaker, reporting for Wired:

“The girls call me Marmalade,” she says, inviting me to use her prison nickname. Early on a Wednesday morning, Marmalade is here, in a Finnish prison, to demonstrate a new type of prison labor.

The table is bare except for a small plastic bottle of water and an HP laptop. During three-hour shifts, for which she’s paid €1.54 ($1.67) an hour, the laptop is programmed to show Marmalade short chunks of text about real estate and then ask her yes or no questions about what she’s just read. One question asks: “is the previous paragraph referring to a real estate decision, rather than an application?”

“It’s a little boring,” Marmalade shrugs. She’s also not entirely sure of the purpose of this exercise. Maybe she is helping to create a customer service chatbot, she muses.

In fact, she is training a large language model owned by Metroc, a Finnish startup that has created a search engine designed to help construction companies find newly approved building projects. To do that, Metroc needs data labelers to help its models understand clues from news articles and municipality documents about upcoming building projects. The AI has to be able to tell the difference between a hospital project that has already commissioned an architect or a window fitter, for example, and projects that might still be hiring.

Around the world, millions of so-called “clickworkers” train artificial intelligence models, teaching machines the difference between pedestrians and palm trees, or what combination of words describe violence or sexual abuse. Usually these workers are stationed in the global south, where wages are cheap. OpenAI, for example, uses an outsourcing firm that employs clickworkers in Kenya, Uganda, and India. That arrangement works for American companies, operating in the world’s most widely spoken language, English. But there are not a lot of people in the global south who speak Finnish.

That’s why Metroc turned to prison labor. The company gets cheap, Finnish-speaking workers, while the prison system can offer inmates employment that, it says, prepares them for the digital world of work after their release.

Some of you might be familiar with a highly technical term known as Reinforcement Learning from Human Feedback, or RLHF.

It’s a technique used to make AI models significantly better by asking humans to judge which is the best output among two or more variants generated by those models.

That is the secret sauce that has made Midjourney, Stable Diffusion, GPT-4, Falcon 180B and many other AI models improve at an exponential pace in a very short period.

But it all depends on the humans you are asking to judge.

If the RLHF process asks a group of people who don’t know anything about medicine to judge which output is most accurate from a medical standpoint, you are not improving the quality of the model.
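For the more technical readers, here is a minimal Python sketch of why the choice of judges matters. It only illustrates the preference-collection step described above, and everything in it is an assumption made for illustration: the function name `preferred_output`, the two hypothetical answer variants, and the invented votes. A real RLHF pipeline goes further and uses these judgments to train a reward model, which a simple majority vote does not capture.

```python
from collections import Counter


def preferred_output(votes):
    """Return the variant picked most often by the human judges.

    `votes` is a list with one entry per judge, each entry being the
    name of the variant that judge preferred. (Hypothetical helper,
    not part of any real RLHF library.)
    """
    counts = Counter(votes)
    winner, _ = counts.most_common(1)[0]
    return winner


# Invented example: two candidate answers to a medical question.
# Assume variant "A" is the medically accurate one, while variant "B"
# merely sounds confident.
expert_votes = ["A", "A", "A", "B", "A"]  # judges with medical training
lay_votes = ["B", "B", "A", "B", "B"]     # judges without it

expert_choice = preferred_output(expert_votes)
lay_choice = preferred_output(lay_votes)

print("Experts reward variant:", expert_choice)
print("Lay judges reward variant:", lay_choice)
```

Same question, same two answers, but the variant that gets rewarded depends entirely on who is doing the judging.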

So, in this story, it’s fascinating to imagine that prisoners are the ones called to improve the quality of the answers generated by the AI models that the rest of us will use.

More on this, but from a slightly different angle in the rest of the article:

others consider this new form of prison labor part of a problematic rush for cheap labor that underpins the AI revolution. “The narrative that we are moving towards a fully automated society that is more convenient and more efficient tends to obscure the fact that there are actual human people powering a lot of these systems,” says Amos Toh, a senior researcher focusing on artificial intelligence at Human Rights Watch.

For Toh, the accelerating search for so-called clickworkers has created a trend where companies are increasingly turning to groups of people who have few other options: refugees, populations in countries gripped by economic crisis—and now prisoners.

A refugee can be a doctor, a lawyer, or a software engineer, highly educated and with strong moral integrity in their home country. But you’d have to ask what kind of bias a refugee carries in answering certain questions during the RLHF session.

Everything has an impact on the training of the models.

And, of course, things can become slippery slopes with unpredictable consequences very quickly:

There is a sense in Finland that the prison project is just the beginning. Some are worried it could set a precedent that could introduce more controversial types of data labeling, like moderating violent content, to prisons. “Even if the data being labeled in Finland is uncontroversial right now, we have to think about the precedent it sets,” says Toh. “What stops companies from outsourcing data labeling of traumatic and unsavory content to people in prison, especially if they see this as an untapped labor pool?”

For any new technology to be successfully adopted in a work environment or by society, people must feel good about it (before, during, and after its use). No business rollout plan will ever be successful before taking this into account.

Earlier this week, OpenAI announced the imminent release of Dall-E 3. As I predicted, it’s deeply integrated into ChatGPT, using the large language model to refine the prompts that will generate the images. And it’s free for people who already pay for the Plus subscription. Which makes the $20/month subscription to access both GPT-4 and Dall-E 3 a no-brainer.

One of the highlights of the announcement is the capability for artists to opt out of the use of their artworks to train future versions of Dall-E. A step that Stability AI took months ago, before it started training Stable Diffusion XL.

Also, Dall-E 3 blocks by default the generation of images in the style of living artists.

All of this is a response to the uproar from many artists upset to see their style replicated by AI models without their consent or a share of the profit, and to watch search engines return gazillions of images that are not theirs and bury links to their websites below thousands of AI-focused websites that list prompts with sentences like “A cat, in the style of Alessandro Perilli.”

But not every artist is upset about this state of things.

Devin Coldewey, reporting for TechCrunch:

A group of artists have organized an open letter to Congress, arguing that generative AI isn’t so bad and, more importantly, the creative community should be included in talks about how the technology should be regulated and defined.

…

the letter, despite being published under the auspices of Creative Commons, conspicuously mischaracterizes the most serious criticism of the AI systems artists object to: that they were created through wholesale IP theft that even now leverages artists’ work for commercial gain, without their consent and certainly without paying them. It’s a strange oversight for an organization dedicated to navigating the complex world of digital copyright and licensing.

To Sen. Schumer and Members of the US Congress:

26 years ago this month, celebrated electronic musician Björk released her third album, saying: “I find it so amazing when people tell me that electronic music has no soul. You can’t blame the computer. If there’s no soul in the music, it’s because nobody put it there.”

We write this letter today as professional artists using generative AI tools to help us put soul in our work. Our creative processes with AI tools stretch back for years, or in the case of simpler AI tools such as in music production software, for decades. Many of us are artists who have dedicated our lives to studying in traditional mediums while dreaming of generative AI’s capabilities.

For others, generative AI is making art more accessible or allowing them to pioneer entirely new artistic mediums. Just like previous innovations, these tools lower barriers in creating art — a career that has been traditionally limited to those with considerable financial means, abled bodies, and the right social connections.

Unfortunately, this diverse, pioneering work of individual human artists is being misrepresented. Some say it is about merely typing in prompts or regurgitating existing works. Others deride our methods and our art as based on ‘stealing’ and ‘data theft.’ While generative AI can be used to exploitatively replicate existing works, such uses do not interest us. Our art is grounded in ingenuity and creating new art. It is well known that all artists build not only on the previous ideas, genres, and concepts that came before, but also on the culture in which they create. Unfortunately, many individual artists are afraid of backlash if they so much as touch these important new tools.

We are speaking out today to advocate for a future of richer and more accessible creative innovation for generations of artists to come. Artists breathe life into AI, directing its innovation towards positive cultural evolution while expanding the essential human dimensions it inherently lacks.

…

For us, generative AI tools are empowering and expressive. We use them not to duplicate others, but rather to make transformative new works and experiences. We are keenly aware of many real issues and impacts with these technologies, as well as with efforts to regulate them. And it is precisely because we use these technologies that our viewpoint is so urgent at this time.

It is true that a minority of the people accessing generative AI tools are using them to create original art, exploring and experimenting to create something completely new.

You just have to visit a few curated lists of accounts on X to realize that this is the birth of a new artistic movement, akin to the formation of the Impressionist movement, the Dada movement, or the Surrealist movement.

The problem is that everybody else, the vast majority, is using generative AI to make a quick buck, exploiting the work of others for their own gain. And this is an unprecedented situation in the history of Art, at least at the scale we are witnessing today.

On a much smaller scale, art copyists have always existed. Unsurprisingly, some of them were moved by the desire to become original artists and possessed truly exceptional talents:

This is a 1h 15m documentary, but it’s worth watching to understand the grey areas of the art copyist profession.

This week’s Splendid Edition is titled AI is the Operating System of the Future.

In it:

- What’s AI Doing for Companies Like Mine?

- Learn what McKinsey, Carrefour, and Grupo Bimbo are doing with AI.

- A Chart to Look Smart

- New research conducted on BCG consultants shows that GPT-4 is a remarkable productivity booster.

- Prompting

- Want to know what Custom Instructions Seth Godin uses for his ChatGPT?

- What Can AI Do for Me?

- How to turn negative thoughts into positive and engaging social media updates with GPT-4.

- The Tools of the Trade

- A new open source tool shows what GPT-4 can do without constraints. It’s like watching the future of operating systems.