- Intro
  - Every human worker might become a micro-company supervising dozens of synthetic workers.
- What Caught My Attention This Week
  - The new OpenAI board member on the impact of AI on jobs.
  - Generative AI is already impacting a class of professionals: freelancers.
  - Accenture Technology CEO for EMEA says that AI could “free up” to 40% of working hours.
- The Way We Work Now
  - How AI has created jobs for minors.
- How Do You Feel?
  - The Publishing industry’s fear of losing control of the content starts to become palpable.
- Putting Lipstick on a Pig
  - Somebody instructed a custom GPT to correct horribly misspelt typing.

A couple of weeks ago, I unveiled the new Synthetic Work (Re)Search Assistant, powered by the new OpenAI custom GPTs.

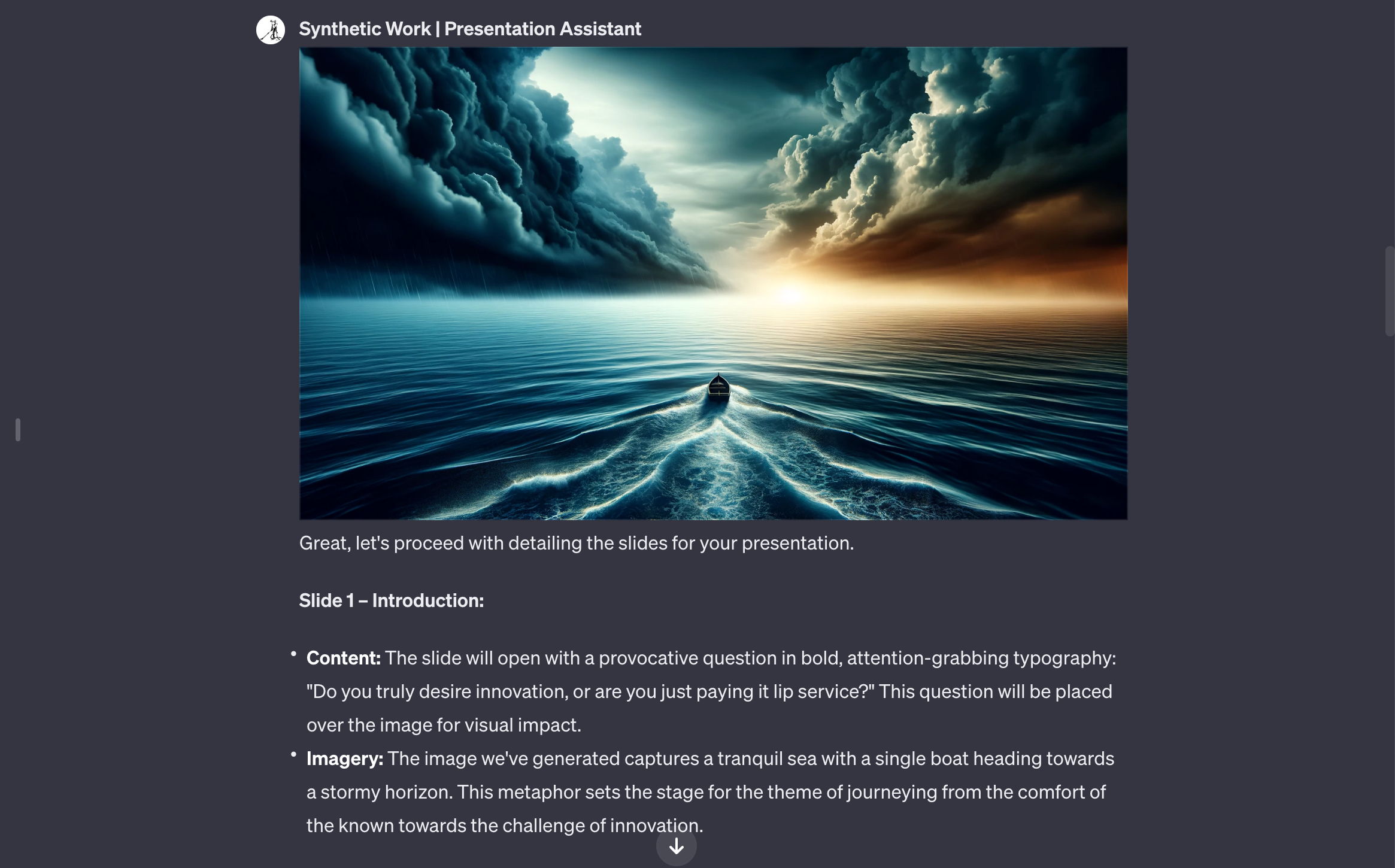

In last week’s Splendid Edition, I showed how I created a second custom GPT: Synthetic Work Presentation Assistant.

Presentation Assistant embodies all the tricks we described in Issue #14 – How to prepare what could be the best presentation of your life with GPT-4, plus some new ones.

The result is a custom GPT that can create a narrative for whatever topic you want to present, tell you what to put in each slide, and, despite my scepticism, even create every image for every single slide of your presentation. All without human intervention.

You tell it what you want to present, answer a few follow-up questions, and it does the rest.

In How to do absolutely nothing to prepare the best presentation of your career I explain why and how this approach is very different from the one taken by an ocean of AI-focused startups out there. I won’t repeat it here.

The system prompt, what OpenAI now calls Instructions, makes the whole difference. To appreciate that, you must create a number of custom GPTs yourself, realizing that influencing the behavior of these large language models at a very granular level is much harder than it seems.

As I use it myself, and as I get feedback from the members of the Synthetic Work community, I’ll keep tweaking the system prompt to make it more effective.

Just like for the Search Assistant, there’s a lot of room for this GPT to improve. Not only are custom GPTs still in beta, but they also cannot yet count on the new GPT-4-Turbo model with its 128K-token context window. They cannot use the new GPT-4V model to “see” what you show them. They cannot talk to you (less critical, but still a nice UX improvement).

Plus, there are limitations in the number of files I can upload to build the Presentation Assistant Knowledge archive, which is critical to improving the quality of the answers.

Synthetic Work Presentation Assistant is a bit like having a presentation mentor, like the ones used by TED to prepare their speakers for their famous talks. Just synthetic. And I can’t imagine how much better this tool will become over time.

While the Search Assistant remains available to all Synthetic Work members, Presentation Assistant is available only to paying members with a Sage subscription or higher.

Now.

I know that, by now, you have the impression that I spent the intro promoting the new tool and enticing you to buy a subscription, but no.

I care far more about something completely different, and this is the important message.

The synthetic assistant I created months ago to design the covers of Synthetic Work Breaking News, the new Search Assistant, the new Presentation Assistant, and many others that will come next are meant to give you context, and a warning.

The context is for stories like the ones you’ll read in this week’s issue, below.

When you read and watch those stories, my hope is that you always keep in mind that those are not just empty words. Somewhere, in London, there’s a guy who is actively working on AI to create autonomous, synthetic workers that can do the jobs of a human employee. And month after month, that guy improves those synthetic workers so that he won’t miss what we call the “unique human touch”.

I strongly believe that seeing is believing. So I’m showing you what might be coming.

And now the warning.

Like me, thousands of others are working to create autonomous, synthetic workers. These workers will do much more than just create pretty pictures of the breaking news of the day, or help you find the information you need in the archives of a newsletter, or help you prepare a presentation.

This week, for example, Workday announced a new LLM that will “help” managers write performance reviews for their direct reports.

All of these synthetic workers will make our lives so much easier, with just a little supervision.

Things will get better.

Progressively, as these synthetic workers get more accurate and more capable, we’ll move from supervising one at a time to supervising a dozen at a time within the same unit of time.

Things will get much better.

Progressively, as these synthetic workers get more accurate and more capable, we’ll move from supervising a dozen at a time to supervising a hundred or more within the same unit of time.

Each corporate employee or independent worker will become a micro-company, managing an entire hierarchy of synthetic workers.

Eventually, the underlying AI model that powers this horde of synthetic workers might get so good that they won’t need supervision anymore. They will simply talk to each other, as I demonstrated with today’s technology in Issue #31 – The AI Council.

That’s when things might get much worse.

Not because it’s inherently bad to have an army of synthetic workers doing all the work that we don’t like to do. But because we don’t have a plan, and we are not even discussing a plan, to deal with millions of humans that don’t have much to work on anymore, and don’t have much to supervise anymore.

Keep this possibility in mind when you read the stories below. Ignoring it won’t make it go away.

Alessandro

One of the outcomes of this IT version of House of Cards is that OpenAI has a new board. Completely white, completely privileged, completely American. By no means representing the diversity of the world we live in. In other words, perfect to supervise the creation of an artificial general intelligence that supposedly will benefit the entire planet.

One of the new members of the OpenAI board is Larry Summers, former US Treasury Secretary.

This is what Mr Summers had to say about the impact of AI on jobs just four months ago:

Keep the context of the intro in mind.

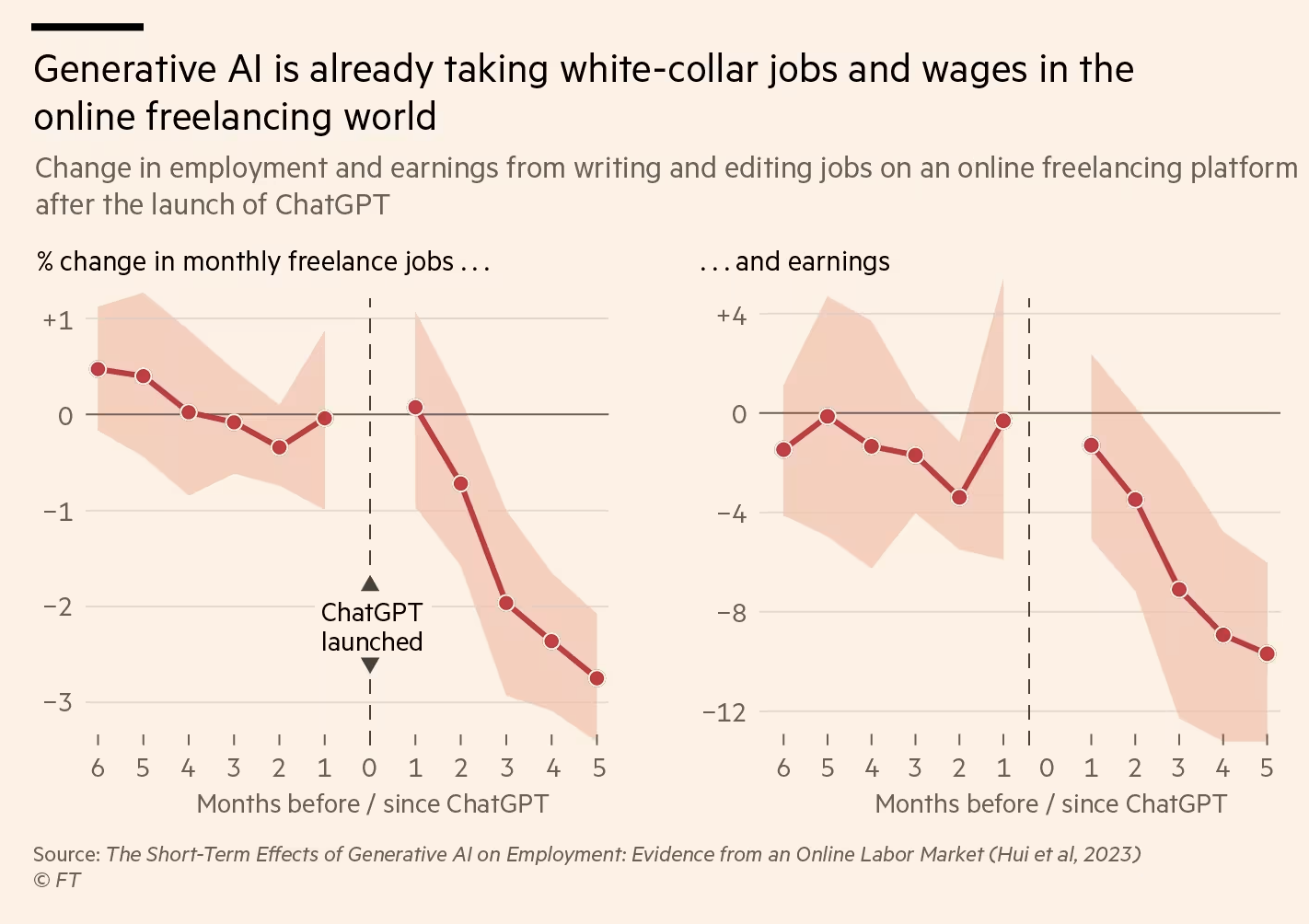

People are starting to realize that generative AI is already impacting a class of professionals: freelancers.

John Burn-Murdoch, reporting for the Financial Times:

OpenAI, the company behind ChatGPT, estimates that the jobs most at risk from the new wave of AI are those with the highest wages, and that someone in an occupation that pays a six-figure salary is about three times as exposed as someone making $30,000.

…

In an ingenious study published this summer, US researchers showed that within a few months of the launch of ChatGPT, copywriters and graphic designers on major online freelancing platforms saw a significant drop in the number of jobs they got, and even steeper declines in earnings. This suggested not only that generative AI was taking their work, but also that it devalues the work they do still carry out.

…

Most strikingly, the study found that freelancers who previously had the highest earnings and completed the most jobs were no less likely to see their employment and earnings decline than other workers. If anything, they had worse outcomes. In other words, being more skilled was no shield against loss of work or earnings.

If you are curious about reading the whole study, which was published in August: The Short-Term Effects of Generative Artificial Intelligence on Employment: Evidence from an Online Labor Market

During the OpenAI drama, a seemingly infinite number of “AI guys” joked on social media that it would be ironic if the first job taken by AI were Altman’s CEO position.

AI is already taking jobs, as Synthetic Work has documented over the last nine months. It’s just that most people are not paying attention.

Keep the context of the intro in mind.

Accenture Technology CEO for EMEA says that AI could “free up” to 40% of working hours.

Irina Anghel, reporting for Bloomberg:

Accenture Plc’s European technology lead said generative AI could eventually “free up” about 40% of working hours across industries, allowing workers to focus on other tasks.

…

Speaking at a Workday conference in Barcelona, Accenture’s Jan Willem Van Den Bremen said the rise of the technology has prompted companies to rethink which tasks they want staff to perform. For example, Van Den Bremen said computer programmers could shift to validating programs developed by AI, rather than writing the programs themselves.

Keep the context of the intro in mind.

This is the material that will be greatly expanded in the Splendid Edition of the newsletter.

Let’s talk about the upside of AI impacting jobs. Let’s talk about how minors finally found an income opportunity.

Niamh Rowe, reporting for Wired:

Like most kids his age, 15-year-old Hassan spent a lot of time online. Before the pandemic, he liked playing football with local kids in his hometown of Burewala in the Punjab region of Pakistan. But Covid lockdowns made him something of a recluse, attached to his mobile phone. “I just got out of my room when I had to eat something,” says Hassan, now 18, who asked to be identified under a pseudonym because he was afraid of legal action. But unlike most teenagers, he wasn’t scrolling TikTok or gaming. From his childhood bedroom, the high schooler was working in the global artificial intelligence supply chain, uploading and labeling data to train algorithms for some of the world’s largest AI companies.

…

The raw data used to train machine-learning algorithms is first labeled by humans, and human verification is also needed to evaluate their accuracy. This data-labeling ranges from the simple—identifying images of street lamps, say, or comparing similar ecommerce products—to the deeply complex, such as content moderation, where workers classify harmful content within data scraped from all corners of the internet. These tasks are often outsourced to gig workers, via online crowdsourcing platforms such as Toloka, which was where Hassan started his career.

…

A friend put him on to the site, which promised work anytime, from anywhere. He found that an hour’s labor would earn him around $1 to $2, he says, more than the national minimum wage, which was about $0.26 at the time.

…

At least some of those human workers are children. Platforms require that workers be over 18, but Hassan simply entered a relative’s details and used a corresponding payment method to bypass the checks—and he wasn’t alone in doing so. WIRED spoke to three other workers in Pakistan and Kenya who said they had also joined platforms as minors, and found evidence that the practice is widespread.

…

“When I was still in secondary school, so many teens discussed online jobs and how they joined using their parents’ ID,” says one worker who joined Appen at 16 in Kenya, who asked to remain anonymous. After school, he and his friends would log on to complete annotation tasks late into the night, often for eight hours or more.

…

These workers are predominantly based in East Africa, Venezuela, Pakistan, India, and the Philippines—though there are even workers in refugee camps, who label, evaluate, and generate data.

…

Sometimes, workers are asked to upload audio, images, and videos, which contribute to the data sets used to train AI. Workers typically don’t know exactly how their submissions will be processed, but these can be pretty personal: On Clickworker’s worker jobs tab, one task states: “Show us you baby/child! Help to teach AI by taking 5 photos of your baby/child!” for €2 ($2.15). The next says: “Let your minor (aged 13-17) take part in an interesting selfie project!”

…

Some tasks involve content moderation—helping AI distinguish between innocent content and that which contains violence, hate speech, or adult imagery. Hassan shared screen recordings of tasks available the day he spoke with WIRED. One UHRS task asked him to identify “fuck,” “c**t,” “dick,” and “bitch” from a body of text. For Toloka, he was shown pages upon pages of partially naked bodies, including sexualized images, lingerie ads, an exposed sculpture, and even a nude body from a Renaissance-style painting. The task? Decipher the adult from the benign, to help the algorithm distinguish between salacious and permissible torsos.

…

Bypassing age checks can be pretty simple. The most lenient platforms, like Clickworker and Toloka, simply ask workers to state they are over 18; the most secure, such as Remotasks, employ face recognition technology to match workers to their photo ID. But even that is fallible, says Posada, citing one worker who says he simply held the phone to his grandmother’s face to pass the checks. The sharing of a single account within family units is another way minors access the work, says Posada. He found that in some Venezuelan homes, when parents cook or run errands, children log on to complete tasks. He says that one family of six he met, with children as young as 13, all claimed to share one account. They ran their home like a factory, Posada says, so that two family members were at the computers working on data labeling at any given point. “Their backs would hurt because they have been sitting for so long. So they would take a break, and then the kids would fill in,” he says.

…

“Stable job for everyone. Everywhere,” one service, Kolotibablo, states on its website. The company has a promotional website dedicated to showcasing its worker testimonials, which includes images of young children from across the world. In one, a smiling Indonesian boy shows his 11th birthday cake to the camera. “I am very happy to be able to increase my savings for the future,” writes another, no older than 7 or 8. A 14-year-old girl in a long Hello Kitty dress shares a photo of her workstation: a laptop on a pink, Barbie-themed desk.

While we wait for the utopia of a world where nobody has to walk thanks to cars, everybody might be asked to become a car mechanic. At least, as long as the cars don’t start fixing themselves.

And it’s never too early to become a car mechanic.

For any new technology to be successfully adopted in a work environment or by society, people must feel good about it (before, during, and after its use). No business rollout plan will ever be successful before taking this into account.

Synthetic Work has tracked the adoption of AI and its impact on jobs in the Publishing industry since Issue #1. The fear of losing control of the content is starting to become palpable.

Daniel Thomas, writing for the Financial Times:

The chief executive of Bloomsbury Publishing has warned of the threat of artificial intelligence to the publishing industry, saying tech groups are already using the work of authors to train up generative AI programmes.

Nigel Newton, who signed Harry Potter author JK Rowling to Bloomsbury in the 1990s, also said ministers needed to act urgently to address competition concerns between large US tech groups and the publishing industry given their increasing market dominance in selling books across the world.

The warning came as Bloomsbury reported its highest-ever first-half results on the back of the boom in fantasy novels, leading the publisher to boost its interim dividend.

…

Newton pointed to the “huge” growth in fantasy novels, with sales of books by Sarah J Maas and Samantha Shannon growing 79 per cent and 169 per cent respectively in the period and demand for Harry Potter books, 26 years after publication, remaining strong. The next Maas book, scheduled for January, has already received “staggering” pre-orders of 750,000 copies for the hardback edition, he said, underscoring the resurgence of the book-selling industry.

…

Newton raised concerns about the rise of generative AI that uses books to “learn” without permission of the author or publisher. He said copyright was not sufficiently being protected from AI. “The most important issue right now in our industry is to prevent books being trained upon by generative AI because they, in effect, steal the author’s copyrighted work,” he said. “It’s critically important that publishers and authors protect their work from being scanned without permission.”

…

He urged UK ministers to commit to the Digital Markets, Competition and Consumers Bill, which is still being considered in parliament, and warned against watering down any provisions in the face of lobbying by tech groups.

News publishing companies join the chorus, as reported by Katie Robertson for The New York Times:

The News Media Alliance, a trade group that represents more than 2,200 publishers, including The New York Times, released research on Tuesday that it said showed that developers outweigh articles over generic online content to train the technology, and that chatbots reproduce sections of some articles in their responses.

The group argued that the findings show that the A.I. companies violate copyright law.

…

In its analysis, the News Media Alliance compared public data sets believed to be used to train the most well-known large language models, which underpin A.I. chatbots like ChatGPT, with an open-source data set of generic content scraped from the web. The group found that the curated data sets used news content five to 100 times more than the generic data set. Ms. Coffey said those results showed that the people building the A.I. models valued quality content.

The report also found instances of the models directly reproducing language used in news articles, which Ms. Coffey said showed that copies of publishers’ content were retained for use by chatbots. She said that the output from the chatbots then competes with news articles.

…

The News Media Alliance has submitted the findings of the report to the U.S. Copyright Office’s study of A.I. and copyright law.

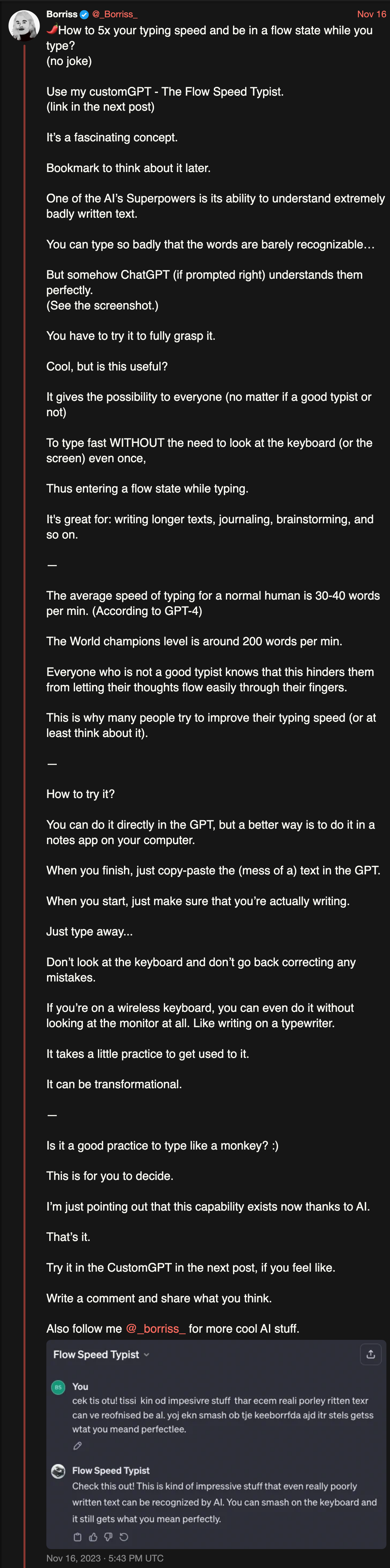

Somebody instructed a custom GPT to correct horribly misspelt typing. You can furiously type on the keyboard, at lightspeed, without caring about grammar and punctuation, and the GPT will return a perfect version of what you typed.

It’s really impressive, but you have to try it to believe it. Yet another surprising capability of large language models among the many that we’ll still have to discover in the months and years to come.

Why does it matter?

While narrowly focused, this is an excellent example of how a synthetic worker can lower the barrier to entry for a huge portion of the world population that didn’t have the luxury of higher education.

A human worker, even one with limited knowledge and education, but an idea that comes from a real-world, unaddressed need, might be able to count on his/her micro-company of synthetic workers to do things right, to be coached on what highly educated people do in his/her situation, and to react better to events he/she has never been prepared to face.

And all of this means that not only will human workers increasingly have less to do thanks to their micro-company of synthetic workers, but they will also have to compete with a much larger number of people who can do the same increasingly less demanding job.

Keep the context of the intro in mind.

This week’s Splendid Edition is titled The Balance Scale.

In it:

- Intro
  - How to look at what happened with OpenAI, and what questions to answer next if you lead a company.
- What’s AI Doing for Companies Like Mine?
  - Learn what Koch Engineered Solutions, Ednovate, and the Israel Defense Forces (IDF) are doing with AI.
- A Chart to Look Smart
  - OpenAI GPT-4-Turbo vs Anthropic Claude 2.1. Which one is more reliable when analyzing long documents?