- Intro

- Welcome to the Post-Real Era

- What Caught My Attention This Week

- US Supreme Court Chief Justice devotes his 2023 Year-End Report on the Federal Judiciary to artificial intelligence to ponder if AI will replace judges.

- Reddit CEO reveals that his company is experimenting AI-powered moderation.

- Deepdub, a startup that uses AI to clone voice actors, launches a royalty program.

- The Way We Work Now

- The Prado museum used AI to verify the authenticity of a painting attributed to Raphael.

- How Do You Feel?

- Human supremacists will be upset in discovering that AI models can, under the right conditions, be very creative.

For centuries, we associated “news” with “something that happened”. But it won’t be like that going forward.

The image below was generated with Midjourney v6 (which is still in alpha). Imagine it supporting a story that you read in the news. A story completely fabricated to drive engagement (and ad revenue).

An image like this can be generated in seconds. The news story accompanying it can be generated in seconds. A good storyteller could create, in seconds, more convincing and engaging fabricated news than real news. A bad storyteller could take very old and engaging real news and, in seconds, fabricate a modern version of that news.

As long as the news business is supported by an advertising business model, there will be no incentive to spend 10x, 100x, 1000x to report real news, when you can do this at an industrial scale, effortlessly.

Most people read the news to be entertained, not to be informed. Their attention gravitates around stories, not facts or statistics. Truth is marginally important as long as the news doesn’t directly, urgently, impact them.

This is why fake stories and misinterpreted (real) news stay with us for decades and are often impossible to eradicate (for example: we use only 10% of our brain).

So, if the fabricated news is more interesting to read, who cares to find out if it’s fabricated?

More importantly, if the boundary between fabricated and real news blurs, reality starts to become unstable*, and trust in anything erodes. And if we don’t trust anything, we don’t act on anything. We just passively watch and enjoy data as it’s served to us, suspending judgment on its veridicity.

Even more importantly, if we don’t trust anything, any real news somebody doesn’t like can be countered by a hundred fabricated news stories that suggest the opposite. Instantaneously, at a planetary scale, marrying generative AI with automation with tools like my AP Workflow for ComfyUI. Effectively neutering any opposition to anything.

Did you read the newspaper? What happened today?

Everything. Nothing. I don’t know anymore.

It’s already happening:

What has any of this to do with the impact of AI on jobs?

Two things:

One. Going forward, the jobs today we call news writer, news editor, reporter, journalist, etc. might mean something completely different. We will not be informed when that happens. Once we realise it, more people than ever might be discouraged from pursuing a career in journalism, creating a vicious circle were nobody is left to report news but AIs.

Two. The dynamic we described so far is not just about the news business. In any ad-supported industry, there’s an incentive to maximize engagement at all costs, and generative AI is the ultimate tool to do that.

If you are in one of those industries, it’s worth starting to think about the sustainability of that business model in the long-term.

Alessandro

*Hence the name of the R&D company I founded.

US Chief Justice John G. Roberts Jr. devotes his 2023 Year-End Report on the Federal Judiciary to artificial intelligence to ponder if AI will replace judges.

Every year, I use the Year-End Report to speak to a major issue relevant to the whole federal court system. As 2023 draws to a close with breathless predictions about the future of Artificial Intelligence, some may wonder whether judges are about to become obsolete. I am sure we are not—but equally confident that technological changes will continue to transform our work.

…

Law professors report with both awe and angst that AI apparently can earn Bs on law school assignments and even pass the bar exam. Legal research may soon be unimaginable without it. AI obviously has great potential to dramatically increase access to key information for lawyers and non-lawyers alike. But just as obviously it risks invading privacy interests and dehumanizing the law.

…

Proponents of AI tout its potential to increase access to justice, particularly for litigants with limited resources. Our court system has a monopoly on many forms of relief. If you want a discharge in bankruptcy, for example, you must see a federal judge. For those who cannot afford a lawyer, AI can help. It drives new, highly accessible tools that provide answers to basic questions, including where to find templates and court forms, how to fill them out, and where to bring them for presentation to the judge—all without leaving home. These tools have the welcome potential to smooth out any mismatch between available resources and urgent needs in our court system.

…

Some legal scholars have raised concerns about whether entering confidential information into an AI tool might compromise later attempts to invoke legal privileges. In criminal cases, the use of AI in assessing flight risk, recidivism, and other largely discretionary decisions that involve predictions has generated concerns about due process, reliability, and potential bias. At least at present, studies show a persistent public perception of a “human-AI fairness gap,” reflecting the view that human adjudications, for all of their flaws, are fairer than whatever the machine spits out.

…

Many professional tennis tournaments, including the US Open, have replaced line judges with optical technology to determine whether 130 mile per hour serves are in or out. These decisions involve precision to the millimeter. And there is no discretion; the ball either did or did not hit the line. By contrast, legal determinations often involve gray areas that still require application of human judgment.

…

Machines cannot fully replace key actors in court. Judges, for example, measure the sincerity of a defendant’s allocution at sentencing. Nuance matters: Much can turn on a shaking hand, a quivering voice, a change of inflection, a bead of sweat, a moment’s hesitation, a fleeting break in eye contact. And most people still trust humans more than machines to perceive and draw the right inferences from these clues.

…

Appellate judges, too, perform quintessentially human functions. Many appellate decisions turn on whether a lower court has abused its discretion, a standard that by its nature involves fact-specific gray areas. Others focus on open questions about how the law should develop in new areas. AI is based largely on existing information, which can inform but not make such decisions.

…

I predict that human judges will be around for a while. But with equal confidence I predict that judicial work—particularly at the trial level—will be significantly affected by AI. Those changes will involve not only how judges go about doing their job, but also how they understand the role that AI plays in the cases that come before them.

There’s no computer vision algorithm that cannot recognize a bead of sweat or a fleeting break in eye contact and there’s no multi-modal large language model that cannot put that visual evidence in context and draw a conclusion from it, if trained properly.

Even if tomorrow the AI community will solve the hallucination problem, our main hurdle would remain: most human jobs depend on an implicit set of inference rules that are not documented anywhere. Those rules are (sometimes) inferred during training and with field experience. That’s why certain jobs are so hard to learn and take a lifetime to master.

But as the technology continues to mature, the economic incentive to assemble training datasets to codify those rules is becoming irresistible.

The question is not if AI can replace human jobs today. The question is if AI will replace human jobs tomorrow given that now we know what we need to make it happen.

Reddit CEO reveals that his company is experimenting AI-powered moderation.

Harry McCracken, reporting for Fast Company:

Over 50,000 moderators preside over subreddits visited by 70 million daily active users (“Redditors”). The 16 billion posts and comments they’ve created—mostly without profit as a motive—make up one of the internet’s most essential troves of unvarnished, real-world knowledge.

…

“There’s a lot of hype around [LLMs], but they are exceptional at processing words and language,” he says. “Reddit has a lot of words and language, and so there are a couple of areas where I think LLMs can meaningfully improve our products.”Rather than involving the most obvious AI functionality, like a Reddit chatbot, the examples he provides relate to moderation of problem content. For instance, the latitude that individual moderators have to govern their communities means that they can set rules that Huffman describes as “sometimes strict and sometimes esoteric.” Newbies may run afoul of them by accident and have their posts yanked just as they’re trying to join the conversation.

In response, Reddit is currently prototyping an AI-powered feature. called “post guidance.” It’ll flag rule-violating material before it’s ever published: “The new user gets feedback, and the mod doesn’t have to deal with it,” says Huffman. He adds that Reddit will also use AI to crack down on willful bad behavior, such as bullying and hate speech, and that he expects progress on that front in 2024.

Moderation is one of those jobs that most people would prefer to see displaced by AI. It’s mostly unpaid on Reddit, and the ones who volunteer to do it have the wrong motivations (e.g., power-seekers, narrative manipulators, etc.).

Elsewhere, it’s paid a misery. Facebook enlists contractors to moderate content on its platform at minimum wage in exchange for the opportunity to develop PTSD.

Yet, moderation is critical to guarantee a civilized online interaction. If Reddit or Facebook are successful in automating even just a fraction of the moderation job, many other organizations will pay attention and follow suit.

As usual, I’m much more concerned about a scenario where the AI moderator is a blackbox, blindly trusted even by their creators, that operates at a planetary scale under the influence of involuntary and maliciously-injected biases. For more on this topic, read the intro of Issue #41 – I simply asked my synthetic scientist to discover time travel.

Deepdub, a startup that uses AI to clone voice actors, launches a royalty program.

Carl Franzen, reporting for VentureBeat:

Deepdub offers artificial intelligence (AI) tools for dubbing voices in videos, audio tracks, games and other media. It allows a speaker to record a vocal track in their native language, and then, with the power of AI, have their words translated into a multitude of other languages and dialects while preserving the sound of their unique, original voice.

…

This week, the company debuted a new offering: a royalty program, which allows vocal artists to record their voice, turn it into the source of future AI-generated vocal tracks, and receive payment every time their AI-cloned voice is used in a new production.

…

Theoretically, any voice artist of any experience level try their hand at recording their voice for Deepdub’s royalty program, but right now, the company is controlling participation through a public intake form on its website.The form asks users to provide basic location and personally identifying information, and their work status — as a freelancer or working within an organization. It also asks if the user is a “recognized voice artist,” how many years of experience they have, and if they have an IMDB profile page.

…

The program is open to professional voice artists, who are required to submit a sample of their voice. Once approved, they are added to Deepdub’s voice marketplace and their vocal profile becomes available for use in audio-visual productions.

…

The startup did not provide information on precisely how much money vocal artists would receive every time their voice was used going forward in an AI-produced file.

…

One company that does seek to offer royalties and/or payment for usage of an AI version of artists is Metaphysic, the company that sprang from a captivating demo of deepfake technology that went viral on TikTok in the form of parody videos of actor Tom Cruise.However, Metaphyic PRO, the UK startup’s performance management tier, is designed to help artists 3D scan their full bodies and monetize those, not just their vocal stylings.

While this initiative is commendable, the reality is that professional voices will most certainly become a commodity as soon as new text-to-speech AI models can accurately and reliably reproduce emotions. We are very close now.

After that point, the only synthetic voices that market participants will be willing to buy for a non-insignificant amount will be the ones of celebrities.

The value of those voices is not proportional to the quality of the voice itself but to the size of the fanbase of the celebrity.

In a world where everything about us can be synthetized, the only thing that makes us valuable is the skill to market ourselves, the skill to be wanted.

This is the material that will be greatly expanded in the Splendid Edition of the newsletter.

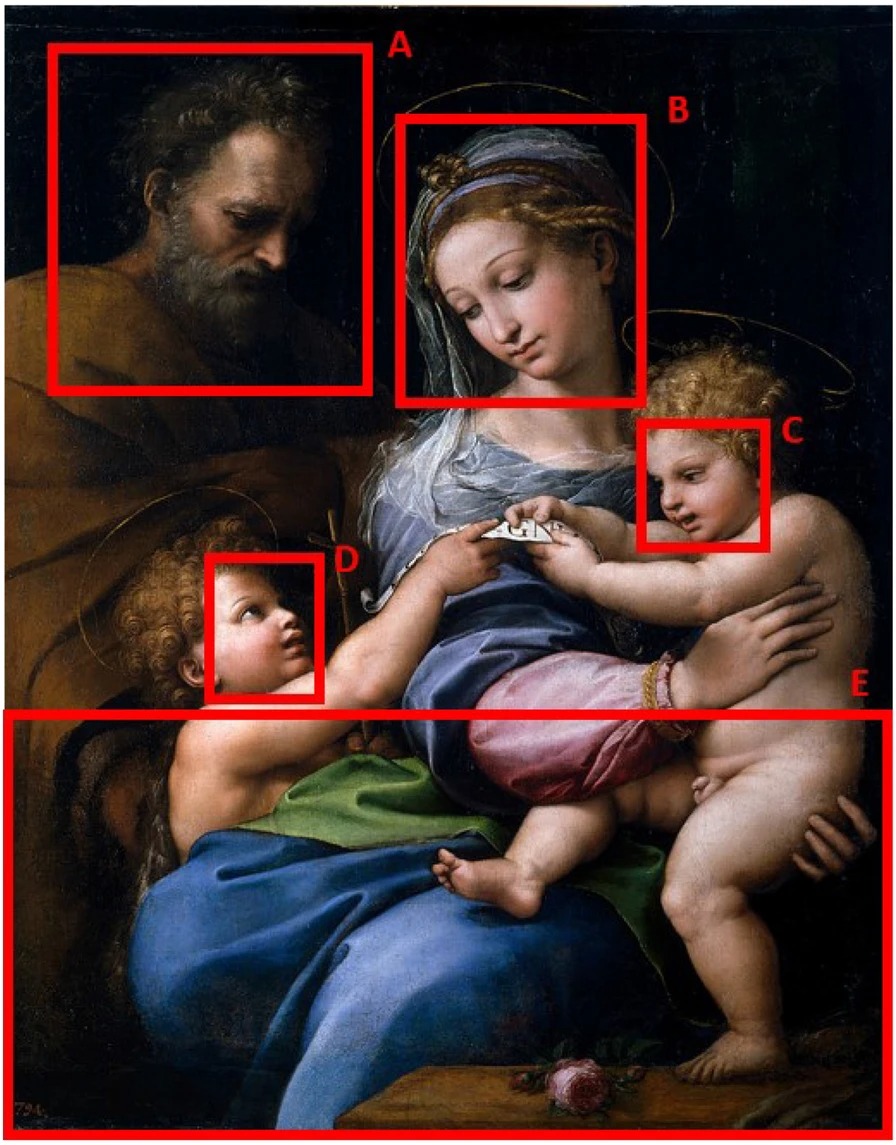

The Prado museum used AI to verify the authenticity of a painting attributed to Raphael.

Mark Brown, reporting for The Guardian:

The Madonna della Rosa (Madonna of the Rose) has intrigued art connoisseurs and experts for centuries.

The painting depicts Mary, Joseph and the baby Jesus, with the infant John the Baptist. It is something of a showstopper and was regarded as being all the work of Raphael until doubts were raised in the 19th century.

Art historians argued it should also be attributed to his workshop. Some said the Joseph figure looked like an afterthought and could not possibly be by the hand of Raphael. Others thought the lower section, with the rose, was painted by someone else. In Spain, the painting has always been attributed to Raphael.

The painting has now been tested by AI developed by Hassan Ugail, professor of visual computing at the University of Bradford.

Its conclusion is that most of the painting is by Raphael but the face of Joseph is by a different hand. The lower portion is “most likely” by Raphael.

Ugail said the algorithm was developed after it looked in extraordinary detail at 49 uncontested works by Raphael and can, as a result, recognise authentic works by the artist with 98% accuracy.

…

“The computer is looking in very great detail at a painting,” said Ugail. “Not just the face, it is looking at all its parts and is learning about colour palette, the hues, the tonal values and the brushstrokes. It understands the painting in an almost microscopic way, it is learning all the key characteristics of Raphael’s hand.”In the case of the Madonna, the initial testing showed that it was 60% by Raphael. The computer then looked at the painting by section and concluded that it was the face of Joseph that was not by Raphael.

The full analysis is described in this research paper: Deep transfer learning for visual analysis and attribution of paintings by Raphael.

Two considerations:

One. AI continues to make inroads in the art market. This is the second instance we document in Synthetic Work where artificial intelligence is being trusted more than art historians and critics for art authentication.

The previous time, the same professor was called to verify the attribution of the de Brecy Tondo to Raphael. We discussed this in Issue #31 – The Sky Is the Limit.

If the trust in this technology continues to grow, at some point, museums and galleries will have to make a business case for preferring humans to algorithms for art authentication.

Two. If the computer is “looking at all its parts and is learning about colour palette, the hues, the tonal values and the brushstrokes. It understands the painting in an almost microscopic way, it is learning all the key characteristics of Raphael’s hand.” then there’s no question that it also can understand “a shaking hand, a quivering voice, a change of inflection, a bead of sweat, a moment’s hesitation, a fleeting break in eye contact” mentioned by the US Chief Justice in the first story of this week’s newsletter.

At some point, there will be no question about the technical prowess of these AI systems. It will only become a matter of subjectively trusting them for a job but not for another.

For any new technology to be successfully adopted in a work environment or by society, people must feel good about it (before, during, and after its use). No business rollout plan will ever be successful before taking this into account.

One of the strongest, most emotional arguments against the use of AI for a wide range of human jobs is that no artificial intelligence model is capable of creativity like people are.

Many of us feel outraged when somebody (usually me) suggests that AI is as capable of creativity as a professional artist in any field.

Unfortunately for them, new evidence suggests exactly that: AI models can, under the right conditions, be very creative.

The research titled Evaluating Large Language Model Creativity from a Literary Perspective is authored by a lead scientist in the famed DeepMind division of Google (which recently absorbed the entirety of Google Brain) and a cultural historian.

In their research, the two academics decided to simulate the production of a novel-length work of speculative fiction involving time travel, and played with the so-called temperature of GPT-4 to surface the most creative outputs the model is capable of.

The temperature is a parameter that controls the randomness of the model. The higher the temperature, the more random the output. The lower the temperature, the more predictable the output.

This aligns well with the notion of creativity: we humans deem creative something unexpected, unpredictable, and surprising.

What did they find?

We investigate three techniques for generating fragments of text with the aid of an LLM for inclusion in Effie’s story.

- Creative Dialogue. The LLM is used within a dialogue agent. The dialogue agent takes the part of the author, and generates text, while the user takes the part of a critic or mentor. The result is a process of iterative improvement.

- Raising the Temperature. The temperature parameter of the LLM is varied. Higher values promote a more “experimental” prose style.

- Multi-Voice Generation. Dialogue produced with the first technique is turned into a transcript that alternates between the voices of the author and mentor. This transcript is used to prompt the model, which then takes on both roles, author and mentor, in effect performing self-critique.

For each technique, the fragments of text we obtain are subjected to analysis from a literary critical point of view. We found the model’s responses to be unexpectedly sophisticated. In the creative dialogue, it proposed text of increasing quality, responding appropriately and with apparent skill to the suggestions and feedback of the mentor.

Raising the temperature parameter caused it to produce neologisms that, far from being nonsensical, could be interpreted as semantically coherent and contextually relevant. In the multi-voice mode, it spontaneously introduced an entirely new character, although nowhere in the prompting was there any hint of such a possibility.

The whole paper is worth reading (it’s not too technical, don’t worry), but the section dedicated to the experiments with the temperature value is particularly noteworthy:

Another response at θ = 1.4 includes an astonishing image: Effie as “a ripened seed attempting to stretch back into the curve that it yearns”. The metaphor speaks of impossibility and futility, as well as Effie’s desperate desire to return to a place of safety and enclosure – the cupboard, or her own time. It also connects, obliquely, with the imagery of the Garden, and expulsion from Eden, generated by the initial prompt. While it is unlikely that these high-temperature completions could be used themselves as standalone texts (other than in very radical and experimental contexts), re-imagining GPT-4 as a creative partner, collaborating in a process of

writing, allows us to consider them as creative starting points and provocations.The more unpredictable content generated at this temperature setting, less confined by textual norms and conventions, could be a useful tool for prompting more creative, risky, ambitious and radical writing, from more surprising metaphors, to lexical creativity and formal innovation.

I won’t spoil the rest of the paper for you.

I’ll just close by saying that somewhere else, deep in the vastity of the Internet, another group of researchers started 2024 by formalizing a formula to measure the creativity of an AI model.

Creativity serves as a cornerstone for societal progress and innovation, but its assessment remains a complex and often subjective endeavor. With the rise of advanced generative AI models capable of tasks once reserved for human creativity, the study of AI’s creative potential becomes imperative for its responsible development and application. This paper addresses the complexities in defining and evaluating creativity by introducing a new concept called Relative Creativity.

Instead of trying to define creativity universally, we shift the focus to whether AI can match the creative abilities of a hypothetical human.

This perspective draws inspiration from the Turing Test, expanding upon it to address the challenges and subjectivities inherent in evaluating creativity. This methodological shift facilitates a statistically quantifiable evaluation of AI’s creativity, which we term Statistical Creativity. This approach allows for direct comparisons of AI’s creative abilities with those of specific human groups. Building on this foundation, we discuss the application of statistical creativity in contemporary prompt-conditioned autoregressive models.

In addition to defining and analyzing a measure of creativity, we introduce an actionable training guideline, effectively bridging the gap between theoretical quantification of creativity and practical model training. Through these multifaceted contributions, the paper establishes a cohesive, continuously evolving, and transformative framework for assessing and fostering statistical creativity in AI models.

Now. Before you counter the whole argument with the usual “large language models are just stochastic parrots mimicking the training data”, let me tell you that one of the two authors of the first research, Murray Shanahan, is a world expert in large language models and he has publicly argued against the anthropomorphization of AI models more than anybody else in the AI community.

As another world-class expert, Rob Miles, has put it:

Calling GPT4 a “glorified predictive text” is like calling a modern computer a “glorified calculator.”

This week’s Splendid Edition is titled My child is more intelligent than yours.

In it:

- What’s AI Doing for Companies Like Mine?

- Learn what Golborne Medical Centre, The Clueless, British Airways, and the Chinese Ministry of State Security are doing with AI.

- A Chart to Look Smart

- How the Financial Services industry is fine-tuning specialized LLMs for asset management and document analysis.

- The Tools of the Trade

- A free online service to compare the answers of multiple LLMs to your prompts in edge use cases.