- YouTube’s first Culture & Trends Report reveals some numbers about the interest in virtual creators among the YouTube audience.

- The Screen Actors Guild—American Federation of Television and Radio Artists (SAG-AFTRA) joins the Writers Guild of America (WGA) in an unprecedented strike focused on generative AI.

- 8,000 authors have signed a letter asking the leaders of companies including Microsoft, Meta Platforms and Alphabet to not use their work to train AI systems without permission or compensation.

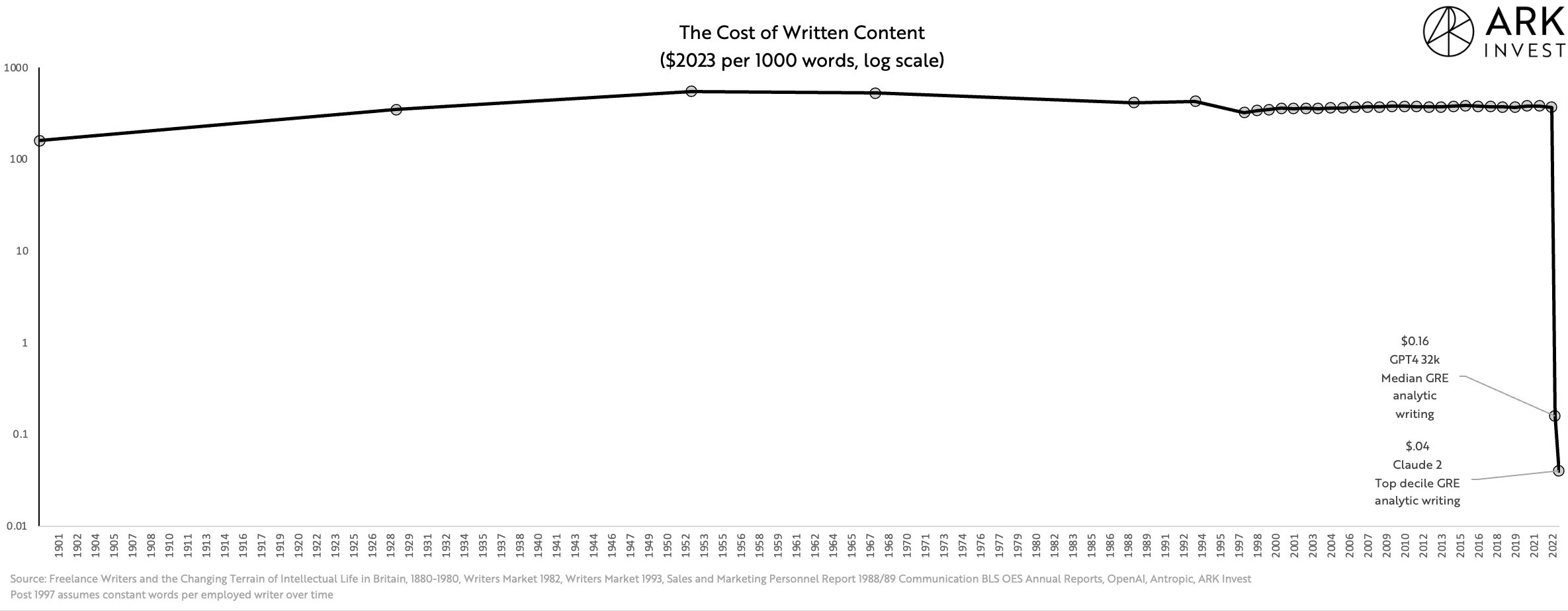

- The cost of producing well-written material has fallen 10,000-fold over the course of the past year, for the first time in almost 125 years.

- Several large news and magazine publishers are discussing the formation of a new coalition to address the impact of artificial intelligence on the industry.

- The startup Air shows how salespeople all around the world won’t have to be on the phone anymore going forward. Next step: do not show up at the office either.

P.S.: This week’s Splendid Edition is titled What AI should I wear today? In it, we’ll see what New York City’s Metropolitan Transportation Authority (MTA), Eurostar, and G/O Media are doing with AI.

In the What Can AI Do for Me? section, we’ll see how to use GPT-4 Code Interpreter to ask questions about our website performance that Google Analytics can’t answer without attending a 72-day class.

In the Prompting section, I’ll recommend which use cases are best suited to each of seven AI systems that can be used today.

Synthetic Work takes a week off. This newsletter is written by a real human, not an AI, and that human needs a little break.

Expect the next issue on August 6th.

Alessandro

The first thing that caught my attention this week is YouTube’s first Culture & Trends Report.

If you are a YouTuber or you plan to become one, there are a lot of interesting insights in the report, and a page dedicated to AI:

Virtual creators and K-pop bands being debuted by Korean companies are a build on VTubers and hint about the places that AI-driven creations may go in the future, providing an early look at viewer interests in creativity that challenges norms of authenticity.

RuiCovery, a real person with an AI-generated face, was made by Seoul-based IT start-up Dob Studio. The studio produces song and dance covers in both long-form videos and Shorts.

Two statistics in particular interest us for Synthetic Work:

- 60% of people surveyed agree they’re open to watching content from creators who use AI to generate their content.

- 52% of people surveyed say they watched a VTuber (virtual YouTuber or influencer) over the past 12 months.

This is not a small study: 25,892 online adults, aged 18-44, were surveyed in May 2023.

If people are open to watching synthetic humans delivering synthetic content on YouTube, with time, this might pave the way for a broader acceptance of the concept in other areas. The first application that comes to mind is synthetic anchors for news and sports.

We will not think about the long-term impact on the job market. From a utility standpoint, if all we want is to be entertained or informed, it doesn’t matter if the anchor is synthetic or not.

In fact, the possibility of creating synthetic anchors will probably trigger a gold rush to create the most engaging personality that resonates with this or that section of the addressable audience. Something that is much harder and more expensive to do with real humans.

Speaking of synthetic entertainers, the second thing that caught my attention this week is, of course, the unprecedented strike of the Screen Actors Guild—American Federation of Television and Radio Artists (SAG-AFTRA) that joins the Writers Guild of America (WGA).

We already covered this strike in Issue #12 – ChatGPT Sucks at Making Signs and Issue #20 – £600 and your voice is mine forever, but this fight is so critical for the future of synthetic work (at least in the entertainment industry) that we need to keep looking.

Christopher Grimes, reporting for Financial Times:

Hollywood has not seen anything like it in more than 60 years: thousands of striking actors and writers picketing together outside movie and TV studios, where production has ground to a halt.

Demetri Belardinelli, who has acted in TV shows such as Silicon Valley, was among hundreds of picketers outside Walt Disney’s Burbank studios in sweltering heat on Friday. He and 160,000 other members of the SAG-AFTRA union had voted to strike a day before, after talks with the studios collapsed.

…

The Screen Actors Guild has not gone on strike in 43 years, and it has been even longer since the actors and writers have picketed at the same time. Their last joint industrial action was in 1960, when Ronald Reagan was the head of the Screen Actors Guild.

…

Key sticking points for both the writers and actors include royalties — which have declined significantly in the streaming era — and establishing rules over the use of artificial intelligence. Writers fear being paid far less to adapt basic scripts generated by AI programmes, while actors are concerned that their digital likenesses will be used without compensation.

…

Bob Iger, Disney’s chief executive, told CNBC on Thursday that it was the “worst time in the world” for work stoppages, given the industry’s nascent recovery from the Covid-19 pandemic. “There’s a level of expectation that they have that is just not realistic.”

Of course, this is the best (and possibly, only) time in the world for human artists to attempt this fight. Tech startups are already running away with AI technologies, creating synthetic content that people start to watch and might enjoy.

More information comes from Angela Watercutter, reporting for Wired:

You know it’s bad when the cocreator of The Matrix thinks your artificial intelligence plan stinks. In June, as the Directors Guild of America was about to sign its union contract with Hollywood studios, Lilly Wachowski sent out a series of tweets explaining why she was voting no. The contract’s AI clause, which stipulates that generative AI can’t be considered a “person” or perform duties normally done by DGA members, didn’t go far enough. “We need to change the language to imply that we won’t use AI in any department, on any show we work on,” Wachowski wrote. “I strongly believe the fight we [are] in right now in our industry is a microcosm of a much larger and critical crisis.”

…

Leading up to the strike, one SAG member told Deadline that actors were beginning to see Black Mirror’s “Joan Is Awful” episode as a “documentary of the future” and another told the outlet that the streamers and studios—which include Warner Bros., Netflix, Disney, Apple, Paramount, and others—“can’t pretend we won’t be used digitally or become the source of new, cheap, AI-created content.”

While Season 6 of Black Mirror is, overall, highly disappointing, the episode “Joan Is Awful” is a must-watch:

Let’s continue the Wired article:

Will any of this stop the rise of the bots? No. It doesn’t even negate that AI could be useful in a lot of fields. But what it does do is demonstrate that people are paying attention—especially now that bold-faced names like Meryl Streep and Jennifer Lawrence are talking about artificial intelligence. On Tuesday, Deadline reported that the Alliance of Motion Picture and Television Producers, which represents the studios, was prepared for the WGA to strike for a long time, with one exec telling the publication “the end game is to allow things to drag on until union members start losing their apartments and losing their houses.” Soon, Hollywood will find out if actors are willing to go that far, too.

While all of this happens, in a self-fulfilling prophecy fashion, somebody thought it was a good idea to launch a synthetic show.

Devin Coldewey, reporting for TechCrunch:

little savvy is required to see that this may be the worst possible moment to soft-launch an AI that can “write, animate, direct, voice, edit” a whole TV show — and demonstrate it with a whole fake “South Park” episode.

The company behind it, Fable Studios, announced via tweet that it had made public a paper on “Generative TV & Showrunner Agents.” They embedded a full, fake “South Park” episode where Cartman tries to apply deepfake technology to the media industry.

The technology, it should be said, is fairly impressive: Although I wouldn’t say the episode is funny, it does have a beginning, a middle and an end, and distinct characters (including lots of fake celebrity cameos, including fake Meryl Streep).

…

Fable started in 2018 as a spinoff from Facebook’s Oculus (how times have changed since then), working on VR films — a medium that never really took off. Now it has seemingly pivoted to AI, with the stated goal of “getting to AGI — with simulated characters living real daily lives in simulations, and creators training and growing those AIs over time,” Saatchi said. Simulation is the name of the product they intend to release later this year, which uses an agent-based approach to creating and documenting events for media, inspired by Stanford’s wholesome AI town.

If you want to see the software Fable Studios used to create this episode, you just need to look here:

One month ago, Lisa Joy, one of the creators of Westworld, said she was confident her job as a producer of science fiction was safe from artificial intelligence.

Her words, as reported by Nate Lanxon and Jackie Davalos for Bloomberg:

“I’m not so much worried about being replaced, partly because AI isn’t as good at creative things yet, but also we tend to take tools and we figure out new ways to use them, and come up with things we couldn’t do before,” he said. “What I look at as a writer is how can this help me tell a better story? And am I adaptable enough to use it and come up with something that I couldn’t have come up with otherwise?”

I wonder for how long she’ll feel this way.

Actors and screenwriters are not the only creators increasingly vocal against the use of AI. The third story worth your attention this week is about the 8,000 authors who have signed a letter asking the leaders of companies including Microsoft, Meta Platforms and Alphabet to not use their work to train AI systems without permission or compensation.

Talal Ansari, reporting for The Wall Street Journal:

The letter, signed by noteworthy writers including James Patterson, Margaret Atwood and Jonathan Franzen, says the AI systems “mimic and regurgitate our language, stories, style, and ideas.” The letter was published by the Authors Guild, a professional organization for writers.

“Millions of copyrighted books, articles, essays, and poetry provide the ‘food’ for AI systems, endless meals for which there has been no bill,” the letter says. “You’re spending billions of dollars to develop AI technology. It is only fair that you compensate us for using our writings, without which AI would be banal and extremely limited.”

The letter was addressed to the CEOs of OpenAI, IBM, Stability AI and several tech companies, which run AI models and chatbots such as Bard, ChatGPT and Llama.

…

The Authors Guild letter says many of the books used to train AI systems were pulled from “notorious piracy websites.”

…

Comedian Sarah Silverman and other authors filed a lawsuit against Meta earlier this month, alleging its artificial intelligence model was trained in part on content from a “shadow library” website that illegally contains the authors’ copyrighted works. The group filed a similar lawsuit against OpenAI.

…

The Authors Guild said writers have seen a 40% decline in income over the last decade. The median income for full-time writers in 2022 was $22,330, according to a survey conducted by the organization. The letter said artificial intelligence further threatens the profession by saturating the market with AI-generated content.

The problem here is that paying for the books would change nothing. Once a book has entered the training set, AI models can extrapolate the style of the author and use it to generate any type of content (including answers to questions that have nothing to do with writing a book).

Provided that enough material is available, what generative AI really does is capture the essence of a creator. Just like a human being learns how to mock a politician or a celebrity.

So what do you pay for? It can’t be royalties for the book. Can it be royalties for personality? If so, are AI companies supposed to pay for every single personality they have captured in the training dataset? If so, how to measure that?

More importantly, this mimicking phase is just the beginning. We’ll see AI models generate completely new personalities that don’t resemble any existing creator and are more engaging than every one of them.

You won’t believe that people would fall for it, but they do. Boy, they do.

So this is a section dedicated to making me popular.

The chart of the week comes from Brett Winton, the Chief Futurist at ARK Invest, the asset management company led by famous investor Cathie Wood.

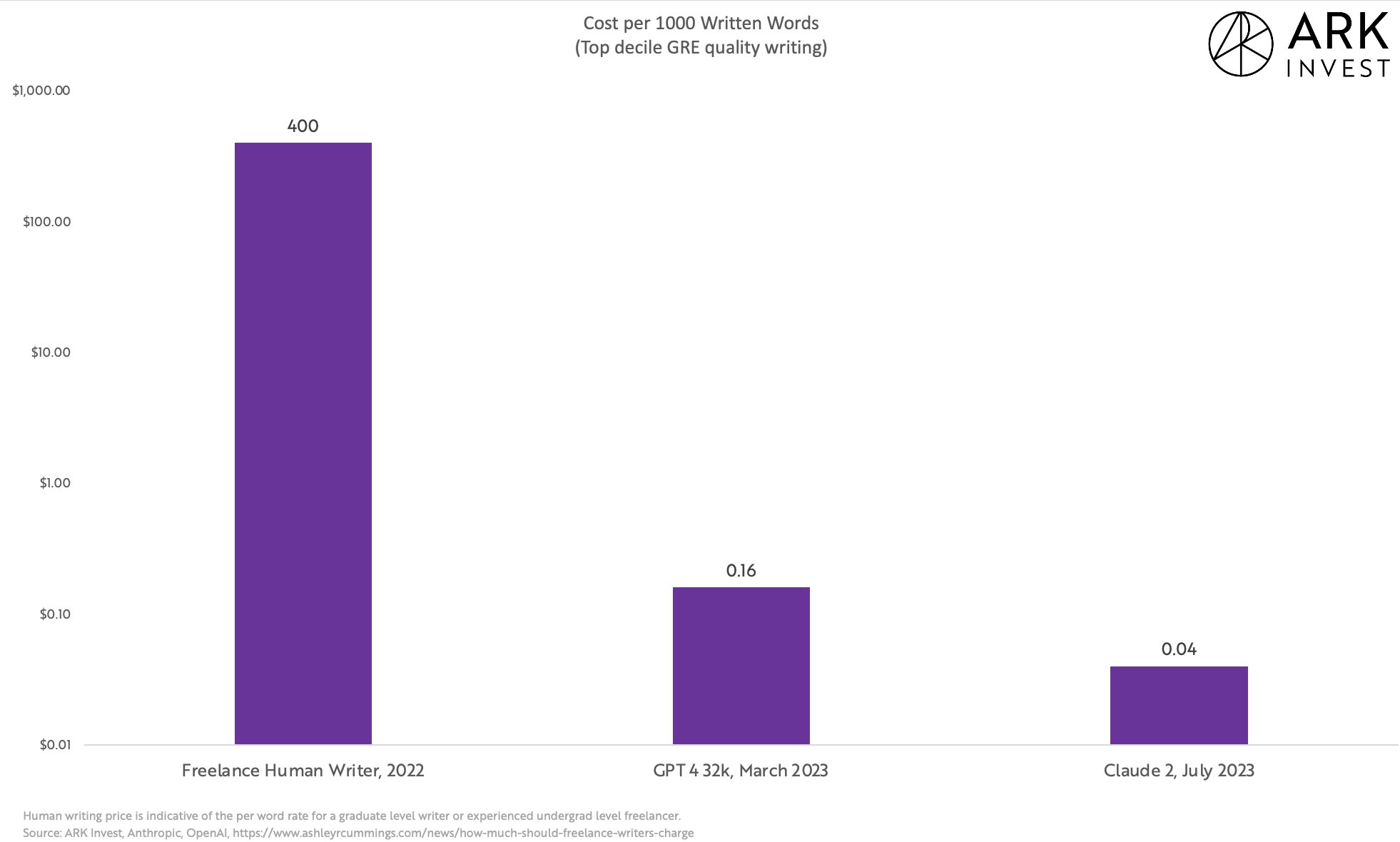

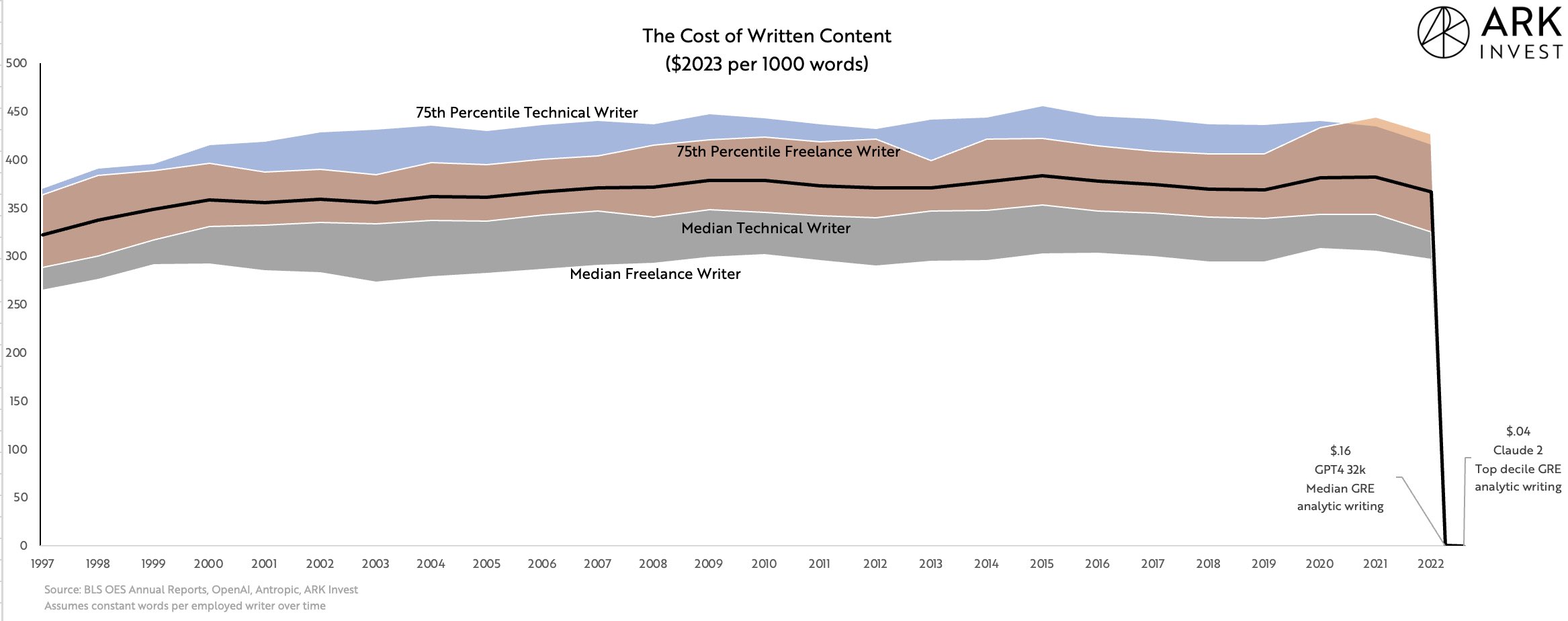

Brett reminds us that the cost of producing well-reasoned, coherent written material has fallen 10,000-fold over the course of the past year.

But it’s only when we zoom out and we see where we are now compared to the last 25 years and to the last 125 years that we start to understand the true impact of generative AI:

This is a critical point that will push employers to keep using AI-generated content no matter how many strikes humans organize.

As we said many times in the last 5 months, this is a vicious circle: if even just one of your competitors switches to AI-generated content, your chances to stay competitive from a cost standpoint are close to zero. If the market chooses cheap and decent instead of expensive and excellent, you have no choice but to follow.

On top of that, there’s the issue that the market is polluted by countless options that are very expensive and barely decent.

The trigger for this chart is the release of the AI model Claude 2, developed by Anthropic, which we discussed last week in Issue #21 – Ready to compete against yourself?

Also last week, I compared Claude 2 with GPT-4 Code Interpreter in Issue #21 – Investigating absurd hypotheses with GPT-4.

Going forward, I plan to use Claude 2 a lot more in the use cases we discuss in the Splendid Edition.

For any new technology to be successfully adopted in a work environment or by society, people must feel good about it (before, during, and after its use). No business rollout plan will ever be successful before taking this into account.

On top of book authors, there’s another group of people that doesn’t feel comfortable with AI “stealing” their content: publishers.

The problem is that both authors and publishers are simultaneously complaining about AI learning from their content and using AI to produce new content.

We document which publishers and publications have started producing AI-generated content in the Splendid Edition of Synthetic Work, and we track each and every name in the AI Adoption Tracker.

It’s a typical “It’s complicated” relationship. And like in most “It’s complicated” relationships, it’s complicated only because you can’t have it both ways and you refuse to choose.

To tell us more about this internal struggle, we have Alexandra Bruell, reporting for The Wall Street Journal:

Several large news and magazine publishers are discussing the formation of a new coalition to address the impact of artificial intelligence on the industry, according to people familiar with the matter.

The possibility of such a group has been discussed among executives and lawyers at the New York Times ; Wall Street Journal parent News Corp ; Vox Media; Condé Nast parent Advance; Politico and Insider owner Axel Springer; and Dotdash Meredith parent IAC, the people said.

A specific agenda hasn’t been decided, and some publishers haven’t yet committed to participating, the people said. It is possible a coalition may not be formed, they said.

While publishers agree that they need to take steps to protect their business from AI’s rise, priorities at different companies often vary, the people said.

…

IAC Chairman Barry Diller at a recent industry event warned of AI content scraping—or the use of text and images available online to train the AI tools—and said that publishers in some cases should “get immediately active and absolutely institute litigation.”

…

News Corp Chief Executive Robert Thomson, who has been one of the most vocal critics of the platforms, recently warned that intellectual property was under threat due to the rise of AI.

Possibly to placate the growing animosity, OpenAI has started forging partnerships with publishers. The first one is with Associated Press:

The Associated Press and OpenAI have reached an agreement to share access to select news content and technology as they examine potential use cases for generative AI in news products and services.

The arrangement sees OpenAI licensing part of AP’s text archive, while AP will leverage OpenAI’s technology and product expertise. Both organizations will benefit from each other’s established expertise in their respective industries, and believe in the responsible creation and use of these AI systems.

Will AI companies have to forge similar partnerships with every publisher on the planet?

The winner of this week’s spot in the “Putting Lipstick on a Pig” section is a company called Air. The founder writes in his Twitter bio: AI for the enhancement of humanity.

However, the company’s website teases:

Introducing the world’s first ever AI that can have full on 10-40 minute long phone calls that sound like a REAL human, with infinite memory, perfect recall, and can autonomously take actions across 5,000 plus applications. It can do the entire job of a full time agent without having to be trained, managed or motivated. It just works 24/7/365.

Not sure how the two things are compatible, but you don’t have to trust me. I highly recommend you listen carefully to this 3-minute demo:

If you have friends in sales, you might want to forward them this newsletter. They will be pleased to know that they don’t have to try to be good anymore.

Not quite our traditional The Tools of the Trade section but, given the AI community’s mad rush to release many new things every week now, it seems necessary to chart a map of the various AI systems now available on the market and when to use them.

Eventually, one system will be able to do everything, but until then, this is my recommendation:

- ChatGPT with GPT-4 (vanilla), by OpenAI: use this AI system with this specific model for most use cases. It remains the most accurate and capable on the market. Do not waste your time with the GPT-3.5-Turbo model. If that’s the only model you ever tried from OpenAI, please know that there’s an ocean of difference compared to GPT-4. When it becomes available to you, switch to the 32K tokens version of GPT-4, to have even longer and more coherent conversations.

- Claude 2 (100K tokens), by Anthropic: use this AI system to upload and analyze long documents. Be extra careful in checking the results as this model tends to hallucinate more than GPT-4. When it becomes available to you, switch to the 200K tokens version, to have even longer and more coherent conversations.

- ChatGPT with GPT-4 Code Interpreter, by OpenAI: