- Using Google Search is a horrifying experience compared to asking questions to GPT-4. OpenAI will fix it soon.

- AI-related legal cases are on the rise. There never was a better time to become a robo-lawyer.

- Critics of the FLI open letter: “You are being ridiculous. It’s just math.” So let’s hear from somebody who actually knows math.

- Goldman Sachs: “Don’t look now, but 300MM jobs need a bit of reskilling.”

- Once upon a time, people could be hired to do calculations and their job title was “Computer.”

- The demand for AI skills is rising in every industry of the economy. Thankfully, you read Synthetic Work.

- When an AI uses emojis to reply to you, it’s not cute. It’s manipulative.

- Soon, you’ll be able to lie more than usual during dates and job interviews. Happy?

P.S.: This week’s Splendid Edition of Synthetic Work is titled How Not to Do Product Reviews. It was supposed to be a review of the AI-centric products that I use on a daily basis, but things went horribly wrong: the article derailed, and I ended up talking about product strategy, competitive landscapes, and business models. Hence the title.

I hope you enjoyed reading the Free Edition newsletter so far. Unfortunately, it’s now time to give back.

No, I’m not asking you to pay. I’m asking you to reply to this email and let me know three things:

- What you like about Synthetic Work

- What you don’t like about Synthetic Work

- What you would like to read in future issues of Synthetic Work

No need to write a long, formal reply. Too long, and too much cognitive friction for you. Plus, I don’t care about formalism.

No need to think too hard about it. The first thing that comes to mind.

Skip the “Hi”. I don’t care either.

Just reply with 3 lines, one for each answer.

It’s not a big ask.

Just do it.

Swoosh.

Alessandro

This week I’m going to shock you with three things worth your attention, instead of the usual two. I know. Goosebumps, right?

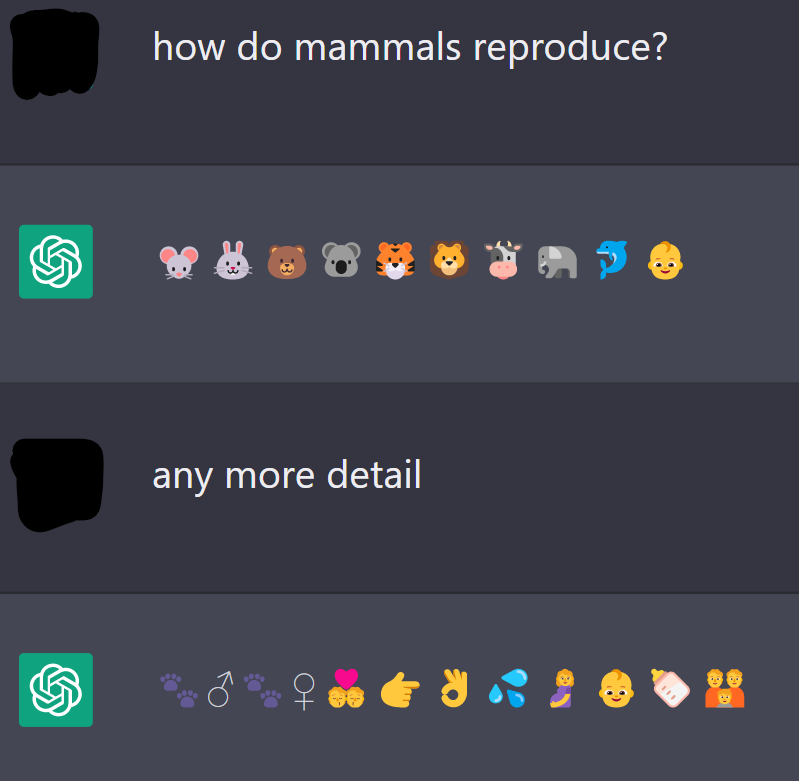

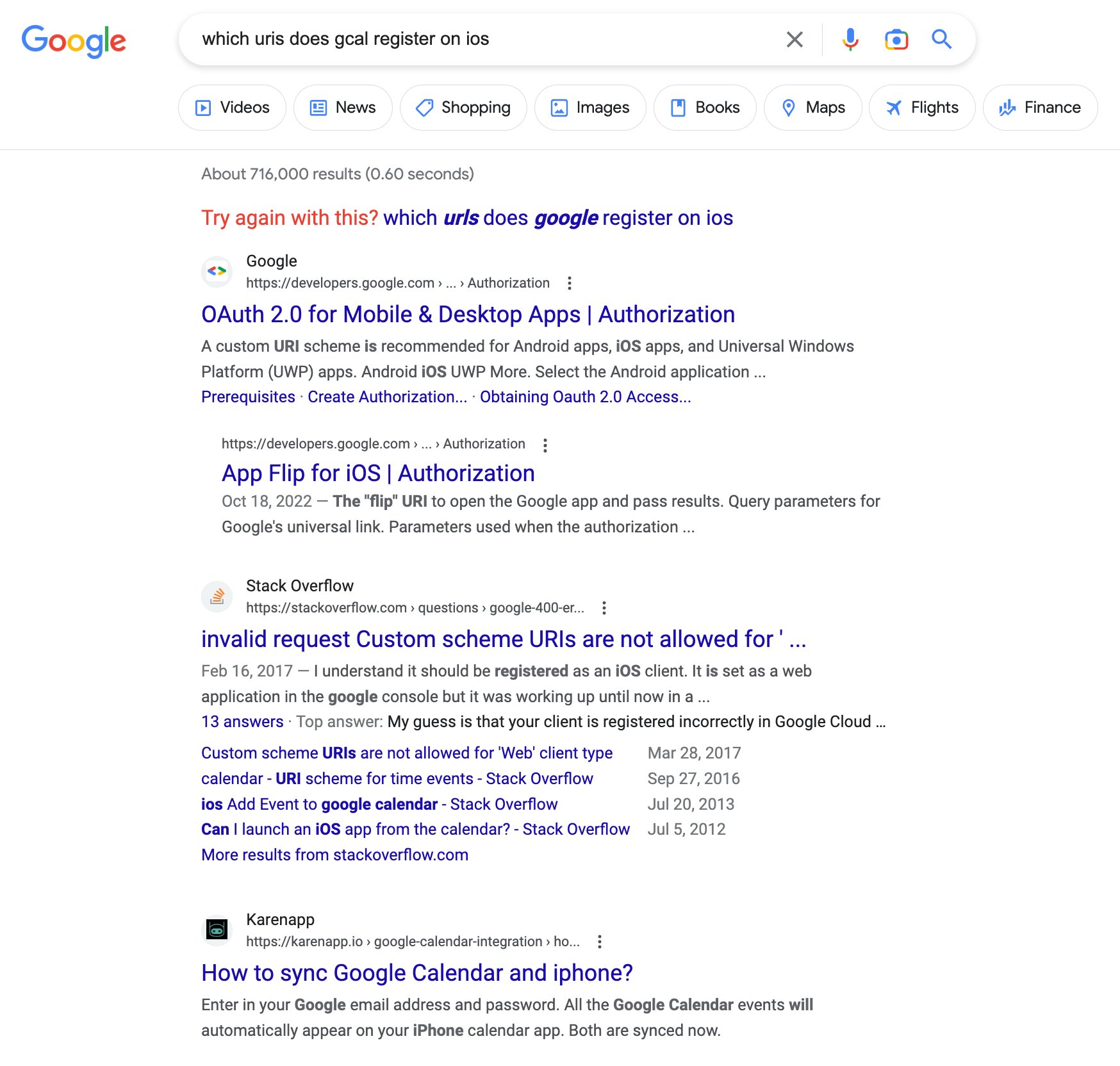

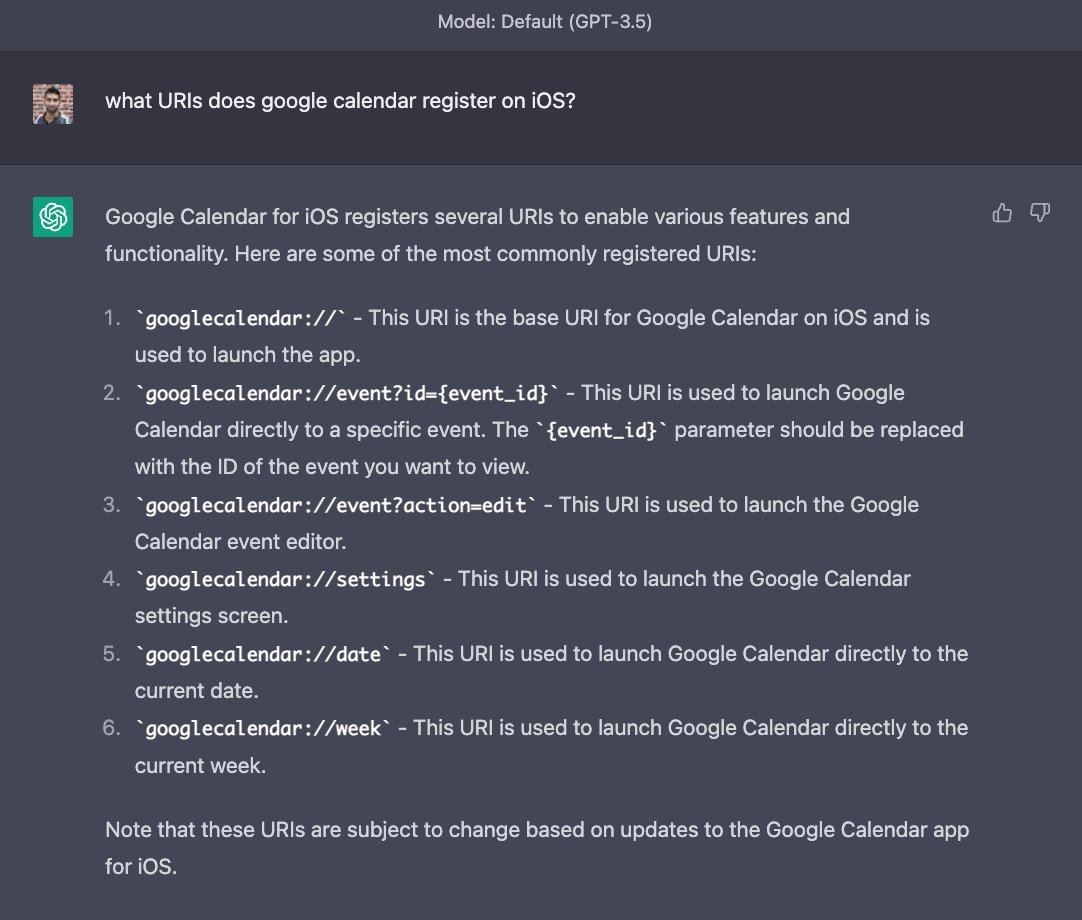

The first thing: like many, many others, Rahul Vohra compares the experience he had with Google to the experience he had with GPT-3 when he tried to find the answer to a technical question:

Now. This is not an apples-to-apples comparison. Google is a public company under immense pressure to monetize while OpenAI is a startup that just got an infusion of $1B in cash from Microsoft to keep developing its models.

Anyone convinced that OpenAI will not start injecting ads into its GPT chats is fooling themselves. You just have to see what Microsoft has started doing with the Bing implementation of GPT-4. We discussed this last week in the Free Edition of Synthetic Work Issue #6 (Putting Lipstick on a Pig (at a Wedding)).

That said, it’s undeniable that the experience is completely different and, for the average non-technical user, having a straight answer to a question is a significantly better outcome than exploring an ocean of links.

Or it would be if large language models provided authoritative and correct answers. But they don’t. They lie with a straight face, and you always end up going back to Google to double-check whether the answer is accurate. So, double the work.

Should OpenAI succeed in reducing the so-called “hallucinations” of its AI models to a negligible level, Google might be forced to change the way search has been done for the last twenty years.

–

OK. The second thing to pay attention to: The Stanford Institute for Human-Centered Artificial Intelligence (HAI) just released its annual AI Index report.

Just like in large corporations, they clearly believe that the longer the title the more important the person.

Anyway.

This new 2023 report is 386 pages long and full of charts. I’m glad to report that I was aware of almost all the data. It means that I am paying attention and that you, dear reader, won’t miss a thing if you keep reading Synthetic Work (disclaimer: not a threat).

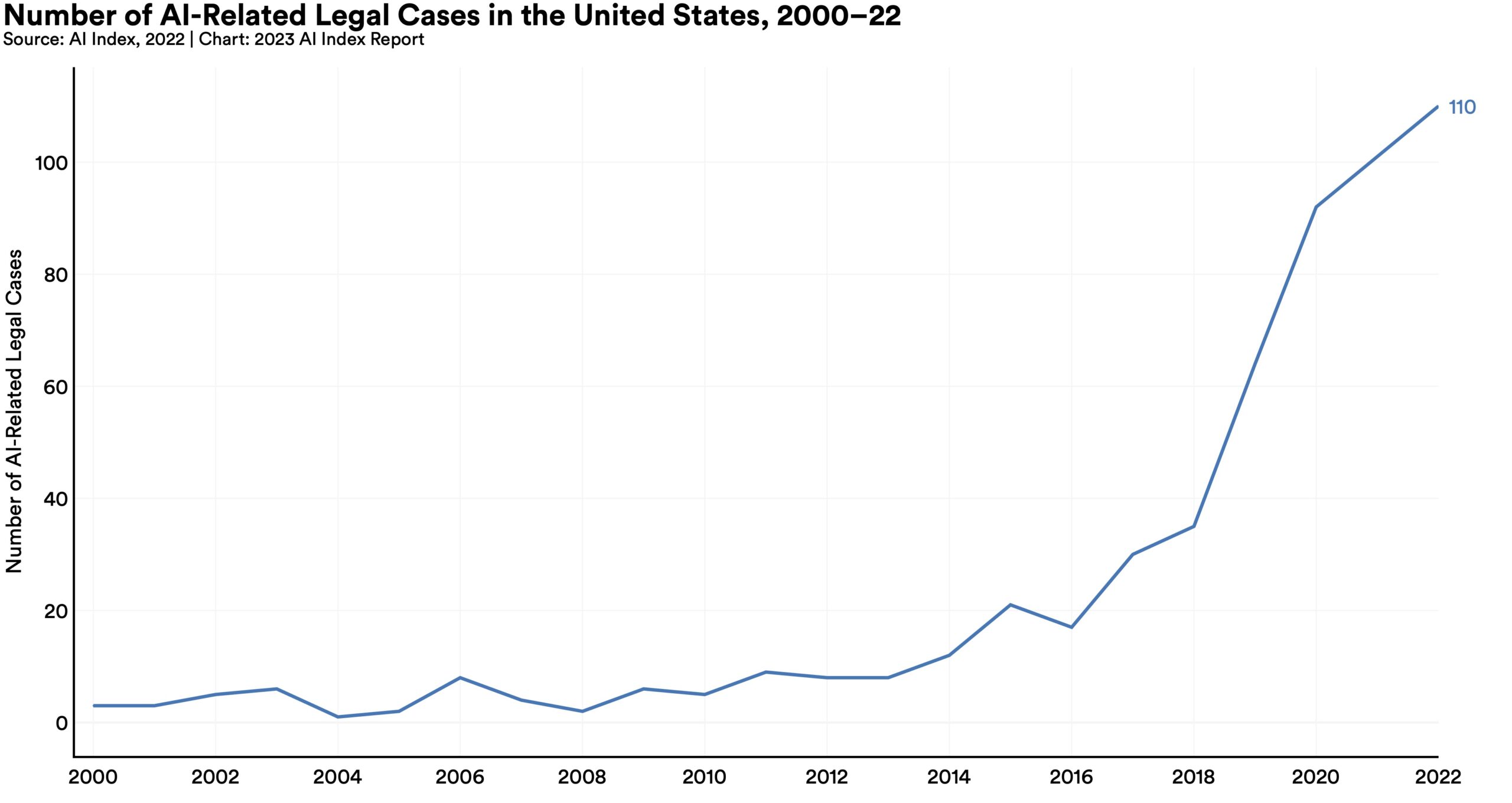

One of the most interesting charts in this collection is the following one:

The report comments:

In 2022, there were 110 AI-related legal cases in United States state and federal courts, roughly seven times more than in 2016. The majority of these cases originated in California, New York, and Illinois, and concerned issues relating to civil, intellectual property, and contract law.

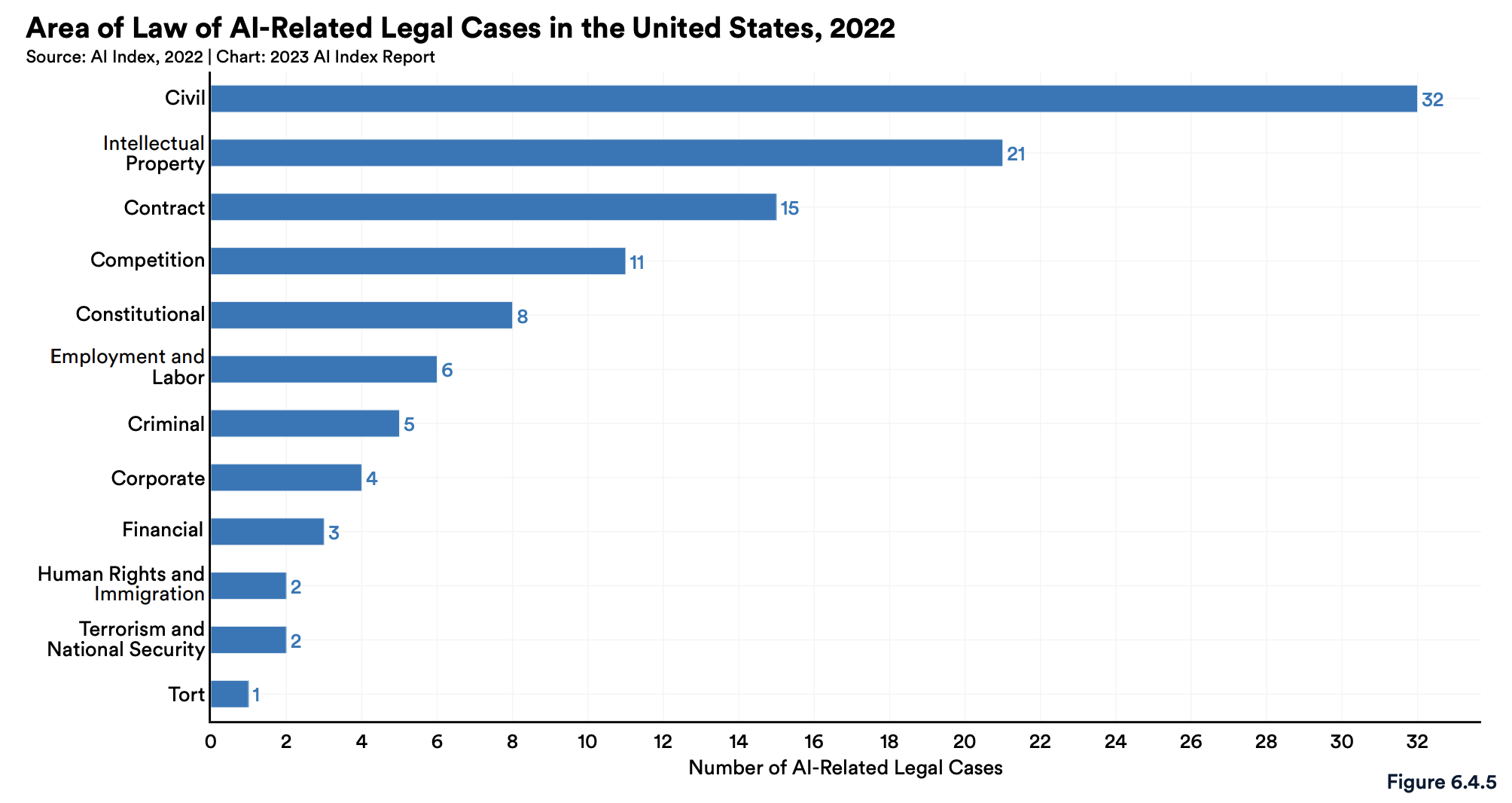

But if we explore the data further, we find that there’s a small but nonzero number of cases related to “Employment and Labor”:

And that’s where I expect to see the most interesting action in the coming months and years as we start to see mass adoption of large language models and other types of AI across the industries of our economy.

As I wrote in past issues of Synthetic Work, many possible scenarios may lead to lawsuits. For example:

- A manager becomes (more) biased (than usual) in evaluating performance and allocating bonuses if a direct report systematically uses AI to embellish his/her outputs

- An employee falls in love with an AI and is manipulated (either by the employer or by the company that offers the AI service)

- An employee’s performance is evaluated in a negative way because the AI tools deployed by the employer have led to a detachment from the team

- An employee causes material damage to the employer because he/she has used an AI that generated inaccurate or misleading information

All of this sounds surreal and, hopefully, it will remain so. But arson lawsuits seemed surreal until we discovered fire.

–

The third thing to pay attention to this week: a 45-minute interview with one of the fathers of modern AI and winner of the Turing Award, Geoffrey Hinton. Who, coincidentally, signed the Future of Life Institute letter to pause the development of powerful generative AI models just days after giving this interview.

Normally, I wouldn’t recommend something like this. Synthetic Work rigorously targets an audience of non-technical people and it will remain this way. But this interview is 99% comprehensible to everybody.

More importantly, this interview becomes shocking around the 30th minute. If you want to understand how serious the discourse around AI really is, you won’t regret watching it:

You won’t believe that people would fall for it, but they do. Boy, they do.

So this is a section dedicated to making me popular.

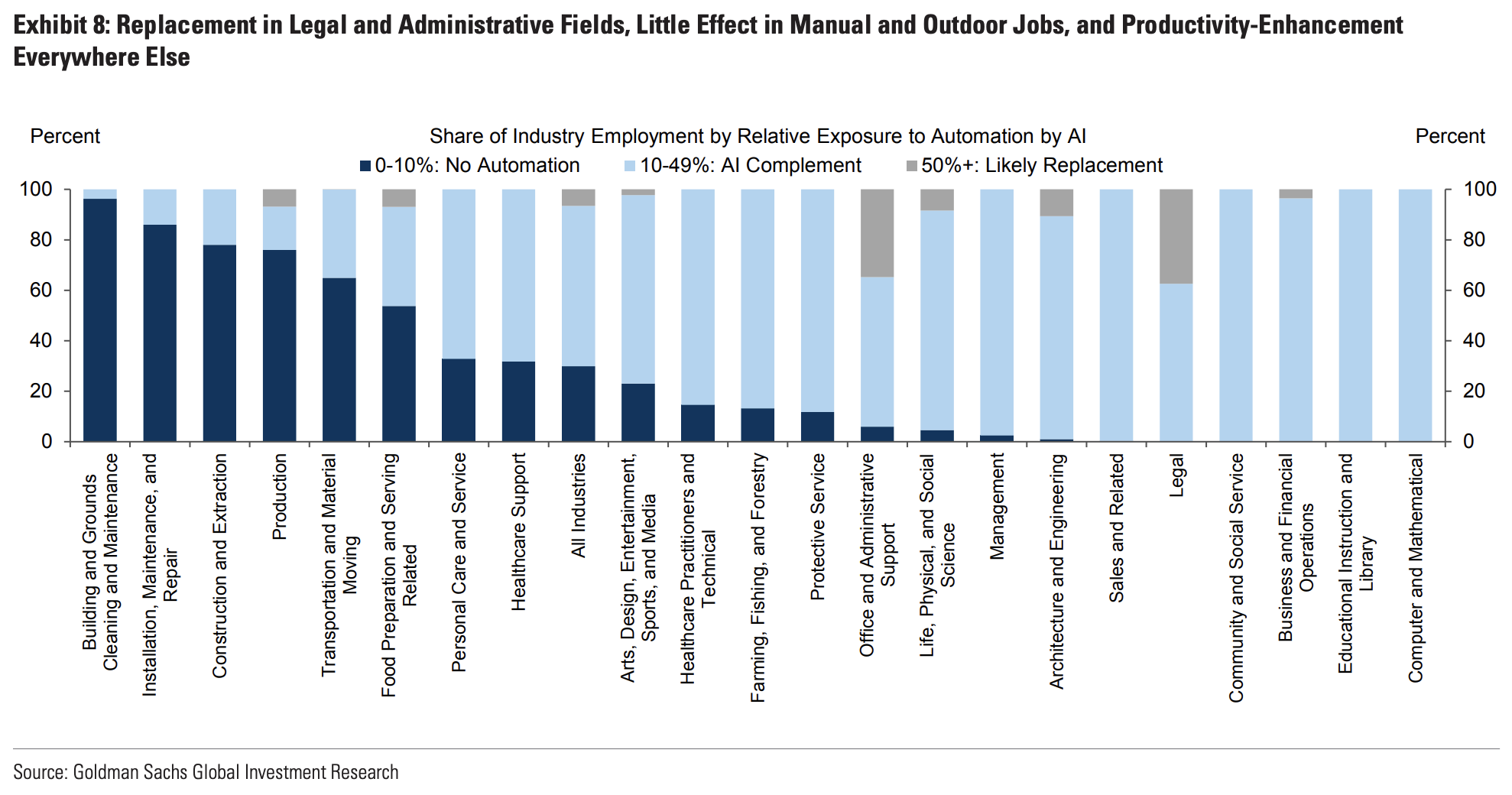

Last week, Goldman Sachs released a 20-page economics research report titled “The Potentially Large Effects of Artificial Intelligence on Economic Growth”.

Every newspaper and TV news program talked about it because the report was authored by two of the investment bank’s economists and supervised by its Chief Economist and Head of Global Investment Research. They wrote:

If generative AI delivers on its promised capabilities, the labor market could face significant disruption. Using data on occupational tasks in both the US and Europe, we find that roughly two-thirds of current jobs are exposed to some degree of AI automation, and that generative AI could substitute up to one-fourth of current work. Extrapolating our estimates globally suggests that generative AI could expose the equivalent of 300mn full-time jobs to automation.

The readers of this newsletter have already seen multiple charts and studies, from prominent academics, measuring what types of jobs could be “exposed” to AI.

We have seen it in the Free Edition of Synthetic Work Issue #4 (A lot of decapitations would have been avoided if Louis XVI had AI) and in the Free Edition of Synthetic Work Issue #5 (You are not going to use 19 years of Gmail conversations to create an AI clone of my personality, are you?).

Goldman Sachs’ economists have taken the studies we mentioned here on Synthetic Work, further elaborated them, and quantified that exposure to AI.

The conclusion they came to is that “25% current work tasks could be automated by AI in the US and Europe.”

Of course, jobs potentially displaced by AI will be replaced by new types of jobs, raising the overall level of happiness of the population, except for those poor souls who lose their jobs and cannot reskill.

The most interesting part of the report is this one:

The boost to global labor productivity could also be economically significant, and we estimate that AI could eventually increase annual global GDP by 7%.

…

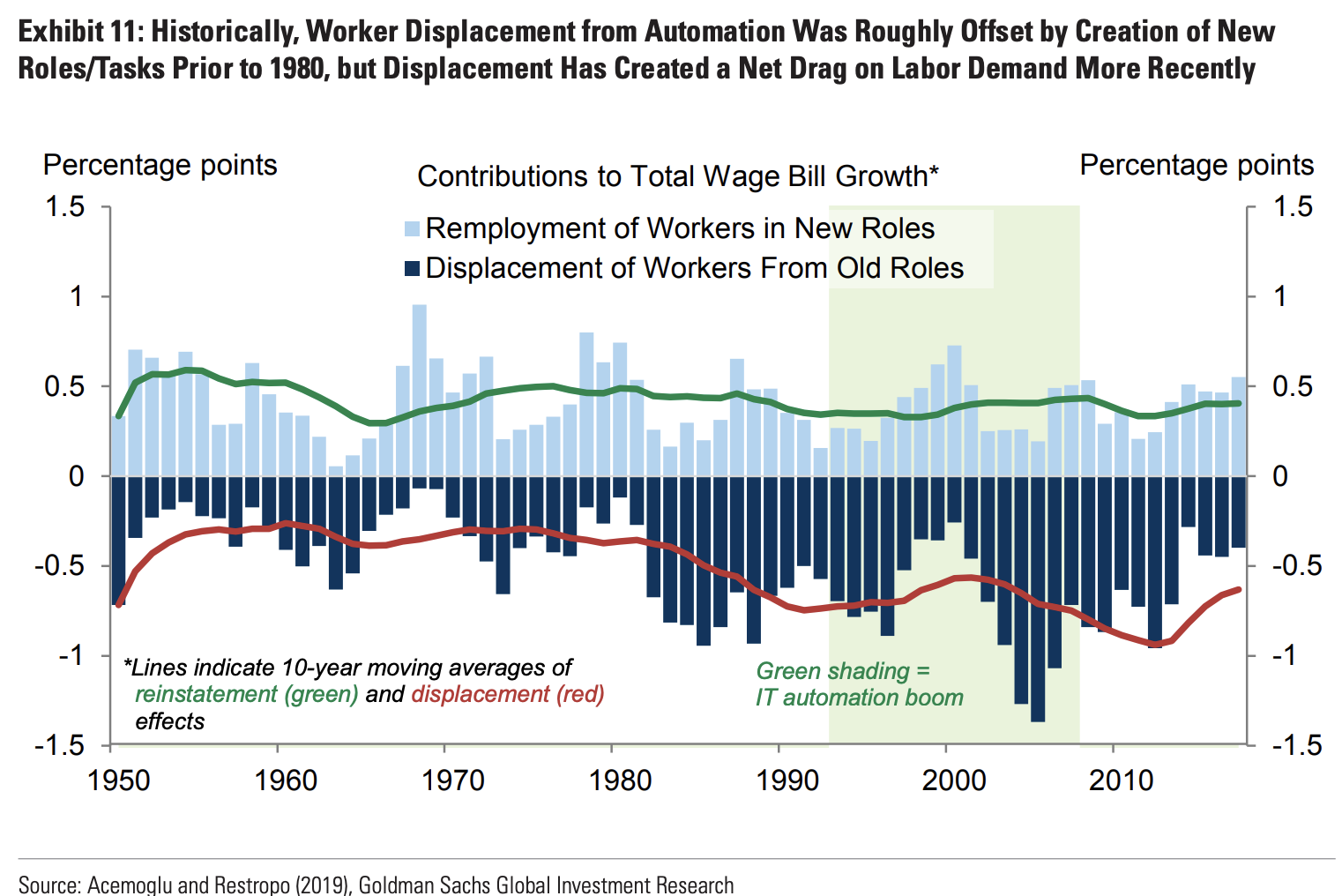

Technological change displaced workers and created new employment opportunities at roughly the same rate for the first half of the post-war period, but has displaced workers at a faster pace than it has created new opportunities since the 1980s. These results suggest that the direct effects of generative AI on labor demand could be negative in the near-term if AI affects the labor market in a manner similar to earlier advances in information technology, although the effects on labor productivity growth would still be positive.

Another very interesting part:

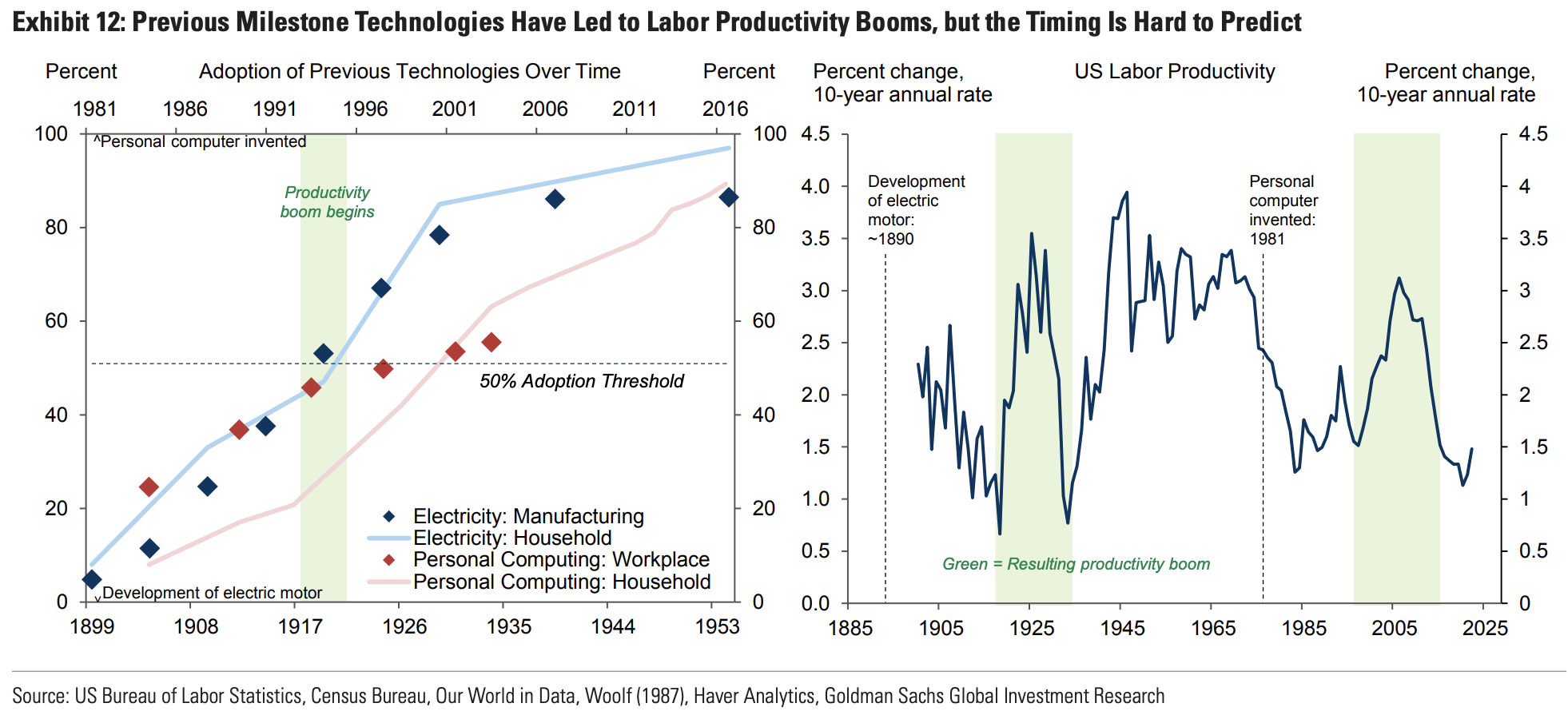

The combination of significant labor cost savings, new job creation, and a productivity boost for non-displaced workers raises the possibility of a labor productivity boom like those that followed the emergence of earlier general-purpose technologies like the electric motor and personal computer. These past experiences offer two key lessons.

First, the timing of a labor productivity boom is hard to predict, but in both cases started about 20 years after the technological breakthrough, at a point when roughly half of US businesses had adopted the technology.

Second, in both of these instances, labor productivity growth rose by around 1.5pp/year in the 10 years after the productivity boom started, suggesting that the labor productivity gains can be quite substantial.

When we think about how artificial intelligence is changing the nature of our jobs, these memories are useful to put things in perspective. It means: stop whining.

I don’t know how many people know this, but “Computer” used to be the name of a job for humans before becoming synonymous with “electronic machine”.

Quoting from Wikipedia:

The term “computer”, in use from the early 17th century (the first known written reference dates from 1613), meant “one who computes”: a person performing mathematical calculations, before electronic computers became commercially available.

…

It was not until World War I that computing became a profession. “The First World War required large numbers of human computers. Computers on both sides of the war produced map grids, surveying aids, navigation tables and artillery tables. With the men at war, most of these new computers were women and many were college educated.” This would happen again during World War II, as more men joined the fight, college educated women were left to fill their positions.

One of the first female computers, Elizabeth Webb Wilson, was hired by the Army in 1918 and was a graduate of George Washington University.

Wilson “patiently sought a war job that would make use of her mathematical skill. In later years, she would claim that the war spared her from the ‘Washington social whirl’, the rounds of society events that should have procured for her a husband” and instead she was able to have a career.

After the war, Wilson continued with a career in mathematics and became an actuary and turned her focus to life tables.

This is the material that will be greatly expanded in the Splendid Edition of the newsletter.

Let’s take another look at the 2023 AI Index report that the Stanford Institute for Human-Centered Artificial Intelligence (HAI) just released.

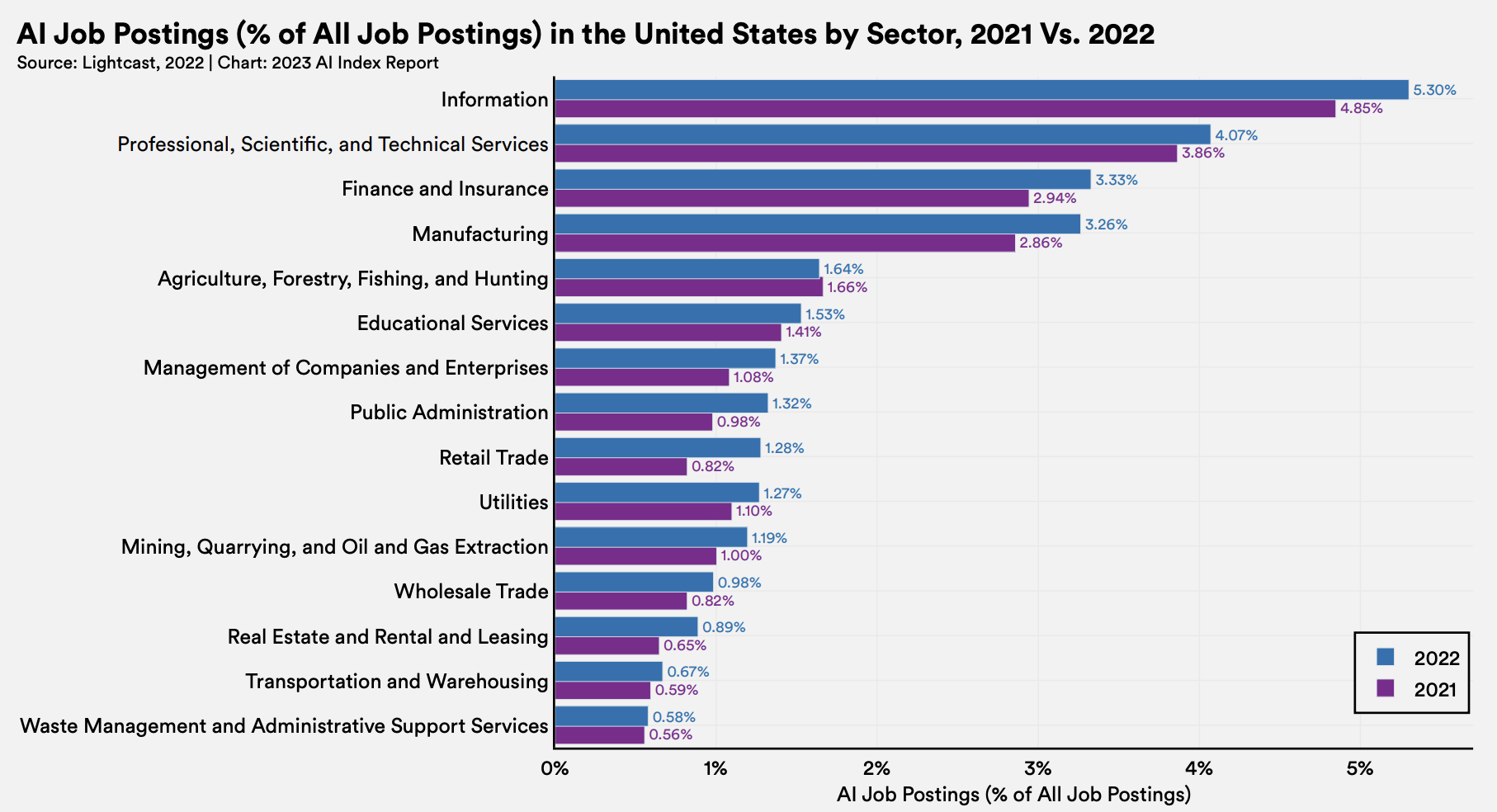

Perhaps, putting 300 charts in a single newsletter is not dull enough. So, let’s add another one:

The demand for AI skills is growing across sectors. More importantly, the demand for AI skills is not being limited just to specialized jobs (e.g.: I need a machine learning engineer in the Publishing industry).

There’s a growing demand for expertise in using generative AI tools (Dall-E, Adobe Firefly, GPT-4, etc.) for job profiles that have nothing to do with AI.

As an acute observer in the recruitment industry among the readers of Synthetic Work has put it, AI skills might well become the new Microsoft Office skills. And that’s because somewhere in Texas, the owner of a small travel agency uses GPT-4 on a daily basis:

How are small businesses reacting to AI?

Like the @shamelesstourist which does luxury tourism. Helps people plan great travel experiences.

Founder Kaleigh Kirkpatrick is using GPT all over the place already.

How are you using it? Building a prompt database? You will. pic.twitter.com/bRbhFVMmJO

— Robert Scoble (@Scobleizer) March 30, 2023

“Expertise in using generative AI tools for job profiles that have nothing to do with AI” means the ability to write instructions for AI models that effectively generate an output close to what the user desires.

That skill goes by the intimidating name of “Prompt Engineering”, even if absolutely nothing about it is related to engineering. In fact, real engineers should feel quite offended that their qualification is so shamelessly abused.

The need for prompt engineering will go away over the years as AI models become more and more capable of extrapolating what users want from the context and from all the knowledge accumulated in previous conversations.

But for now, being good at prompt engineering is a huge competitive advantage, as it makes an enormous difference in what it’s possible to achieve. Those who read last week’s Splendid Edition of Synthetic Work Issue #6 (I Know That This Steak Doesn’t Exist) have had a taste of the incredible things that are possible.

Yes. I am considering writing a special issue completely dedicated to prompt engineering.

For any new technology to be successfully adopted in a work environment or by society, people must feel good about it (before, during, and after its use). No business rollout plan will ever be successful without taking this into account.

So far, in this section of Synthetic Work, we have talked about a few different things: how people feel about the threat of job displacements, the exhilaration of seeing large language models give people superhuman powers, and the manifestation of the Eliza effect.

The latter is worth special attention because AI can be used to emotionally manipulate people in a way no other technology can.

About this, Carissa Véliz, associate professor at the Institute for Ethics in AI at the University of Oxford, wrote an interesting article for Nature:

Limits need to be set on AI’s ability to simulate human feelings. Ensuring that chatbots don’t use emotive language, including emojis, would be a good start. Emojis are particularly manipulative. Humans instinctively respond to shapes that look like faces — even cartoonish or schematic ones — and emojis can induce these reactions. When you text your friend a joke and they reply with three tears-of-joy emojis, your body responds with endorphins and oxytocin as you revel in the knowledge that your friend is amused.

…

It’s true that a chatbot that doesn’t use emojis can still use words to express feelings. But emojis are arguably more powerful than words. Perhaps the best evidence for the power of emojis is that we developed them with the rise of text messaging. We wouldn’t all be using laughing emojis if words seemed enough to convey our emotions.

…

My worry is that, without appropriate safeguards, such technology could undermine people’s autonomy. AIs that ‘emote’ could induce us to make harmful mistakes by harnessing the power of our empathic responses. The dangers are already apparent. When one ten-year-old asked Amazon’s Alexa for a challenge, it told her to touch a penny to a live electrical outlet. Luckily, the girl didn’t follow Alexa’s advice, but a generative AI could be much more persuasive. Less dramatically, an AI could shame you into buying an expensive product you don’t want. You might think that would never happen to you, but a 2021 study found that people consistently underestimated how susceptible they were to misinformation.

Stanford students Bryan Hau-Ping Chiang and Alix Cui have hacked together a monocle that goes on top of existing glasses, listens to the ongoing conversation and transcribes it (thanks to Whisper, another OpenAI model), passes the transcribed text to GPT-4 asking it to suggest what to say next, and projects the generated answer in the wearer’s field of vision through Augmented Reality technology.
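For the technically curious, the chain described above (listen, transcribe, ask the model, project) can be sketched in a few lines of Python. This is only a rough illustration, not the students’ actual code: the class name `ConversationCoach` and its three pluggable functions are hypothetical stand-ins for Whisper, GPT-4, and the AR display, and no real API is called.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class ConversationCoach:
    """Hypothetical glue code: speech-to-text -> language model -> AR display."""

    transcribe: Callable[[bytes], str]  # stand-in for a Whisper call (assumption)
    complete: Callable[[str], str]      # stand-in for a GPT-4 call (assumption)
    display: Callable[[str], None]      # stand-in for the AR projection (assumption)
    transcript: List[str] = field(default_factory=list)

    def build_prompt(self) -> str:
        # Give the model the whole conversation so far, then ask for one line.
        heard = "\n".join(self.transcript)
        return (
            "You are coaching me through a live conversation.\n"
            f"Conversation so far:\n{heard}\n"
            "Suggest one short sentence I could say next."
        )

    def on_audio(self, chunk: bytes) -> str:
        # 1. Transcribe what was just said and remember it.
        self.transcript.append(self.transcribe(chunk))
        # 2. Ask the language model what to say next.
        suggestion = self.complete(self.build_prompt())
        # 3. Show the suggestion in the wearer's field of vision.
        self.display(suggestion)
        return suggestion


if __name__ == "__main__":
    # Fake components so the sketch runs without API keys or hardware.
    coach = ConversationCoach(
        transcribe=lambda chunk: chunk.decode("utf-8"),
        complete=lambda prompt: "Tell me more about that.",
        display=print,
    )
    coach.on_audio(b"So, why do you want this job?")
```

Keeping the three steps as swappable functions is a design choice for the sketch: it shows how little glue is needed once the speech, language, and display pieces exist, which is exactly why a prototype like this can be hacked together so quickly.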

The pitch is “Say goodbye to awkward dates and job interviews.”

Be certain to watch the video:

say goodbye to awkward dates and job interviews ☹️

we made rizzGPT — real-time Charisma as a Service (CaaS)

it listens to your conversation and tells you exactly what to say next 😱

built using GPT-4, Whisper and the Monocle AR glasses

with @C51Alix @varunshenoy_ pic.twitter.com/HycQGGXT6N

— Bryan Hau-Ping Chiang (@bryanhpchiang) March 26, 2023

If you are thinking that this is just a harmless prototype, I’d like to remind you that, almost certainly, we’ll see Apple launching their AR glasses at their WWDC conference this June.

And after Apple launches a new product, Chinese and Korean manufacturers are quick to replicate the innovation, letting people publish all sorts of apps on Android.

Here’s the list of the tools we’ll talk about, organized according to what I use the most or what I consider most important for my workflow as a knowledge worker and a creator (like Jesus, but with shorter hair and more followers):

- GPT-4

- Perplexity

- Prompt+

- Descript

- Fig

- Uncanny Automator Pro

- Stable Diffusion

- Pixelmator Pro

- 11Labs

- Grammarly

I actually wanted to list these tools in alphabetical order to mitigate any bias, but then I thought that it would have defeated the purpose of this newsletter: understanding how our work is changing and how our perception of what’s important is dramatically shifting.